Azade Nova

@Azade_na

Research Scientist at Google DeepMind

Gemini 1.5 Model Family: Technical Report updates now published

In the report we present the latest models of the Gemini family, Gemini 1.5 Pro and Gemini 1.5 Flash: two highly compute-efficient multimodal models capable of recalling and reasoning over fine-grained information from millions of tokens of context, including multiple long documents and hours of video and audio.

Our latest report details notable improvements in Gemini 1.5 Pro within the last four months. Our May release demonstrates significant improvement in math, coding, and multimodal benchmarks compared to our initial release in February.

Furthermore, the 1.5 Pro model is now stronger than 1.0 Ultra. The latest Gemini 1.5 Pro is now our most capable model for text and vision understanding tasks, surpassing 1.0 Ultra on 16 of 19 text benchmarks and 18 of 21 vision understanding benchmarks.

The table below highlights the improvement in average benchmark performance for different categories in 1.5 Pro since February, and also shows the strength of the model relative to the 1.0 Pro and 1.0 Ultra models. The 1.5 Flash model also compares very well against the 1.0 Pro and 1.0 Ultra models.

One clear example of this can be seen on MMLU. We find that 1.5 Pro surpasses 1.0 Ultra in the regular 5-shot setting, scoring 85.9% versus 83.7%. With additional inference compute, via majority voting on top of multiple language model samples, we can reach 91.7% versus Ultra’s 90.0%, which extends the known performance ceiling of this task.

@OriolVinyalsML and I are very proud of the whole Gemini team, and it’s fantastic to see this progress and to share these highlights from our Gemini Model Family. Read the updated report here: goo.gle/GeminiV1-5

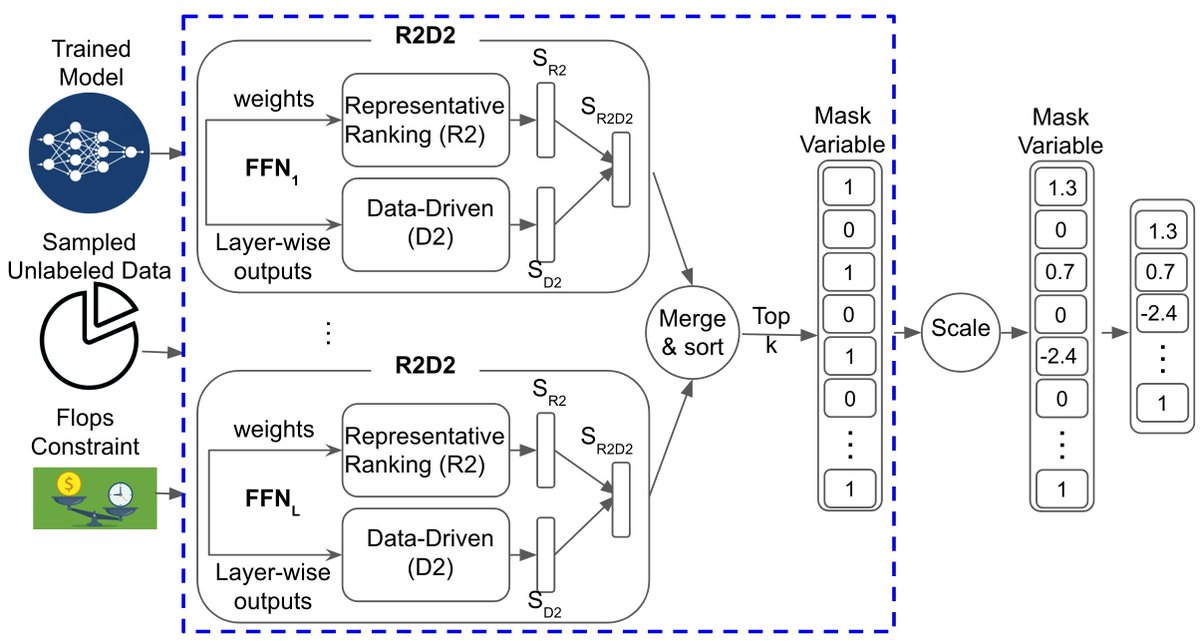
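For readers unfamiliar with the majority-voting setup mentioned in the MMLU comparison above, here is a minimal sketch of the general idea: sample several answers to the same question and keep the most frequent one. The function name `majority_vote` and the sample answers are illustrative assumptions. This is not the evaluation code from the report, which may differ in how samples are drawn and how ties are handled.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent answer among several model samples.

    Hypothetical helper for illustration only. Ties are broken in favor
    of the answer that appeared first in the list.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    # most_common(1) returns a one-element list of (answer, count) pairs.
    return Counter(answers).most_common(1)[0][0]


# Made-up multiple-choice answers from five samples of the same question.
samples = ["B", "C", "B", "A", "B"]
print(majority_vote(samples))
```

The extra inference compute comes from drawing many samples per question; the vote itself is cheap.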

Check out our gradient-free structural pruning approach for large models. No need for retraining or labeled data! In just a few minutes on one GPU, it removes up to 40% of the original FLOPs at minimal loss of accuracy. w/ @hanjundai, Dale Schuurmans paper: arxiv.org/abs/2303.04185

Thank you to everyone on the organizing and steering committee for putting together a great ML for Systems workshop at NeurIPS!

“LLMs can’t even do addition” 📄🚨We show that they CAN add! To teach algos to LLMs, the trick is to describe the algo in enough detail so that there is no room for misinterpretation w/ @Azade_na @hugo_larochelle @AaronCourville @bneyshabur @HanieSedghi arxiv.org/abs/2211.09066

Applications for the 2019 Google AI Residency program are now open! Visit g.co/airesidency/ap… for more information on how to apply. To learn more about the accomplishments of the recently graduated second class of residents, visit ↓ goo.gl/5QZbsF