Biao Zhang

217 posts

@BZhangGo

Research Scientist @ Google. Past: PostDoc at UoE. PhD in NLP/MT @edinburghnlp. All opinions are my own.

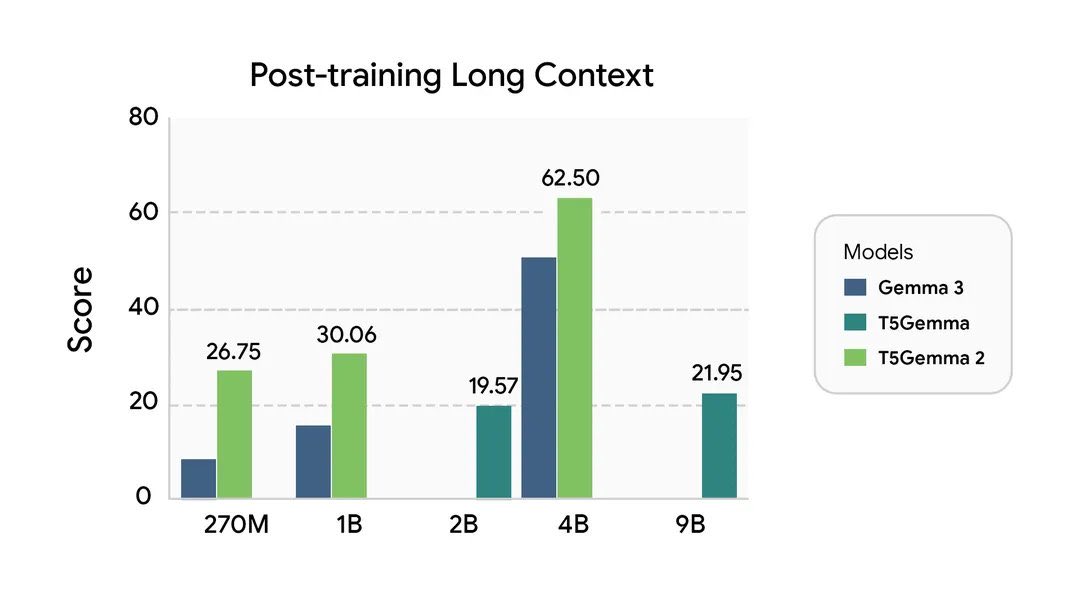

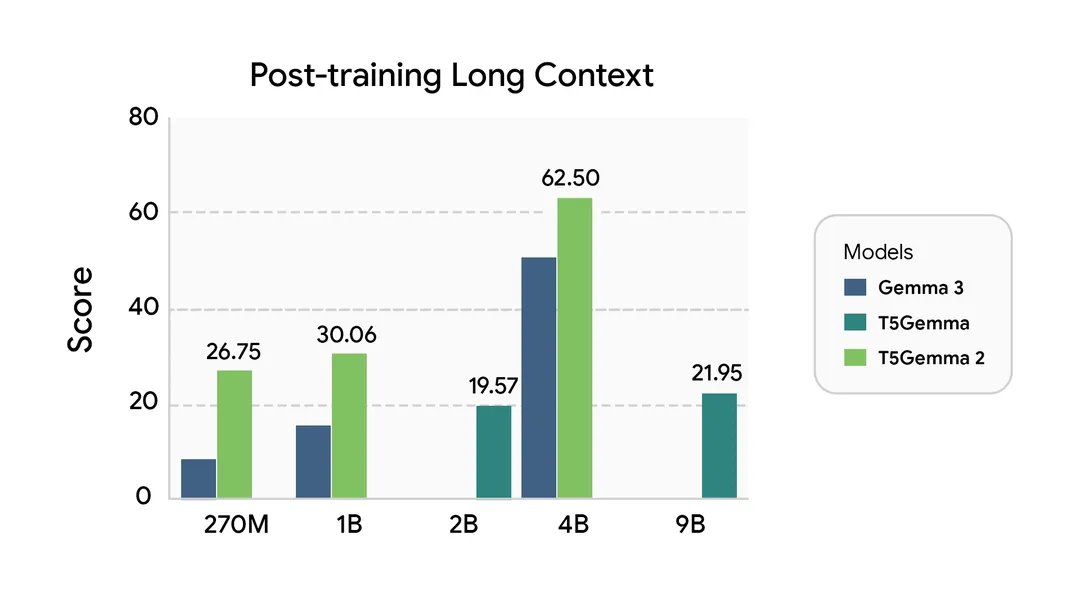

Introducing T5Gemma 2, the next generation of encoder-decoder models 🚀 Building on top of Gemma 3, we were able to build compact models at sizes of 270M-270M, 1B-1B, and 4B-4B. While most models today are decoder-only, T5Gemma 2 is the first (that I'm aware of) multimodal, long-context, and heavily multilingual (140 languages) encoder-decoder model out there. We hope this model helps the model research community as well as the community of devs ready to explore new architectures. Blog: blog.google/technology/dev… Models: huggingface.co/collections/go… Paper: arxiv.org/abs/2512.14856

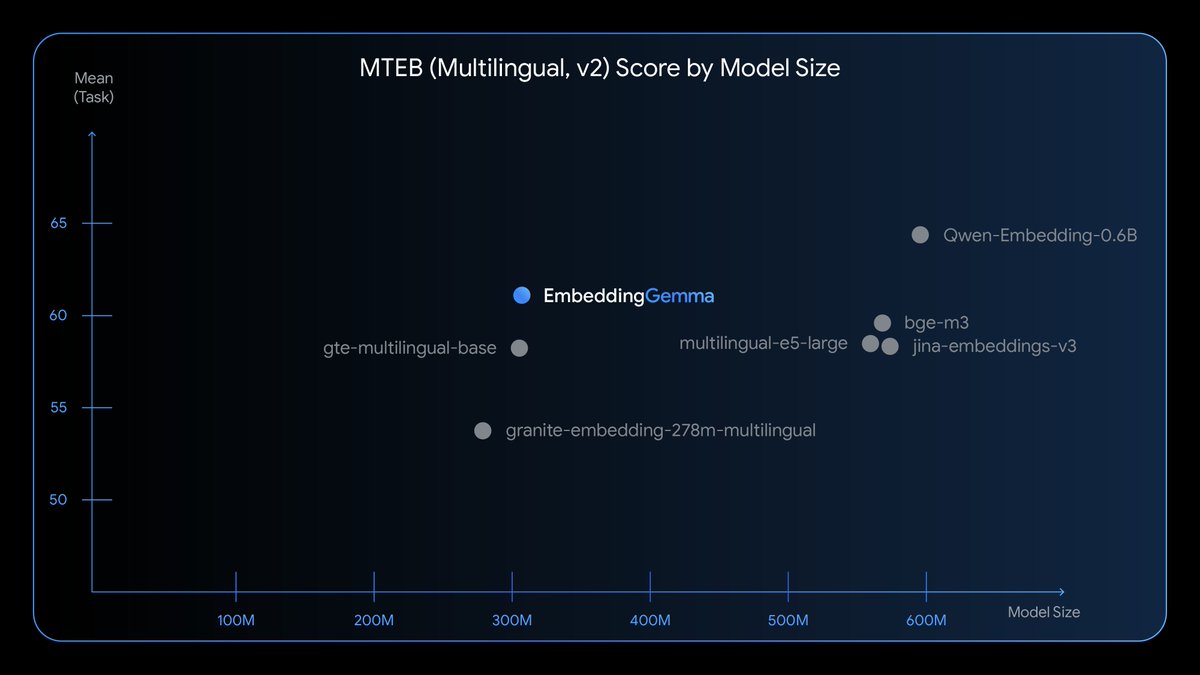

EmbeddingGemma is our new best-in-class open embedding model designed for on-device AI. 📱 At just 308M parameters, it delivers state-of-the-art performance while being small and efficient enough to run anywhere - even without an internet connection.

In our continued commitment to open science, we are releasing the Voxtral Technical Report: arxiv.org/abs/2507.13264. The report covers details on pre-training, post-training, alignment, and evaluations. We also present analysis on selecting the optimal model architecture, which pre-training format to use, and the benefits of DPO.