neural network interpretability is fun both in the way hard math puzzles are fun but also in the way exploring uncharted land is fun. it’s wild to me that such an important problem also happens to be a recreationally fun adventure

Bart Bussmann

253 posts

@BartBussmann

Mechanistic Interpretability Researcher | Trying to forge a brighter future

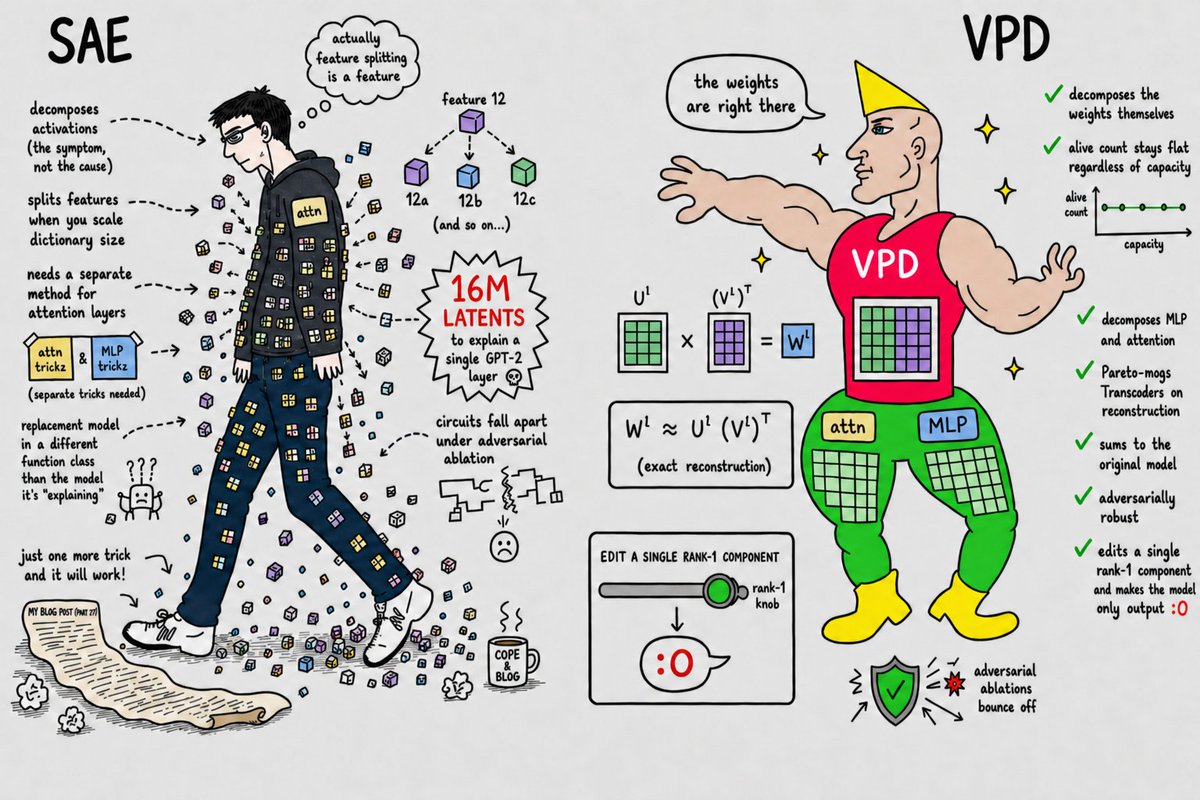

My team at @GoodfireAI has been cooking up a new way to do interpretability: decompose a language model’s weights, not its activations. Our decomposition natively handles attention (!) and behaves less like a lookup table and more like a generalizing algorithm. (1/6)

To build safer AI, we need to understand how models "think". 🧠 Enter Gemma Scope 2, a new set of tools to interpret Gemma 3: our family of lightweight open models. It can help researchers trace internal reasoning, debug complex behaviors and identify risks → goo.gle/gemma-scope-2

Can we understand the chain-of-thought (CoT) of latent reasoning LLMs using current mech interp techniques? It turns out we can uncover interpretable structure, at least on simple math problems! In a short study we show that latent vectors represent, e.g., intermediate calculations

life hack: you don't have to explain yourself or understand anything, you can just do stuff

@Orgone1 @MylesMcDonough For sure the American attitude that you can just Do Things is really great

PSA: if you feel bad you can just DO THINGS until you feel better

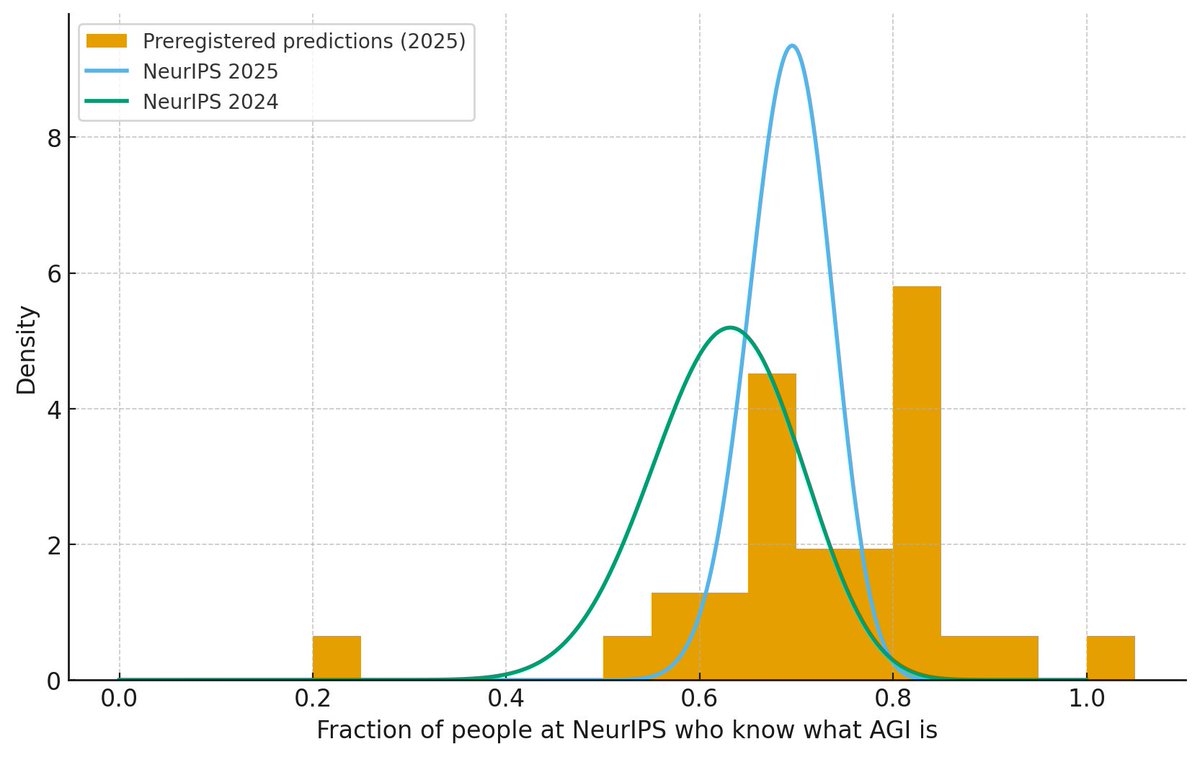

Last year, I randomly surveyed people walking around at NeurIPS and found that only 63% of people (n=38) could tell me what AGI stands for. I'm repeating the experiment this year. Preregister your guess for the % this year now!