Lee Sharkey

687 posts

@leedsharkey

Scruting matrices @ Goodfire | Previously: cofounded Apollo Research

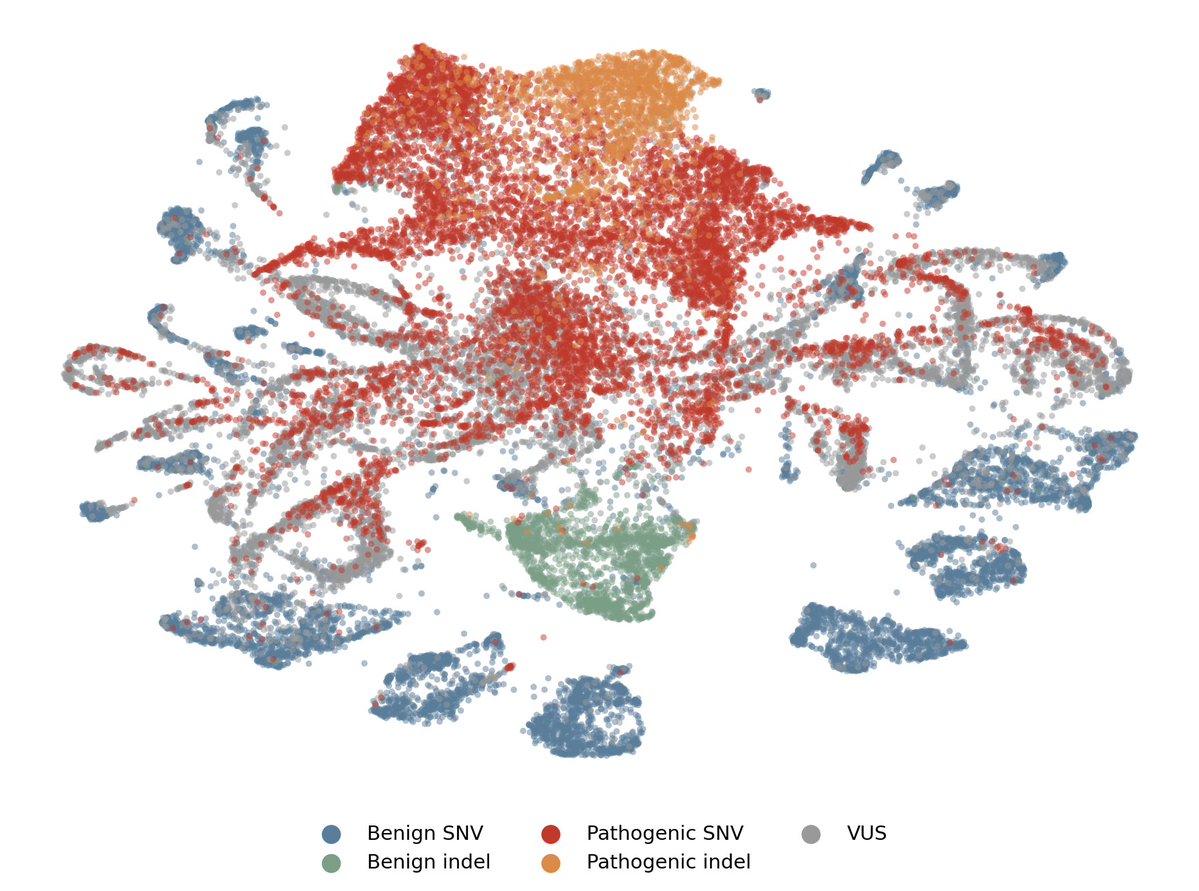

We achieved state-of-the-art performance in predicting which of 4.2 million genetic variants cause diseases by interpreting a genomics model, in a new preprint with @MayoClinic. We're now releasing an open-source database for all variants in the NIH's ClinVar database. 🧵(1/8)

Update on the meeting: according to Axios, Defense Secretary Pete Hegseth gave Dario Amodei until Friday night to give the military unfettered access to Claude or face the consequences, which may even include invoking the Defense Production Act to force the training of a WarClaude.

We used interpretability to scale RL against open-ended tasks, cutting Gemma 12B’s hallucination rate in half by teaching it to self-correct in tandem with our probing harness.