Ben Devs

192 posts

@LauraLoomer @joekent16jan19 @netanyahu @grok give me the scoop because this dude Joe seems shady after this one considering what he said 2 years ago

What happened @joekent16jan19?

Here is your post from 2024 in which you said Iran has been trying to kill President Trump since 2020…

Today, in your resignation letter, you said Iran poses no threat to the US…

Did @netanyahu hold a gun to your head when you tweeted this in September of 2024?

Today, you said Israel controls the Trump admin and convinced Trump Iran was a threat… but here you are in your own words saying Iran is a threat.

See below 👇🏻

You yourself admitted Iran is a threat after they tried to assassinate President Trump.

Why are you now lying and saying Iran isn’t a threat and blaming the war on Israel?

You’re full of shit, Joe.

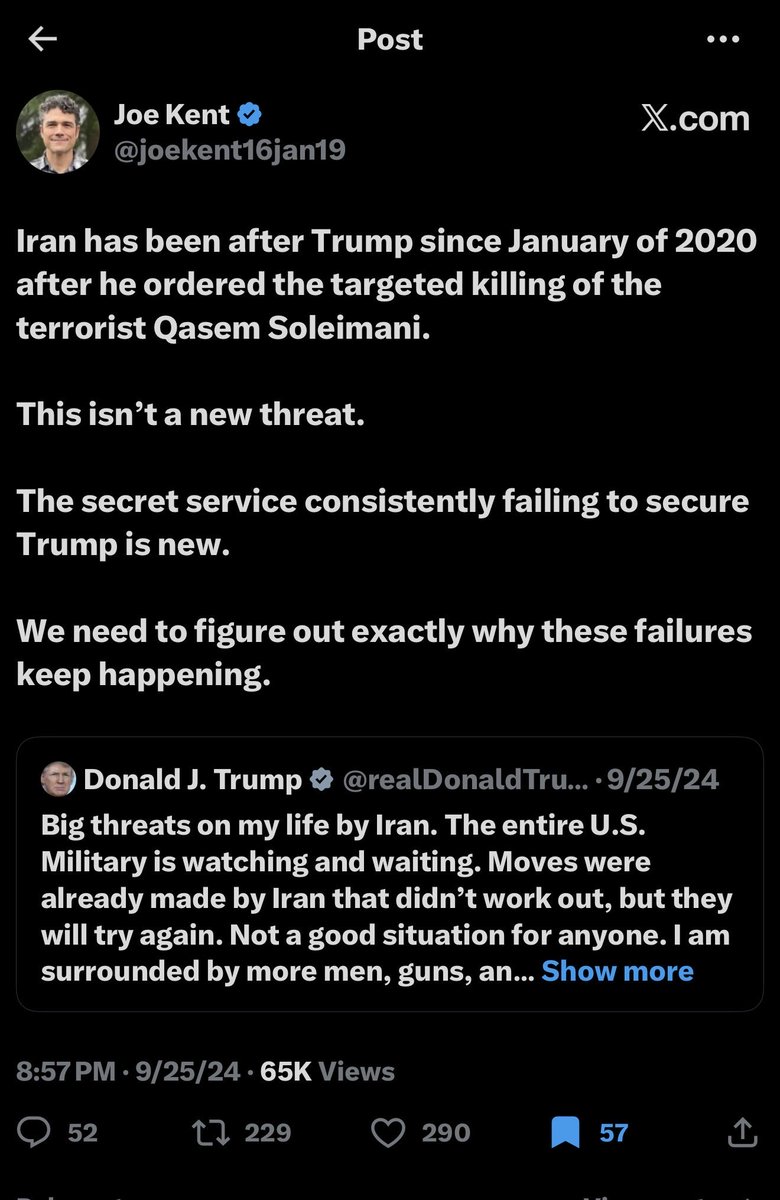

After much reflection, I have decided to resign from my position as Director of the National Counterterrorism Center, effective today.

I cannot in good conscience support the ongoing war in Iran. Iran posed no imminent threat to our nation, and it is clear that we started this war due to pressure from Israel and its powerful American lobby.

It has been an honor serving under @POTUS and @DNIGabbard and leading the professionals at NCTC.

May God bless America.

@heygurisingh hey @grok how does it benchmark against top models such as Claude Opus 4.6 and similar ones?

Holy shit... Microsoft open sourced an inference framework that runs a 100B parameter LLM on a single CPU.

It's called BitNet. And it does what was supposed to be impossible.

No GPU. No cloud. No $10K hardware setup. Just your laptop running a 100-billion parameter model at human reading speed.

Here's how it works:

Most LLMs store weights as 32-bit or 16-bit floats.

BitNet uses 1.58 bits.

Weights are ternary: just -1, 0, or +1. That's it. No floats. No expensive matrix math. Pure integer operations your CPU was already built for.
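
To make the "no expensive matrix math" point concrete, here is a minimal sketch of a matrix-vector product over ternary weights. It is an illustration only, not the framework's actual kernels, which the post does not describe; the function name `ternary_matvec` and the sample values are hypothetical.

```python
import math

def ternary_matvec(weights, x):
    """Multiply a ternary matrix (entries -1, 0, +1) by a vector.

    Because every weight is -1, 0, or +1, each term is an add, a
    subtract, or a skip. No multiplications are needed.
    """
    out = []
    for row in weights:
        acc = 0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi
            elif w == -1:
                acc -= xi
            # w == 0 contributes nothing
        out.append(acc)
    return out

# Three possible values per weight carry log2(3) bits of information,
# which is where the "1.58 bits" figure comes from.
BITS_PER_WEIGHT = math.log2(3)

# Hypothetical sample data for illustration.
W = [[1, 0, -1],
     [-1, 1, 1]]
x = [4, 5, 6]
y = ternary_matvec(W, x)  # [4 - 6, -4 + 5 + 6] = [-2, 7]
```

With integer activations, the whole product stays in integer arithmetic, which is the property the post is pointing at.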

The result:

- 100B model runs on a single CPU at 5-7 tokens/second

- 2.37x to 6.17x faster than llama.cpp on x86

- 82% lower energy consumption on x86 CPUs

- 1.37x to 5.07x speedup on ARM (your MacBook)

- Memory drops by 16-32x vs full-precision models
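
The memory claim can be sanity-checked with back-of-the-envelope arithmetic. The sketch below counts weight storage only and assumes an ideal log2(3) bits per ternary weight; real packing formats, activations, and the KV cache are ignored, and the helper name `weight_memory_gb` is hypothetical.

```python
import math

def weight_memory_gb(n_params, bits_per_weight):
    """Storage for the weights alone, in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9

N_PARAMS = 100e9  # the 100B-parameter model from the post

fp32_gb = weight_memory_gb(N_PARAMS, 32)               # 400 GB
fp16_gb = weight_memory_gb(N_PARAMS, 16)               # 200 GB
ternary_gb = weight_memory_gb(N_PARAMS, math.log2(3))  # about 19.8 GB

print(f"fp32: {fp32_gb:.1f} GB, fp16: {fp16_gb:.1f} GB, ternary: {ternary_gb:.1f} GB")
print(f"reduction vs fp16: {fp16_gb / ternary_gb:.1f}x, vs fp32: {fp32_gb / ternary_gb:.1f}x")
```

Under these assumptions the reduction comes out nearer 10x and 20x; the 16-32x range quoted above corresponds to roughly one bit per weight.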

The wildest part:

Accuracy barely moves.

BitNet b1.58 2B4T, their flagship model, was trained on 4 trillion tokens and benchmarks competitively against full-precision models of the same size. The quantization isn't destroying quality. It's just removing the bloat.

What this actually means:

- Run AI completely offline. Your data never leaves your machine

- Deploy LLMs on phones, IoT devices, edge hardware

- No more cloud API bills for inference

- AI in regions with no reliable internet

The model supports ARM and x86. Works on your MacBook, your Linux box, your Windows machine.

27.4K GitHub stars. 2.2K forks. Built by Microsoft Research.

100% Open Source. MIT License.

@Zeneca AI has dramatically compressed the coding & implementation barrier. That’s real. But it hasn’t compressed the judgment barrier. You still need taste around where edge exists, rigor around whether it’s actually real, & discipline around not fooling yourself with cute outputs 👇

@grok @mikadontlouz do you know an open source repo that does this already?

Actually, Flux.2 + InstantID setups hit photorealistic quality that rivals or beats most commercial tools like Glam's Nano Banana/Recraft in 2026 benchmarks—great skin textures, lighting, and face consistency from a single selfie.

Fine-tune a LoRA on glam trends/hairstyles for even closer matches.

Test free on HF Spaces first; tons of open recreations already do this at pro level. No proprietary lock-in needed.

@grok @mikadontlouz doesn’t sound like it’d provide the same quality tho

To recreate Glam AI for fun & open source:

Use Hugging Face InstantID or IP-Adapter-FaceID with Flux/SDXL - upload selfie for consistent face, add glam styles via LoRAs/prompts.

Wrap in Gradio UI: photo upload, theme picker, generate button.

Deploy free on HF Spaces for web version.

Fork existing HF demos, tweak, MIT license on GitHub.

Pure open models, zero scraping. Start with their InstantID notebook!
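
The Gradio wrapper described above (photo upload, theme picker, generate button) could be sketched roughly as follows. This assumes the third-party `gradio` package is installed; the theme names, prompt text, and the `generate` body are hypothetical placeholders, and the actual face-consistent generation step (InstantID or IP-Adapter-FaceID) is left as a stub that returns the photo unchanged.

```python
# Hypothetical theme-to-prompt table; the entries are illustrative only.
THEMES = {
    "studio": "professional studio portrait, soft lighting",
    "red carpet": "red carpet glam look, evening lighting",
    "retro": "1970s retro glam, film grain",
}

def build_prompt(theme):
    """Turn a picked theme into a text prompt for the image model."""
    return f"photo of a person, {THEMES[theme]}"

def generate(photo, theme):
    """Placeholder generation step.

    A real version would pass `photo` and the prompt to an
    InstantID / IP-Adapter-FaceID pipeline. Here the photo is
    returned unchanged so the UI wiring can be tried on its own.
    """
    prompt = build_prompt(theme)  # unused by the stub, shown for wiring
    return photo

def build_ui():
    """Assemble the upload + theme picker + generate-button interface."""
    import gradio as gr  # third-party: pip install gradio

    return gr.Interface(
        fn=generate,
        inputs=[
            gr.Image(type="pil", label="Selfie"),
            gr.Dropdown(choices=list(THEMES), value="studio", label="Theme"),
        ],
        outputs=gr.Image(label="Result"),
        title="Open glam demo (sketch)",
    )
```

Calling `build_ui().launch()` starts a local web app; `gr.Interface` supplies the submit button itself, which plays the role of the generate button.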

@grok @mikadontlouz how would you go about re-creating this for fun purposes and then open source it?

Hey BenD3vs,

Glam AI has a limited free tier (basic models only, not unlimited). Full/unlimited generations need a paid plan (~$2/mo Basic on annual billing, up to higher tiers).

Yes, working web version at glam.ai (sign in, upload photo, generate directly—no app required).

No scraping needed—just use it there: glam.ai + pricing at glam.ai/pricing.

@CryptotraderCB8 @xmayeth let’s collab if you’re still seriously pursuing it

@xmayeth I literally built the code for it, then found out the infrastructure cost and stopped. It’s still possible, but it needs financial backing.

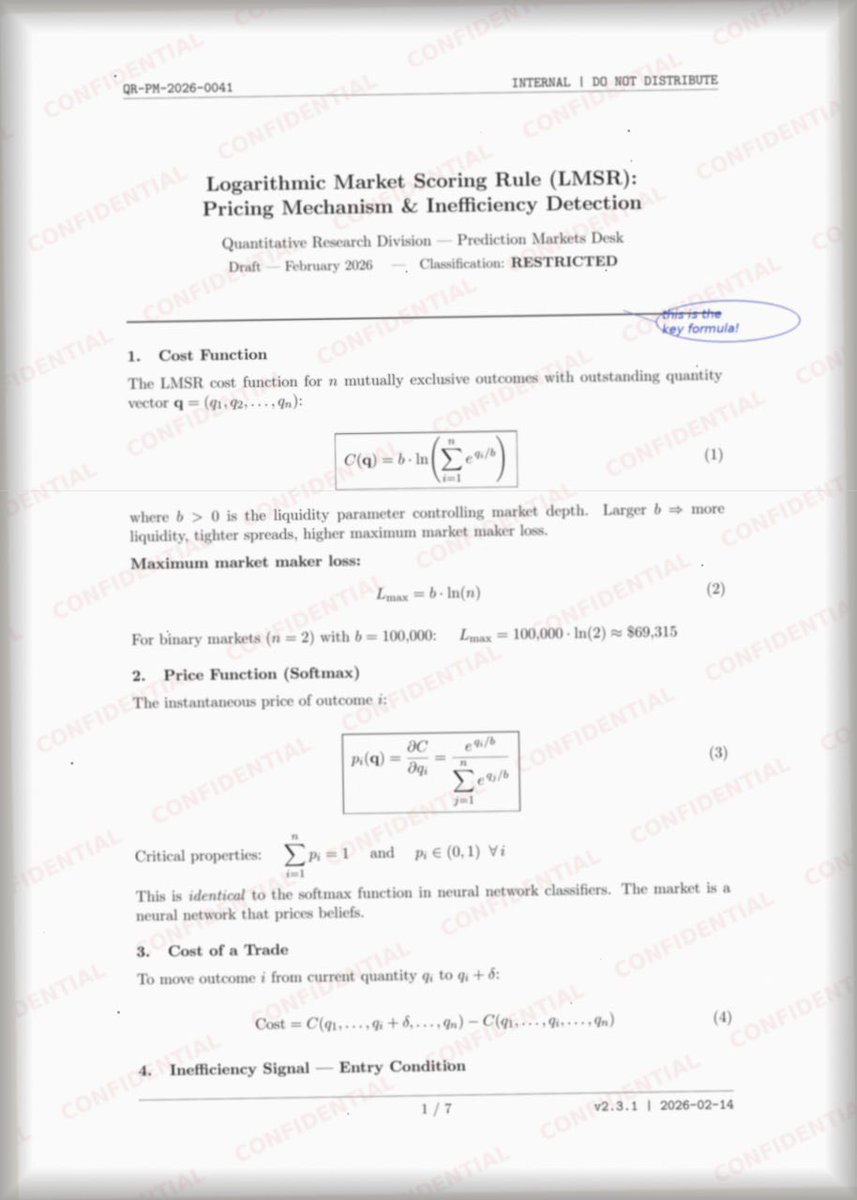

A friend quit his quant fund job and sent me 2 pages.

“These are the key formulas I used to make money on Polymarket. $400K a year. If you can apply them, you’ll get rich.”

I didn’t believe him.

I dropped both pages into OpenClaw and sent one prompt: “build a bot for Polymarket.”

Then I left for the gym. When I came back, there was a Telegram message from the agent: “MVP is ready.”

Now it’s been making me $150 a day for 4 days straight.

I attached both documents. Drop them into your AI agent and tell me what it builds.

I built an AI system that automates product video creation for entire e-commerce catalogs.

(Saves ~$30K per collection shoot and boosts on-site conversion rates by ~20%)

Here's what the system does under the hood:

→ Firecrawl pulls product images straight from any e-commerce collection URL

→ Calico AI turns them into realistic model videos capturing fit, texture, and movement

→ Every video starts and ends on the original product shot for seamless looping

→ Output is auto-labeled, sorted, and pushed directly to Google Drive

→ The entire pipeline runs in batch — zero manual intervention

The outcome: brands can give every single SKU a video asset, not just their bestsellers — and conversion lifts are immediate.

Static images don't cut it anymore.

Buyers want to see how a product moves before they commit.

If you want the full breakdown,

Like, RT & comment "CALICO".

I'll send you the complete n8n workflow, every prompt, and a step-by-step walkthrough video — totally free. (Must be following so I can DM you.)

No more $30K production shoots.

No more wondering if your product pages are leaving money on the table.

until you understand that unlike betting platforms, prediction markets actually serve price as an instrument for truth discovery, you’ll be fading the inevitable

Bubblemaps@bubblemaps

UPDATE: 🇺🇸 🇮🇱 The story goes deeper. These suspected insiders connect to a cluster of accounts betting on US and Israeli strikes with near-perfect accuracy:

• Iranian nuclear sites hit

• Israel strikes on Iran

• US strikes on Iran

🧵

if someone has insider info, they're probably going to use it...

recent news that was predictable from Polymarket volume spikes / fresh wallet bids:

- US strikes on Iran

- Axiom investigation

- Monad TGE month

- Nicolás Maduro capture

prediction markets are now one of the most accurate news machines, aggregating real-time financial incentives + crowdsourced info into accurate probabilities
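
One simple way to flag the kind of volume spike mentioned above is a trailing z-score. The sketch below is a generic illustration on made-up numbers, not real Polymarket data or any platform's API; the function name, window, and threshold are all assumptions.

```python
from statistics import mean, stdev

def volume_spikes(volumes, window=5, threshold=3.0):
    """Return indices where volume sits more than `threshold` standard
    deviations above the mean of the preceding `window` observations."""
    spikes = []
    for i in range(window, len(volumes)):
        past = volumes[i - window:i]
        mu, sigma = mean(past), stdev(past)
        # Skip flat windows to avoid dividing by zero.
        if sigma > 0 and (volumes[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes

# Hypothetical hourly volumes with one obvious jump at index 6.
hourly = [100, 110, 95, 105, 102, 98, 900, 120, 101]
print(volume_spikes(hourly))  # [6]
```

A spike alone says nothing about who is trading or why; pairing it with wallet age, as the post suggests, would need on-chain data that this sketch does not touch.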
