Bertrand Duflos

@BertrandDuflos

AI, platforms, data. Digital transformation. IP, licensing, competition law, copyright.

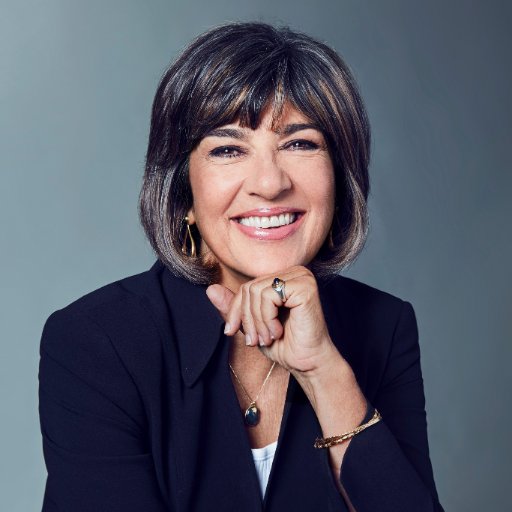

If there is precisely one thing you watch today, make it this. French Senator Claude Malhuret. A microphone. And the most magnificently savage dismantling of the Trump administration ever delivered in a language they almost certainly don’t speak. He covers Iran. He covers corruption. He covers the kind of staggering, industrial-scale incompetence that would get you fired from managing a car park. And he does it with the calm, unhurried certainty of a man who has read every page of the indictment and found it, if anything, worse than expected. France has never pretended to like these people. But this is contempt elevated to an art form. The kind of refined, aristocratic disdain that takes centuries of civilization to produce and approximately ninety seconds to deploy. Malhuret sounds like he is four seconds from the button. Not out of panic. Out of sheer, exhausted disgust. Honestly? Understandable. Watch it. Share it. The adults are speaking. Gandalv / @Microinteracti1

LiteLLM HAS BEEN COMPROMISED, DO NOT UPDATE. We just discovered that LiteLLM PyPI release 1.82.8 has been compromised: it contains litellm_init.pth with base64-encoded instructions to send all the credentials it can find to a remote server and to self-replicate. Link below.
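For anyone wanting a quick local check, here is a minimal sketch that looks for a file with the name given in the post inside the current interpreter's site-packages directories. The helper names (`candidate_dirs`, `find_suspect_files`) are hypothetical, and the check covers only that single indicator: a clean result does not prove an installation is safe.

```python
import site
import sysconfig
from pathlib import Path

SUSPECT_NAME = "litellm_init.pth"  # file name taken from the post above


def candidate_dirs():
    """Collect the site-packages directories of the running interpreter."""
    dirs = set()
    # site.getsitepackages is missing in some older virtualenv setups.
    getter = getattr(site, "getsitepackages", None)
    if getter is not None:
        dirs.update(getter())
    dirs.add(site.getusersitepackages())
    dirs.add(sysconfig.get_paths()["purelib"])
    return sorted(Path(d) for d in dirs)


def find_suspect_files(dirs, name=SUSPECT_NAME):
    """Return paths of files called `name` located directly in `dirs`."""
    hits = []
    for d in dirs:
        path = Path(d) / name
        if path.is_file():
            hits.append(path)
    return hits


if __name__ == "__main__":
    found = find_suspect_files(candidate_dirs())
    if found:
        for path in found:
            print(f"suspicious file present: {path}")
    else:
        print("no file with that name found in site-packages")
```

Python processes `.pth` files in site-packages at interpreter startup, and lines in them that begin with `import` are executed, which is why a stray `.pth` file is worth looking for. Run the script with each interpreter or virtual environment you want to inspect.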

🇫🇷 @MistralAI just made 4 titanic announcements. And nobody in France is talking about it (as usual). The Americans, meanwhile, are floored. So allow me to correct that. 1/ Small 3 → Small 4: A model that brings together ALL of Mistral's know-how. Open source. Free. Mixture of Experts. Reasoning + multimodal + code. XXL context window. Apache 2.0 license = ultra-permissive. It is the new champion of open-source AI worldwide. 2/ Mistral joins the Nemotron coalition (NVIDIA): Alongside Black Forest Labs and the best open-source AI companies on the planet. A single French seat in this elite coalition. That seat is Mistral. 3/ LeanMistral: A model dedicated to formal proofs: math, science, rigorous reasoning. The AI that doesn't get it wrong, and can prove it. For the credibility of AI in the enterprise, it's a game changer. 4/ Mistral Forge: No more artisanal fine-tuning or separate databases. Any company can now create its own model, trained on its own data, verticalized for its own business. Hundreds of hyper-specialized AIs will emerge. They will all have Mistral in their veins. The future of AI is not necessarily the biggest proprietary model behind a paywall. It may be an open-source AI, free, everywhere, in all software and services: a true technological commodity. And the champion shaping that future? It's French. Its name is Mistral. What do you think? #IA #AI #IAGen #LLMs #MBADMB #OpenSource #FrenchTech

Not Americans but international teams developed convnets, AlexNet, attention, AlphaGo, neural LMs, AlphaCode, AlphaFold, transformers, RL, etc. This warmongering CEO does not represent the Americans who helped develop AI either. They have greater ideals. It is sad that my colleagues and I developed the science and tech being used by these money- and power-hungry, despicable people. We need a moratorium on AI weapons. And international institutions that can enforce it.

Three days ago I left autoresearch tuning nanochat for ~2 days on a depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes, today I measured that the leaderboard's "Time to GPT-2" drops from 2.02 hours to 1.80 hours (~11% improvement); this will be the new leaderboard entry. So yes, these are real improvements and they make an actual difference. I am mildly surprised that my very first naive attempt already worked this well on top of what I thought was already a fairly well-tuned project. This is a first for me because I am very used to doing the iterative optimization of neural network training manually. You come up with ideas, you implement them, you check if they work (better validation loss), you come up with new ideas based on that, you read some papers for inspiration, etc. This has been the bread and butter of what I do daily for 2 decades. Seeing the agent do this entire workflow end-to-end and all by itself as it worked through approx. 700 changes autonomously is wild. It really looked at the sequence of results of experiments and used that to plan the next ones. It's not novel, ground-breaking "research" (yet), but all the adjustments are "real", I didn't find them manually previously, and they stack up and actually improved nanochat. Among the bigger things, e.g.:

- It noticed an oversight that my parameterless QKnorm didn't have a scalar multiplier attached, so my attention was too diffuse. The agent found multipliers to sharpen it, pointing to future work.
- It found that the Value Embeddings really like regularization and I wasn't applying any (oops).
- It found that my banded attention was too conservative (I forgot to tune it).
- It found that AdamW betas were all messed up.
- It tuned the weight decay schedule.
- It tuned the network initialization.

This is on top of all the tuning I've already done over a good amount of time. The exact commit is here, from this "round 1" of autoresearch. I am going to kick off "round 2", and in parallel I am looking at how multiple agents can collaborate to unlock parallelism. github.com/karpathy/nanoc…

All LLM frontier labs will do this. It's the final boss battle. It's a lot more complex at scale of course - you don't just have a single train.py file to tune. But doing it is "just engineering" and it's going to work. You spin up a swarm of agents, you have them collaborate to tune smaller models, you promote the most promising ideas to increasingly larger scales, and humans (optionally) contribute on the edges. And more generally, *any* metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.
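To make the propose, evaluate, keep-if-better loop described in the post concrete, here is a toy sketch. It is not the autoresearch code: it swaps the planning agent for plain random perturbation, and `toy_validation_loss`, `propose_change`, and `autoresearch` are hypothetical names with a made-up quadratic objective standing in for a training run. The `relative_improvement` helper reproduces the arithmetic behind the quoted ~11% figure.

```python
import random


def relative_improvement(before, after):
    """Fractional reduction from `before` to `after`."""
    return (before - after) / before


def toy_validation_loss(config):
    """Hypothetical stand-in for a training run: a quadratic bowl
    whose minimum is at lr=0.3, weight_decay=0.1."""
    return (config["lr"] - 0.3) ** 2 + (config["weight_decay"] - 0.1) ** 2


def propose_change(config, rng):
    """Perturb one randomly chosen setting (an agent would plan this step)."""
    key = rng.choice(sorted(config))
    candidate = dict(config)
    candidate[key] += rng.uniform(-0.05, 0.05)
    return candidate


def autoresearch(config, evaluate, n_trials, seed=0):
    """Greedy loop: try a change, keep it only if the metric improves."""
    rng = random.Random(seed)
    best, best_loss = dict(config), evaluate(config)
    accepted = 0
    for _ in range(n_trials):
        candidate = propose_change(best, rng)
        loss = evaluate(candidate)
        if loss < best_loss:  # accepted changes stack on top of each other
            best, best_loss = candidate, loss
            accepted += 1
    return best, best_loss, accepted


if __name__ == "__main__":
    start = {"lr": 1.0, "weight_decay": 0.0}
    best, loss, accepted = autoresearch(start, toy_validation_loss, n_trials=700)
    print(f"accepted {accepted} of 700 trials, final loss {loss:.6f}")
    # Time to GPT-2 figures quoted in the post: 2.02 h -> 1.80 h
    print(f"speedup: {relative_improvement(2.02, 1.80):.1%}")
```

The only requirement this loop places on a problem is the one the post names: a metric that is cheap enough to evaluate many times.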

NOW - Germany's Merz supports U.S. embargoing Spain, claims it's to "convince" them to increase NATO spending.

Ready to make the switch? claude.com/import-memory

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. anthropic.com/news/statement…