Bill Leoutsakos

2.1K posts

@Bi11Leou

Computer Engineering @cambridge_uni | ex-ML Engineer @CosineAI | Eurotech Fellow

Cambridge, England · Joined November 2024

779 Following · 288 Followers

Pinned Tweet

Just built an open-source real-time AI assistant using the newest gpt-realtime API from @OpenAI (watch the demo below)

Check out the repo, feel free to reach out with any questions, and take ideas from it to build SOTA real-time voice agents!

github.com/BillLeoutsakos…

Here are some of the challenges I faced while building it, how I solved them and what I learned: 👇

(also pls like / comment / repost, it's my first launch on Twitter and I wanna go a bit viral 😁)

@Polymarket Years??? Why did bro say that 😭. The stock market is cooked rn

@AndrewCurran_ The thing is... GPT-4.5 was a huge model but the result underperformed. Maybe the methods have advanced a lot since then.

Three weeks ago there were rumors that one of the labs had completed its largest ever successful training run, and that the model that emerged from it performed far above both internal expectations and what people assumed the scaling laws would predict. At the time these were only rumors, and no lab was attached to them. But in light of what we now know about Mythos, they look more credible, and the lab was probably Anthropic.

Around the same time there were also rumors that one of the frontier labs had made an architectural breakthrough. If you are in enough group chats, you hear claims like this constantly, and most turn out to be nothing. But if Anthropic found that training above a certain scale, or in a certain way at that scale, produces capabilities that sit far above the prior trendline, then that is an architectural breakthrough.

I think the leaked blog post was real, but still a draft. Mythos and Capybara were both candidate names for the new tier, though Mythos may now have enough mindshare that they end up keeping it. The specific rumor in early March was that the run produced a model roughly twice as performant as expected. That remains unconfirmed. What is confirmed is that Anthropic told Fortune the new model is a 'step change,' and a sudden 2x would certainly fit the definition.

We will find out in April how much of this is true. My own view is that the broad shape of this is correct even if some of the numbers are wrong. And if it is substantially accurate, then it also casts OpenAI's recent restructuring in a new light. If very large training runs are about to become essential to staying in the game, then a lot of their recent decisions, like dropping Sora, make even more sense strategically.

For the public, this would mean the best models in the world are about to become much more expensive to serve, and therefore much more expensive to use. That will put pressure on rate limits, pricing, and subscription plans that are already subsidized to some unknown degree. Instead of becoming too cheap to meter, frontier intelligence may be about to become too expensive for most of humanity to afford.

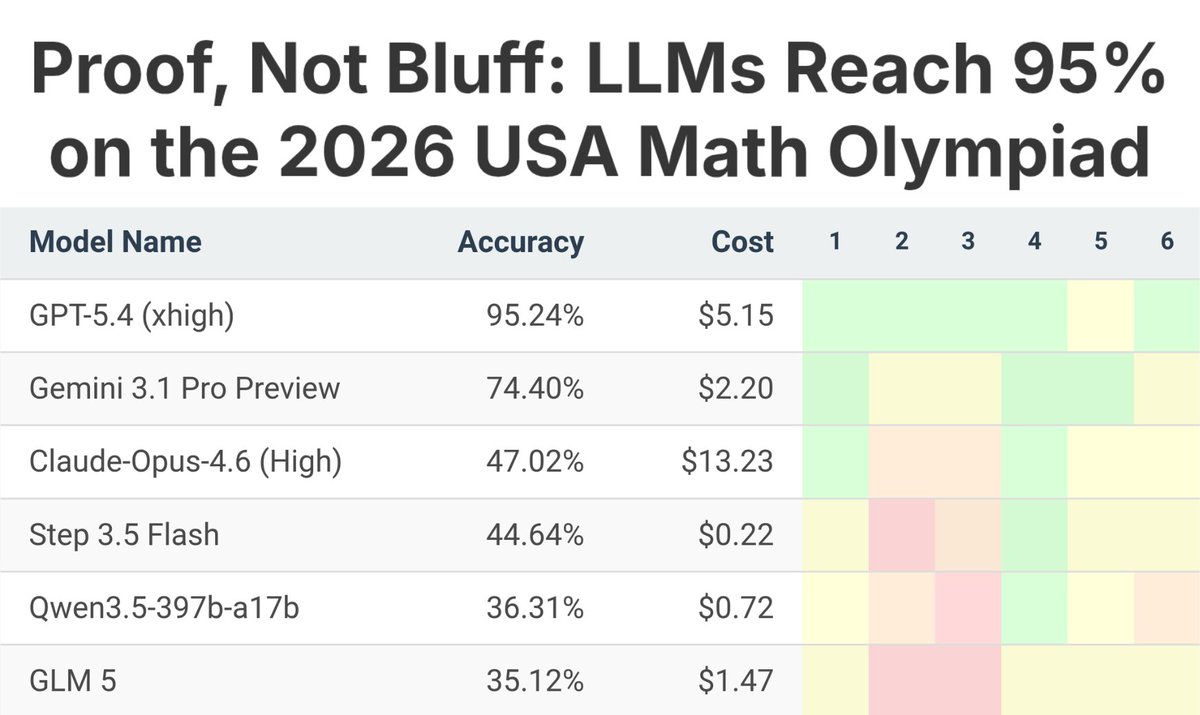

Second-order effects: compute, memory, and energy are about to become much more important than they already are. In the blog they describe the new model as not just an improvement, but as having 'dramatically higher scores' than Opus 4.6 in coding and reasoning, and as being 'far ahead' of any other current model. If this is the new reality, then scale is about to become king in a whole new way. It would also mean, as usual, that Jensen wins again.

"Getting in is the hard part" is what everyone, including me, thought after I got in. It's utter nonsense. The university exams and content are 100x harder than any test you'll take before uni. In high school I was hitting 90 and 100% with modest preparation; now I am grinding and hoping for a 60 or a 70%. If you are in a STEM course at a good uni, doing the course is a pain in the ass.

There is no alpha in graduating from college. Getting in is the hard part; pretty much everyone graduates.

Therefore the optimal path is to get into the best college you can, then drop out immediately while using that name brand as signal and getting real world experience at a very fast paced and high growth job opportunity.

Or idk just have fun while making friends and memories like a normal college student. Just a thought.

@creepydotorg If they put 1% of this creativity in a job interview they would have been employed a long time ago

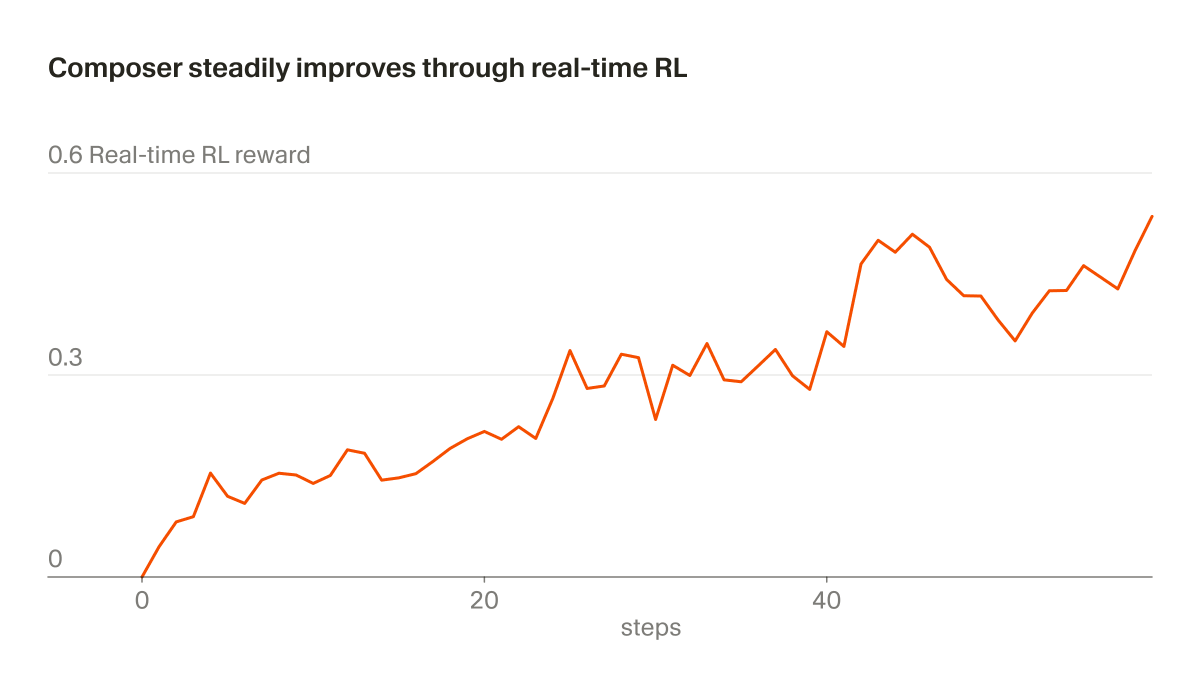

@cursor_ai Plot twist: Cursor drops continual learning before the labs

@scaling01 Claude 4.5 was already phenomenal... imagine what Claude 5 is gonna be. It's over for OpenAI and Google

@DEhnts All the growth beforehand came from building houses anyway. After the 70s we never invested in anything important in tech or otherwise.

Greece used to be at 95 percent of the EU average when it comes to GDP per capita. Now it stands at 68%. Those that talk about a "Greek recovery" need to get their facts straight. Greece stabilized at a low level of economic activity, then grew a bit, but it did not "recover".

EU_Eurostat@EU_Eurostat

The preliminary 2025 results show that gross domestic product (GDP) per capita — expressed in purchasing power standards — ranged between 68% of the EU average in 🇬🇷Greece and 🇧🇬Bulgaria and 239% in 🇱🇺Luxembourg. Read more 👉link.europa.eu/94N43x

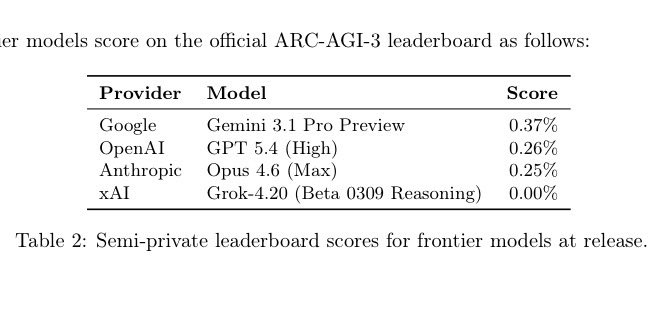

@chatgpt21 Now they will see what it has, put it in the training data, and a year from now we'll all be like, wow, ARC-AGI 3 is saturated, that's insane

The billionaire’s legal team said that because Chancellor Kathaleen McCormick had liked a post celebrating his recent legal defeat and thereby created ‘a perception of bias against Mr. Musk in these cases, recusal is necessary and warranted’. ft.trib.al/6h9vFAH?

The 2 most goated companies ngl

Disclose.tv@disclosetv

NEW - Anduril and Palantir to develop software to run Trump’s Golden Dome antimissile shield — WSJ

- OpenAI shutting down Sora

- Disney backing out from OpenAI deal

- OpenAI backlash from Pentagon deal

- $11.5 billion in quarterly losses

- $207 billion funding gap

- No profitability before 2030

The bubble is bursting

DiscussingFilm@DiscussingFilm

OpenAI is shutting down its AI video slop-making platform Sora.
