🚨 New paper alert!

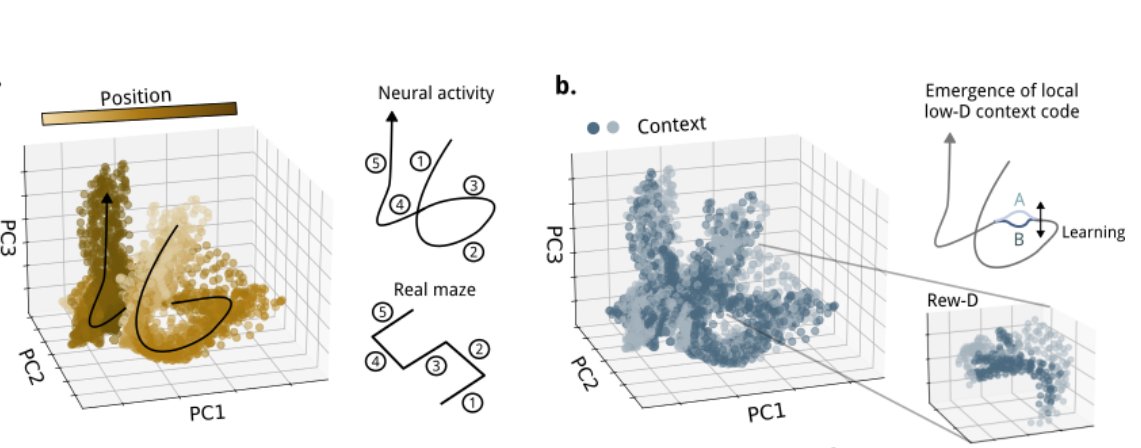

Have you ever suspected that spikes, Dale's law, and E/I balance might be not just biological constraints, but fundamental to how brains compute? Check out my latest work with Christian Machens @Neuro_CF: tinyurl.com/mpwkkubd

🧵 (1/5)
