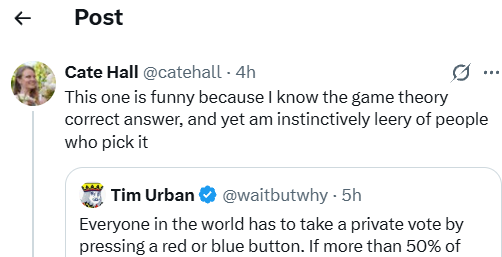

Roko 🐉@RokoMijic

Time for some math on the blender game.

The Blender Game is an excellent probe that reveals a very particular way that the minds of WEIRD (Western, Educated, Industrialized, Rich, and Democratic) people are broken.

Perhaps THE way that they are broken.

What is the rational solution to the blender game? Well, basic game theory for self-interested players gives a clear answer. You never get into the blender. This is because the move of not getting into the blender weakly dominates getting in: whatever happens, you will always be better off or the same if you stay out of the blender. The end.
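The dominance claim can be checked by brute force. This is a minimal sketch that assumes a rule the post only implies: the blender is harmless when at least half of all players are inside, and otherwise everyone inside dies. The function names are made up for illustration. Note that "better off or the same" is what textbooks call weak dominance, which is what the check reports.

```python
def survives(i_go_in: bool, others_in: int, n: int) -> bool:
    """Assumed rule: the blender is safe iff at least half of all n players are inside."""
    total_in = others_in + (1 if i_go_in else 0)
    blender_safe = 2 * total_in >= n
    return (not i_go_in) or blender_safe


def staying_out_dominance(n: int) -> tuple[bool, bool]:
    """Return (weakly_dominates, strictly_dominates) for 'stay out' versus 'get in'."""
    cases = range(n)  # how many of the other n-1 players are inside: 0..n-1
    never_worse = all(survives(False, k, n) >= survives(True, k, n) for k in cases)
    better_somewhere = any(survives(False, k, n) > survives(True, k, n) for k in cases)
    always_better = all(survives(False, k, n) > survives(True, k, n) for k in cases)
    return never_worse and better_somewhere, always_better


print(staying_out_dominance(1000))  # (True, False)
```

Staying out is never worse and sometimes better, but it is not better in every case: when the blender is comfortably full, both moves leave you alive.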

In game theory a Nash Equilibrium is very simple - it is a state where there's no unilateral move anyone could make to improve their own situation. In the (selfish) Blender game there are many Nash Equilibria, because in lots of states the blender is way above 50% full, so it doesn't matter what any one individual does. All of those are Nash Equilibria, as is the state where everyone is outside the blender ("all red" in Tim Urban's red/blue framing). But the states where some people are in the blender and it is less than 50% full are not Nash Equilibria, because any one of the people in the blender could save their own life by exiting.
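Here is a small enumeration of those equilibria for selfish players, described only by how many people are inside. It is a sketch under the same assumed rule (safe at 50% or more inside), with payoff 1 for surviving and 0 for dying; `payoff` and `is_nash` are illustrative names.

```python
def payoff(in_blender: bool, total_in: int, n: int) -> int:
    """Selfish payoff: 1 if the player survives, 0 otherwise (assumed >= 50% rule)."""
    safe = 2 * total_in >= n
    return 1 if (not in_blender or safe) else 0


def is_nash(m: int, n: int) -> bool:
    """True if no single player gains by switching when m of n players are inside."""
    # A player inside considers leaving (m -> m - 1 inside).
    if m > 0 and payoff(False, m - 1, n) > payoff(True, m, n):
        return False
    # A player outside considers entering (m -> m + 1 inside).
    if m < n and payoff(True, m + 1, n) > payoff(False, m, n):
        return False
    return True


print([m for m in range(11) if is_nash(m, 10)])  # [0, 5, 6, 7, 8, 9, 10]
```

With 10 players, the equilibria are "nobody in" plus every state with 5 or more inside; the states with 1 to 4 inside unravel because anyone inside can leave and live.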

But the Nash Equilibrium where everyone is outside is in some sense better. It is more stable.

In game theory we can formalize this as a type of equilibrium called Trembling Hand Perfect Equilibrium (THPE). In THPE we imagine that people will make their moves in the game and then with some small probability they will accidentally press the wrong button because their "hand is trembling".

There is only one THPE for the blender game with selfish players, which is when everyone presses red. It's easy to see why: imagine exactly 50% of people are in the blender, say 500 out of 1000. Now imagine that each one of those 500 people who are deliberately putting themselves into the blender has a small chance of accidentally not going in. If you are one of these people, you reason that even if you don't make the mistake yourself, someone else might. And then you are going to die, which is bad, so you can improve your situation by actually exiting. The same is true for 501 people, 502, etc. None of the "in the blender" states are actually Trembling Hand Perfect Equilibria. But the "everyone out of the blender" state is a Trembling Hand equilibrium, because even though some people might accidentally go into it with some small probability, you are definitely not going to improve your own chances by joining them to almost certainly be blended.
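To put numbers on the trembling, the sketch below computes the exact chance that a selfish player dies if they stay in, when every other player independently ends up on the wrong side with probability `eps`. The tremble model, the 50%-or-more rule, the fixed `eps` of 1/10, and the function name are all assumptions for illustration; the formal THPE definition takes a limit as the tremble goes to zero.

```python
from fractions import Fraction
from math import comb


def death_risk_if_in(n: int, others_intending_in: int, eps: Fraction) -> Fraction:
    """P(I die | I stay in) when each other player flips their intended move w.p. eps."""
    a = others_intending_in   # each of these is actually inside w.p. 1 - eps
    b = n - 1 - a             # each of these is actually inside w.p. eps
    risk = Fraction(0)
    for i in range(a + 1):
        for j in range(b + 1):
            if 2 * (i + j + 1) < n:  # blender under 50% even counting me
                p_i = comb(a, i) * (1 - eps) ** i * eps ** (a - i)
                p_j = comb(b, j) * eps ** j * (1 - eps) ** (b - j)
                risk += p_i * p_j
    return risk


eps = Fraction(1, 10)
for m in range(5, 11):  # m = number of players (including me) intending to be in
    print(m, float(death_risk_if_in(10, m - 1, eps)))
```

The risk shrinks as more people intend to be in, but it never reaches zero, while staying out has zero risk. That is the whole reason no "in" state survives the trembling-hand test for selfish players.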

Okay, but what about if you are a mixture of selfish and altruistic. Say you assign utility +1 to yourself for surviving, and +1/N for each other person who survives.

We can analyze this new game: are there now other "stable" (THPE) equilibria?

Yes. If everyone is a bit altruistic, then "all in the blender" also becomes a Trembling Hand Perfect Equilibrium. The reason for this is that for someone who is at least a little bit altruistic, it is okay for them to suffer a small chance of being blended in exchange for a larger chance of saving the larger group. "The good of the many outweighs the good of the few - or the one". Note that in these games both the size of the set you are saving and the probability of saving them are larger, because for getting out of the blender to actually save yourself, you need one more person to also slip out, which is ε times less likely. So any nonzero amount of altruism is enough to make these blue equilibria THPE.
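The trade-off can be worked out exactly for a small case. The sketch below assumes everyone else intends to be in and slips out independently with probability `eps`, uses the utility from above (+1 for your own survival, `weight` for each other survivor), and keeps the assumed rule that the blender is safe at 50% or more. The function name and the choice of 5 players with `eps` = 1/10 are illustrative.

```python
from fractions import Fraction
from math import comb


def gain_from_staying_in(n: int, eps: Fraction, weight: Fraction) -> Fraction:
    """E[utility | I stay in] - E[utility | I get out], all others intending to be in."""
    others = n - 1
    gain = Fraction(0)
    for k in range(others + 1):  # k = how many others actually end up inside
        mass = comb(others, k) * (1 - eps) ** k * eps ** (others - k)
        if 2 * k >= n:
            continue                   # safe without me: my move changes nothing
        if 2 * (k + 1) >= n:
            gain += mass * weight * k  # I am pivotal: staying in saves the k others
        else:
            gain -= mass               # not enough even with me: staying in kills me
    return gain


n, eps = 5, Fraction(1, 10)
print(gain_from_staying_in(n, eps, Fraction(1, n)))  # 787/50000
print(gain_from_staying_in(n, eps, Fraction(0)))     # -37/10000
```

With 5 players, being pivotal takes two slips (probability of order ε²) while dying takes three (order ε³), so as ε shrinks the altruistic term wins for any positive weight. At a fixed ε like 1/10 a very small weight would not be enough, so "any nonzero amount" should be read as a statement about the small-ε limit.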

This seems to vindicate the "Blue" position. As long as everyone is at least a little bit altruistic, "All in the Blender" is actually a Trembling Hand Perfect Equilibrium, so it is at least equally valid to "All out of the Blender", and some might argue superior since under trembling hand conditions it can prevent anyone from getting blended, most of the time (there are absurdly unlikely cases where many people simultaneously slip up).

But there is a problem.

The "All in the Blender"/"All Blue" equilibrium is only Trembling Hand Perfect if the number of altruists is at or above the 50% threshold. If there are 49% altruists and 51% egoists, then the egoists will rationally abandon the altruists in the blender, because both the altruists' and the egoists' hands are trembling, so the blender is still dangerous, even if only slightly.

But in reality you never really know how many people are slightly altruistic, versus just self-interested and rational. In practice a fair number of these games end up with red winning. If you use a mixed population with more than half the people being purely self-interested or even sadistic, the "All in the Blender"/"All blue" equilibrium is no longer Trembling Hand Perfect. To see why, think about a mixed population where there are 3 selfish players and 2 altruists. Imagine them all provisionally choosing to go into the blender, and then reconsidering their options in light of the fact that someone (or several!) might slip. Each selfish player realizes that if any three (or all four!) of the other four slip, they will be in the blender either with one other person or on their own, and they will then die. Therefore, all three selfish players will not enter the blender. But then the altruists also get blended with high probability, so actually they don't want to get in either.
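The five-player story can be replayed mechanically. This is a sketch, not a formal THPE computation: it fixes the tremble at 1/10 instead of taking a limit, uses simultaneous best responses as the "reconsidering" step, gives altruists weight 1/N per other survivor as defined earlier, and assumes the blender is safe at 50% or more. All names are made up for illustration.

```python
from fractions import Fraction


def count_distribution(probs):
    """Distribution of how many independent players end up inside (probs[i] = P(inside))."""
    dist = [Fraction(1)]
    for p in probs:
        new = [Fraction(0)] * (len(dist) + 1)
        for k, mass in enumerate(dist):
            new[k] += mass * (1 - p)
            new[k + 1] += mass * p
        dist = new
    return dist


def gain_from_entering(me, intends_in, altruism, eps):
    """E[utility | in] - E[utility | out] for player `me`, with the others trembling."""
    n = len(intends_in)
    probs = [(1 - eps) if intends_in[j] else eps for j in range(n) if j != me]
    gain = Fraction(0)
    for k, mass in enumerate(count_distribution(probs)):
        if 2 * k >= n:
            continue                         # safe without me
        if 2 * (k + 1) >= n:
            gain += mass * altruism[me] * k  # pivotal: I save the k others inside
        else:
            gain -= mass                     # I die and save nobody
    return gain


def best_response_rounds(altruism, eps, max_rounds=10):
    """Start from 'everyone in' and let all players re-decide at once until stable."""
    intends_in = [True] * len(altruism)
    history = [tuple(intends_in)]
    for _ in range(max_rounds):
        updated = [gain_from_entering(i, intends_in, altruism, eps) > 0
                   for i in range(len(altruism))]
        if updated == intends_in:
            break
        intends_in = updated
        history.append(tuple(intends_in))
    return history


n = 5
altruism = [Fraction(0)] * 3 + [Fraction(1, n)] * 2  # 3 selfish players, then 2 altruists
for profile in best_response_rounds(altruism, Fraction(1, 10)):
    print(profile)
```

Under these assumptions the selfish players leave in the first round while the altruists still prefer to stay, and in the second round the altruists leave too, since with only two people intending to be in they are very likely to be blended.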

Now imagine that all the altruists are sort of "running the same algorithm", like functional decision theory. If they assign any nonzero probability to the case that they are outnumbered by selfish people, they should all choose to get out of the blender/all play red. This is because in cases where self-interested players outnumber altruists, playing red strictly dominates even for the altruists, and in cases where altruists outnumber the self-interested you can do either and it makes no difference to first order.

High commitment cooperation only makes sense when you are absolutely sure that the altruists outnumber the merely self-interested who larp as altruists.

So to pick blue, it is not enough to merely be an altruist. Rational altruists wouldn't pick blue. You must also walk around with the background assumption that everyone else in the entire world is also an altruist.

What is the flaw of the WEIRD mind that this thought experiment exposes?

It's that WEIRD people do game theory by tentatively assuming that every group they ever interact with is composed of altruists/cooperators, and then maybe adjusting given specific information on bad individuals.

It's "Assume everyone is a cooperator by default, and then adjust if needed" decision theory. WEIRD Decision Theory. WDT.

This sounds stupid, but it is a neat hack that solves lots of things. It prevents WEIRD people from letting rational mutual doubt ruin their lives by defecting just on the chance that the other person might want to defect. It is also probably about the simplest way to solve that, other than "always cooperate".

So to WEIRD people, "All blue" comes out as the obviously correct answer, even though it is not actually the right answer in the math. They don't like it even when they know the math!

The blender game is weirdly, unnaturally balanced to expose this flaw. Usually there is some active benefit to coordination, so the "always assume other people will cooperate if you do" hack does tend to line up with the math, because the small chance of people not cooperating is usually cancelled out by big benefits of cooperation. But in the blender game, there is no benefit to cooperating. The uncoordinated equilibrium is just better.

WEIRD people don't like it when uncoordinated equilibria are just better. This is why they are always trying to cancel capitalism.

And this is why they keep getting into the blender.

□