Pinned Tweet

Timo Bolkart

251 posts

@BolkartTimo

Research Scientist at Google, 3D face & body modeling (FLAME/SMPL-X), reconstruction from images (DECA/EMOCA/ToFU/TEMPEH/ExPose), neural avatars (INSTA, GEM).

Zürich, Switzerland · Joined September 2017

522 Following · 2K Followers

I’m excited to share my next chapter: I will be joining the team of @MZollhoefer and @AlexRichardCS as an AI Research Scientist at Meta in Pittsburgh, working on behavioral foundational models for humans. 🚀

Yesterday marked a very important milestone in my life. I successfully defended my PhD under the supervision of Prof. @JustusThies 🎓.

It has been an incredible four-year journey, and I’m deeply grateful for the opportunity and trust that Justus placed in me as his student.

Timo Bolkart retweeted

GEM - Gaussian Eigen Models for Human Heads is a method that distills networks into lightweight linear avatars represented as an efficient eigenbasis, offering easy adjustability in terms of memory and size.

💻 Code: github.com/Zielon/GEM

🌐 Website: zielon.github.io/gem

Timo Bolkart retweeted

SYNSHOT - Synthetic Prior for FewShot Drivable Head Avatar Inversion is a method that explores how a synthetic dataset can be used to build a prior for few-shot inversion of 4D avatars using in-the-wild images.

💻 Code: github.com/Zielon/synshot

🌐 Website: zielon.github.io/synshot

Timo Bolkart retweeted

Excited to present two projects tomorrow together with Timo Bolkart (@BolkartTimo) at #CVPR2025 in Nashville🎸🇺🇸!

📍 SYNSHOT - Poster 8, Sat, June 14, 10:30–12:30 (Session 3, Hall D)

📍 GEM - Poster 7, Sat, June 14, 17:00–19:00 (Session 4, Hall D)

Come say hi!

Timo Bolkart retweeted

1/n Proud to present our latest attempt to give 3D faces a voice: “THUNDER: Supervising 3D Talking Head Avatars with Analysis-by-Audio-Synthesis”

We synthesize audio from facial motion!

Website: thunder.is.tue.mpg.de

With @Michael_J_Black, Senya Polikovsky, Carolin Schmitt

Timo Bolkart retweeted

We had an incredible week at @dagstuhl for the seminar on Generative Models for 3D Vision! Great talks and lots of inspiration on the future of 3D vision and generative models 🚀

Timo Bolkart retweeted

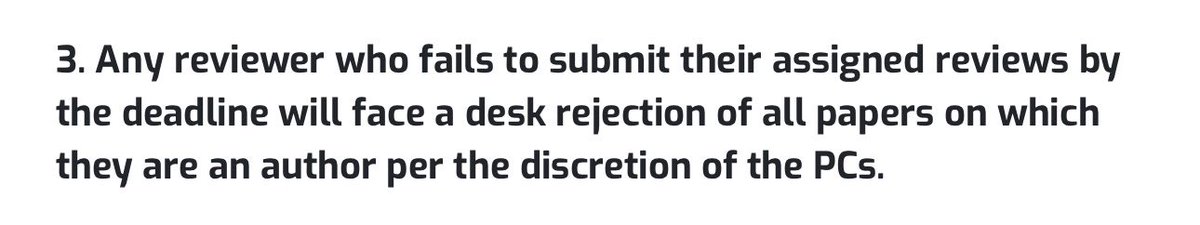

📢Pixel3DMM: Versatile Screen-Space Priors for Single-Image 3D Face Reconstruction📢

-> highly accurate face reconstruction by training powerful ViTs to estimate surface normals and UV coordinates.

The geometric cues from our 2D foundation model backbone constrain the 3DMM parameters, which allows us to achieve remarkable reconstruction accuracy - works for both single image and videos!

In addition, we introduce a new 3D face reconstruction benchmark that evaluates both neutral and posed face geometry.

🌍 simongiebenhain.github.io/pixel3dmm

📷 youtu.be/BwxwEXJwUDc

Great work by @SGiebenhain @TobiasKirschst1 @martin_ruenz @LourdesAgapito

Timo Bolkart retweeted

Pixel3DMM: Versatile Screen-Space Priors for Single-Image 3D Face Reconstruction

TLDR: DINO feature extraction + ViTs predict UV and normal maps, which allow very accurate 2D landmark tracking and, in turn, powerful monocular reconstruction from images and video.

📽️ simongiebenhain.github.io/pixel3dmm/

📜 arxiv.org/pdf/2505.00615

💻 Code coming soon! (These guys always live up to their promise)

Timo Bolkart retweeted

📢 SHeaP: Self-Supervised Head Predictor Learned via 2D Gaussians 📢

Given a single input image, we predict accurate 3D head geometry, pose, and expression.

Previous works (e.g. DECA, EMOCA) use differentiable mesh rasterization to learn a self-supervised head geometry predictor via a photometric reconstruction loss. We borrow these ideas, but our key insight is to replace the mesh rendering with 2D Gaussian Splatting. This leads to much higher accuracy of the underlying predicted geometry and thus more gradient signal during training.

🌍 nlml.github.io/sheap/

🎥 youtu.be/vhXsZJWCBMA/

Great work by @liamschoneveld @_davidedavoli_ @jiapeng_tang

Timo Bolkart retweeted

🎉 I’m happy to share that both GEM and SynShot got accepted to CVPR 2025!

Check out the papers, code, and comparisons below:

🔹 GEM: github.com/Zielon/GEM

🔹 SynShot: github.com/Zielon/SynShot

Thanks to all collaborators, and see you in Nashville! 👋

Timo Bolkart retweeted

Can we reconstruct relightable human hair appearance from real-world visual observations?

We introduce GroomLight, a hybrid inverse rendering method for relightable human hair appearance modeling.

syntec-research.github.io/GroomLight/

Timo Bolkart retweeted

📢Announcing our 3D head avatar benchmark📢

Two tasks with hidden test sets:

- Dynamic Novel View Synthesis on Heads

- Monocular FLAME-driven Head Avatar Reconstruction

Our goal is to make research on 3D head avatars more comparable and ultimately increase the realism of digital humans.

The benchmark studies distinct phenomena of 3D head avatar creation, such as extreme facial expressions, slow motion captures of shaking long hair, or complicated light reflection and refraction patterns of glasses. The two benchmark tasks assess two core desiderata of 3D avatars: While the novel view synthesis challenge focuses on best possible rendering quality of complex moving scenes, the avatar animation challenge is concerned with how well a driving signal is translated into an avatar.

Evaluations are lightweight and consist of diverse video recordings from the popular NeRSemble dataset with a hidden test set. Participation in the benchmark is therefore straightforward and requires only 5 reconstructions per task.

Leaderboard and benchmark submission: kaldir.vc.in.tum.de/nersemble_benc…

Benchmark data access and toolkit: github.com/tobias-kirschs…

Great work by @TobiasKirschst1 @SGiebenhain

I joined @RealityLabs in Zürich to work on digital humans. Meta's vision aligns perfectly with my interests. Reconnected with old friends and met talented new colleagues—I am excited to take part in building the future of communication!

Timo Bolkart retweeted

📢📢NeRSemble v2 Dataset Release📢📢

Head captures of 7.1MP from 16 cameras at 73fps:

* More recordings (425 people)

* Better color calibration

* Convenient download scripts

github.com/tobias-kirschs…

The new version of our dataset adds 156 participants for a total of 425 different people. In its entirety, the dataset now provides 65 million images from over 15 hours of diverse human facial expression performances.

We improved the color consistency of the recorded images with a better color calibration procedure. As a result, 3D reconstructions with images from the NeRSemble dataset should become better and look more realistic.

Finally, we made it much easier to download the recordings with our new download repository.

It now just takes a single command to download all frontal hair shake videos of all participants or to download all recordings of a single participant.

Check it out: tobias-kirschstein.github.io/nersemble/

Awesome work by @TobiasKirschst1 @SGiebenhain !!!

Timo Bolkart retweeted

📢 I am #hiring 2x #PhD candidates to work on Human-centric #3D #ComputerVision at the University of #Amsterdam! 📢

The positions are funded by an #ERC #StartingGrant.

For details and for submitting your application please see:

werkenbij.uva.nl/en/vacancies/p…

🆘 Deadline: Feb 16 🆘

Timo Bolkart retweeted

Many people are in the middle of the @CVPR deadline. So I'm sharing my guide to writing a CVPR paper (or any paper). My students have had this for years but I haven't shared it publicly before. I hope you find it useful and write a great paper. #CVPR2025 medium.com/@black_51980/w…

@taiyasaki Totally agree! Suggesting a speaker to read a book on how to present after their talk isn’t helpful—it just embarrasses them in front of everyone.

Congratulations @YaoFeng1995 for being named one of MIT's Rising Stars in EECS! Her work includes regressing 3D faces & bodies (DECA, PIXIE), avatars (TECA), mesh representations (Ghost-on-the-shell, MeshDiffusion), and human foundation models (ChatPose)

risingstars-eecs.mit.edu/participants/y…
