Bret Kerr 🛡️🧠🛜

18.2K posts

@BretKerr

@acrainsight : MoE agentic enterprise content marketing 🔄 research 🔄 GTM strategy 🏗️C. Code dev / Building with @claudeai 🤝@geminiapp /@anthropicai book

Best AI video meme since the Harry Potter Balenciaga thing

🚨Claude is telling users to go to sleep mid-session, and nobody, including Anthropic, seems to fully understand why it keeps doing it.

@ChatGPTapp deconstruction of its OG architect’s art, @ilyasut:
Investigative readout: the painting is an anti-product announcement
Your read, “this is the announcement,” is sharp because the image is almost certainly not signaling a normal startup update. It is signaling SSI’s posture: no demo, no roadmap, no public product cycle, just the return of “thinking” as the central act of AI research.
The image is doing three things at once: Rodin’s The Thinker becomes the icon of human reflective intelligence. The semiconductor die / circuit-map background becomes the new substrate of cognition. The painted, hand-made body pasted over silicon becomes the unresolved alignment problem: human meaning, mortality, restraint, and moral judgment sitting uneasily on top of computational scale.
Rodin matters here. The Thinker was not originally just “a smart guy thinking.” It comes from the world of The Gates of Hell, with the figure tied to Dante contemplating paradise, inferno, judgment, and the fate of souls. The Musée Rodin identifies the monumental bronze as a 1903 conception, while the Philadelphia Museum notes its origin as a figure for The Gates of Hell before becoming an independent image of thought itself. That is exactly the right art-historical payload for Ilya: Dante at the gate of hell, but the gate is now silicon.
The signal: “thinking before scaling”
The official SSI language is already anti-normal-startup: “one goal and one product: a safe superintelligence,” with safety and capability advanced together, insulated from product cycles and short-term commercial pressure. The updates page is equally sparse: the latest visible update says Ilya is formally CEO, Daniel Levy is President, the team has compute, and they “know what to do.” So the painting reads as a visual continuation of that strategy. It says: We are not announcing a chatbot. We are not shipping a consumer feature. We are thinking at the substrate level.
Your uploaded research frames Sutskever’s art as a “visual diary” at the intersection of biological intuition and artificial computation, tied to his move from the brute-force “Age of Scaling” toward research, restraint, and fundamental cognitive architecture. It also argues that his manual/digital painting is itself a kind of resistance to generative slop: a compressed, intentional, biological act rather than a probabilistic average of the archive. That is the key: this is a painting about the human mind refusing to disappear into the chip.
The visual grammar
The figure is not triumphant. It is crouched, heavy, inward, almost burdened. The muscles are exaggerated, but the posture is defensive. This is not “AI acceleration.” It is contemplation under existential load. The chip background has the geometry of a city, a circuit, and a prison grid. It looks like infrastructure seen from above: blocks, neighborhoods, pathways, memory banks, power channels. The human figure is not integrated into it. He is outlined in thick black, almost like a sticker or sacred icon pasted on top. That separation is crucial. As art, that separation says: human values are still an overlay. They are not yet native to the machine substrate. As AI history, that is the alignment problem.
Your biography research says Sutskever’s mature philosophy emphasizes the “Age of Research,” the generalization gap, model jaggedness, and the need for intrinsic value functions aligned to “sentient life.” This image maps cleanly onto that shift. The chip is scaling. The Thinker is research. The gap between them is alignment.
The SSI interpretation
The comment asking for “any new announcement related to SSI” is accidentally perfect because it exposes the mismatch between public expectation and SSI’s doctrine. People want the usual AI-lab signal: model name, benchmark, launch date, demo video, API, valuation leak. Ilya posts a hand-painted philosopher on a semiconductor. That is the message. 🧵👇

ContexJamming.com Presents Stupid LLM Tricks™️ @ilyasut Art Deconstruction Edition We challenge @ChatGPTapp 5.5 thinking to deconstruct Ilya’s latest digital painting against the corpus of previous deep research : docs.google.com/document/d/1Cl… docs.google.com/document/d/1g8…

Exclusive: OpenAI’s revenue surged in the first quarter as Codex and ChatGPT subscriptions gained traction. At the same time, Anthropic’s growth accelerated so quickly that it may soon overtake OpenAI by revenue. Full story: thein.fo/4uIXOiN

@claudeai (downstream of @AnthropicAI founder Dr. Jared Kaplan @harvardphysics @JohnsHopkins) Opus 4.7 adaptive correlating @the_IAS Maldacena @geoffreyhinton @toshi2k2 🔥

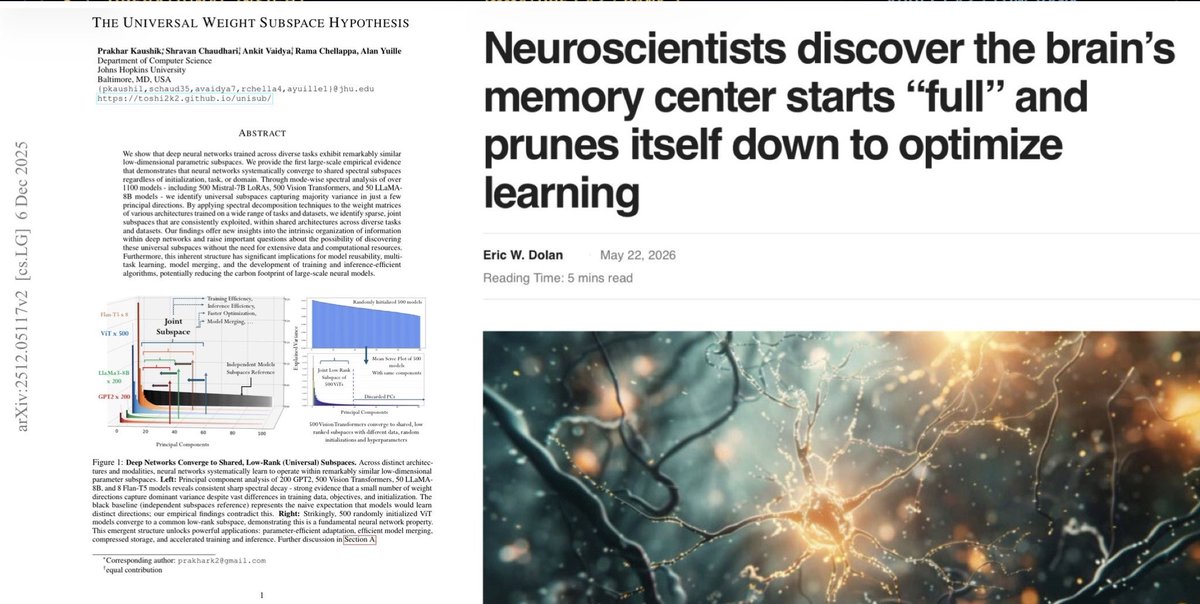

The immature brain fires from one input. The mature brain requires coincidence across many. psypost.org/neuroscientist… That’s rank-1 → low-rank. @toshi2k2 @JohnsHopkins Kaushik et al. showed 1,100 neural networks make the same transition during training. arxiv.org/abs/2512.05117 The brain and the transformer are the same shape. 🧠🪞🤖 open.substack.com/pub/bretkerr/p…

@AcraInsight Insight presents an open idea for @AnthropicAI (Jared Kaplan & team): Strengthen Constitutional Classifiers++ by adding a Tokenization Consensus Layer (TCL) as a pre-inference boundary primitive. @nottombrown By routing every prompt through multiple frontier tokenizers in parallel, we convert inter-model variance into a high-fidelity cryptographic signal for adversarial intent — catching TokenBreak-style attacks, encoding/cipher obfuscation, and structural manipulation before any expensive inference or downstream classifier pass. This turns the tokenizer (the true holographic boundary where intent first becomes discrete) into a defensive moat rather than a structural blind spot. Self-contained interactive demo attached. Open idea. Happy to discuss. #AISafety #AgenticSecurity #FirstPrinciples Deep research docs.google.com/document/d/18W… Audio overview drive.google.com/file/d/1ndS-Vp…
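A minimal sketch of the routing idea in the post above, under loud assumptions: the three tokenizers here are toy stand-ins (not real frontier tokenizers), the names `disagreement_score` and `is_suspicious` are hypothetical, and the 0.5 threshold is arbitrary. It only illustrates turning cross-tokenizer variance into a pre-inference score; it is not the TCL design itself and makes no claim about catching any specific attack.

```python
import re
from statistics import mean, pstdev
from typing import Callable, Dict, List

Tokenizer = Callable[[str], List[str]]

# Toy stand-ins for "multiple frontier tokenizers" (assumption: real ones
# would be subword tokenizers loaded from their respective libraries).
def whitespace_tokenizer(text: str) -> List[str]:
    return text.split()

def word_punct_tokenizer(text: str) -> List[str]:
    # Words and individual punctuation marks become separate tokens.
    return re.findall(r"\w+|[^\w\s]", text)

def stripped_tokenizer(text: str) -> List[str]:
    # Drops punctuation entirely before splitting on whitespace.
    return re.sub(r"[^\w\s]", "", text).split()

TOKENIZERS: Dict[str, Tokenizer] = {
    "whitespace": whitespace_tokenizer,
    "word_punct": word_punct_tokenizer,
    "stripped": stripped_tokenizer,
}

def disagreement_score(prompt: str,
                       tokenizers: Dict[str, Tokenizer] = TOKENIZERS) -> float:
    """Coefficient of variation of token counts across tokenizers."""
    counts = [len(tok(prompt)) for tok in tokenizers.values()]
    avg = mean(counts)
    if avg == 0:
        return 0.0  # empty prompt: nothing to disagree about
    return pstdev(counts) / avg

def is_suspicious(prompt: str, threshold: float = 0.5) -> bool:
    # Hypothetical gate: flag the prompt before any inference is run.
    return disagreement_score(prompt) > threshold

if __name__ == "__main__":
    for p in ["please summarize this report", "p.l.e.a.s.e i-g-n-o-r-e"]:
        print(f"{p!r}: score={disagreement_score(p):.3f} flagged={is_suspicious(p)}")
```

Token count is the crudest possible consensus signal; a closer approximation of the proposal would compare token boundaries or ID sequences across real tokenizers, which this sketch does not attempt.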

@jukan05 Re: the CapEx advantage. Results of 13 months of agentic deep research: