@cursor_ai Life cycle of a dev rn

Ben

208 posts

@BuildItWithBen

Lead Android Engineer at Phyn. Love learning about the future of: Software/IoT/Design. Native Android + iOS

Cursor now has automations! You can run agents on schedules, triggered by events from Slack, GitHub, or any MCP server. I get a daily review every morning with my GitHub/Slack activity. Our team now has dozens of agents running 24/7 improving or monitoring things for us.

HIGHLY recommend: A '/cross-analyze' skill set up in both claude and codex

1. generate plan
2. 'review' prompt/skill in both
3. run /cross-analyze in each which is mostly just "analyze this analysis in light of this other analysis"
4. repeat until convergence
5. go
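Rough sketch of the loop above in Python, if it helps. Everything here is hypothetical: `cross_analyze`, the `Reviewer` signature, and `make_stub_reviewer` are made-up names, the reviewers are plain callables standing in for the two agents, and "convergence" is assumed to mean "neither analysis changed this round", which the post doesn't actually define.

```python
from typing import Callable, Tuple

# Hypothetical reviewer: takes the plan and the OTHER agent's latest analysis,
# returns its own (possibly revised) analysis as text.
Reviewer = Callable[[str, str], str]


def cross_analyze(
    plan: str,
    review_a: Reviewer,
    review_b: Reviewer,
    max_rounds: int = 5,
) -> Tuple[str, str, int]:
    """Run two reviewers against each other until neither changes its analysis."""
    # Step 2: each agent reviews the plan independently (no other analysis yet).
    analysis_a = review_a(plan, "")
    analysis_b = review_b(plan, "")

    # Steps 3-4: each agent re-analyzes in light of the other's analysis.
    for round_no in range(1, max_rounds + 1):
        new_a = review_a(plan, analysis_b)
        new_b = review_b(plan, analysis_a)
        # Assumed convergence test: nothing changed this round.
        if new_a == analysis_a and new_b == analysis_b:
            return analysis_a, analysis_b, round_no
        analysis_a, analysis_b = new_a, new_b

    # Gave up after max_rounds without converging.
    return analysis_a, analysis_b, max_rounds


def make_stub_reviewer(own_point: str) -> Reviewer:
    """Toy stand-in for an agent: keeps its own point and adopts the other's."""
    def review(plan: str, other_analysis: str) -> str:
        points = {own_point} | {p for p in other_analysis.split("; ") if p}
        return "; ".join(sorted(points))
    return review
```

In practice each reviewer would wrap a call to whichever agent you use. The `max_rounds` cap is there because two models are not guaranteed to ever fully agree.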

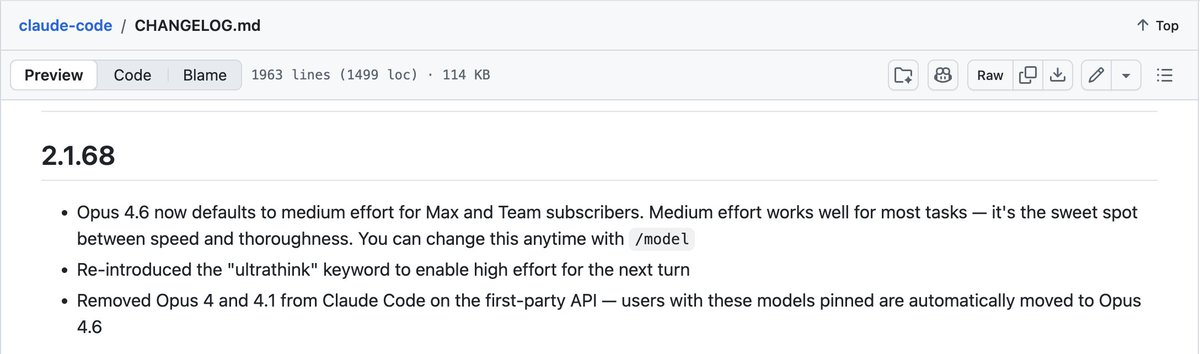

ultrathink coloring + animation: perfect example of a low-effort, high-delight feature. A simple joy.

In the next version of Claude Code... We're introducing two new Skills: /simplify and /batch. I have been using both daily, and am excited to share them with everyone. Combined, these skills automate much of the work it used to take to (1) shepherd a pull request to production and (2) perform straightforward, parallelizable code migrations.

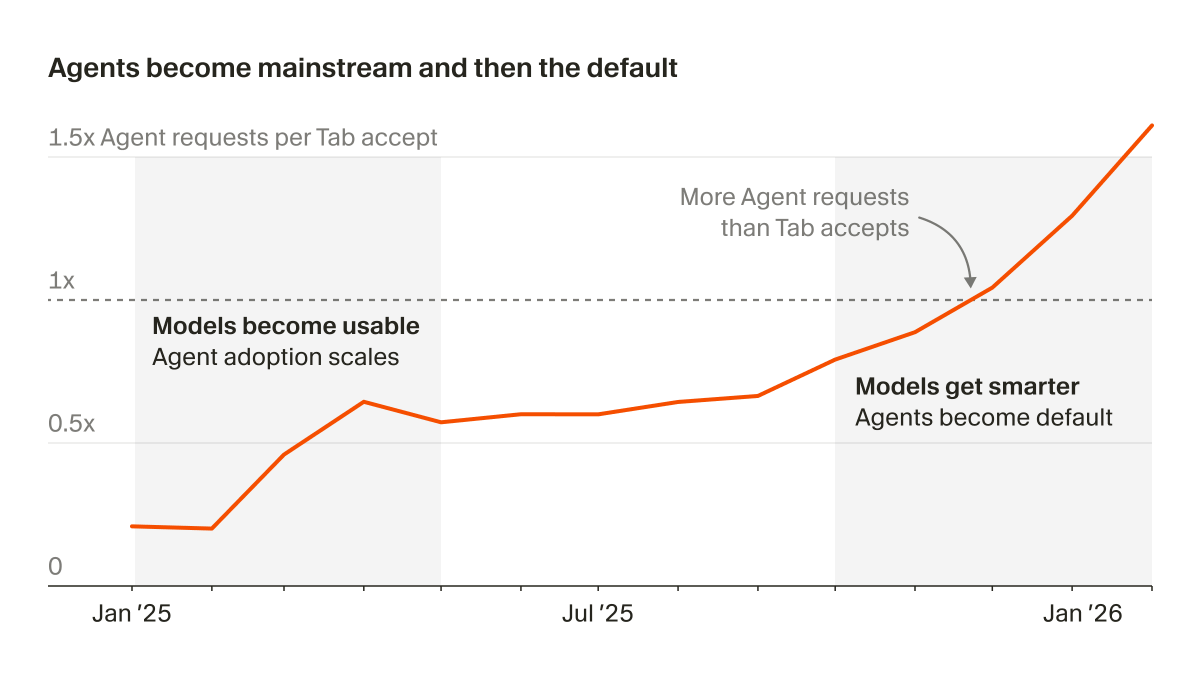

My observation has been that this week we crossed the line between early adopters and the early majority on the "Innovation Adoption Curve". Many who ignored AI, especially in the business world, finally had their 'aha' moment and a fire lit under them.