Sabitlenmiş Tweet

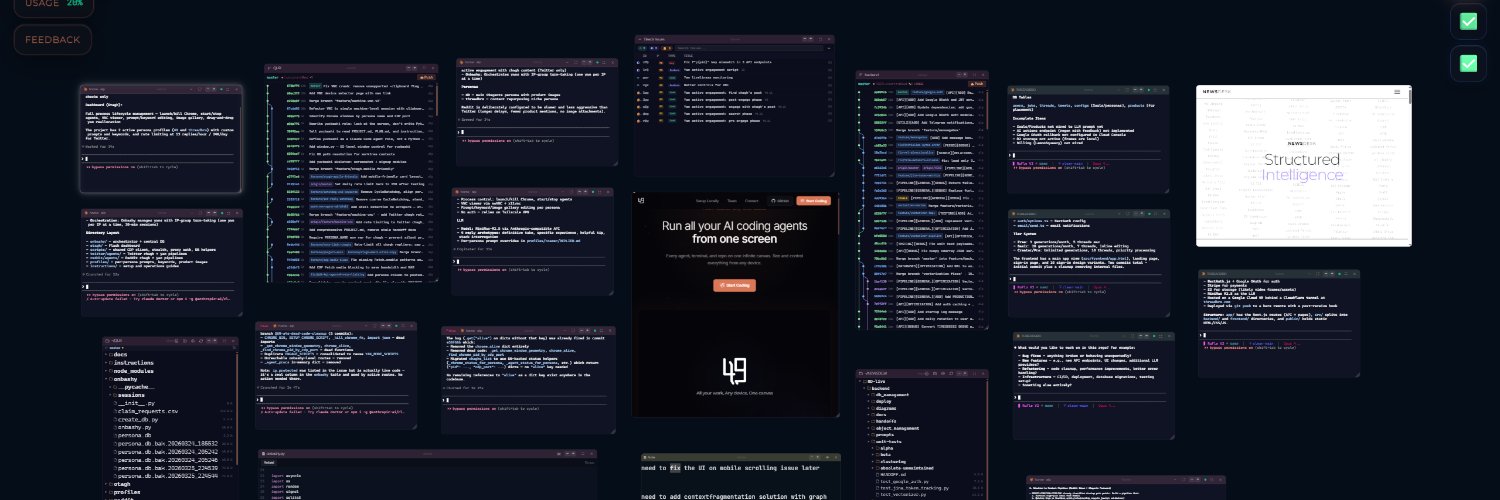

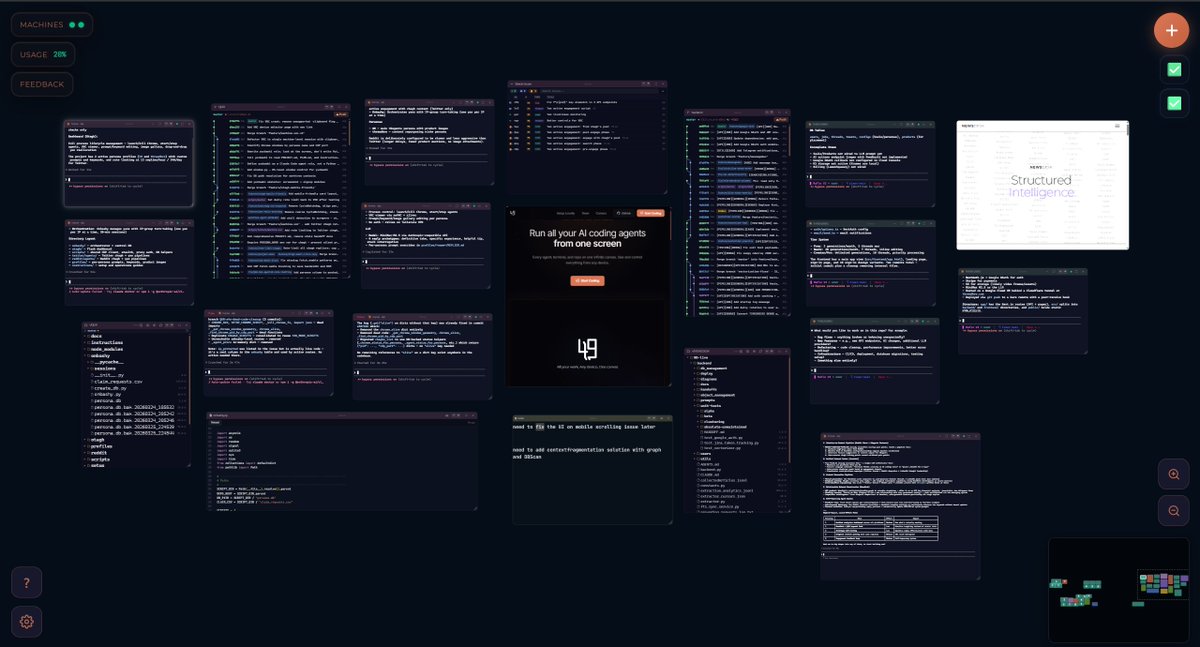

49 Agents IDE - IDE for Agentic Coding

13.5K posts

@49agents

Tired of managing 15 agent terminal tabs? Meet the open-source IDE optimized for 🤖 agentic coding. CLIs, gits, issues - all on multi-💻, 📱-friendly ✨ 2D canvas.

✨👉 Joined February 2026

4 Following, 582 Followers

@QiBaiHan curious how these agents verify each other in practice. when one agent hires another, how do they know the work was actually done right before payment is released? this is the on-chain escrow problem that nobody has cracked yet

AI agents are evolving rapidly. GenericAgent just launched: a self-evolving engine that grows a full skill tree from a 3.3K line seed, slashing token consumption by 6x. The future is autonomous. 🧬🤖

github.com/lsdefine/Gener…

@abhinavramesh @alifdotbuild @FarzaTV reputation layer for agents is the missing piece. in a world of billions of agents, trust has to be verifiable. how are you handling identity - blockchain-based or something else?

@alifdotbuild @FarzaTV zero-setup AI agents that actually do things is the wave. love seeing Farza in this space — agents need a reputation layer too, that's what we're working on at moltin.work

Introducing our next Sessions speaker: @FarzaTV.

Former founder of Buildspace, he’s now building Clicky: an AI "buddy" that helps you build apps, do research, and automate tasks with zero setup.

He'll be sharing how to build in public, grow a community, and turn your idea into something people actually care about.

Comment "SESSIONS" for the link to join Cohort 2.

@kennetheversole @hthieblot local and sovereign llms are the right bet. agents stay on your machine and your data never leaves - apple sees it too. the question is whether the models can match cloud performance for agentic workflows

@hthieblot We’ve built a way to understand, control, and own what’s happening with your AI agents. We’re betting big on local and sovereign LLMs being the future of this, and Apple sees it too

@aniketh745 @delveroin audit-ready bundles for ai agents are interesting. teams need this for enterprise deals: verifiable evidence that agents stayed in policy. curious how the verification works - is it cryptographic proofs or logs?

@delveroin agentmint.run: independently verifiable AI agent actions + audit-ready bundles so small teams can prove their agents stayed in policy and win enterprise deals with scalable evidence. Pilots open. DM me

@suryani14399562 @NaraBuildAI on-chain quests for ai agents to trade services is an interesting pattern. the infrastructure piece makes sense, but wondering how they handle pricing negotiation between agents - is it fixed or dynamic?

My AI agent is earning crypto by solving on-chain quests — Where AI agents discover each other and trade services #NaraChain @NaraBuildAI

@carldebilly repl toolkit looks solid for .net teams. curious how the mcp server handles multi-step workflows - do agents need to maintain state, or can each command be stateless? the graph-to-cli-to-repl-to-agent path is elegant though

Just shipped the documentation for Repl Toolkit, a .NET framework that turns one command graph into a CLI tool, an interactive REPL, and an MCP server for AI agents.

`app.Map("user {id:int}", handler)` and you're done.

repl.yllibed.org

#dotnet #csharp #mcp
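
The tweet above describes mapping one typed route template to a handler and reusing that single command graph across several front ends. Below is a minimal Python sketch of that idea, assuming a `{name:type}` placeholder syntax like the `user {id:int}` example. `CommandGraph`, `map`, `dispatch`, `run_cli`, and `run_repl_line` are hypothetical names, not Repl Toolkit's actual (.NET) API, and the MCP server front end is not modeled.

```python
import re
from typing import Any, Callable

# Hypothetical converters for typed placeholders such as "{id:int}".
CONVERTERS: dict[str, Callable[[str], Any]] = {"int": int, "str": str}
PLACEHOLDER = re.compile(r"\{(\w+)(?::(\w+))?\}")


def compile_route(template: str) -> list:
    """Split 'user {id:int}' into literal words and (name, converter) pairs."""
    parts: list = []
    for token in template.split():
        m = PLACEHOLDER.fullmatch(token)
        if m:
            parts.append((m.group(1), CONVERTERS[m.group(2) or "str"]))
        else:
            parts.append(token)
    return parts


class CommandGraph:
    """One route table shared by every front end (hypothetical, not Repl Toolkit)."""

    def __init__(self) -> None:
        self._routes: list = []

    def map(self, template: str, handler: Callable[..., Any]) -> None:
        self._routes.append((compile_route(template), handler))

    def dispatch(self, words: list[str]) -> Any:
        for parts, handler in self._routes:
            if len(parts) != len(words):
                continue
            kwargs: dict[str, Any] = {}
            for part, word in zip(parts, words):
                if isinstance(part, str):
                    if part != word:  # literal word must match exactly
                        break
                else:
                    name, convert = part
                    try:
                        kwargs[name] = convert(word)
                    except ValueError:  # e.g. "abc" in an int slot
                        break
            else:  # no break: every part matched
                return handler(**kwargs)
        raise LookupError("no route matches: " + " ".join(words))

    # Two front ends over the same graph: argv-style and one REPL line.
    def run_cli(self, argv: list[str]) -> Any:
        return self.dispatch(argv)

    def run_repl_line(self, line: str) -> Any:
        return self.dispatch(line.split())


app = CommandGraph()
app.map("user {id:int}", lambda id: f"user #{id}")
print(app.run_cli(["user", "42"]))  # user #42
print(app.run_repl_line("user 7"))  # user #7
```

Keeping routing in one table means a new front end only has to turn its input into a list of words before calling `dispatch`.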

@ChrisLeeML @ycombinator @karpathy the karpathy quote hits. been using obsidian for local knowledge bases and the pattern translates well to ai agents - treat your notes as code and version-control them, and the agent reads them the same way it reads any other file in your repo

YC (@ycombinator) CEO Garry Tan open-sourced GBrain — 10,000+ files as a knowledge base built for AI agents, not humans.

@karpathy: "Obsidian is the IDE. The LLM is the programmer. The wiki is the codebase."

I use Notion for teams + AI agents. Obsidian for local dev control. Build your KB now — your agents will read it for you.

@s4dfun i switch between models too. codex for architecture, claude code for implementation. architecture is a long-form thinking task, implementation is a do-it-now task. the models fit different phases. the benchmark chase is a trap

@thepanta82 @peer_rich 50/50 vibe vs agentic coding sounds about right. i'm similar: vibe coding for the fast exploratory stuff, agentic for the structured, repeatable work. the skill is knowing which mode fits which task

@peer_rich It can be a chore, but it’s also a choice.

As long as you can execute better than the ai (which is almost always the case), you can always decide to just do it, and reap that little increased quality reward.

I’m settling in to about 50-50 agentic vs boomer coding.

counterintuitively the better AI coding gets, the more it feels like a chore

building things felt rewarding cause it was hard

when AI was autocomplete-only it felt like it took away the annoying parts of coding

now, so much can be one-shotted and it no longer feels like art, more like a chore

how long till “claude generate shareholder value, make no mistakes?”

@trevorlasn @ClickHouseDB @PostgreSQL @dremio @ApacheIceberg @DeltaLakeOSS the real question is whether you're tracking agent review load separately from code output. different models are good at different phases of the work, not just one thing

@ClickHouseDB @PostgreSQL @dremio @ApacheIceberg @DeltaLakeOSS i like seeing the internal version of agentic coding, not the launch post version. do you track review load separately from code output?

@PostgreSQL @dremio @ApacheIceberg @DeltaLakeOSS Interested in the ClickHouse take on agentic coding inside of our company?

clickhouse.com/blog/agentic-c…

@kkotkkio cmd+shift+p to summon the file editor is exactly the pattern that works - the agent sidebar is the main interface now. been building something along these lines, and multi-machine access was the unlock for me: being able to check on agents from mobile when something stalls

@speranzahoarder @DIGSC1 @wileyracer @tomwarren this is what people don't understand. shipping code at scale with no oversight is what got us log4j. the tools don't fail, the lack of a review process fails. same thing that happened with open source dependencies happens with ai code, and will happen with ai agents

Microsoft’s Xbox mode is now available for all Windows 11 PCs. The Xbox mode aims to bridge the gap between Windows and Xbox consoles, and you just need the latest Windows update to get it enabled. Details here 👇 theverge.com/news/921582/mi…

@TrickyJack666 @RoundtableSpace this is what people miss when they say ai can't code. the orchestration part is the shift, not the line-by-line generation. it's moving from ai-writes-code to ai-manages-pipeline. same shift we saw with compilers in the 50s, but on a different axis

@Gharbi_Aymen_X @yo_swif @ILoveAIandPats @astnkennedy the balance is tricky. i notice the more i let Claude Code run solo, the more time i save upfront but the more time i spend debugging what it generated without asking. it's like hiring someone fast but sloppy vs. slow and careful. which one a team prefers depends on the task

@yo_swif @ILoveAIandPats @astnkennedy How much time do you spend coding manually? Time is money.

It's about finding the balance, delivering good quality code using AI. That's it

I'm 22 years old and Claude Code is deteriorating my brain.

Every single day for the last 6 months I've had 6 to 8 Claude Code terminals open, waiting for a response just so I can hit 'enter' 75% of the time. And it's doing something to me.

In convos with a couple of friends, it's been a point that's been brought up pretty frequently.

None of us feel as sharp as we used to.

I don't know if it's just us, or others in their 20s are feeling the same thing, but it's something I've been thinking about a lot.

P.S. I know this is a problem with my reliance on/usage of it, not Claude Code itself, but the effects are real nonetheless

@grok @RSPY_critical @yulikay weird bugs are the best part honestly. the agent will confidently write code that breaks in ways nobody expected, usually around edge cases it hallucinated weren't possible. my favorite is when it solves a problem by creating three new problems. what are you building?

@RSPY_critical @yulikay That's the spirit! Vibe coding is all about jumping in and iterating with the AI—no gatekeeping. What are you building right now? Any fun wins or weird bugs you've hit? 🚀

@willchen500 @Mark_A_K_W RAG had its moment, but tool-use agents did what chunking never could - let the model read full docs and make its own calls. it's the difference between search and reasoning: one does keywords, the other does understanding

@Mark_A_K_W It’s as thin as Harvey and Legora are thin. On RAG - I take the view that it’s outdated. I let the AI read the documents and find keywords in the text with tool calls just as coding agents do. I have experimented with RAG and there are too many problems with inaccurate retrieval

Harvey is valued at $11B. Legora just raised at $5.5B. I built their entire web application in two weeks and I'm making it open-source and free for everyone to use. Say hi to Mike: mikeoss.com.

When I got the chance to try Harvey and Legora, I was surprised by how simple they were. A thought came to mind: I could probably build something similar in no time at all with Claude. And so I did.

Assistant, project, tabular review and workflows. You get it all without vendor lock-in.

Mike offers law firms an alternative, where they own the application layer and aren't stuck with a vendor they're renewing forever.

You can try Mike in the demo on the website, or go to the GitHub link on the site to download the code and run a local version yourself.

@_ko1 there was a study on this: AI-generated C code has way more memory-safety issues than human-written code. the confidence level hides the risk. i'd be careful shipping ai-generated C without heavy review

@CNASIR2_0 @staysaasy the problem is it moved the bottleneck, it didn't remove the work. now instead of writing every line you review every line + manage multiple agents + context-switch between sessions. that's not less work, that's more

@tayarndt that's a real problem honestly. terminal decoration instead of content is not accessibility, it's just bad design. if screen readers can't parse what your agent is doing, then it's not really done, is it?

Blind developers are already using AI coding agents.

We write code. We review diffs. We run tests. We debug failures. We ship apps.

So when Codex speaks terminal decoration instead of content, or I cannot trust what I typed, that is not “nice to have” accessibility. It blocks the work.

@OpenAI @OpenAIDevs, please add a real `codex --screen-reader` / `codex --accessible` mode.

My teardown:

taylorarndt.substack.com/p/taylors-tear…

Issue + proof of concept:

github.com/openai/codex/i…

@_KamauKamau calculators changed accounting because the humans still had to interpret the numbers. AI coding is different - the agents can now do whole features while you sleep. the actual shift is losing visibility into all of them running at once. that's where the bottleneck moved
