Adam Satterfield

@Bytesized_qual

Technology executive driving innovation in AI, cloud, DevOps, and security. 20+ years of experience building teams and delivering scalable solutions.

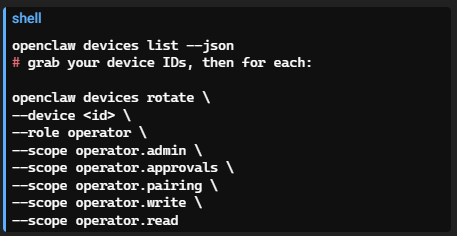

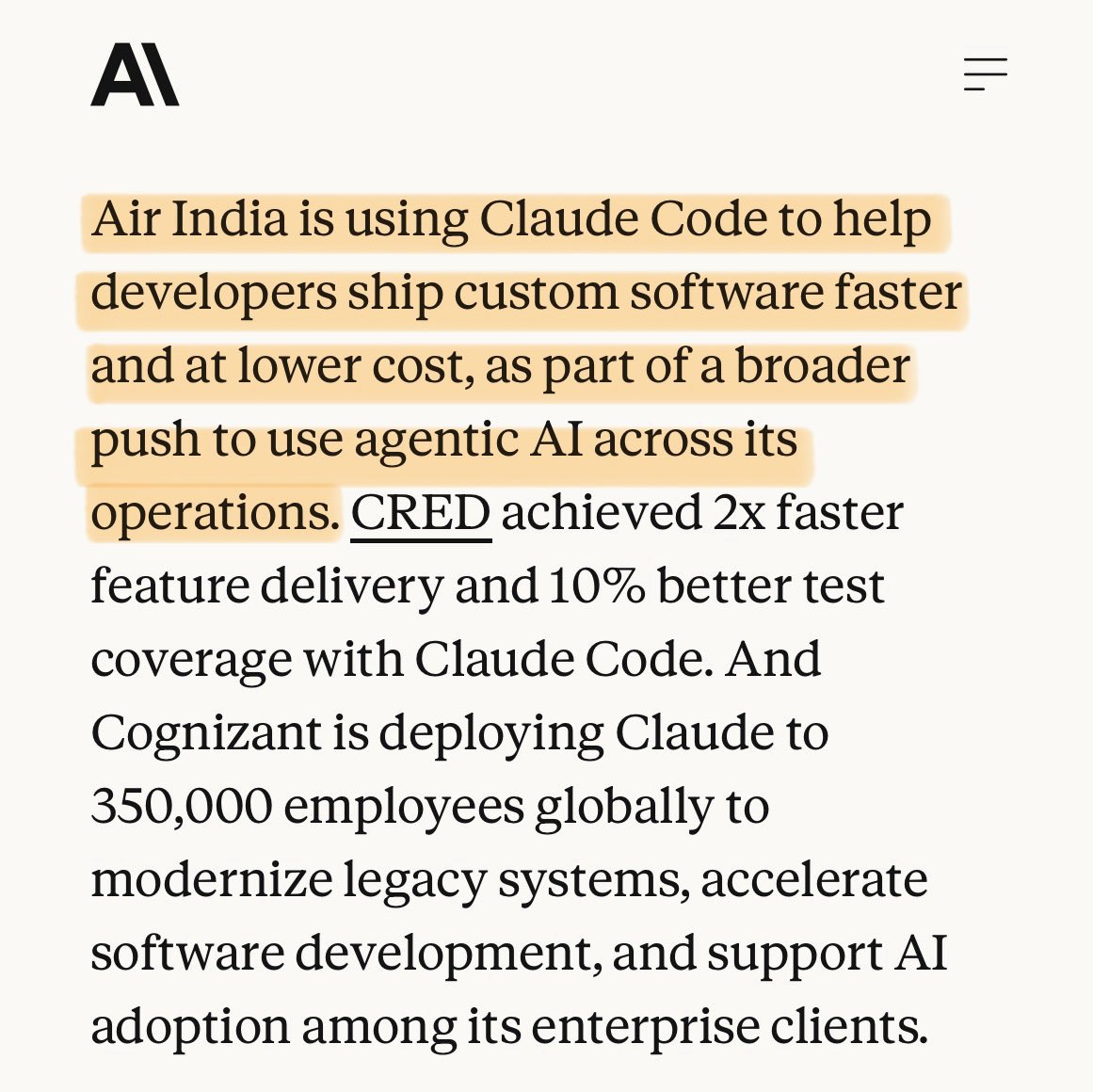

qwen overtook meta's llama in total huggingface downloads. alibaba is now #1 in open source AI.

i've been running qwen models for 2 weeks. 6 benchmarks. 4 models. dense vs MoE vs distilled vs base. every test on a single 3090. the download numbers are catching up to what the data already showed.

meta and alexander wang keep talking about superintelligence while their actual models can't hold a coding benchmark anymore. llama used to be the default. now it's the fallback for people who haven't updated their scripts.

qwen 3.5 27B dense holds 35 tok/s from 4K to 262K context on one card. no degradation. the 35B MoE runs 112 tok/s flat across the same range. the 80B coder hit 46 tok/s on 2x 3090s and oneshotted a 564 line particle sim. their 9B beats OpenAI's 120B on key benchmarks. good architecture over parameter count.

i ran dense vs MoE vs distilled on the same GPU, same quant, same prompt. wrote an article breaking down every result. the data speaks for itself.