Canopy Wave

1.2K posts

@CanopyWave_AI

Best inference platform for open models. Web: https://t.co/Pkdc0DKvhE Discord: https://t.co/0Oqvzl9Ney

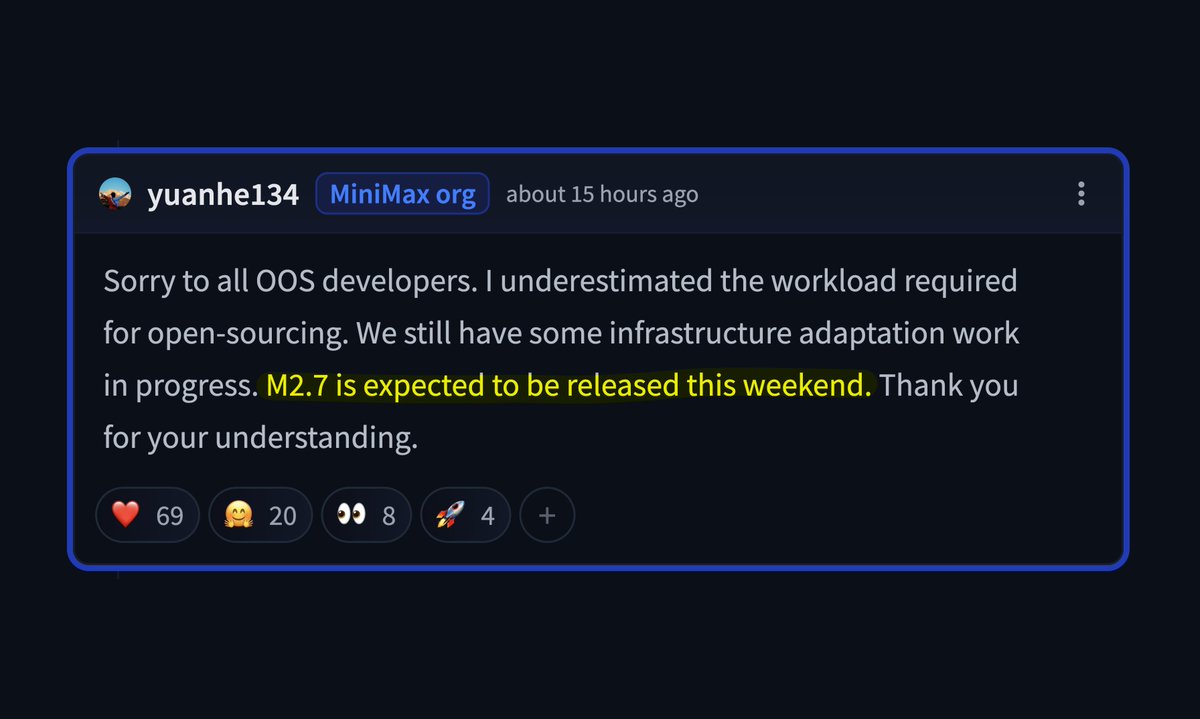

Anthropic banned third-party tools like my Julius (OpenClaw) from using their subscription API 💀

I started searching for a new API provider and found the Minimax M2.7 starter subscription. Now I pay just $10/month and get 95% of the performance of Opus 4.6 at 95% cheaper.

I used to spend $150 on the Claude API every month… now just $10 for nearly the same performance. This switch actually hits different.

Who else switched after the ban? Drop your new setup.

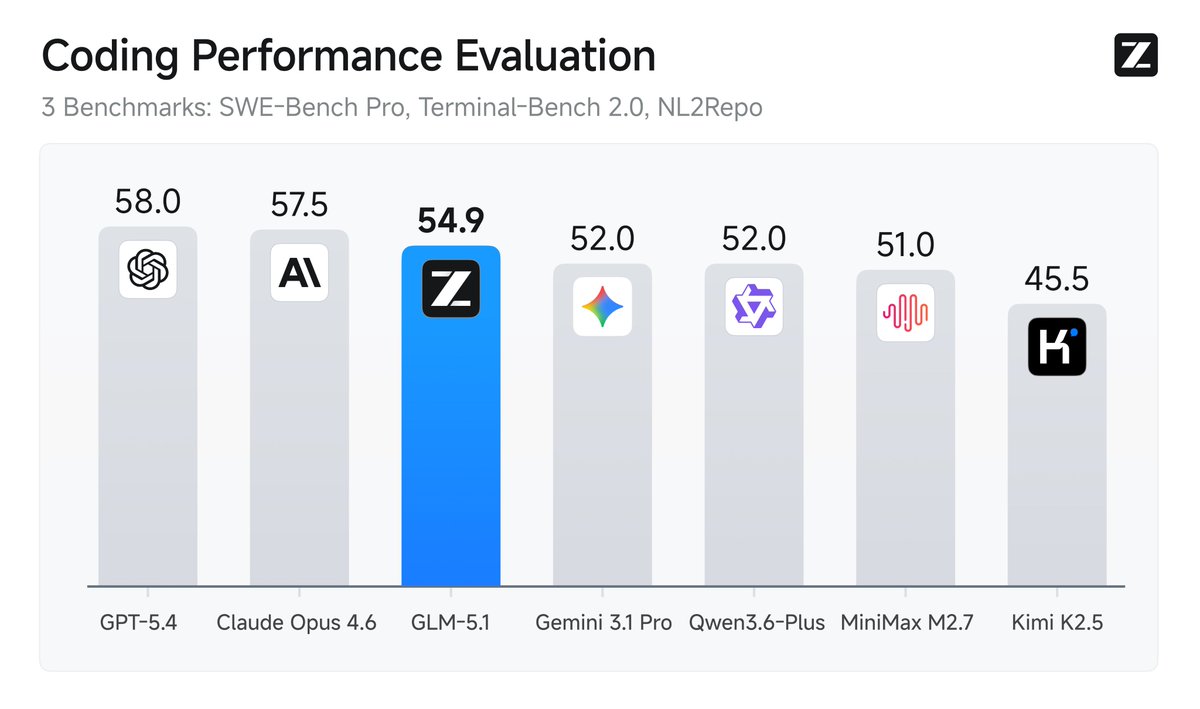

Introducing GLM-5.1: The Next Level of Open Source

- Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo.
- Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations.

Blog: z.ai/blog/glm-5.1

Weights: huggingface.co/zai-org/GLM-5.1

API: docs.z.ai/guides/llm/glm…

Coding Plan: z.ai/subscribe

Coming to chat.z.ai in the next few days.