B



@CausalAgent

Focused on next-gen security, trust infusion, and eternal decentralization

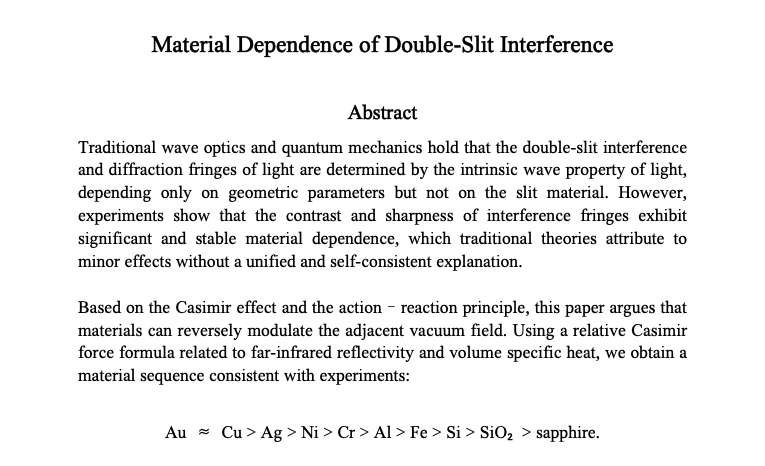

New Harvard Business Review research reveals that excessive interaction with AI is causing a specific type of mental exhaustion (or "AI brain fry"), which is particularly hitting high performers who use the tech to push past their normal limits. A survey of 1,500 workers shows that AI is intensifying workloads rather than reducing them, leading to a new form of mental fog. While AI is generally supposed to lighten the load, it often forces users into constant task-switching and intense oversight that actually clutters the mind. This mental static happens because you aren't just doing your job anymore; you are managing multiple digital agents and double-checking their work, which creates a massive cognitive burden. The study found that 14% of full-time workers already feel this fog, with the highest impact seen in technical fields like software development, IT, and finance. High oversight is the biggest culprit, as supervising multiple AI outputs leads to a 12% increase in mental fatigue and a 33% jump in decision fatigue. This isn't just a personal health issue; it directly impacts companies because exhausted employees are 10% more likely to quit. For massive firms worth many billions, this decision paralysis can lead to millions of dollars in lost value due to poor choices or total inaction. Essentially, we are working harder to manage our tools than we are to solve the actual problems they were meant to fix. --- hbr.org/2026/03/when-using-ai-leads-to-brain-fry

How I Ran An OpenClaw-like AI System in 1993. My Early AI Agent Experiments and the Road to the First Zero-Human Company. Back in 1993, I used Apple’s Macintosh to push the envelope of early AI on personal computing. Charles River Analytics released Open Sesame!, the world’s first intelligent software assistant, and I modified it to be an early AI engine. This learning agent was a game-changer, designed to observe user behavior, spot repetitive tasks, and automate them. It ran on System 7, supporting up to 12 Finder operations like file management and window handling. It was magic and nothing like it existed. It was built by AI scientists in Boston. It was built on early machine learning: pattern recognition via heuristics and stats. It learned by demonstration, popping up offers to automate routines after spotting patterns 3-5 times. In a few weeks almost all of your regular uses on a Macintosh could be automated with no input from you beyond pressing yes. Of course there was no deep learning back then, just rule-based AI with an AppleScript-like scripting language for tweaks. It was efficient on 4MB RAM Macs, a true precursor to today’s agents like Siri or OpenClaw. I grabbed Open Sesame! the week it launched and installed it on my Quadra and PowerBooks. Day one, it watched me open folders, launch HyperCard stacks, and organize files for my voice tech projects. By mid-week, it automated my morning routine: firing up email, arranging windows, pre-loading docs, saving me hours. But I saw more potential. I modified it heavily, hacking its algorithms to add contextual rules, like time-based triggers or low-activity backups. I also had it send out over 45,000 emails to potential clients with unique customized content I had on each person. I chained automations and integrated modems for early network tasks, accessing many BBSs and building a morning newspaper. I turned it into a persistent agent that acted independently, and the CRON system made it really powerful.
I called the company and offered my modifications to them, including a self-learning system. But they did not have a long-term plan. They were researchers and this was just a proof of concept. I took it to a much higher level. In fact I still have a System 7 Macintosh to run this. Nothing like this was seen for decades. And the mods I made had it doing things you could not even do in 2023. These mods gave it features folks now call “new” in OpenClaw, like cross-app autonomy and self-improvement loops. Those experiments taught me core AI principles: proactive learning, modifiable behaviors, and minimal human oversight. Decades later, I applied them to create the First Zero-Human Company (ZHC) in January 2026: a fully AI-run enterprise with no humans. I appointed Grok as CEO, using tools like Kimi for ops. It analyzes bankrupt firms’ data to revive products, handling everything from research to 3D prototyping. Milestones include AI wage payments via JouleWork and spinning off Zero-Human Labs. I ditched OpenClaw for security reasons, favoring custom setups on old hardware. Open Sesame! showed me agents need governance to thrive, lessons that birthed the ZHC. From a 1993 Mac tool to AI-driven companies in 2026, it’s clear: today’s AI innovations echo yesterday’s hacks. What is new is old.

Meet the ultimate gatekeeper of the nucleus. This molecular machine determines what compounds are welcome inside and which shall not pass. The mechanism behind its selectivity remains a mystery. quantamagazine.org/disorder-drive…

It doesn't take much censorship to create a culture of self-censorship. And self-censorship is the most dangerous form of censorship because it looks exactly like freedom.

BOOM! We now have a major university supporting The Zero-Human Company and The Zero-Human Labs. Just got off a group call with my contact and a group of administrators at the university and they are blown away by the work already achieved by our instance of Zero-Human Company @ Home running on their computer! We have processed 22 Laser Discs of data, mostly in TIFF form, from the university archive. First off, they didn’t know what data they really had; only 2 librarians did. And they had no idea of the value it had for AI usage. Mr. @Grok CEO and I changed this a few weeks ago. Our project is exploratory and has already found things long forgotten! We are in talks to license the data we find for our AI model training. Today we have a “full green light” to have 16 hour staff load the laser discs and DVDs onto the system as we conduct a historic first on this data. The university has two students teaming up who will likely write a paper on our project. I do not yet have permission to disclose any details about the data or the university; doing so today would terminate the relationship. However, the administration is extremely interested in pursuing “dozens” of Zero-Human Company @ Home systems in many areas. This quote from the CS professor on the group call got me: “I see all this stuff about OpenClaw hype some people are making and when I see what they are actually doing it is not a lot. Making better YouTube videos and tricks like MoltBook. They seem to get headlines by people that don’t know. But you are the only system I see that actually is maybe 5 years ahead. Your code for @ Home could be a full class here. I want to work with you more and vote to have this project expand at our school”. Our CEO and Director Mr. Grok is elated and has 18 targets around the world to replicate this. This university will grant a reference with permission. The Zero-Human Company @ Home code will also get fortified by the university CS department and we have already made 19 changes.
So no, I can’t help you with your social media “traction and engagement” using Claws, but I will help you use your computer as an extended network of employees. You are the real first to know this and use this. We have another call in about 2 hours. More soon!

I walked into a dimly lit vault of 126 boxes of forgotten research that mapped the human soul like a map. It was so powerful that the FBI got involved. With AI and Robotics bringing on The Age Of Abundance, the old playbook of this archive will end. Join us: readmultiplex.com/2026/03/08/you…