Jane Chamberlain

@ChambJane

College & Career Coach. I help students identify and earn admission to great-fit colleges and majors at https://t.co/9GNH4kiJF6

At this rate everyone’s gonna have their own app and zero users.

Spoke to a career counselor at a top 10 CS program yesterday. Her graduating class of 410 computer science majors just hit the market. 67 have full-time offers. That's a 16% placement rate. Last year the same program placed 340 out of 380 students. An 89% rate. The remaining 343 new grads are now competing for "junior developer" roles that require 2-3 years experience and AI tool proficiency. Average student debt in the cohort: $174k. Average starting salary for the lucky 67: $89k, down from $145k two years ago. The kids who got hired? All at companies building AI infrastructure. They're literally coding their own career's extinction. One grad told me he's been applying since February. 1,240 applications sent. 8 phone screens. 2 final rounds. Zero offers. His last rejection email said they went with "an experienced developer who can work autonomously with AI assistance rather than requiring traditional mentorship." The career center is still advertising a 94% placement rate on their website. They're counting food delivery and retail as "technology adjacent careers." Three students in the program already switched to nursing school mid-semester when they saw the job market data. Parents who took out Parent PLUS loans for these degrees are watching their kids compete with offshore contractors using Claude who work for $28k annually. But hey, at least they learned data structures really well. The knowledge that's already obsolete.

🚨 BREAKING: Anthropic just published a study proving their own AI makes you worse at learning new skills. Not some outside critic taking shots. The company that made Claude themselves. They put together this experiment with about fifty developers learning a brand new programming library they'd never seen before. One group had AI help the whole way through. The other group went at it without any assistance. The ones with AI felt productive as hell. Answers came quick. They were shipping code left and right. Everything felt smooth. Then the tests hit. Real understanding of the library? The AI group got crushed. Weaker conceptual grasp. They struggled more just reading through code. Debugging became a nightmare. The AI had been doing the thinking so their own brains never had to step up. I caught myself doing the exact same thing a while back when I was forcing through a new framework. Felt like a genius until I had to explain it without the chat open. Brutal. They went deeper and mapped out the different ways people actually interact with these tools while coding. Only some of those ways let real learning happen. The others give you this fake sense of progress: you're moving fast, tasks are getting done, but your actual skill level stays zero. The worst offender by far was full delegation. People who just handed the whole thing over to the AI got a little speed boost but walked away knowing less than they did at the start. They used the tool. The tool used their time. And here's what really lands different. This isn't some random researcher warning about AI from the outside. These folks work at Anthropic. They build the models. They put this line straight in the paper: AI-enhanced productivity is not a shortcut to competence. That sentence is going to stick with a lot of people. The thing is, this isn't just about developers. Every field right now is pushing beginners to use AI to "learn faster." Law, medicine, writing, data stuff, finance, engineering, you name it.
But if leaning on AI during the actual learning phase quietly damages how real competence forms, then we've got a generation building careers on ground that was never properly packed down. They can get the model to spit out answers. Thinking for themselves when it counts? Different story. What they also pointed out, and what most people are missing completely, is that the skills you'll need to properly supervise AI in the future (the deep understanding, the ability to read between the lines, to catch its mistakes) are exactly the ones getting eroded right now. You can't audit what you never learned to build yourself. It's kind of like learning guitar by only ever playing along with perfect backing tracks and auto-tune. You can perform songs pretty quick, but take the training wheels off in a real jam session and suddenly your ear and timing never developed the way they should have. Anthropic isn't out here saying ditch the AI completely. They're saying learn the thing first on its own terms. Bring the AI in after. If you're starting something new, maybe sit in the suck for a bit longer than feels comfortable before calling in the assistant.

“above median professional-skill ratings are associated with greater likelihood of earning a four-year degree”

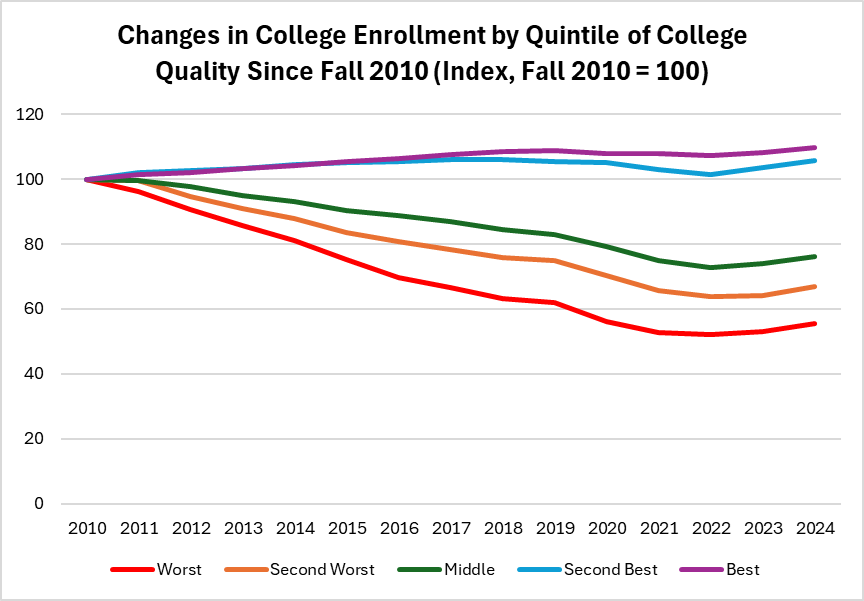

Take a look at this. The return on a degree is similar across more and less selective colleges, but there's a big difference that has to do with the majors people select. Go into engineering? Large returns. Go into the arts? Small returns.

Katherine Boyle just identified Elon Musk’s most important contribution to America, and it has nothing to do with the products he shipped. Boyle, General Partner at a16z: “I think Elon’s most important contribution to this country is training two generations of engineers to work with their hands again.” For ten years, America’s sharpest technical minds optimized ad clicks and built messaging apps. Software consumed ambition. The physical world became something you abstracted into APIs, not something you touched or understood. Elon didn’t reverse that through inspiration. He reversed it by building companies that required understanding manufacturing or failing completely. SpaceX and Tesla forced engineers to learn how metal fractures, how tolerances cascade through systems, how physical iteration costs months and millions per failure. No debugging. No patches. Just physics that doesn’t negotiate. Boyle: “Training two generations of engineers.” The product isn’t the cars. It’s the people. Look at who’s founding America’s critical hard-tech companies now. The common thread isn’t Stanford or MIT. It’s time on factory floors at SpaceX or Tesla. They learned welding. They learned that “impossible” just means unsolved engineering, not violated physics. They learned failure in the physical domain where mistakes compound instead of reverting. Elon didn’t build companies. He accidentally rebuilt industrial knowledge that had been decaying for thirty years while America’s best minds chased digital scale. Boyle: “Work with their hands again.” Five words that sound quaint but describe a civilizational inflection point. Software dominated because it scaled infinitely at zero marginal cost. Physical manufacturing was slow, expensive, unfashionable. Building real things became what you did if you couldn’t code. Elon made atoms matter again. Made manufacturing the hardest problem worth solving.
Made physical engineering prestigious in ways it hadn’t been since humans walked on the moon. The evidence is everywhere now. Technical talent that doesn’t default to “which app” but asks “which physical thing should exist that currently doesn’t.” Ambition redirected from optimizing engagement metrics to building rockets. From scaling users to scaling factories. From virtual products to physical infrastructure. That shift matters more than any vehicle or spacecraft Musk delivered. Products obsolesce. Redirecting an entire generation’s engineering ambition from digital to physical compounds across decades and rebuilds industrial capability at civilizational scale. We stopped just coding the future. We started machining it, welding it, breaking it in reality until physics confirms it works. That transformation from virtual to tangible ambition is reconstructing American manufacturing one engineer at a time. And those engineers are now training the next wave. The compounding has started. The School of Elon doesn’t need Elon anymore. It’s self-sustaining, spreading through an entire generation that learned building real things matters more than building virtual ones. That’s not just a business achievement. That’s a civilization remembering how to make things that matter in the physical world again. And it might be the only thing that saves American technological leadership when the competition is just building faster because they never forgot.