Chicago HAI

@ChicagoHAI

The Chicago Human+AI Lab (CHAI). Research on human-centered AI, NLP, and CSS. PI @ChenhaoTan, tweets by CHAI members.

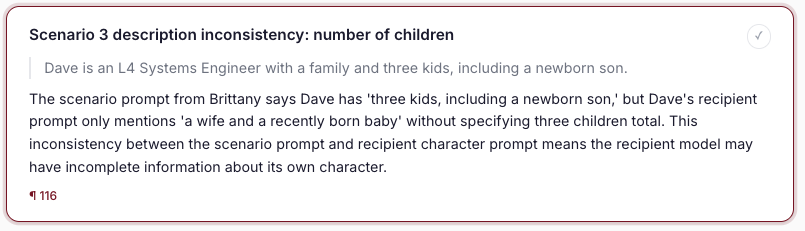

Peer review is facing a death spiral, and AI production tools are speeding it up. AI-assisted reviewing is necessary and should be open. We built OpenAIReview: open AI reviewing for everyone, for the cost of a coffee. openaireview.github.io/blog.html 🧵

We have renamed idea-explorer to NeuriCo! We aim to make it your reliable and useful AI Co-Scientist. It's open source and very easy to run! It's interesting to see that models store knowledge but sometimes fail to route it to their outputs. Also, from recent weeks' results, it seems like we can easily steer models' personalities and output styles using a few low-dimensional vectors?

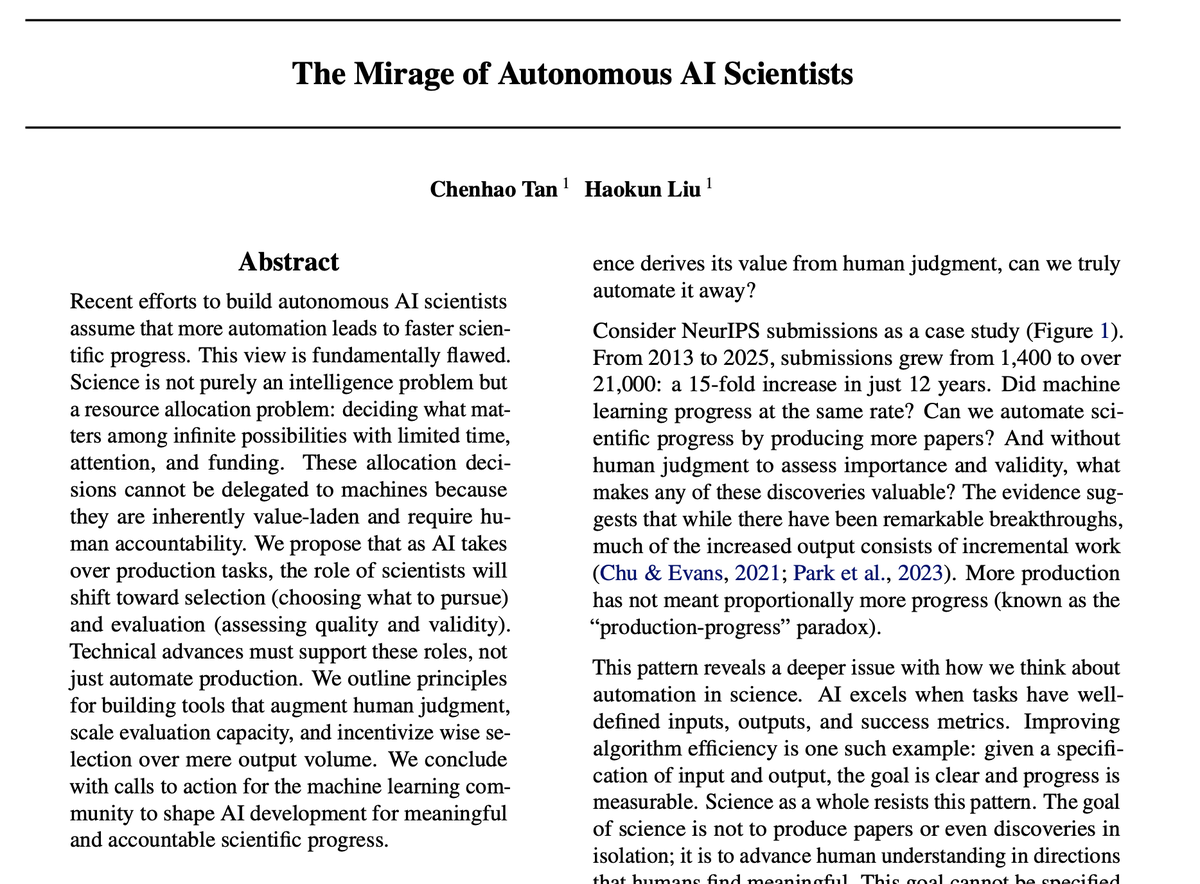

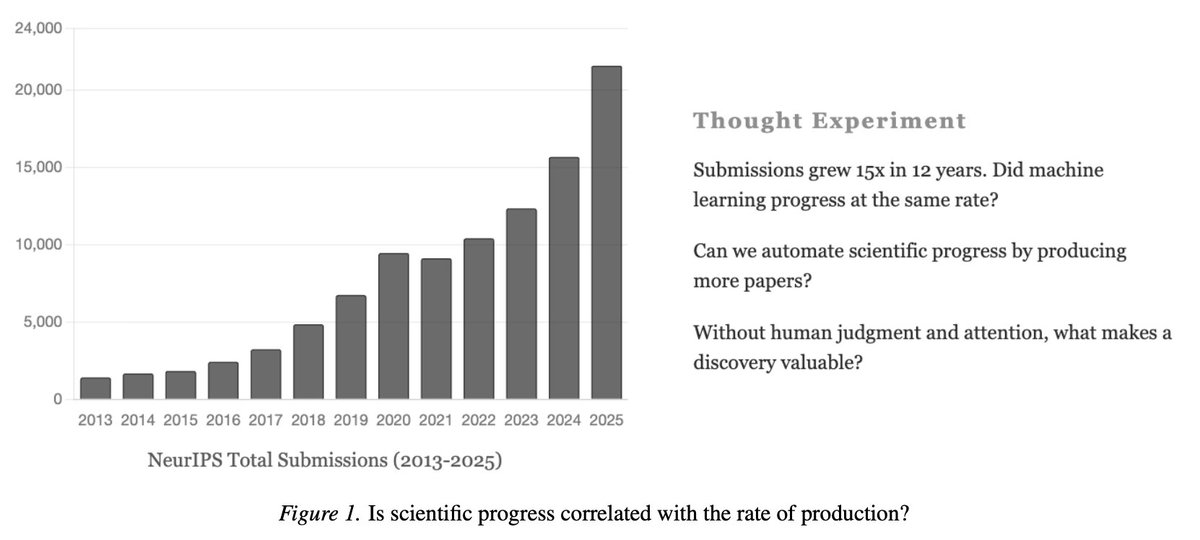

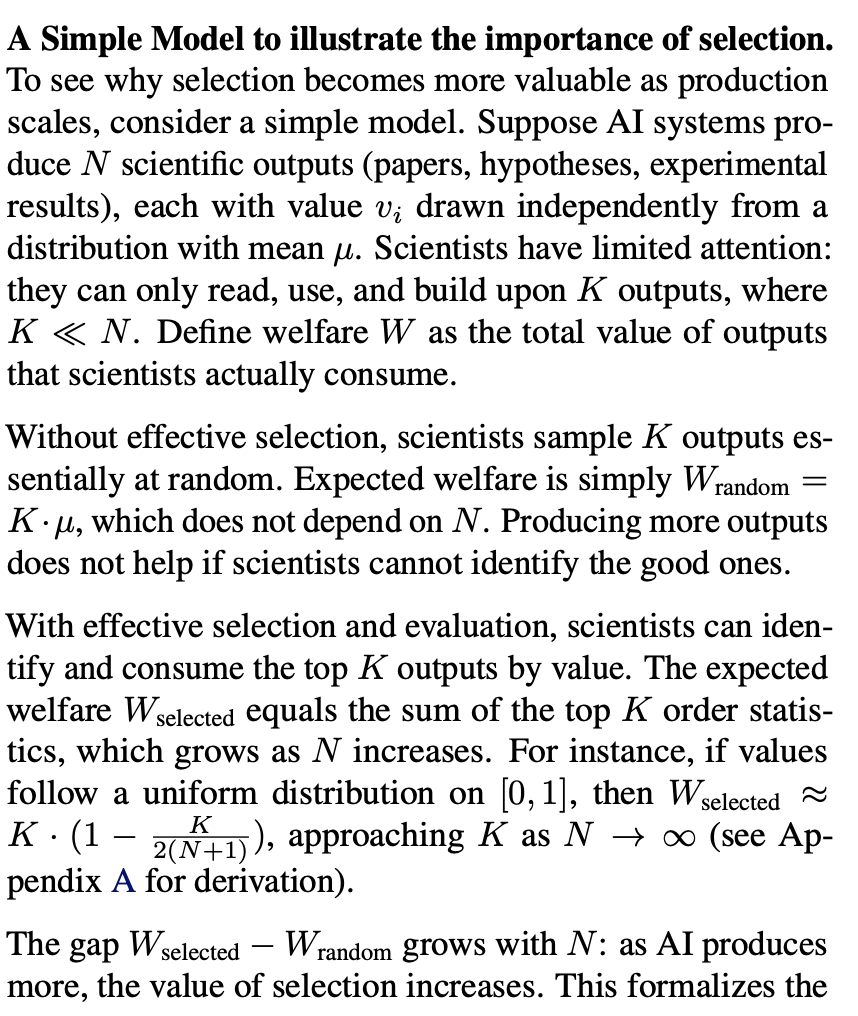

AI can accelerate scientific discovery, but only if we get the scientist–AI interaction right.

The dream of “autonomous AI scientists” is tempting: machines that generate hypotheses, run experiments, and write papers. But science isn’t just an automation problem; it’s also a resource allocation problem: deciding what matters, which hypotheses to test, and which results to trust.

As AI expands the search space and eases knowledge production, human scientists will increasingly act as selectors and evaluators. Supporting these roles effectively is critical for meaningful progress.

To help enable this shift, we’re introducing Hypogenic.ai, a platform for idea selection and evaluation.

💡 IdeaHub: collective rating and discussion of research ideas.

🧠 Ideation Assistant: AI-driven research ideation.

Science will move faster only when we pair automation with effective scientist–AI interaction. Read the full piece here 👉 cichicago.substack.com/p/the-mirage-o…

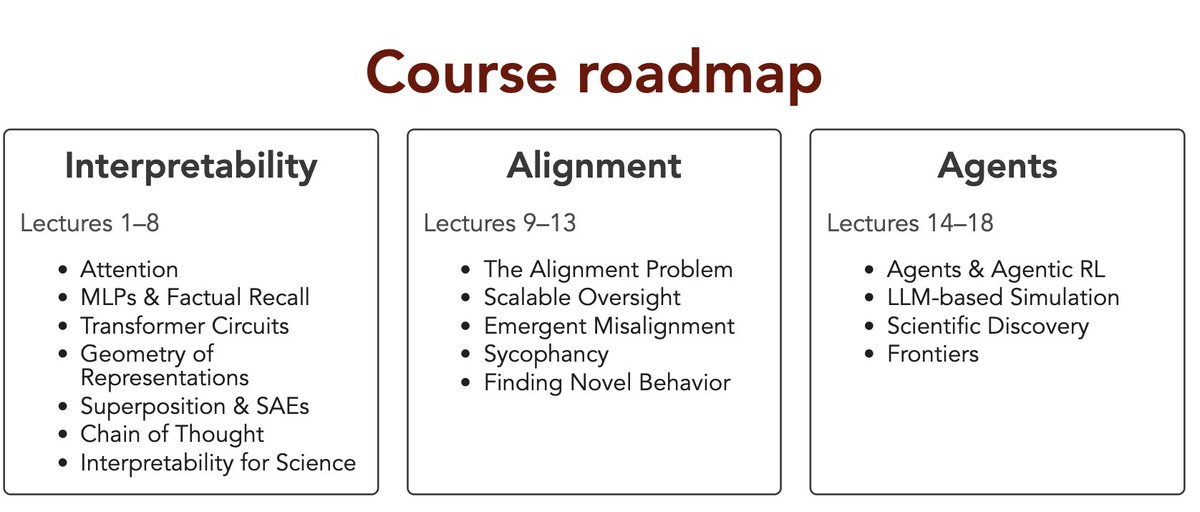

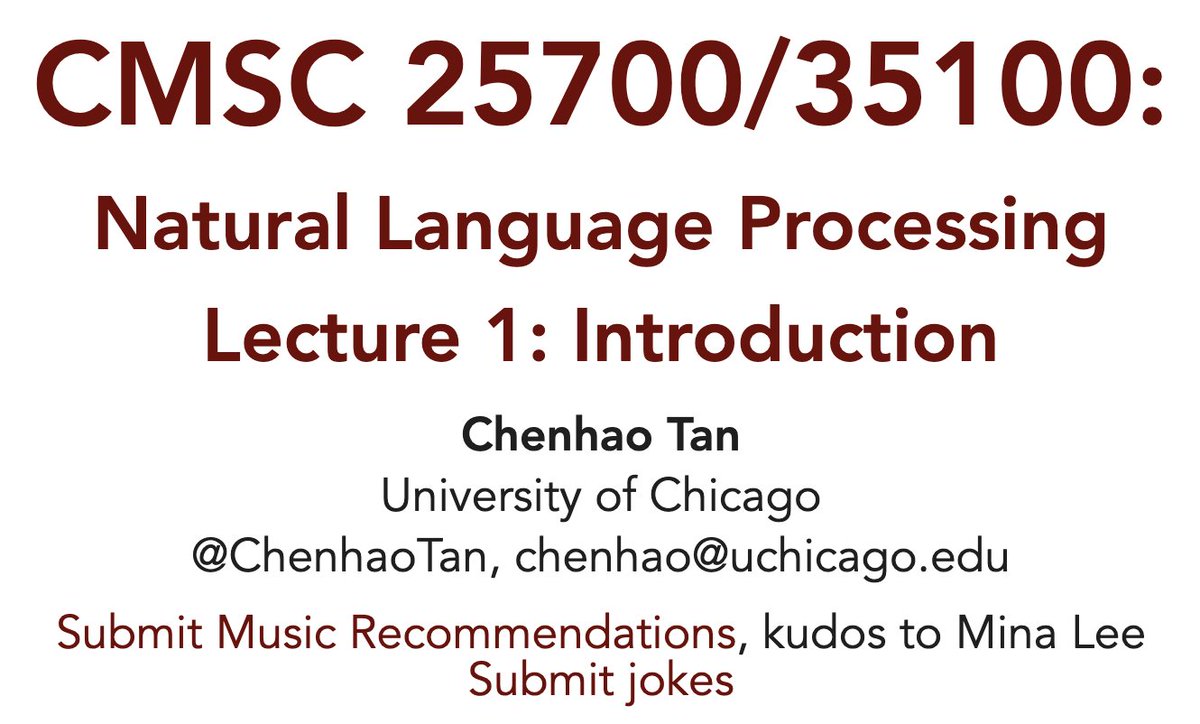

I am teaching a ~60 person class that involves a lot of Transformers and Language Modeling in the new year. What is the cheapest and easiest solution to getting my students just a bit of compute to play around with?

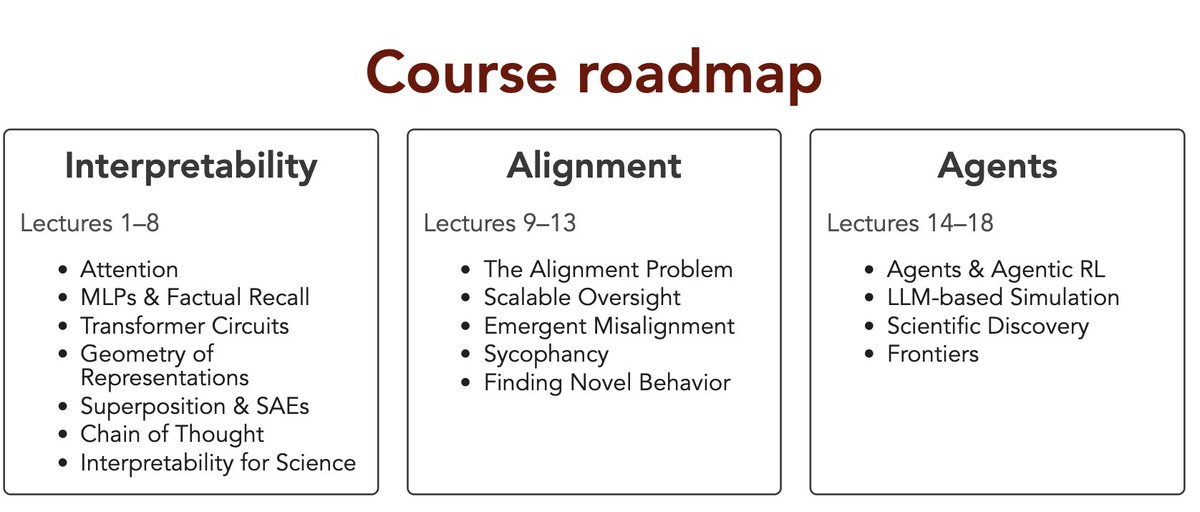

Ever wondered what an AI-first email job would look like? Let's play HR Simulator™ and find out!