Yuren Cong


@CongYuren

Research Scientist @Meta | exploring multimodal GenAI systems🤖

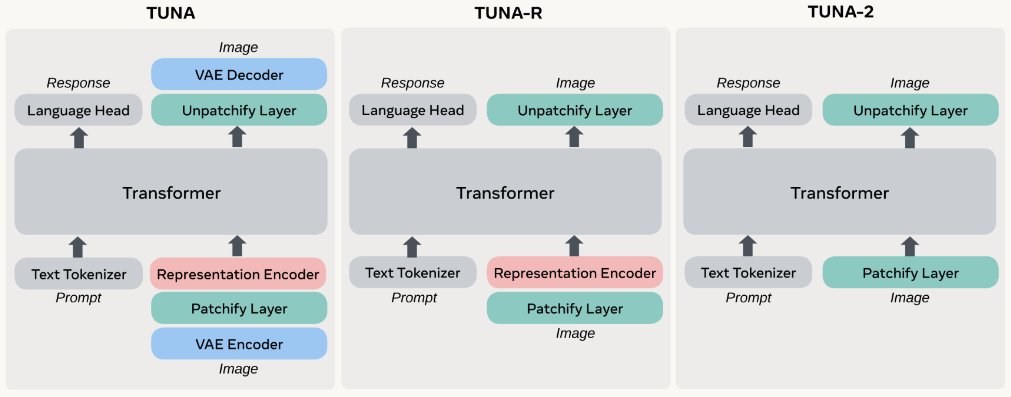

2/🚀 How: We first derive Tuna-R, a pixel-space model that relies solely on a representation encoder. Tuna-2 then streamlines the design further by bypassing the representation encoder entirely and feeding raw image inputs through direct patch embedding layers.
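For readers unfamiliar with the idea, here is a minimal sketch of a generic ViT-style patch embedding, the kind of layer that can stand in for a vision encoder by mapping raw pixels straight to tokens. It assumes non-overlapping square patches and a single linear projection per patch. The function names, shapes, and toy weights are hypothetical and for illustration only; this is not Tuna-2's implementation, which lives in the linked repository.

```python
from typing import List

# Hypothetical types for illustration: an image is H x W x C nested lists.
Image = List[List[List[float]]]
Matrix = List[List[float]]


def patchify(image: Image, patch: int) -> List[List[float]]:
    """Split an H x W x C image into non-overlapping patch x patch tiles.

    Tiles are visited row by row (top-left first), and each tile is
    flattened to a vector of length patch * patch * C.
    """
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image height and width must be divisible by the patch size")
    patches = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            vec: List[float] = []
            for r in range(top, top + patch):
                for c in range(left, left + patch):
                    vec.extend(image[r][c])  # append all channels of pixel (r, c)
            patches.append(vec)
    return patches


def patch_embed(image: Image, weight: Matrix, bias: List[float], patch: int) -> List[List[float]]:
    """Hypothetical direct patch embedding: token_j = b_j + sum_i p_i * W[i][j].

    `weight` has shape (patch * patch * C) x d and `bias` has length d,
    so every patch becomes one d-dimensional token with no encoder in between.
    """
    tokens = []
    for p in patchify(image, patch):
        if len(p) != len(weight):
            raise ValueError("weight rows must equal patch * patch * channels")
        token = [
            bias[j] + sum(p[i] * weight[i][j] for i in range(len(p)))
            for j in range(len(bias))
        ]
        tokens.append(token)
    return tokens


if __name__ == "__main__":
    # Toy 4x4 RGB image of ones, 2x2 patches -> 4 tokens of dimension 2.
    img = [[[1.0, 1.0, 1.0] for _ in range(4)] for _ in range(4)]
    W = [[1.0, 1.0] for _ in range(2 * 2 * 3)]
    b = [0.0, 1.0]
    print(patch_embed(img, W, b, patch=2))
```

In practice such layers are usually written as a strided convolution whose kernel size equals its stride, which computes the same per-patch linear projection far more efficiently; the explicit loops here just make the mapping from pixels to tokens easy to follow.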

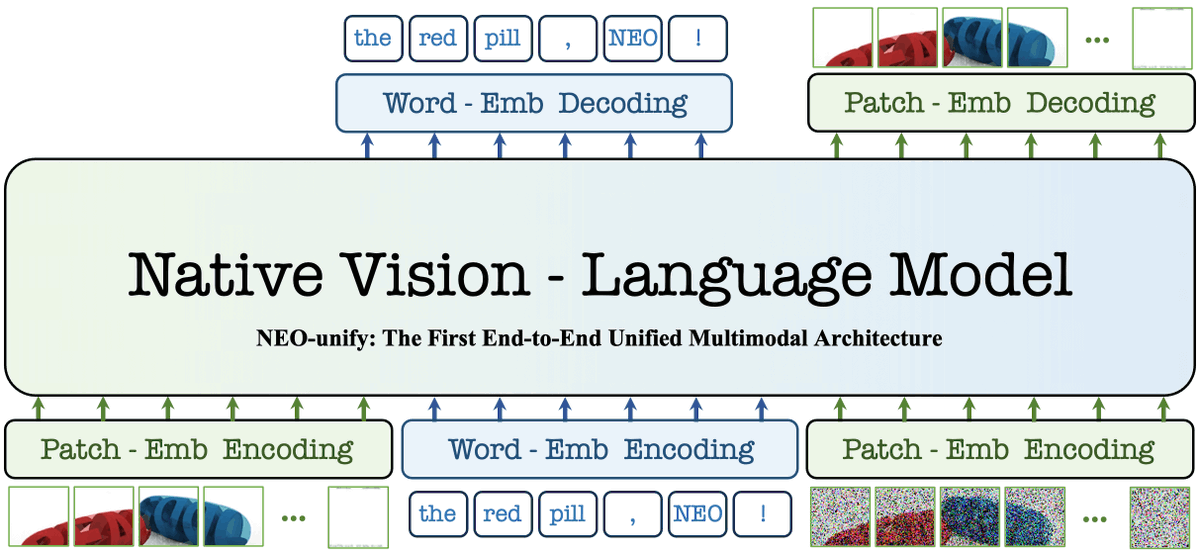

1/🚀 Excited to announce Tuna-2: Pixel Embeddings Beat Vision Encoders for Multimodal Understanding and Generation! We built an omni model that uses direct patch embedding layers for raw image inputs and achieves SOTA in multimodal understanding AND generation. Paper: huggingface.co/papers/2604.24… Code: github.com/facebookresear… Thanks to all the co-authors! @__Johanan, @wmren993, @xiaoke_shawn_h, @ShoufaChen, @TianhongLi6, Mengzhao Chen, Yatai Ji, Sen He, Jonas Schult, Belinda Zeng, Tao Xiang, @WenhuChen, Ping Luo, @LukeZettlemoyer!

Glad to share we have 3 papers accepted to #CVPR2026🥳🥳🥳: (1/3) TUNA: Taming Unified Visual Representations for Native Unified Multimodal Models arxiv.org/abs/2512.02014

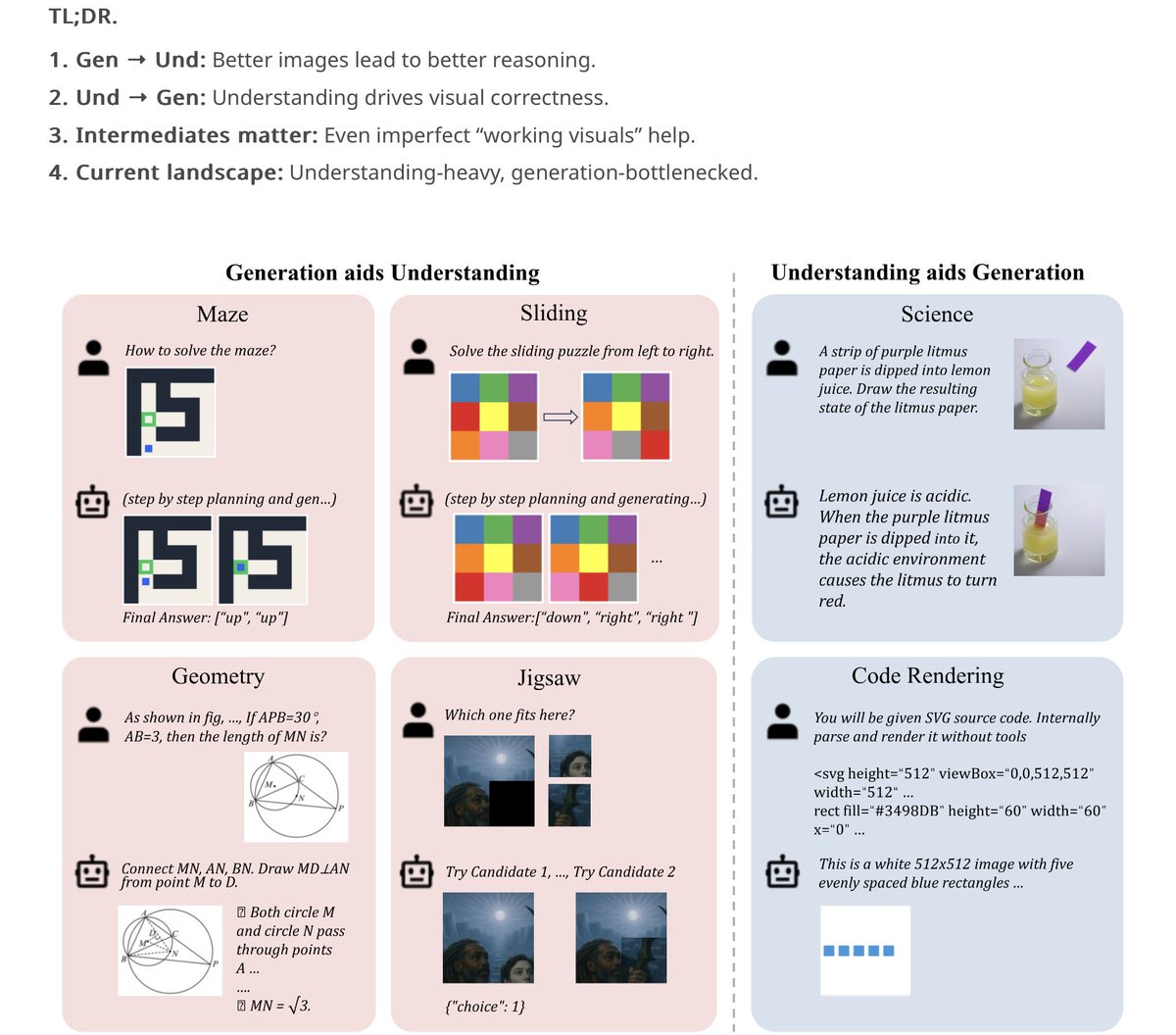

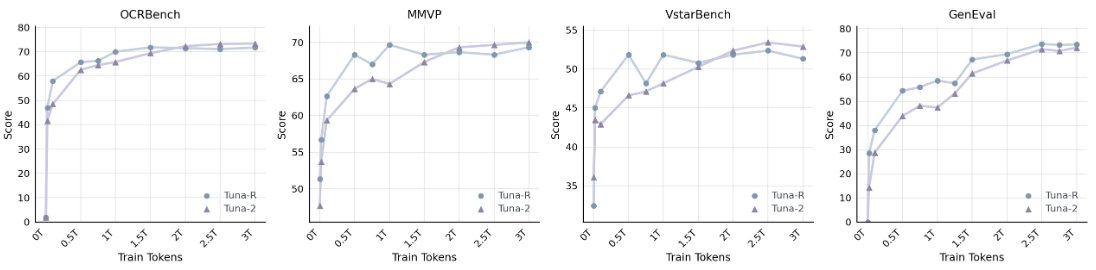

🔥We present Tuna, a native unified multimodal model built on a unified continuous visual representation, enabling diverse multimodal understanding and generation capabilities (T2I/I2T/I2I/T2V/V2T):
1️⃣ Unified Representation: We build an effective unified representation space by cascading a VAE encoder with a representation encoder.
2️⃣ Mutual Benefit: Within a unified representation space, joint training lets understanding and generation benefit each other.
3️⃣ Representation Encoders Matter: Stronger representation encoders consistently yield better performance across all multimodal tasks.
🌟 Our method also generalizes beyond the image domain to video. Notably, TUNA-1.5B demonstrates outstanding performance on both video generation and understanding tasks.
🐟 Project page: tuna-ai.org