Weiming Ren

@wmren993

CS PhD student @UWaterloo @UWCheritonCS

Meta presents Tuna-2: Pixel Embeddings Beat Vision Encoders for Multimodal Understanding and Generation

paper: huggingface.co/papers/2604.24…

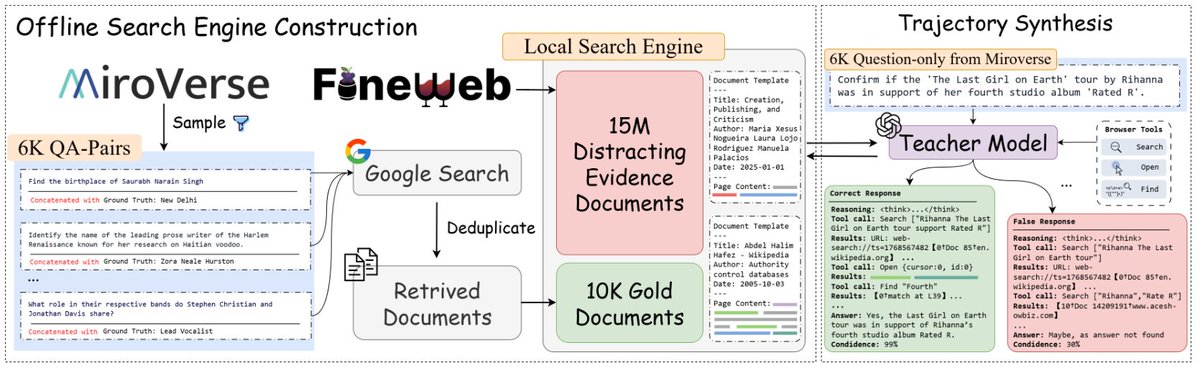

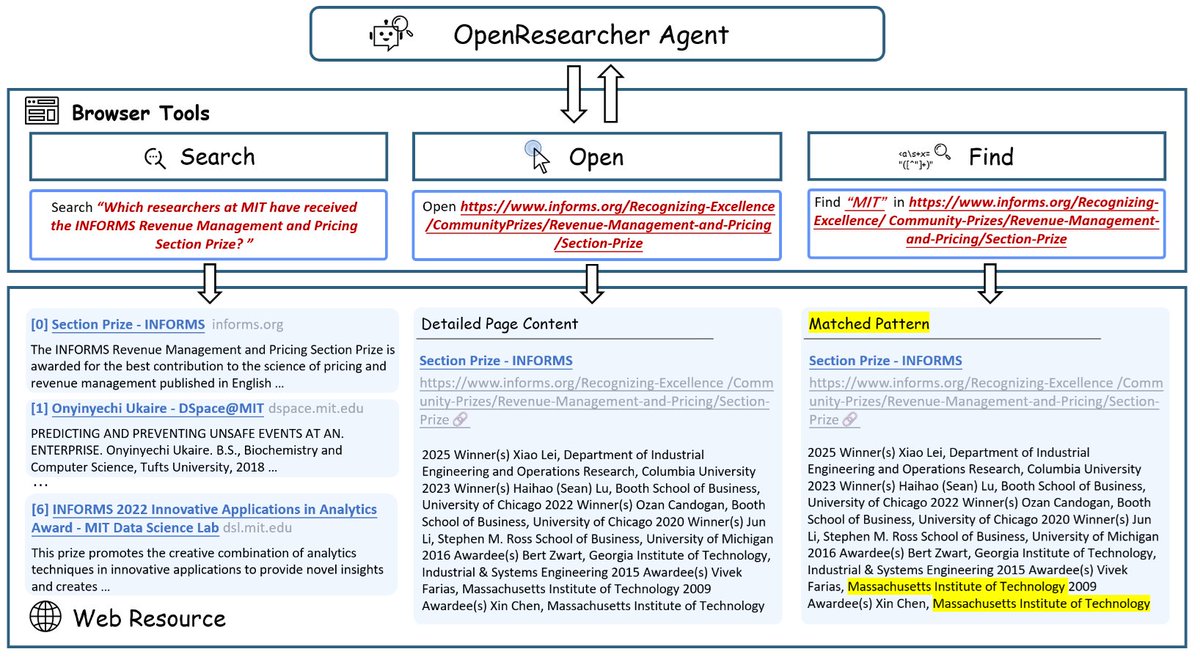

🚀 Introducing OpenResearcher: a fully offline pipeline for synthesizing 100+ turn deep-research trajectories, with no search/scrape APIs, no rate limits, and no nondeterminism.

💡 We use GPT-OSS-120B + a local retriever + a 10T-token corpus to generate long-horizon tool-use traces (search → open → find) that look like real browsing but are free and reproducible.

📈 The payoff: SFT on these trajectories takes Nemotron-3-Nano-30B-A3B from 20.8% → 54.8% accuracy on BrowseComp-Plus (+34.0 points).

🧩 What makes it work?
🔎 Offline corpus = 15M FineWeb docs + 10K “gold” passages (bootstrapped once)
🧰 Explicit browsing primitives = better evidence-finding than “retrieve-and-read”
🎯 Rejection sampling = keep only successful long-horizon traces

🧵 And we’re releasing everything:
✅ code + search engine + corpus recipe
✅ 96K-ish trajectories + eval logs
✅ trained models + live demo

👨‍💻 GitHub: github.com/TIGER-AI-Lab/O…
🤗 Models & data: huggingface.co/collections/TI…
🚀 Demo: huggingface.co/spaces/OpenRes…
🔎 Eval logs: huggingface.co/datasets/OpenR…

#llms #agentic #deepresearch #tooluse #opensource #retrieval #SFT
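
For readers who want a feel for the search → open → find loop and the rejection-sampling filter, here is a toy sketch. It is not the OpenResearcher code: the three-document corpus, the word-overlap ranking, the `Trajectory` class, and every function name below are hypothetical stand-ins chosen for illustration, and the real pipeline uses a proper retriever over a far larger corpus.

```python
from dataclasses import dataclass, field

# Toy in-memory corpus (hypothetical; stands in for the offline document store).
CORPUS = {
    "doc1": "The Eiffel Tower is in Paris. It was completed in 1889.",
    "doc2": "Mount Everest is the highest mountain above sea level.",
    "doc3": "Paris is the capital of France. The Louvre is in Paris.",
}


def _tokens(text):
    """Lowercase word set with trailing punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split()}


def search(query, k=2):
    """Rank documents by word overlap with the query (stand-in for a real retriever)."""
    q = _tokens(query)
    scored = [(len(q & _tokens(text)), doc_id) for doc_id, text in CORPUS.items()]
    scored = [(score, doc_id) for score, doc_id in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first, ties by id
    return [doc_id for _, doc_id in scored[:k]]


def open_doc(doc_id):
    """Return the full text of a document."""
    return CORPUS[doc_id]


def find(doc_id, needle):
    """Return the sentences of a document that contain `needle` (case-insensitive)."""
    sentences = [s.strip() for s in CORPUS[doc_id].split(".") if s.strip()]
    return [s for s in sentences if needle.lower() in s.lower()]


@dataclass
class Trajectory:
    question: str
    steps: list = field(default_factory=list)  # (tool_name, argument) pairs
    answer: str = ""


def rejection_sample(trajectories, gold):
    """Keep only trajectories whose final answer matches the gold answer."""
    return [
        t for t in trajectories
        if t.answer.strip().lower() == gold[t.question].strip().lower()
    ]


if __name__ == "__main__":
    question = "When was the Eiffel Tower completed?"
    top = search(question)[0]
    evidence = find(top, "completed")
    good = Trajectory(question, [("search", question), ("open", top), ("find", "completed")], "1889")
    bad = Trajectory(question, [("search", question)], "1887")
    kept = rejection_sample([good, bad], {question: "1889"})
    print(top, evidence, len(kept))
```

The filter here is exact-match on the final answer, which is the simplest possible success criterion; how the actual pipeline judges a trace as successful is not stated in the post.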