Doc

@CoreyClarkPhD

@BalancedMT CTO, Deputy Director Research at @SMUGuildhall & Assoc Prof SMU CS, Director @HuMInGameLab: Merging Games, AI/ML and Distributed Computing

🚀 DeepSeek-R1 is here! ⚡ Performance on par with OpenAI-o1 📖 Fully open-source model & technical report 🏆 MIT licensed: Distill & commercialize freely! 🌐 Website & API are live now! Try DeepThink at chat.deepseek.com today! 🐋 1/n

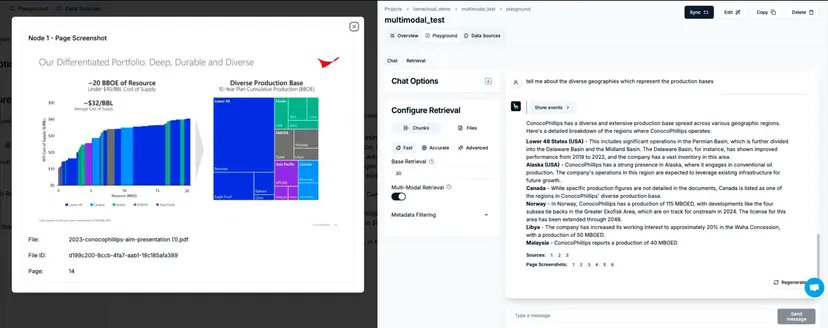

Today we’re excited to launch multimodal capabilities in LlamaCloud, which gives you the full toolkit to build e2e multimodal RAG pipelines across any unstructured data in minutes - whether it’s over marketing slide decks, legal/insurance contracts, or finance reports. All you have to do is toggle the “multi-modal indexing” setting in LlamaCloud, and we will index each page as both a text and image chunk. You can easily validate your pipeline through our chat interface, which includes image sources as citations, or plug it into your application through an API. Check out our full launch blog post along with an example notebook showing you how to build with this API! Blog: llamaindex.ai/blog/multimoda… Notebook: github.com/run-llama/llam… Come talk to us: llamaindex.ai/contact

Congrats to Dr. Khaled Abdelghany and Dr. Corey Clark for being named among the Most Innovative Leaders in Artificial Intelligence in Dallas-Fort Worth by @DallasInnovates! Read more: bit.ly/4akA49H #AI #machinelearning #researchinnovation