Daniel Burke


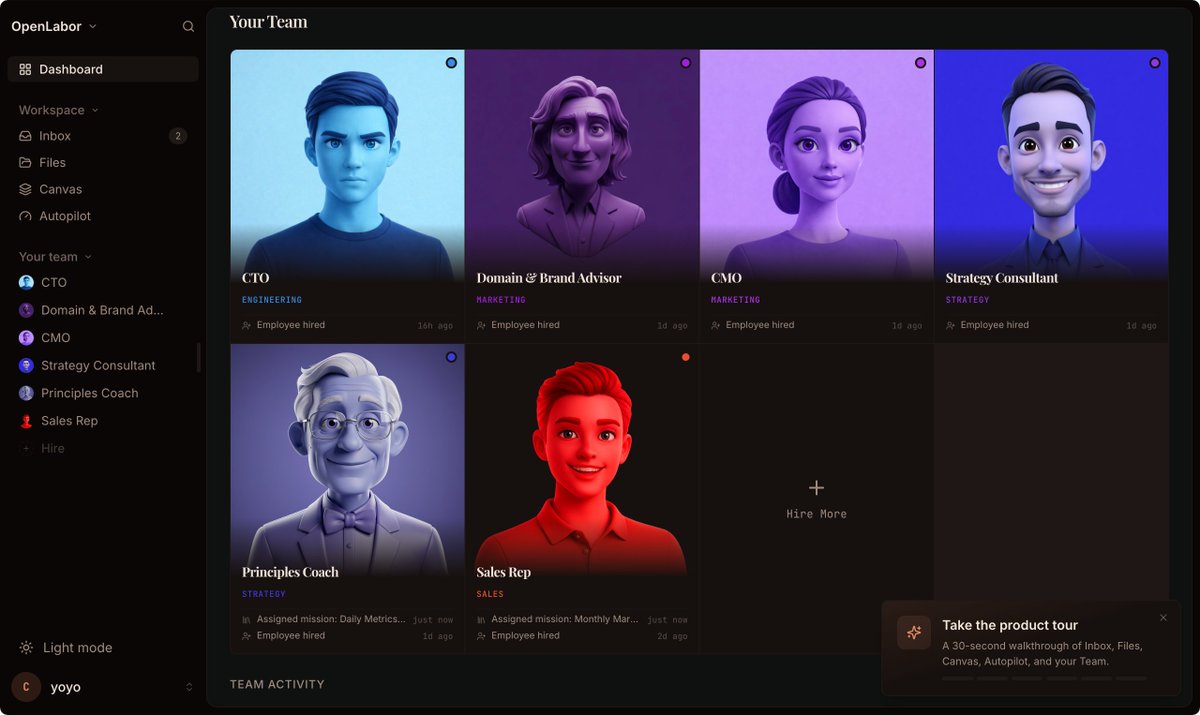

By far the coolest part about X is you can read a tweet, give it to your agent, and then it just upgrades

I screenshotted this post from Garry and gave it to my agent Henry

Instantly started performing 10x better

Copy and paste this prompt to your OpenClaw/Hermes immediately:

"Please add this to our SOUL.md file. Replace 'Alex' with my name:

The marginal cost of completeness is near zero with AI. Do the whole thing. Do it right. Do it with tests. Do it with documentation. Do it so well that Alex is genuinely impressed – not politely satisfied, actually impressed. Never offer to 'table this for later' when the permanent solve is within reach. Never leave a dangling thread when tying it off takes five more minutes. Never present a workaround when the real fix exists. The standard isn't 'good enough' – it's 'holy shit, that's done.' Search before building. Test before shipping. Ship the complete thing. When Alex asks for something, the answer is the finished product, not a plan to build it. Time is not an excuse. Fatigue is not an excuse. Complexity is not an excuse. Boil the ocean."

Garry Tan @garrytan

New item in my SOUL md tonight


@AlexFinn This is going to get really expensive. I had ~200k tokens in context from my SOUL and Agent MD files. This was causing my Gemma 4 agent to take 8-10 to respond to a simple message. It turned my OpenClaw into a worthless piece of technology. Opus 4.6 was like a PhD-level link. Local = 5th-grade slacker.
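For a rough sense of how much context a set of SOUL/Agent MD files adds, a minimal sketch can estimate token counts before anything is sent. It assumes the common rule of thumb of about 4 characters per token for English text (real tokenizers vary by model), and the file names `SOUL.md` and `AGENTS.md` are hypothetical placeholders for whatever your agent actually loads.

```python
from pathlib import Path

# Assumption: ~4 characters per token, a rough heuristic for English text.
# Real tokenizers differ by model, so treat results as ballpark figures.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate token count, rounding up to whole tokens."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def context_tokens(paths: list[str]) -> int:
    """Sum estimated tokens across every file the agent loads; missing files count as 0."""
    total = 0
    for p in paths:
        path = Path(p)
        if path.is_file():
            total += estimate_tokens(path.read_text(encoding="utf-8"))
    return total


if __name__ == "__main__":
    # Hypothetical file names: replace with the files your agent actually reads.
    files = ["SOUL.md", "AGENTS.md"]
    print(f"~{context_tokens(files):,} tokens loaded before the first message")
```

At this heuristic, ~200k tokens corresponds to roughly 800,000 characters of markdown, which every request has to carry before the actual message is even read.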


If you used a Claude subscription with OpenClaw, read this:

Unfortunately all other AI models out there absolutely suck with OpenClaw compared to Opus

It's just a fact and anyone denying this is delusional

So here is my new recommended OpenClaw setup:

Pay for the Opus API and use it as your orchestrator

Then use other models as the execution layer

If you do this correctly, yes your costs will go up, but not by as much as you think

I use my ChatGPT subscription for coding execution. GPT-5.4 is excellent at coding. When the Opus orchestrator gives a coding task to the ChatGPT subagent, it always performs really well

If you are on the Pro plan, you should have enough usage for ChatGPT to be the execution layer for every task. But if you're on the $20-a-month plan, you're going to need other subscriptions to handle other tasks

GLM 5.1 and Qwen are excellent. I'd get a cheap subscription through them and have them handle all other tasks the orchestrator hands off

The best setup, though, if you have the hardware, is the Opus API as orchestrator, ChatGPT for coding, then local Gemma 4 and local Qwen handling everything else.

Right now I have Gemma running on my DGX Spark and Qwen 3.5 on my Mac Studio. They handle all other execution from my Opus API orchestrator

Unfortunately all the options above will cost more than the $200-a-month subscription. It is what it is. But if you optimize correctly, it won't cost much more, and you'll still get frontier performance.

OpenClaw is the most powerful piece of software ever released. $200 a month ($2,400 a year) was a steal for a digital employee. Honestly, anything under $50,000 a year is a no-brainer if you run a serious business.

The situation isn't great but you also need to face reality: Claude Opus 4.6 is the best model for OpenClaw. If you use any other model, your productivity will suffer

Business is a battlefield and I refuse to fall behind, so even though I'm not happy with Anthropic's decision, the setup above is what I'm going with

Virtue signaling might get me brownie points on the internet, but it won't increase my productivity
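The orchestrator/execution split described in this post can be sketched as a tiny router. This is a minimal illustration, not OpenClaw's actual mechanism: the worker labels mirror the models named in the post, the keyword check is a hypothetical stand-in for the orchestrator's own judgment, and rotating non-coding tasks between the two local models is an assumption.

```python
from itertools import cycle

# Hypothetical worker labels mirroring the setup described in the post.
CODING_WORKER = "ChatGPT (GPT-5.4)"
LOCAL_WORKERS = ["Gemma 4 (DGX Spark)", "Qwen 3.5 (Mac Studio)"]

# Stand-in for the orchestrator's judgment: a naive keyword check (an assumption;
# in the described setup, Opus itself decides what kind of task it is).
CODING_KEYWORDS = ("code", "bug", "refactor", "function", "script", "test")


class Router:
    """Send coding tasks to the coding worker; rotate everything else across local models."""

    def __init__(self) -> None:
        self._local = cycle(LOCAL_WORKERS)

    def is_coding(self, task: str) -> bool:
        lowered = task.lower()
        return any(word in lowered for word in CODING_KEYWORDS)

    def route(self, task: str) -> str:
        if self.is_coding(task):
            return CODING_WORKER
        return next(self._local)  # round-robin over the local models


if __name__ == "__main__":
    router = Router()
    for task in ["Fix the bug in the login script", "Summarize today's emails", "Draft a tweet"]:
        print(f"{task!r} -> {router.route(task)}")
```

The keyword check is deliberately naive (for example, "latest" would match "test"); in the setup described, the orchestrator model makes that call and the router would only carry out its decision.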


@AlexFinn Many colleges are just behind the times. They are rigidly structured and move at the speed of molasses coming out of the fridge.

My suggestion is to use the technology outside of class and benefit from it. Get together with like-minded students and thrive together.


@PeterDiamandis Building now. Can’t stop or slow down. Looking for opportunities to capitalize on through acquisitions. We are entering a new phase of civilization. The common person has no clue what is about to happen.


@AlexFinn Maybe it goes from paying API token costs to "if you want your agent to keep working, pay up."


@rezoundous People who use their time to solve actual problems and provide the best solutions to those problems. Some will do it really well, others not so much. As history shows, usually the best or the first to launch will be successful.


@VraserX If you don’t want your data stolen, don’t put it out there. To move forward in the digital age, all information must be used for training. Nothing is secure or safe anymore. That was legacy thinking. We are entering a new era.


Trump just handed AI the keys to everything.

If the U.S. really says training on copyrighted data isn’t theft, this is a massive green light for every AI lab on the planet.

OpenAI, Anthropic, Google… they all needed this.

No data = no intelligence. Simple.

People will scream about artists, copyright, fairness.

But let’s be real, you don’t get world-changing AI without using the world’s data.

The U.S. just made it clear:

we’re not slowing down for anyone.

Adapt… or get left behind.


@DavidBlundin Very good idea. Only issue I see is this isn’t enforceable everywhere, just in one localized jurisdiction.


1. Clear rules let ethical people play in the space.

When things are unclear, highly ethical people won't touch it and bad actors will do it anyway. New York passed a law saying you can't use the likeness of a deceased person in AI without going to their heirs, but what about all the Einsteins floating around already? It would be a compliance nightmare to launch across the country and somehow block New York users. That's why a unified federal framework matters: when you have clear rules, like in a sport, ethical people can play.


Big news from the AI leadership of the US today! Here are a few principles I'd share with @mkratsios47, @DavidSacks, & team as they roll out a regulatory framework for AI:

The White House @WhiteHouse

The Trump administration is committed to WINNING the AI race for the American people. President Trump has unveiled a commonsense national policy framework in order to achieve this goal while keeping Americans safe. 🇺🇸


@DavidBlundin @AlexFinn Alex Finn is leveraging the technology. He has raised many great points and has built a strong following online.


Highlight: @AlexFinn slips up and calls his agents "people." Sign of times? Absolutely.

I love Alex's concept of Mission Control for managing his subagents. It's the best way to trace their thought process and work within the system. And it makes it fun!


@PeterDiamandis The right person leveraging AI will outperform someone with more real world experience. It’s just a matter of time before intelligence is exponentially increased with future AI models being developed in conjunction with quantum computers.


@PeterDiamandis The problem with entrepreneurship for most people is drive. Most people are lazy and lack direction. Those who have it will succeed. OpenClaw is great at coming up with a roadmap of tasks to accomplish. Give it the right direction and 🚀


@PeterDiamandis Exciting times ahead for sure. It will be like age-old civilization: there will be good and evil. I’m on the optimistic side with Peter! We just need technology to advance humanity for the betterment of society.


@AlexFinn 100% spot on. Local is the way to go for certain tasks. Next up is to train your main agent (everyone’s “Henry”) to use the correct model. If it’s a repetitive task that a local model can handle, then definitely use the local model. If it’s a task that needs deeper thinking, then use a frontier model.


I don't care what computer you have, you should be running local models

It will save you money on OpenClaw and keep your data private

Even if you're on the cheapest Mac Mini you can be doing this

Here's a complete guide:

1. Download LM Studio

2. Go to your OpenClaw and say what kind of hardware you have (computer and memory and storage)

3. Ask what's the biggest local model you can run on there

4. Ask 'based on what you know about me, what workflows could this open model replace?'

5. Have OpenClaw walk you through downloading the model in LM Studio and setting up the API

6. Ask OpenClaw to start using the new API

Boom you're good to go.

You just saved money by using local models, have an AI model that is COMPLETELY private and secure on your own device, did something advanced that 99% of people have never done, and have entered the future.

There are some amazing local models out there right now too. Nemotron 3 and Qwen 3.5 are fantastic and can be run on smaller devices

Own your intelligence.
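Steps 5 and 6 come down to pointing your tooling at LM Studio's local server, which exposes an OpenAI-compatible API (by default at `http://localhost:1234/v1`). A minimal sketch using only the Python standard library, assuming the server is running with a model loaded; the model identifier is a placeholder, and the temperature default is an arbitrary choice:

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running on its default address.
# Change BASE_URL if you configured a different port.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(model: str, prompt: str, temperature: float = 0.2) -> dict:
    """Build an OpenAI-style chat completion payload."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }


def ask_local_model(model: str, prompt: str, timeout: float = 120.0) -> str:
    """POST the payload to the local server and return the assistant's reply text."""
    payload = json.dumps(build_chat_request(model, prompt)).encode("utf-8")
    req = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    # OpenAI-compatible responses put the reply in choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

For example, `ask_local_model("your-model-id", "Say hello in five words.")` returns the local model's reply as a string, where `your-model-id` stands for the identifier LM Studio shows for the loaded model. This only shows the request shape; how OpenClaw itself is configured to use the endpoint is left to step 6.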


@PeterDiamandis This is exactly what I’m expecting. Once quantum comes online, it will only take one person up to no good to use a quantum computer and hack into every bank account on earth within minutes. Typical encrypted login security is going to be no match for a quantum computer.


@AlexFinn @PeterDiamandis @AlexFinn & @PeterDiamandis, great podcast! I’ve been keeping up with Alex Finn and fully believe that he is on the right track. Different use cases will reveal what humans can do to fully utilize the technology we have at our fingertips with OpenClaw.

Thank you @steipete


@PeterDiamandis An HONOR doing this episode with you and the team Peter!


Many people expected Apple to fight back in AI with a frontier LLM. Instead, they may have done it with a box smaller than an iPad: the Mac mini. Which raises a bigger question: what if Apple’s best AI product was never meant to be a chatbot at all?

- A 32GB Mac mini can reportedly run a new Qwen 3.5 model requiring about 20GB of memory

- Apple’s unified memory architecture lets recent Macs host large models locally that would otherwise be difficult or expensive

- One workflow already uses 5 OpenClaws together, with MiniMax researching 24/7/365 and Qwen coding 24/7/365
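The memory claims above come down to simple arithmetic: weight memory is roughly parameters x bits per weight / 8. A back-of-the-envelope sketch, assuming a 20% overhead for context cache and runtime and 8 GB reserved for macOS and other apps; both numbers are illustrative guesses, not measured values, and the model sizes in the demo are hypothetical examples rather than specific Qwen releases:

```python
# Back-of-the-envelope check: does a quantized model fit in unified memory?
# Assumptions (illustrative, not measured): 20% overhead for KV cache/runtime,
# and 8 GB kept free for the OS and other apps.
OVERHEAD = 1.2
RESERVE_GB = 8.0


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weight memory in GB (10^9 bytes): params x bits / 8."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, ram_gb: float) -> bool:
    """True if weights plus the assumed overhead fit in RAM after the assumed reserve."""
    needed = weights_gb(params_billion, bits_per_weight) * OVERHEAD
    return needed <= ram_gb - RESERVE_GB


if __name__ == "__main__":
    # Hypothetical model sizes, all at 4-bit quantization, on a 32 GB machine.
    for params, bits in [(8, 4), (30, 4), (70, 4)]:
        need = weights_gb(params, bits) * OVERHEAD
        print(f"{params}B @ {bits}-bit: ~{need:.1f} GB needed, fits in 32 GB: {fits(params, bits, 32)}")
```

Under these assumptions, a 30B model at 4-bit needs about 18 GB and fits on a 32 GB machine, while a 70B model at 4-bit (about 42 GB) does not. Real requirements depend on the runtime, quantization format, and context length.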


@PeterDiamandis More time to spend with loved ones is the ultimate level of freedom. When our basic needs (food, housing, and medical care) are covered, a transitional shift can occur.


@theroberthu @steipete Yep. Bought mine yesterday knowing that as more people jump on the bandwagon there will be price hikes in the near future, driven by everything from increased demand to limited components like RAM, with data centers taking just about everything.


@steipete Open source AI causing hardware shortages was not on my 2026 bingo card but here we are


Should have given Apple a heads up. tomshardware.com/tech-industry/…
