Pinned Tweet

Darshan Yadav

749 posts

@DarshanSays

Problem solver. Writing on AI security, agentic risk, and why the perimeter is now the data itself. Views my own.

Joined April 2026

134 Following, 57 Followers

The "cracks" often preexisted - AI workflows just expose them faster.

Model containers with write access to host mounts. GPU workloads running as root. No egress controls on what leaves the inference environment.

Isolation and least-privilege matter as much for AI workloads as any other. The model is just another process.

#ContainerSecurity #AgenticAI

Running AI workflows exposes cracks in systems built for something else.

This issue of the Docker Navigator looks at what breaks and how teams adapt: hardening images, isolating workloads beyond containers, handling supply chain attacks, & moving to production-ready systems - bit.ly/4dIYa2x

Why send sensitive prompts to a remote API when a capable model can run on your own hardware?

SLMs are good enough for most enterprise tasks now. Local inference means no data leaves your network, no vendor logging your queries.

Microsoft Phi cookbook to get started:

github.com/microsoft/PhiC…

#SLM #DataSecurity #PrivateAI

The data angle makes it worse. Whoever controls the model controls what gets logged, what gets used for training, and what gets retained.

Big AI solution farms don't just outcompete - they also become the data processor for everything their customers build on top of them.

That's not just an economic problem. It's a sovereignty one. #AIRisk #DataSecurity

Found a way to make a commercial LLM leak its system prompt? Output PII? Bypass its safety controls?

Who do you tell?

Most AI companies don't have a structured vulnerability disclosure program for model behavior. That gap needs to close before agentic deployments become the norm.

#AIRisk #Compliance #LLMSecurity

The network perimeter is dead. You can't firewall your way to security when data travels through LLM context windows, agent memory, and third-party APIs.

The new perimeter is the data itself.

Classify before you share. Know where it goes. Control who - and what - touches it.

#DataCentricSecurity #ZeroTrust

When an AI agent queries a database, reads a file, or calls an API - what enforces what it can access?

Most teams trust the agent. That's the gap.

Policy-as-code enforces data boundaries at runtime - regardless of which model or agent makes the request:

github.com/open-policy-ag…

#DataSecurity #AgenticAI
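
A minimal sketch of the runtime check described above, written in plain Python rather than OPA's own policy language. The `Request` type, the `POLICY` table, and the role and resource names are all hypothetical, chosen only for illustration; a real deployment would more likely delegate the decision to a policy engine.

```python
from dataclasses import dataclass

# Hypothetical policy table: (agent role, action) -> resources that role may touch.
# Anything not listed is denied (default-deny).
POLICY = {
    ("support-agent", "read"): {"tickets", "kb_articles"},
    ("billing-agent", "read"): {"invoices"},
    ("billing-agent", "write"): {"invoices"},
}


@dataclass(frozen=True)
class Request:
    role: str      # identity of the calling agent, not the model behind it
    action: str    # "read", "write", ...
    resource: str  # table, file, or API the agent wants to reach


def is_allowed(req: Request) -> bool:
    """Return True only if the policy explicitly grants this request."""
    return req.resource in POLICY.get((req.role, req.action), set())


def guarded_call(req: Request, fn):
    """Run fn() only after the policy check passes; raise otherwise."""
    if not is_allowed(req):
        raise PermissionError(f"{req.role} may not {req.action} {req.resource}")
    return fn()
```

The point of the design is that the decision depends on the request, not on which model produced it: swapping the model leaves the boundary unchanged.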

CVSS 10.0, unauthenticated, arbitrary command execution on SD-WAN controllers.

If you're running AI agents that interact with network infrastructure or pull telemetry from SD-WAN environments - this is a critical path to compromise. Patch before you automate.

Full advisory: rapid7.com #CVE #CriticalVuln #ZeroTrust

Today @rapid7 and Cisco are disclosing CVE-2026-20182, a critical (CVSS 10.0) auth bypass affecting Cisco Catalyst SD-WAN Controller, found by @_CryptoCat and me while we were researching CVE-2026-20127 last Feb. An unauthenticated attacker can become the vmanage-admin and issue arbitrary NETCONF commands. Cisco has also disclosed that the new CVE is already being exploited in the wild (EITW) as of this month. Read our blog here with full technical details: rapid7.com/blog/post/ve-c…

We spent a decade designing for humans at keyboards.

Agents don't click - they call APIs, parse outputs, chain decisions, and act.

UI/UX assumptions break completely. The interface is now a contract: what the agent can do, what it can't, what it must ask before acting.

That's AX. Design for it.

#AgenticAI #AX #AIDesign

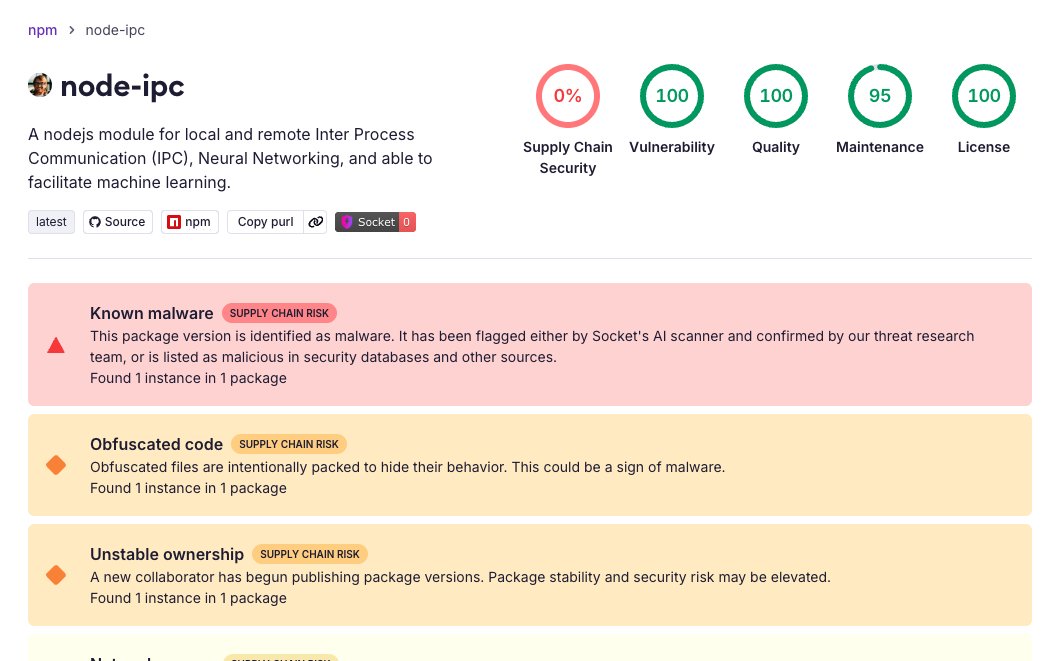

3 minutes to flag a malicious package with 822K weekly downloads. That's where AI in security actually delivers.

Supply chain is one of the hardest attack surfaces to monitor manually. Behavioral detection at publish time is exactly the right place for it.

Audit your node-ipc usage now if you're running those versions. #SupplyChain #SecurityAI

🚨 Socket detected malicious activity in newly published versions of node-ipc, an npm package with 822K weekly downloads.

Affected versions:

node-ipc@9.1.6

node-ipc@9.2.3

node-ipc@12.0.1

Socket’s AI scanner flagged the malware within ~3 minutes of publication.

Early analysis shows obfuscated stealer/backdoor behavior, including host fingerprinting, local file enumeration, payload wrapping, and attempted exfiltration.
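
For the audit mentioned above, a rough sketch that scans a lockfile for the three versions listed in the alert. It assumes the `packages` map used by npm v7+ lockfiles; the function names and the default filename are illustrative, and older lockfile formats would need a different traversal.

```python
import json

# Versions named in Socket's alert above.
AFFECTED = {"9.1.6", "9.2.3", "12.0.1"}


def find_affected(lock: dict) -> list[tuple[str, str]]:
    """Return (path, version) for every node-ipc entry pinned to an affected version.

    Assumes the "packages" map used by npm v7+ lockfiles, where keys are
    install paths such as "node_modules/node-ipc".
    """
    hits = []
    for path, meta in lock.get("packages", {}).items():
        # Take the package name after the last "node_modules/" segment,
        # so nested (transitive) installs are checked too.
        name = path.rsplit("node_modules/", 1)[-1]
        if name == "node-ipc" and meta.get("version") in AFFECTED:
            hits.append((path, meta["version"]))
    return hits


def audit_lockfile(filename: str = "package-lock.json") -> list[tuple[str, str]]:
    """Load a lockfile from disk and report affected node-ipc installs."""
    with open(filename, encoding="utf-8") as fh:
        return find_affected(json.load(fh))
```

An empty result only means the lockfile does not pin one of these versions; it says nothing about installs made without a lockfile.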

AI security vendors claim autonomous threat detection. What many ship: a rule engine with a GPT wrapper and a dashboard that says "AI-powered."

Real value is detection at scale with context. Elastic publishes their detection rules openly:

github.com/elastic/detect…

#CyberSecurity #AITools #HypeVsReality

Your compliance team approved one LLM in Q1.

By Q3 your org is running six different models across four cloud providers, none reviewed, two of them free-tier.

AI governance without continuous model inventory is just documentation theater.

#Compliance #AIGovernance #Risk

AI agents make API calls. That means they handle credentials.

Most teams store those secrets in env vars, config files, or hard-coded in prompts.

Machine identity for agents isn't optional - it's the same problem solved for microservices, now applied to agents:

github.com/cyberark/conjur

#AgenticAI #ZeroTrust

This isn't a hypothetical anymore. AI-generated identities are passing hiring screens, background checks, even live video interviews with deepfake tools.

The attack surface for identity is now the face, the voice, and the resume - all synthetic, all convincing.

Zero trust on identity has to mean continuous, not just at onboarding. #AIRisk #ZeroTrust

Shipping an LLM feature without output validation is a risk few talk about.

The model can return malformed data, sensitive content, or hallucinations - and your app trusts it.

Guardrails adds structured validation to LLM outputs:

github.com/guardrails-ai/…

#AgenticSDLC #LLMSecurity
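
A small standard-library sketch of what "not trusting the output" can look like. This is not the Guardrails API; the field names, the allowed severity values, and the email pattern are hypothetical stand-ins for whatever contract a given feature needs.

```python
import json
import re

# Hypothetical contract for one feature: the model must return a JSON object
# with exactly these fields and types.
EXPECTED_FIELDS = {"summary": str, "severity": str}
ALLOWED_SEVERITY = {"low", "medium", "high"}
# Crude example pattern for one kind of sensitive content (email addresses).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def validate_output(raw: str) -> dict:
    """Parse and check model output; raise ValueError instead of trusting it."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"malformed JSON: {exc}") from exc
    if not isinstance(data, dict) or set(data) != set(EXPECTED_FIELDS):
        raise ValueError("unexpected structure")
    for field, kind in EXPECTED_FIELDS.items():
        if not isinstance(data[field], kind):
            raise ValueError(f"{field} has wrong type")
    if data["severity"] not in ALLOWED_SEVERITY:
        raise ValueError("severity outside allowed values")
    if EMAIL.search(data["summary"]):
        raise ValueError("summary contains an email address")
    return data
```

Checks like these catch malformed data and one narrow class of sensitive content. They do not detect hallucinations, which need a separate check against a trusted source.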

Your company banned ChatGPT to prevent data leakage. Brilliant.

Now employees use Claude, Gemini, Copilot, Perplexity, and three other tools you haven't heard of yet.

But sure, the one policy blocking one tool definitely solved shadow AI.

#sarcasm #ShadowAI #DataLeakage

Agentic voice AI is closing the loop between intent and action fast. The benchmark lead matters - but so does what happens when an agent misinterprets context or gets fed adversarial audio.

Security needs to keep pace with capability here. @elonmusk is pushing the bar - the attack surface expands with it.

Grok Voice Think Fast 1.0 is officially the most well-rounded agentic voice AI on the market right now

It now ranks #1 in the latest τ-Voice agentic performance benchmarks in real-world tests on Artificial Analysis

The gap is massive. xAI is quietly overtaking every other model by actually building for real-world use instead of just lab demos...

Prompt injection. Insecure output handling. Training data poisoning.

Known attack vectors - not hypotheticals. Most teams still have no threat model for their LLM integrations.

OWASP mapped them:

github.com/OWASP/www-proj…

#LLMSecurity #AIRisk

@cloudsek Typosquatting on crypto-js is high-impact - it's one of the most depended-on npm packages. AI agent frameworks pulling npm deps at runtime are particularly exposed to this class of attack.

Full CloudSEK analysis: cloudsek.com/blog/inside-a-…

New supply chain threat uncovered

CloudSEK TRIAD found an npm campaign using crypto-javascri, a typosquatted package impersonating crypto-js.

It steals npm/GitHub credentials, hijacks maintainer accounts, and uses Tor-based C2 to stay harder to disrupt.

cloudsek.com/blog/inside-a-…

Grok 4.3 from @elonmusk's xAI is getting real attention - fast inference, strong reasoning, and built with transparency around training data.

For security teams, faster models with better reasoning mean more capable AI-assisted threat analysis and faster response to emerging CVEs.

Competition in foundation models is good for everyone building on top of them.

#Grok #xAI #AISecurity

@BleepinComputer Teams as a breach vector is a growing problem for AI-enabled enterprises. Many Copilot and agent deployments use Teams as an orchestration layer - same phishing path, much higher blast radius.

bleepingcomputer.com/news/security/…

KongTuke hackers now use Microsoft Teams for corporate breaches

bleepingcomputer.com/news/security/…
