Dean Donlon

2.6K posts

@DeanDonlon

Drive Business Growth Through Digital Advertising, AI Empowerment, & Operations Automation

N 43°39' 0'' / W 116°11' 0'' Joined April 2009

24 Following, 247 Followers

Dean Donlon retweeted

There is a US corporation that has a $50B balance sheet, generates a 10% ROIC (~$5B of income per year) but has also convinced the US Government that:

a) it shouldn’t pay taxes and should be treated as a non profit

b) despite making billions more than necessary to cover costs, only spends the government mandated non-profit minimum of what it makes every year, creating an artificial $1.5B deficit

c) then asks the US government for help and gets $1.5B of taxpayer dollars every year to cover the gap that they could cover by themselves but chooses not to

d) while getting government funding, has been accused and found guilty by the Supreme Court of systemic racial discrimination towards minority groups

This corporation is called the Harvard Corporation.

Dean Donlon retweeted

1) Yes it does cheapen the grade

2) If it’s happening at Yale it’s happening at every “elite” school

3) This is so insidious because it robs someone of learning to be resilient and say “I got a C - how do I do better?” and replaces it instead with “I’m smart and always right”

These schools are so broken in so many ways.

Ain't that the truth! However, as with all things in life, it's how you look at change and adapt to new technology.

Like the internet and the fear of Y2K, etc. Adapt and learn to work with robotics and AI and you'll improve the quality of your life.

Naval@naval

Any physical task that a drone can do, a personal drone will eventually do for you. Any intellectual task that an AI can do, an AI agent will eventually do for you.

@pmarca Concur, absolutely. We need to organize and have more seats at the table. Let's begin by setting our own table, lobbying and campaign. Happy to help, Marc. Let's do it!

@pmarca You're an idiot who wants to curb evolution. Like they say on Grumpy Old Men you should pull your bottom lip over your head and swallow to save the world from your ignorance.

@ianmiles Worthless beings who don't even deserve the shoes on their feet. Store owners should have a shoot at will law.

Who would you like to see on @PBDsPodcast podcast next?

Dean Donlon retweeted

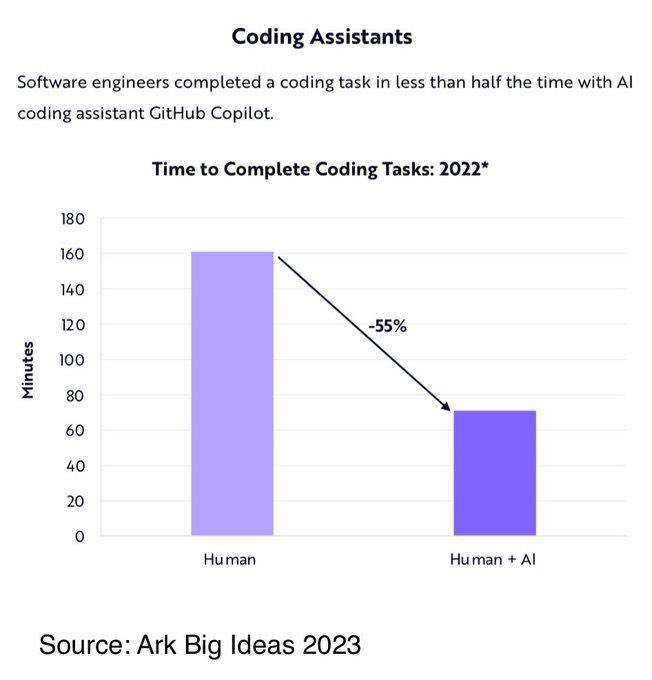

I was sent this chart and found the implication, if true, important.

Many people derided the reduction in force that happened at Twitter/X and the firing of 80% of the company. But it turned out that the company was not only no worse for wear, but is seeing record usage since streamlining its workforce and OpEx.

Well if the chart below is true, it is a path that many other companies will have to take.

If you’re a public company, I don’t see how you can defend yourself from activists as AI tools proliferate. You have two choices:

1) double your work product and quantity of code shipped and product value as you keep headcount steady.

OR

2) reduce your R&D/OpEx by 50% and have half the team + AI tools do the work that the entire team used to do before.

FWIW, I don’t see how companies can empower their employees with tools and claim they have doubled their productivity unless revenue also doubles.

So the latter (#2) seems like the most obvious path that shareholders will push for. In no small part because of the SBC-based dilution they would also save if this happened.

#changeiscoming

Legislation was unveiled Jan. 13 in the U.S. House of Representatives to allow hemp-based CBD to be marketed in dietary supplements and conventional food.

House Bill 5587 would:

include... facebook.com/1034461721/pos…

Ohio hemp growing rules approved; farmers can start later this month daytondailynews.com/news/local/ohi…

The Future of Hemp Engineering: Hempcrete, Supercapacitors, Bio-fuel and More interestingengineering.com/the-future-of-…

Hemp: The Natural Response to Plastic Pollution psnet.biz/post/hemp-the-…

And it's in a can! #cannedcannabisisbetter facebook.com/1034461721/pos…

Mississippi Voters Will Decide Whether To Legalize Medical Marijuana hightimes.com/news/mississip…
