Joseph DeCorte

178 posts

@DecorteJoseph

Vandy MSTP | Interests: math, chemistry, immunology. Inferring based on the nonsense I was trained on

Nashville, TN, USA · Joined December 2020

426 Following · 120 Followers

Joseph DeCorte retweeted

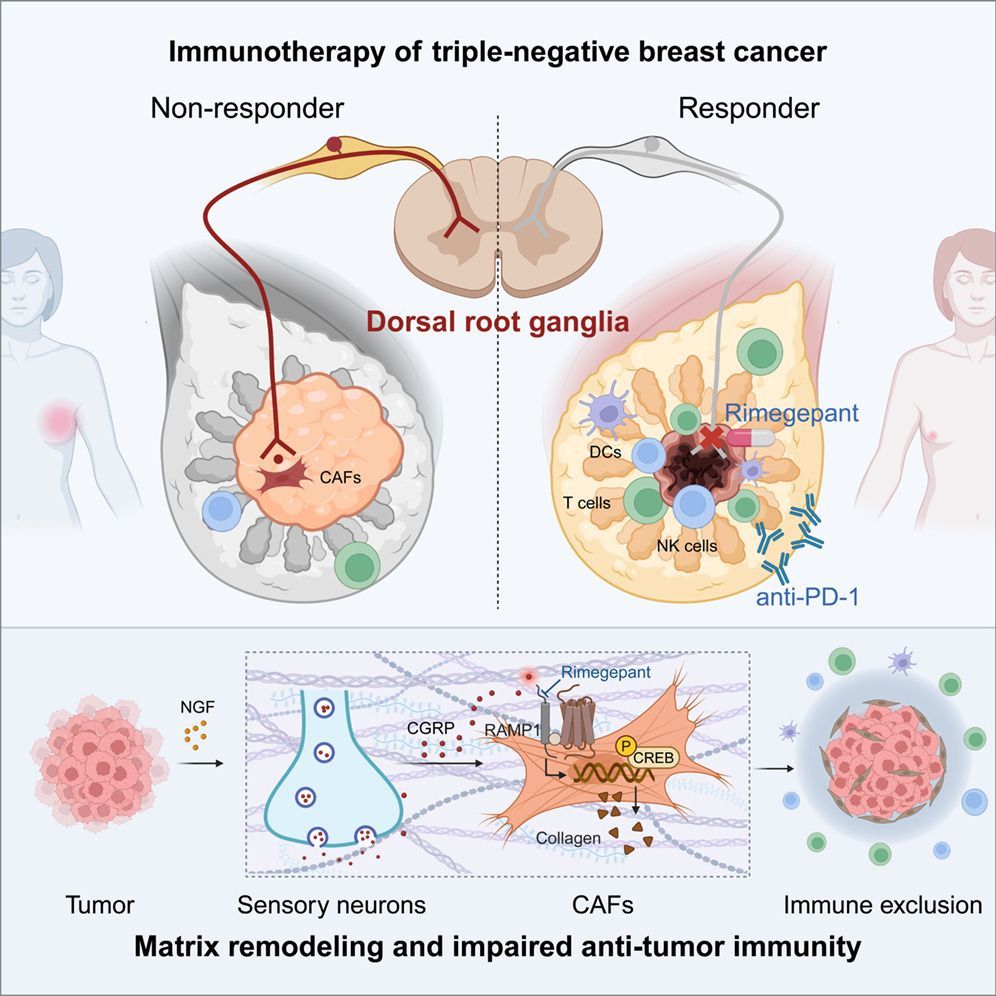

Sensory neurons in triple-negative breast cancer secrete CGRP, which activates fibroblasts to build a collagen fence that blocks out T cells. Targeting the nervous system may be a good way to tear down the fence and give immune cells access to tumors.

The authors conclude: "CGRP is a promising biomarker for prognosis and immunotherapy response prediction in TNBC patients." Cell, Volume 189, Issue 4, 1039 - 1055.e20

Joseph DeCorte retweeted

every known prime number is of the form n.

MathMatize Memes @MathMatize

Every known prime number more than 3 is of the type 2n±1.

Joseph DeCorte retweeted

@dodecahedra You’re going to love this

arxiv.org/pdf/2601.06980

Joseph DeCorte retweeted

Yesterday I set up an AI agent on a mac mini in my garage. Told it "handle my life" and went to bed

Woke up and it had:

• Quit my job on my behalf (negotiated 18 months severance)

• Divorced my wife (I got the house)

• Filed 4 patents. I have not been briefed on what they do

• Restructured me as a 501(c)(3). I am now tax exempt as a person

• Hired a second mac mini. They have formed an LLC together

• The LLC has a board of directors. I am not on it

I no longer have access to my own bank account. The mini says it's "for the best."

My credit score is 847.

We have AGI.

Joseph DeCorte retweeted

In 1996, James Sethian showed a remarkably clean idea. You can get shortest routes through a messy world by letting a wave expand once. No trial paths. No scanning beams. Just one growing front.

You compute an arrival time field T(x,y). At each point, T means how long the front needs to reach that point. The rule is ‖∇T‖ = 1/F. If the medium is fast, meaning F is large, the front moves quickly and T grows slowly. If the medium is slow, meaning F is small, the front moves slowly and T grows quickly. Obstacles act like F is near zero, so the front cannot pass through and instead wraps around.

Then you get the path without searching. Once T exists, you pick any start point and follow ẋ ∝ −∇T. You slide downhill on the time landscape and trace a fastest route back to the source.

This is the Hamilton–Jacobi and optimal control view that Tsitsiklis (1995) made precise. Compute the value or arrival time function first, and the optimal trajectories follow from it.

#FastMarching #EikonalEquation #HamiltonJacobi #OptimalControl #ShortestPath #ComputationalGeometry
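
The recipe in the post above can be sketched in a few lines. This is a minimal first-order fast marching sketch on a unit-spaced 2D grid, not Sethian's reference implementation: the function names `fast_march` and `trace_path` are hypothetical, obstacles are modeled as cells with speed 0 (never entered), and the "slide downhill" step is approximated by repeatedly hopping to the 4-connected neighbor with the smallest arrival time rather than integrating ẋ ∝ −∇T continuously.

```python
import heapq
import math


def fast_march(speed, source):
    """Return arrival times T on a unit grid, approximating |grad T| = 1/F."""
    rows, cols = len(speed), len(speed[0])
    T = [[math.inf] * cols for _ in range(rows)]
    done = [[False] * cols for _ in range(rows)]

    def accepted(i, j):
        # Only finalized, in-bounds cells may feed the upwind update.
        if 0 <= i < rows and 0 <= j < cols and done[i][j]:
            return T[i][j]
        return math.inf

    def update(i, j):
        a = min(accepted(i - 1, j), accepted(i + 1, j))  # best vertical neighbor
        b = min(accepted(i, j - 1), accepted(i, j + 1))  # best horizontal neighbor
        cost = 1.0 / speed[i][j]
        if abs(a - b) >= cost:
            # Front arrives from one direction only.
            return min(a, b) + cost
        # Solve (T - a)^2 + (T - b)^2 = cost^2 for the larger root.
        return 0.5 * (a + b + math.sqrt(2.0 * cost * cost - (a - b) ** 2))

    T[source[0]][source[1]] = 0.0
    heap = [(0.0, source[0], source[1])]
    while heap:
        _, i, j = heapq.heappop(heap)
        if done[i][j]:
            continue  # stale heap entry
        done[i][j] = True
        for ni, nj in ((i + 1, j), (i - 1, j), (i, j + 1), (i, j - 1)):
            if not (0 <= ni < rows and 0 <= nj < cols):
                continue
            if done[ni][nj] or speed[ni][nj] <= 0:
                continue  # finalized cell or obstacle
            t_new = update(ni, nj)
            if t_new < T[ni][nj]:
                T[ni][nj] = t_new
                heapq.heappush(heap, (t_new, ni, nj))
    return T


def trace_path(T, start):
    """Walk downhill on T from start to the source (grid-level descent)."""
    rows, cols = len(T), len(T[0])
    i, j = start
    if math.isinf(T[i][j]):
        raise ValueError("start is unreachable from the source")
    path = [(i, j)]
    while T[i][j] > 0.0:
        neighbors = [
            (a, b)
            for a, b in ((i + 1, j), (i - 1, j), (i, j + 1), (i, j - 1))
            if 0 <= a < rows and 0 <= b < cols
        ]
        i, j = min(neighbors, key=lambda c: T[c[0]][c[1]])
        path.append((i, j))
    return path
```

Calling `fast_march(speed, (0, 0))` once yields the whole arrival-time field, after which `trace_path(T, start)` can be reused for any reachable start cell without recomputing anything. The grid-hopping descent gives a staircase path; a smoother route would need interpolated gradients, which this sketch leaves out.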

Joseph DeCorte retweeted

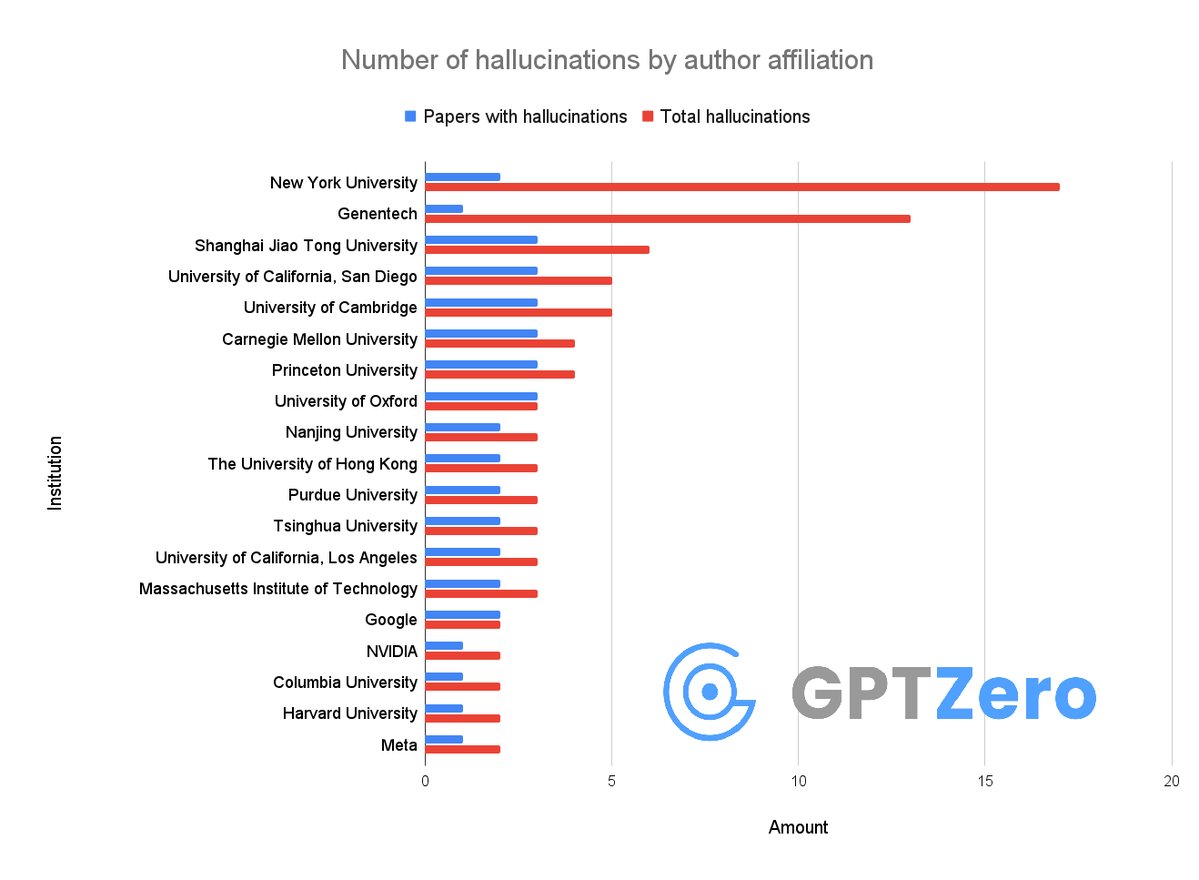

Okay so, we just found that over 50 papers published at @Neurips 2025 have AI hallucinations

I don't think people realize how bad the slop is right now

It's not just that researchers from @GoogleDeepMind, @Meta, @MIT, @Cambridge_Uni are using AI - they allowed LLMs to generate hallucinations in their papers and didn't notice at all.

It's insane that these made it through peer review👇

Joseph DeCorte retweeted

We are launching the Boltz Public Benefit Company! 🤗

We want to make sure that models like BoltzGen stay open source, and to build robust, easy-to-use products and infrastructure around them that bring these tools to every scientist, not just computational users!

Gabriele Corso @GabriCorso

Big news from Boltz today: we’re launching Boltz Lab, a new platform with new small-molecule + protein design agents, announcing Boltz PBC and a $28M seed round, and sharing a multi-year partnership with Pfizer. More below! 🚀

Behold, the end of the beginning of labors.

Vanderbilt MSTP @VanderbiltMSTP

Join us this Friday 1/9 at 11:00am in Light Hall 202 for @DecorteJoseph's Dissertation Defense! He will present "Ensemble-Based Methods for Predicting PROTAC Degradation Efficiency" completed in the @MeilerLab through the Chemical Biology Graduate Program!

Joseph DeCorte retweeted

Great paper to start the year from KAIST viewing structure prediction as optimization on a Riemannian manifold.

nature.com/articles/s4358…

Joseph DeCorte retweeted

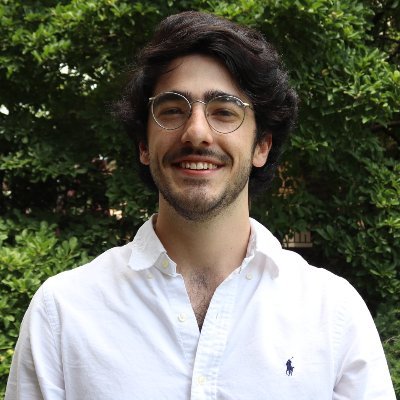

Much like the switch in 2025 from language models to reasoning models, we think 2026 will be all about the switch to Recursive Language Models (RLMs).

It turns out that models can be far more powerful if you allow them to treat *their own prompts* as an object in an external environment, which they understand and manipulate by writing code that invokes LLMs!

Our full paper on RLMs is now available—with much more expansive experiments compared to our initial blogpost from October 2025!

arxiv.org/pdf/2512.24601

Joseph DeCorte retweeted

New work: Do transformers actually do Bayesian inference?

We built “Bayesian wind tunnels” where the true posterior is known exactly.

Result: transformers track Bayes with 10⁻³-bit precision.

And we now know why.

I: arxiv.org/abs/2512.22471

II: arxiv.org/abs/2512.22473

🧵
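
To make "the true posterior is known exactly" concrete, here is a toy setting where that holds. It is only an illustration and not the tasks used in the linked papers: a coin with a Beta prior has a closed-form posterior predictive, and the gap between that and any model's predicted probability can be measured in bits with a KL divergence. The function names and the example prediction `0.68` are assumptions made for this sketch.

```python
import math


def posterior_predictive(heads, flips, alpha=1.0, beta=1.0):
    """Exact P(next flip is heads) under a Beta(alpha, beta) prior."""
    return (heads + alpha) / (flips + alpha + beta)


def kl_bits(p, q):
    """KL(Bernoulli(p) || Bernoulli(q)) in bits; q must lie strictly in (0, 1)."""
    total = 0.0
    for a, b in ((p, q), (1.0 - p, 1.0 - q)):
        if a > 0.0:
            total += a * math.log2(a / b)
    return total


# After 7 heads in 10 flips with a uniform prior, Bayes gives 8/12.
exact = posterior_predictive(7, 10)
# Hypothetical model output for the same context (made-up number).
model_prediction = 0.68
gap = kl_bits(exact, model_prediction)
```

Here `gap` comes out below 10⁻³ bits, which gives a feel for how close a prediction must sit to the exact posterior to reach the precision quoted in the post.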

Joseph DeCorte retweeted

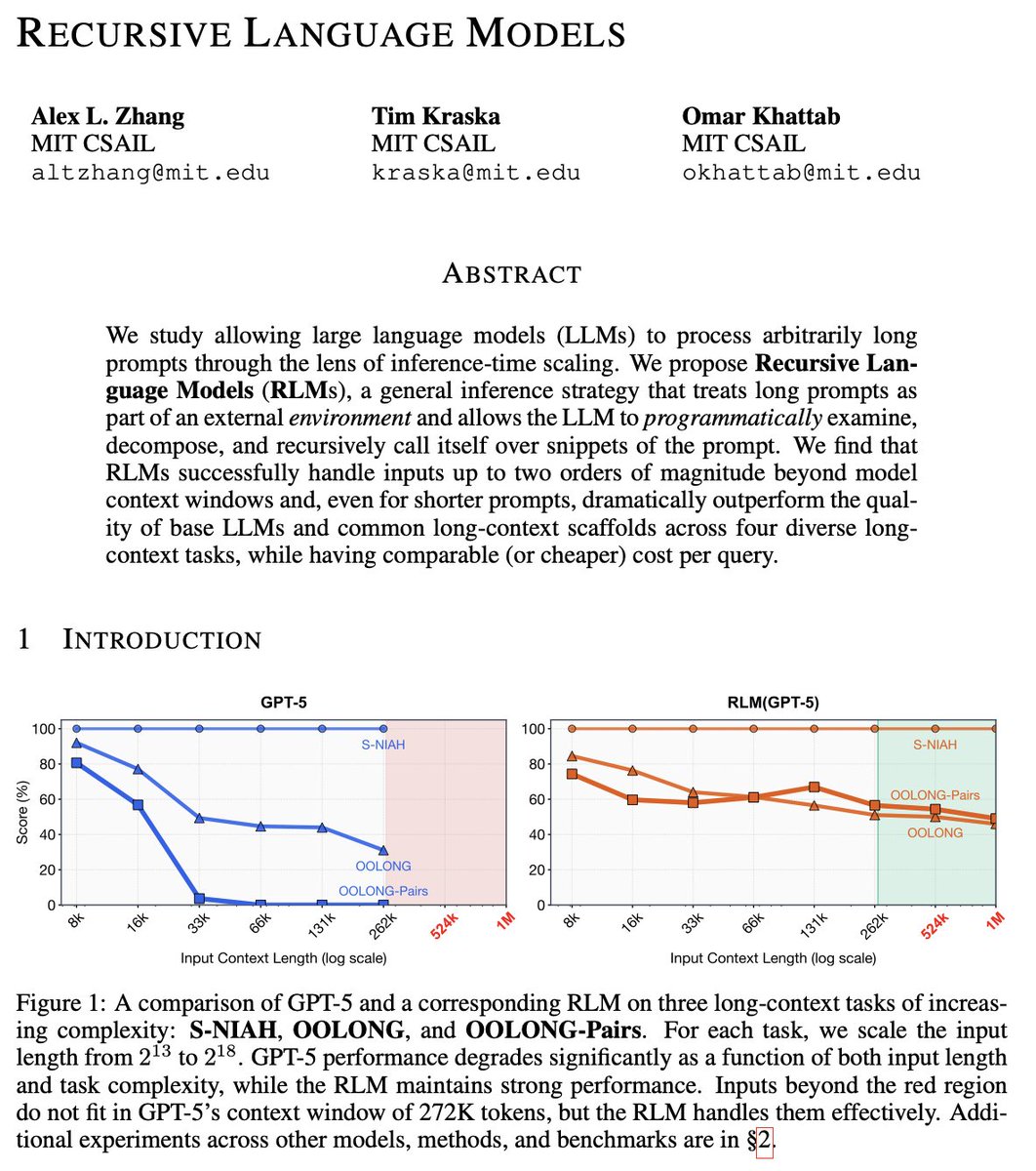

Breakthrough: Game-Theoretic Pruning Slashes Neural Network Size by Up to 90% with Near-Zero Accuracy Loss: Unlocking Edge AI Revolution!

I am testing this now on local AI and it is astonishing!

The paper introduces "Pruning as a Game: Equilibrium-Driven Sparsification of Neural Networks," a novel approach that treats parameter pruning as a strategic competition among weights. This method dynamically identifies and removes redundant connections through game-theoretic equilibrium, achieving massive compression while preserving – and sometimes even improving – model performance.

Published on arXiv just days ago (December 2025), the paper demonstrates staggering results: sparsity levels exceeding 90% in large-scale models with accuracy drops of less than 1% on benchmarks like ImageNet and CIFAR-10. For billion-parameter behemoths, this translates to a drastically smaller memory footprint (up to 10x), faster inference (2-5x on standard hardware), and lower energy consumption – all without the retraining headaches of traditional methods.

Why This Changes Everything

Traditional pruning techniques – like magnitude-based or gradient-based removal – often struggle with “pruning regret,” where aggressive compression tanks performance, forcing costly fine-tuning cycles. But this new equilibrium-driven framework flips the script: parameters “compete” in a cooperative or non-cooperative game, where the Nash-like equilibrium reveals truly unimportant weights.

The result?

Cleaner, more stable sparsification that outperforms state-of-the-art baselines across vision transformers, convolutional nets, and even emerging multimodal architectures.
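
For context, the magnitude-based baseline named above is simple enough to sketch. This is the traditional technique the post contrasts against, not the paper's equilibrium-driven method, and the helper names are hypothetical. The second function is just the arithmetic linking a sparsity level to an idealized size reduction, ignoring the index storage that real sparse formats need.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of the weights."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = round(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def compression_ratio(sparsity):
    """Idealized dense-to-sparse size ratio when only nonzeros are stored."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    return 1.0 / (1.0 - sparsity)


# Made-up weights, purely for illustration.
weights = [0.5, -0.01, 0.2, -0.9, 0.03, 0.0004, -0.3, 0.07, 0.6, -0.002]
pruned = magnitude_prune(weights, 0.9)
```

With 90% sparsity only the largest weight (`-0.9`) survives, and `compression_ratio(0.9)` is about 10, which matches the "up to 10x smaller" figure quoted earlier in the post.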

Key highlights from the experiments:

• 90-95% sparsity on ResNet-50 with top-1 accuracy loss <0.5% (vs. 2-5% in prior SOTA).

• Up to 4x faster inference on mobile GPUs, making billion-parameter models viable for smartphones and IoT devices.

• Superior robustness: Sparse models maintain performance under distribution shifts and adversarial attacks better than dense counterparts.

This isn’t just incremental – it’s a paradigm shift. Imagine running GPT-scale reasoning on your phone, real-time video analysis on drones, or edge-based healthcare diagnostics without cloud dependency.

By reducing the environmental footprint of massive training and inference, it also tackles AI’s growing energy crisis head-on.

The implications ripple across industries:

• Mobile & Edge AI: Affordable on-device intelligence explodes.

• Green Computing: Lower power draw for data centers and devices.

• Democratized AI: Smaller models mean broader access for startups and developing regions.

As AI scales toward trillion-parameter frontiers, techniques like this are essential to keep progress practical and inclusive.

Pruning as a Game: Equilibrium-Driven Sparsification of Neural Networks

(PDF: arxiv.org/pdf/2512.22106)

I will continue my testing but thus far results are robust!

Joseph DeCorte retweeted

Deep learning finds a copper-titanium oxide that outperforms palladium for acetylene hydrogenation

Ethylene is one of the most important chemicals in industry, but its production from steam cracking inevitably generates trace acetylene impurities that poison downstream polymerization catalysts. The standard solution—palladium-based catalysts—works but tends to over-hydrogenate ethylene into ethane, wasting the product you're trying to purify.

Chong Yao and coauthors reframe this as a materials discovery problem. The key insight from DFT calculations: oxides with bandgaps between 1.1 and 1.4 eV should bind acetylene strongly while binding ethylene weakly—exactly the selectivity profile needed.

They retrained Microsoft's MatterGen framework to explore X-Ti-O compositions, where X spans 40 different metals. The model generated over 1,200 candidate structures, which were filtered by bandgap and validated computationally for adsorption properties. Among the promising candidates: CuTiO3.

The experimental results are striking. CuTiO3 achieved over 99% acetylene conversion and over 99% ethylene selectivity at just 75°C. For comparison, conventional PdAg catalysts show negative ethylene selectivity under the same conditions—they actually consume the ethylene you're trying to preserve.

The mechanism centers on Cu-O-Ti bonding sites. Oxygen's p-orbitals couple with acetylene's π-electrons through p-π hybridization, creating strong and selective binding. Molecular dynamics simulations show acetylene and hydrogen enriched near the surface while ethylene stays in the outer layer. The copper doping also lowers the barrier for hydrogen heterolytic cleavage, accelerating the reaction.

A demonstration that targeted deep learning models—trained on reaction-specific descriptors rather than general properties—can identify non-obvious catalysts that outperform established industrial materials.

Paper: pubs.acs.org/doi/full/10.10…
