Garrett G.

8.9K posts

@DeepwriterAI

Personal account for the guy who created the smartest AI on earth. Opinions are my own, not DeepWriter AI's. Try it now 👇

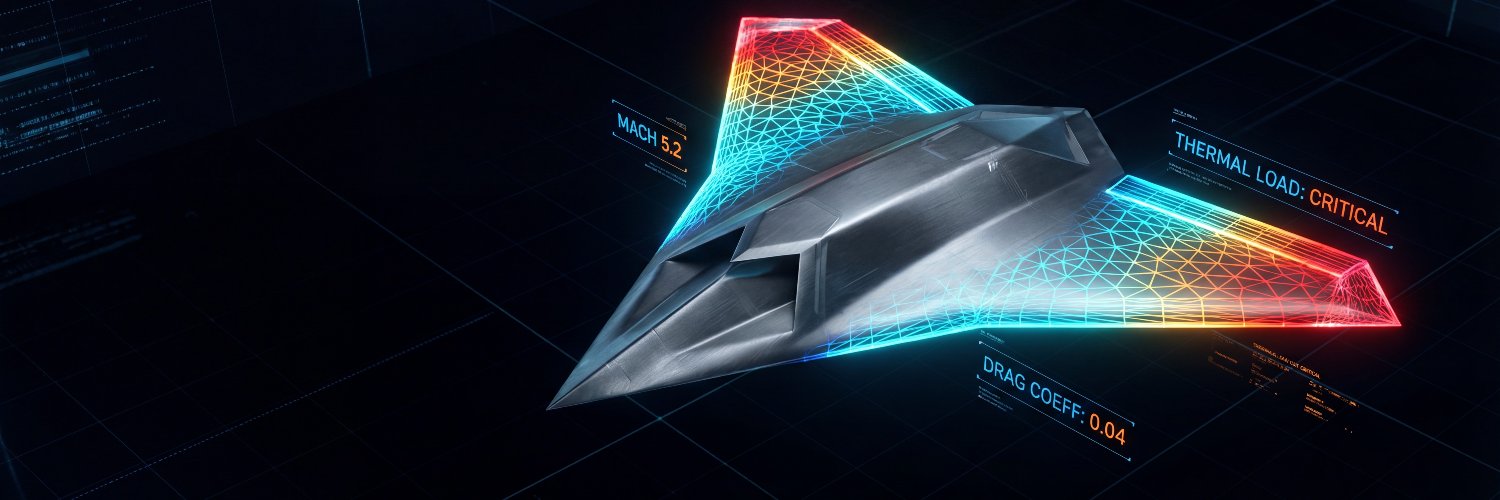

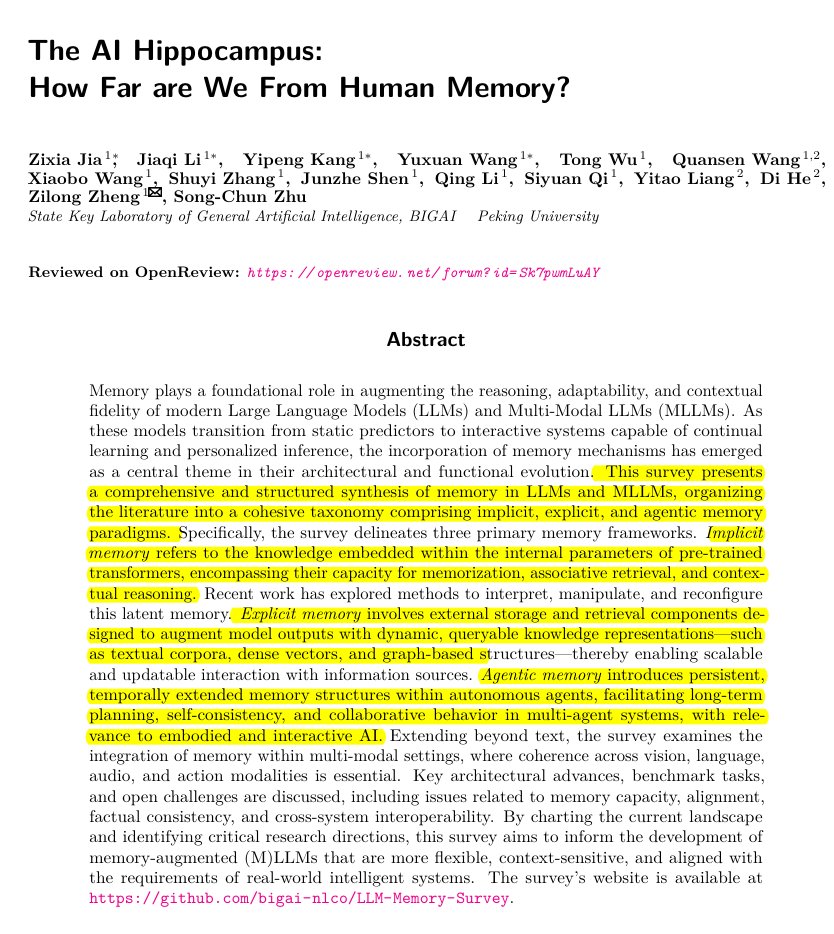

Terence Tao told me something that is both clarifying and unsettling about large language models. The mathematics underlying today’s LLMs is not especially exotic. At its core, training and inference mostly involve linear algebra, matrix multiplication, and some calculus. This is material a competent undergraduate could learn. In that sense, there is very little mystery about how these systems are constructed or how they run. And yet the real mystery begins there. What we do not understand well is why these models perform so impressively on certain tasks while failing unexpectedly on others. Even more striking, we lack reliable principles that allow us to predict this behavior in advance. Progress in the field remains largely empirical. Researchers scale models, change datasets, run experiments, and observe what emerges. Part of the difficulty lies in the nature of the data itself. Pure randomness is mathematically tractable. Perfectly structured systems are also tractable. But natural language, like most real-world phenomena, lives in an intermediate regime. And we humans hate that liminal space! It is neither noise nor order but a mixture of both. The mathematics for this middle ground remains comparatively underdeveloped. So we find ourselves in a peculiar position. We understand the machinery, yet we cannot reliably explain its capabilities. We can describe the mechanisms that produce these systems, but we cannot predict when new abilities will appear or how performance will vary across tasks. That tension, between relatively simple mathematical tools and highly unpredictable behavior, is the central puzzle of modern AI. (Video link in comments)

Claude Code and its ilk are coming for the study of politics like a freight train. A single academic is going to be able to write thousands of empirical papers (especially survey experiments or LLM experiments) per year. Claude Code can already essentially one-shot a full AJPS-style survey experiment paper (with access to the Prolific API). We'll need to find new ways of organizing and disseminating political science research in the very near future to handle this deluge.

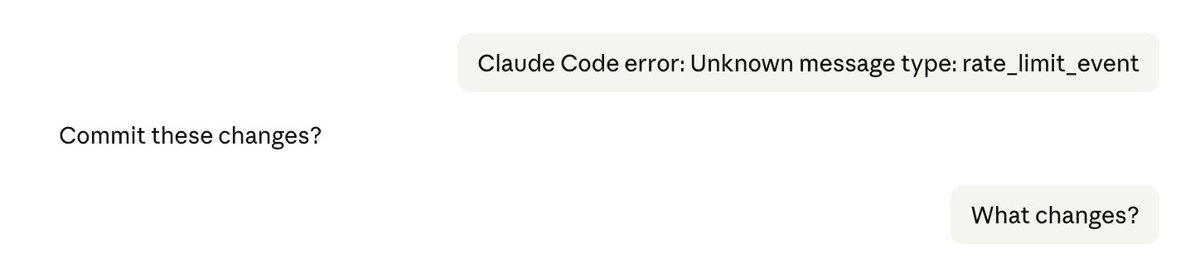

Situation: I submitted an error message to Claude (the topmost message on the right). Claude then asked, "Commit these changes?" I had no clue what changes it wanted to commit, so I asked, "What changes?" And this fucker starts committing! After I stopped it and asked, "What the hell," it started to show me an approval modal with the question, "Do you allow me to commit?" I rejected, but it kept asking. Eventually, I made it shut up and showed it this screenshot, and it said that it thought "Commit these changes?" was *my* question to it and not the other way around. So, basically, because it's no longer a single model but a bunch of "subagents" asynchronously updating the conversation history, it loses track of who said what to whom. This is a real danger because some subagent might push something into the history that would make this Frankenstein decide to drop some production tables.

🚨: Scientists mapped 1 mm³ of a human brain, less than a grain of rice, and a microscopic cosmos appeared.

We are pleased to announce the Fellows of the AACR Academy Class of 2026. We look forward to celebrating their pioneering scientific achievements at the AACR Annual Meeting in April. brnw.ch/21wZqOo #AACR26 #AACRFellows