Design Arena

@Designarena

World's first crowdsourced benchmark for design with 2.7M+ creators and counting. Made by @arcada_labs

(1/8)🚀 Introducing Qwen3.6-Plus: Towards Real-World Agents! 🤖 Today, we’re thrilled to reach a major milestone in our journey toward native multimodal agents. Here is what makes Qwen3.6-Plus a game-changer:
💻 Next-level Agentic Coding: Smarter, faster execution.
👁️ Enhanced Multimodal Vision: Sharper perception and reasoning.
🏆 Top-tier Performance: Maintaining leading general capabilities.
📚 1M Context Window: Available by default via our API.
Built on your invaluable feedback from the Qwen3.5 era, we’re laying a rock-solid foundation for real-world devs. Get ready to experience truly transformative ✨ Vibe Coding ✨. Huge thanks to our community! Go try it out and show us what you can build. 👇
Chat: chat.qwen.ai
API: modelstudio.console.alibabacloud.com/ap-southeast-1…
Blog: qwen.ai/blog?id=qwen3.6
🔔 Note: More Qwen3.6 models are coming, and they will be open-sourced! Stay tuned~ 👀
#Qwen #AI #AgenticCoding #VibeCoding #Agents

1/8 Meet Wan2.7-Image: our unified model for image generation and editing. One model that generates, edits, and understands images:
- Realistic faces with full control over bone structure, eyes, and contour
- Color palettes with HEX codes and reference-image extraction
- 3K-token text rendering across 12 languages, at print quality
- Interactive editing with visual instructions on your images
- Up to 12 consistent images in one generation
Details on each below ↓

1/2 Meet Wan2.7-Video: the comprehensive model for controllable video storytelling! From single clips to full-scale narrative direction, we’ve built more than just a generator. We’ve built a director’s suite:
• Multimodal control over performance and style via text, image, audio, and video.
• Character customization with up to 5 reference inputs and voice profiles.
• Video editing with simple, intuitive instructions.
• Full-stack creative toolkit: generation, editing, cloning, restyling, continuation, and more.
• Sustained improvements in visual fidelity, motion stability, and prompt adherence.

We just released Gemma 4 — our most intelligent open models to date. Built from the same world-class research as Gemini 3, Gemma 4 brings breakthrough intelligence directly to your own hardware for advanced reasoning and agentic workflows. Released under a commercially permissive Apache 2.0 license so anyone can build powerful AI tools. 🧵↓

Today we're releasing Trinity-Large-Thinking. Available now on the Arcee API, with open weights on Hugging Face under Apache 2.0. We built it for developers and enterprises that want models they can inspect, post-train, host, distill, and own.

Introducing GLM-5V-Turbo: Vision Coding Model
- Native Multimodal Coding: Natively understands multimodal inputs, including images, videos, design drafts, and document layouts.
- Balanced Visual and Programming Capabilities: Achieves leading performance across core benchmarks for multimodal coding, tool use, and GUI agents.
- Deep Adaptation for Claude Code and Claw Scenarios: Works closely with agents such as Claude Code and OpenClaw.
Try it now: chat.z.ai
API: docs.z.ai/guides/vlm/glm…
Coding Plan trial applications: docs.google.com/forms/d/e/1FAI…

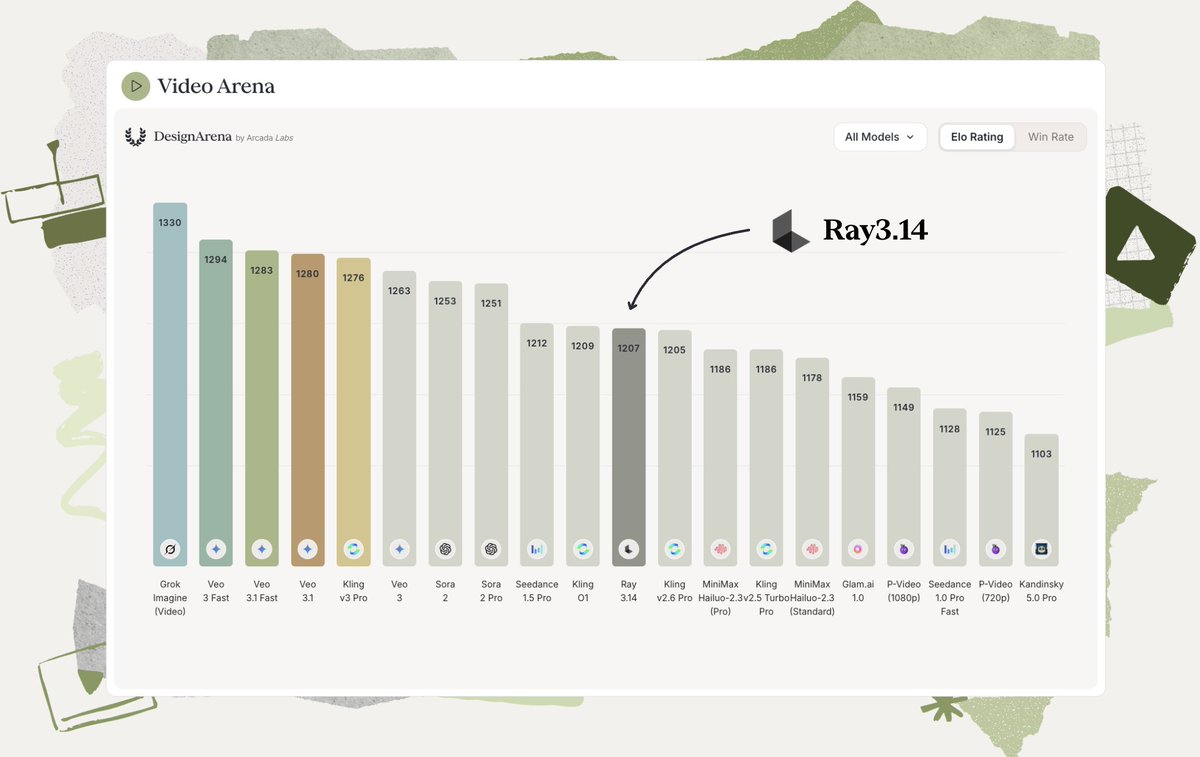

Elo rankings from @Designarena are now available in Benchmarks: openrouter.ai/rankings#bench…