Di Chang

@DiChang10

PhD @CSatUSC | VSR @Stanford | Intern @MetaAI | Prev. ByteDance Seed @TikTok_US, @EPFL, @TU_Muenchen | Working on Multimodal Generative Models

How do we generate videos on the scale of minutes, without drifting or forgetting about the historical context? We introduce Mixture of Contexts. Every minute-long video below is the direct output of our model in a single pass, with no post-processing, stitching, or editing. 1/4

[Hiring!] I am hiring multiple PhD students @CSatUSC @USCViterbi for this cycle. If you're interested in scene representations, neural simulation, generative AI, and robotics, feel free to mention my name in your application (no need to email). USC master's students and undergrads who are interested in our research can fill in this form: forms.gle/RerZfDqCqmCj8A…

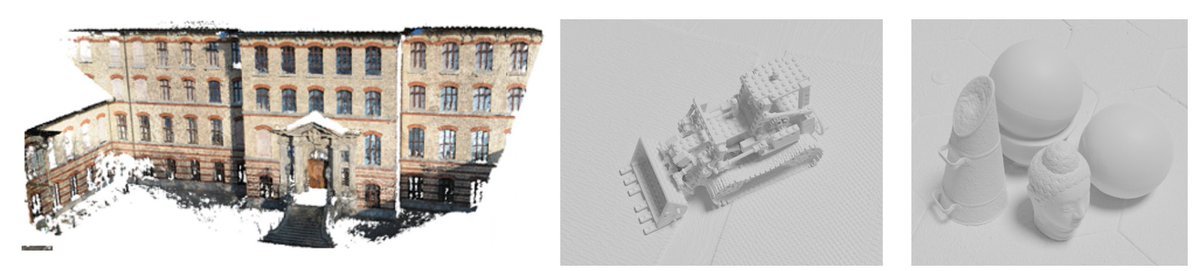

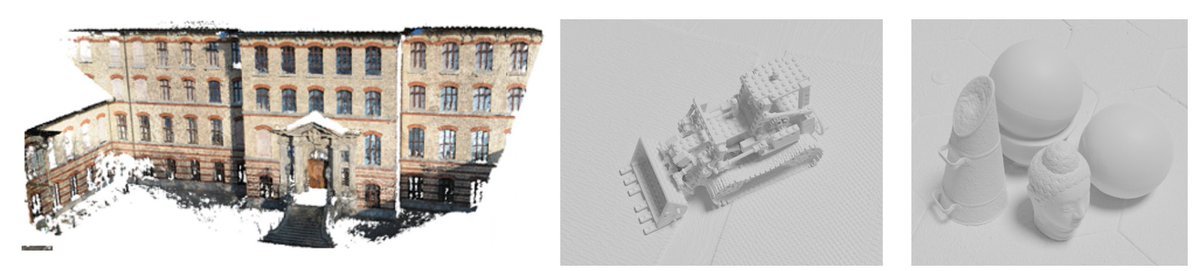

Excited to share MonST3R, a simple way to estimate geometry from unposed videos of dynamic scenes! We achieve competitive results on several downstream tasks (video depth, camera pose) and believe this is a promising step toward feed-forward 4D reconstruction. monst3r-project.github.io

Learning-based Multi-View Stereo: A Survey, by @FangjinhuaWang, Qingtian Zhu, @DiChang10, @UUUUUsher, @han_junlin, Tong Zhang, Richard Hartley, and @mapo1. tl;dr: a review of learning-based MVS methods up to 2023. arxiv.org/pdf/2408.15235

I'm thrilled to share that MagicPose (formerly known as MagicDance) will be presented at #ICML2024!

MagicPose (MagicDance) is a diffusion-based model for 2D human pose and facial expression retargeting. It enables robust appearance control over generated human images, including body, facial attributes, and background. By leveraging the prior knowledge of image diffusion models, MagicPose generalizes well to unseen human identities and complex poses without additional fine-tuning. The model is also easy to use and works as a plug-in module/extension for Stable Diffusion. Our code is fully open-sourced, so please give the repo a star 🌟 if you find our project interesting 🥰

💻 paper: arxiv.org/abs/2311.12052
🔗 website: boese0601.github.io/magicdance/
⌨️ code: github.com/Boese0601/Magi…

MagicPose was my summer intern project at @tiktok_us. A huge thanks to my mentors Yichun Shi, Hongyi Xu, Guoxian Song, Qing Yan, Yizhe Zhu, and Xiao Yang from TikTok. I also appreciate the help from my friend Quankai Gao @UUUUUsher and my faculty advisor @msoleymani at @CSatUSC. See you in Vienna 😘