陈善

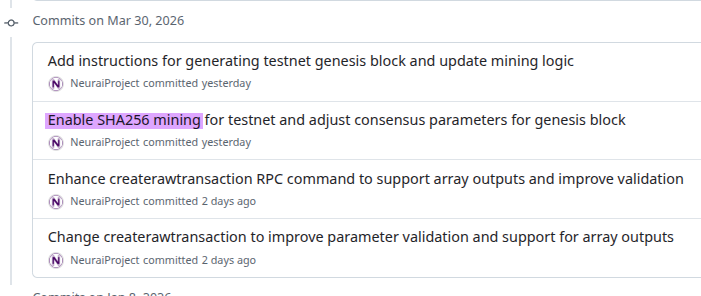

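The Ethereum Foundation is up to its antics again. Why has everyone had such a poor impression of the Ethereum Foundation all along? Because, besides having gathered a crowd of sanctimonious moralizers and freeloaders who run a little imperial court around Vitalik and play at cultivating immortality, the EF never stops selling, selling, selling. Every year it sells far more than it needs for normal operations, and it often dumps precisely when the market is weak. What made people even angrier this time is that, during Aave's DeFi crisis, while the whole DeFi ecosystem was rescuing itself and the founders of Lido, Aave, and EtherFi were all donating ETH, the EF did the opposite. Not only did it fail to step up and lead, it didn't even participate actively. Instead, it cashed out another 10,000 $ETH at 2387, which is not a particularly good price, and then announced, a little smugly, that this time it hadn't dumped directly on the market but had gone OTC, as if asking to be praised. The tweet drew widespread mockery. Coming at a time when many projects were answering Aave's "DeFi United" call and chipping in, and even @StaniKulechov had started opening a fundraising channel to retail investors, this move by @ethereumfndn is especially glaring. An organization with little credibility to begin with has further deepened the industry's negative impression of it. Well done. It might as well disband sooner rather than later.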

Aave service providers have been leading the DeFi United effort to restore rsETH's backing since the April 18 incident. We believe ecosystem collaboration matters most in moments like this, and our priority is achieving the best possible outcome for users. Multiple strong indicative commitments are now in place to join this effort toward restoring the backing of rsETH. This includes @LidoFinance, whose contributors have published a proposal today to their DAO to participate in the joint recovery effort. Lido is one of many partners who are stepping up. We will continue to announce further commitments as they are formalized.

Update on rsETH incident: @LlamaRisk has published a report outlining the rsETH incident, the immediate actions taken, its impact on Aave, and potential paths forward. All service providers have been working to assess the two potential bad debt scenarios on the Aave protocol. Aave DAO service providers are also leading an effort with ecosystem participants to address any bad debt. This effort already has several indicative commitments from various parties and we are grateful for the strong support we have received so far. We will share further updates as we have them. In the meantime, the full report can be read here: governance.aave.com/t/rseth-incide…

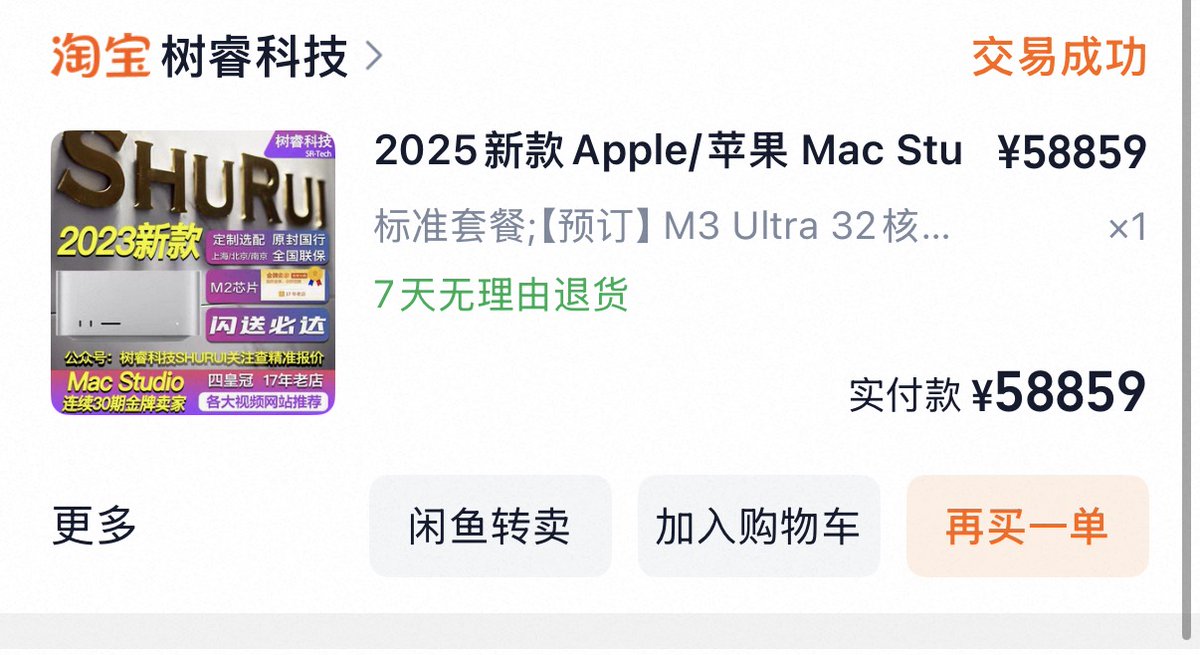

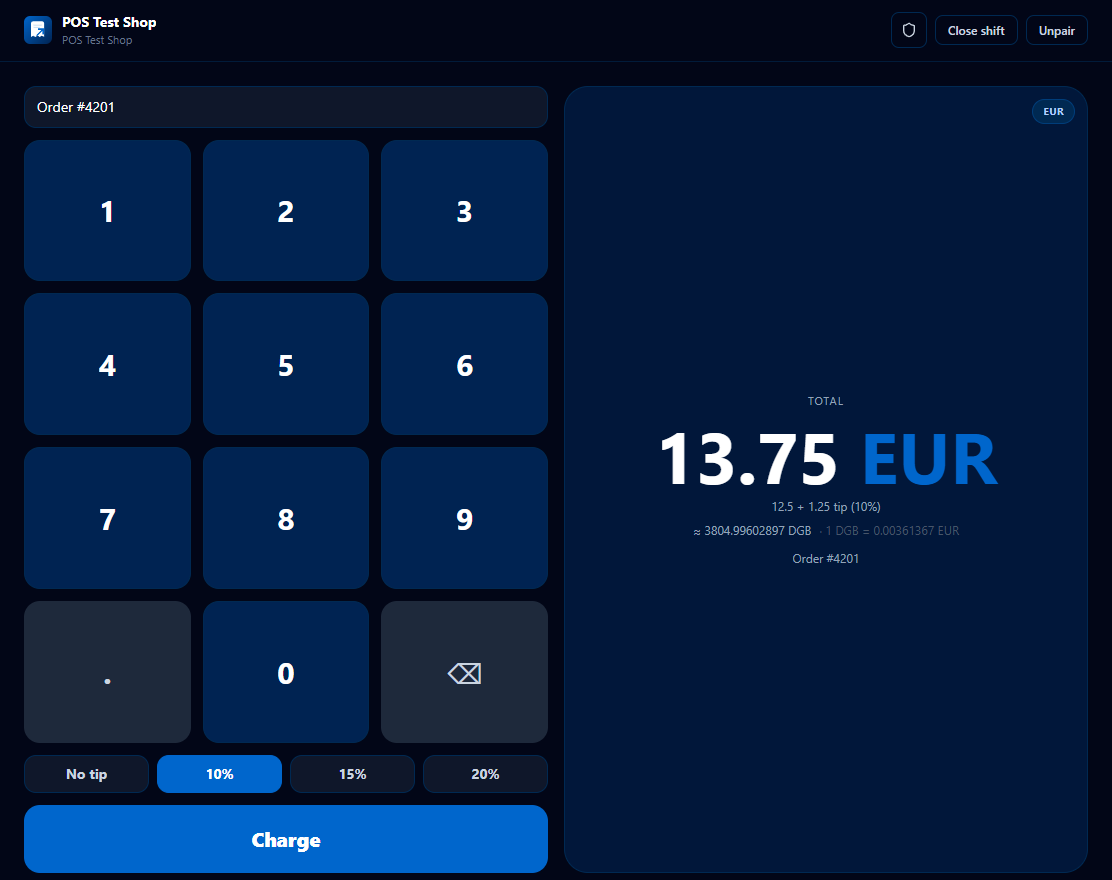

@CrypttoManiac_ #DigiByte and you know it 😎

Two days ago, Anthropic cut off third-party harnesses from using Claude subscriptions. Not surprising. Three days ago, MiMo launched its Token Plan, a design I spent real time on and what I believe is a serious attempt at getting compute allocation and agent harness development right. Putting these two things together, some thoughts:

1. Claude Code's subscription is a beautifully designed system for balanced compute allocation. My guess: it doesn't make money, and possibly loses money, unless their API margins are 10-20x, which I doubt. I can't rigorously calculate the losses from third-party harnesses plugging in, but I've looked closely at OpenClaw's context management, and it's bad. Within a single user query, it fires off rounds of low-value tool calls as separate API requests, each carrying a long context window (often >100K tokens). That is wasteful even with cache hits, and in extreme cases it drives up cache-miss rates for other queries. The actual request count per query ends up several times higher than with Claude Code's own framework. Translated to API pricing, the real cost is probably tens of times the subscription price. That's not a gap; that's a crater.

2. Third-party harnesses like OpenClaw/OpenCode can still call Claude via the API; they just can't ride on subscriptions anymore. In the short term, these agent users will feel the pain, with costs easily jumping tens of times. But that pressure is exactly what pushes these harnesses to improve context management, maximize prompt-cache hit rates to reuse already-processed context, and cut wasteful token burn. Pain eventually converts into engineering discipline.

3. I'd urge LLM companies not to blindly race to the bottom on pricing before figuring out how to price a coding plan without hemorrhaging money. Selling tokens dirt cheap while leaving the door wide open to third-party harnesses looks nice to users, but it's a trap: the same trap Anthropic just walked out of. The deeper problem: if users burn their attention on low-quality agent harnesses, highly unstable and slow inference services, and models downgraded to cut costs, only to find they still can't get anything done, that's not a healthy cycle for user experience or retention.

4. On the MiMo Token Plan: it supports third-party harnesses and is billed by token quota, the same logic as Claude's newly launched extra usage packages. That's because what we're going for is long-term, stable delivery of high-quality models and services, not getting you to impulse-pay and then abandon ship.

The bigger picture: global compute capacity can't keep up with the token demand agents are creating. The real way forward isn't cheaper tokens but co-evolution: "more token-efficient agent harnesses" × "more powerful and efficient models." Anthropic's move, whether intended or not, is pushing the entire ecosystem, open source and closed source alike, in that direction. That's probably a good thing. The agent era doesn't belong to whoever burns the most compute. It belongs to whoever uses it wisely.
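The cost argument in point 1 (more requests per query, each re-sending a long context, with a weaker cache hit rate) can be made concrete with a back-of-the-envelope model. This is a minimal sketch under stated assumptions, not Anthropic's or MiMo's actual pricing: `HarnessProfile`, `query_input_cost`, the per-token prices, and every number in the example are hypothetical. It only multiplies requests per query by context tokens per request and bills the cached share at a discounted rate. It ignores output tokens, cache-write surcharges, and the subscription price the post compares against.

```python
from dataclasses import dataclass


@dataclass
class HarnessProfile:
    """Hypothetical request pattern of an agent harness for one user query."""

    requests_per_query: int  # separate API requests fired for one user query
    context_tokens: int  # input tokens re-sent with each request
    cache_hit_rate: float  # fraction of those input tokens served from the prompt cache


def query_input_cost(
    profile: HarnessProfile,
    uncached_price: float = 1.0,
    cached_price: float = 0.1,
) -> float:
    """Relative input-token cost of one user query.

    Prices are arbitrary units per token. The default 10:1 ratio between
    uncached and cached input is an illustrative assumption, not any
    provider's published rate. Output tokens and cache writes are ignored.
    """
    if not 0.0 <= profile.cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    # Every request re-sends the full context, so input volume scales with request count.
    total_tokens = profile.requests_per_query * profile.context_tokens
    cached_tokens = total_tokens * profile.cache_hit_rate
    uncached_tokens = total_tokens - cached_tokens
    return uncached_tokens * uncached_price + cached_tokens * cached_price


if __name__ == "__main__":
    # Made-up numbers: both harnesses carry ~100K tokens of context per request,
    # but the "chatty" one fires 4x as many requests and hits the cache less often.
    lean = HarnessProfile(requests_per_query=4, context_tokens=100_000, cache_hit_rate=0.9)
    chatty = HarnessProfile(requests_per_query=16, context_tokens=100_000, cache_hit_rate=0.6)
    lean_cost = query_input_cost(lean)
    chatty_cost = query_input_cost(chatty)
    print(f"lean:   {lean_cost:,.0f} units")
    print(f"chatty: {chatty_cost:,.0f} units")
    print(f"ratio:  {chatty_cost / lean_cost:.1f}x")
```

With these invented inputs, the chatty profile costs roughly 9.7x the lean one per query. The 4x request count alone accounts for 4x, and the drop in cache hit rate compounds it. That is why point 2 singles out context management and prompt-cache reuse as the levers a harness actually controls.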