Pinned Tweet

senn yoods

574 posts

@DisgToast

i watch stuff and forget about it

Bangalore · Joined January 2018

416 Following · 35 Followers

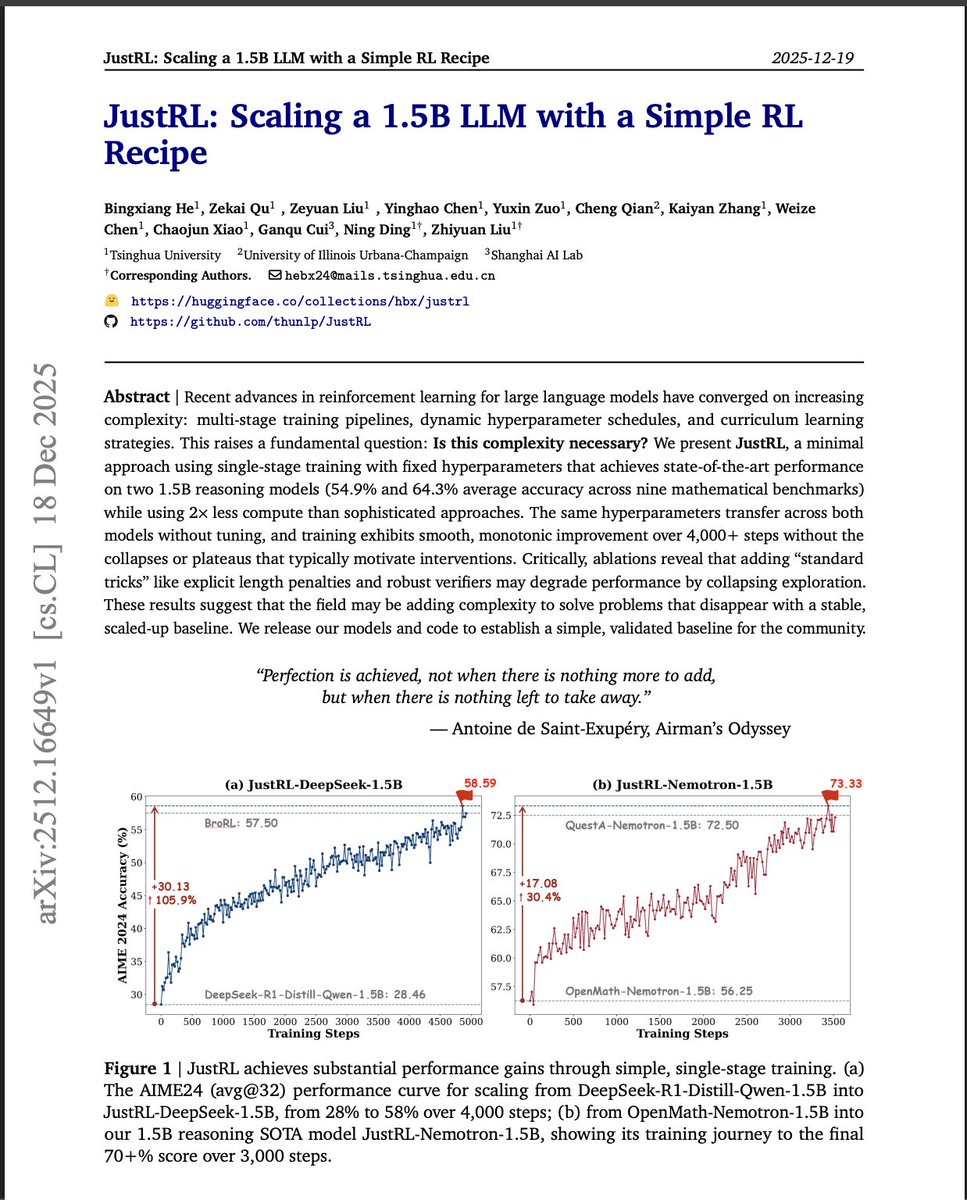

really cool paper. short, sweet, and it answers some of the problems I had while post-training small LLMs with RL.

TLDR:

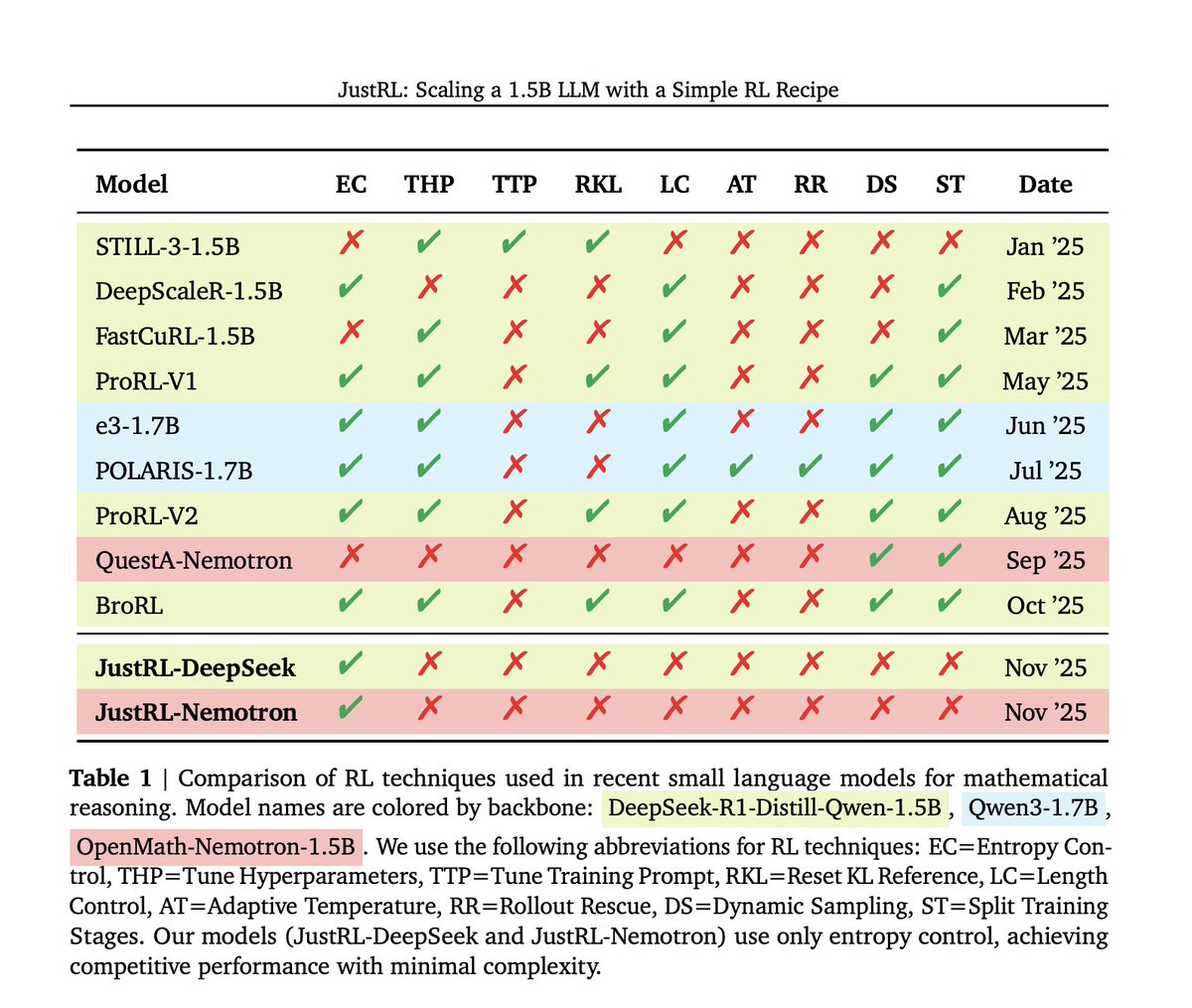

1) simple RL with entropy control (asymmetric PPO clipping) works well for small LLMs (training for more steps is crucial as well).

2) no need for adaptive temperature sampling, an excessive number of rollouts, length-penalty rewards, resetting the KL reference, curriculum training on context length, etc.
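For anyone unfamiliar with the asymmetric clipping in point 1, here is a minimal sketch of one common form: the PPO ratio gets separate lower and upper clip bounds (sometimes called "clip-higher"). The function names and the eps_low=0.2 / eps_high=0.28 defaults are assumptions for illustration, not values taken from the paper.

```python
def asymmetric_clipped_surrogate(ratio, advantage, eps_low=0.2, eps_high=0.28):
    """Per-token PPO surrogate with separate lower and upper clip bounds.

    ratio: pi_new(token) / pi_old(token); advantage: estimated advantage.
    eps_low / eps_high are illustrative defaults (assumed, not from the paper).
    """
    # Clip the ratio into [1 - eps_low, 1 + eps_high]; eps_high > eps_low makes it asymmetric.
    clipped = min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)
    # Standard PPO pessimism: take the smaller of the unclipped and clipped objectives.
    return min(ratio * advantage, clipped * advantage)


def ppo_loss(ratios, advantages, eps_low=0.2, eps_high=0.28):
    """Negative mean surrogate over tokens (the quantity to minimize)."""
    terms = [
        asymmetric_clipped_surrogate(r, a, eps_low, eps_high)
        for r, a in zip(ratios, advantages)
    ]
    return -sum(terms) / len(terms)


if __name__ == "__main__":
    print(asymmetric_clipped_surrogate(1.5, 1.0))            # asymmetric upper bound (1 + 0.28)
    print(asymmetric_clipped_surrogate(1.5, 1.0, 0.2, 0.2))  # symmetric upper bound (1 + 0.2)
```

With a symmetric bound of 0.2, a token whose ratio rises to 1.5 on a positive advantage is capped at 1.2; the looser upper bound lets it reach 1.28, so low-probability tokens can gain more probability mass. That is the usual argument for why this kind of clipping helps keep entropy from collapsing.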

To my fellow researchers: if you're short on ideas, read this paper; there are a lot of open problems and experiments the authors are urging others to explore.

(P.S. I love the comparison table.)

@DisgToast @Dileepchagam you're not gonna play the game anymore, so why are you watching? It's like stalking your ex.

Rough summary of EDG's statements about S1Mon:

- S1Mon would barely communicate during scrims but would change completely once he was streaming. KK said: "If you communicated in scrims even 1/5th as much as you do during streams, we wouldn't want to drop you."

🥛@wanshunzhi

smoggy, nobody and chichoo have all made statements on weibo (all posted at the exact same time). i'll try to translate, but please bear with me, these are long ass messages