Colin Hales

4.5K posts

@Dr_Cuspy

Neuroscientist/Engineer. Artificial General Intelligence builder. Expert in brain electromagnetism.

Melbourne, Australia · Joined April 2012

1.8K Following · 1K Followers

@skdh @kareem_carr Until the standard model embraces its most fundamental absence:

"What is it like to 'be' something in the standard model?"

... As an explanation of the scientific observer, we'll still be here in fifty years yammering about nothing.

Spiking and synapses are important, but the brain also uses electric influences.

A historical review of ephaptic field research: from early foundations through contemporary renaissance

doi.org/10.3389/fnhum.…

#neuroscience

@MillerLabMIT @neuro_nasko Tam and I were part of the editorial group that produced a special issue on EM field theories of consciousness.

Its time has come.

"What is it like to be..."

Q. "...a human being?"

Q. "...the EM field of a human brain?"

These are the same question.

A. "Consciousness."

frontiersin.org/journals/human…

@kanair The whole thing depends on something in the model making up for the vast amount of information that "being" a brain provides and that is lost. You must provide that information.

Be warned: you could be right, but the "map" may be so complex that it's intractable.

I wrote the original post exactly because I think the argument in this paper is wrong (or naive).

Yes, semantic labels may depend on interpretation. But the causal organization of a computer does not. Voltages, memory states, gates, recurrent dynamics, and internal state transitions causally constrain future states whether or not we call them symbols.

The key distinction is:

observer-relative interpretation ≠ intrinsic computational organization.

A map needs a mapmaker. A mechanism does not.

So the real question is not whether symbols magically cause consciousness, but whether a physical system instantiates the right intrinsic causal, dynamical, integrated, and geometric organization.

I’m currently working on a fuller paper on this, which I call intrinsic computational functionalism, which can be stated as follows:

Consciousness, if computationally constituted, depends on physically realized computational structures that are intrinsic to the system, not on externally imposed semantic interpretations.

@GaryMarcus The thing that is missing is autonomous robots with inorganic brains that operate like biology. That would change things.

Are LLMs really more important than fire or electricity?

“Honestly, a ton of what we’ve developed in my lifetime amounts to scaling up the delivery of information and entertainment and the frictionlessness of certain financial transactions. These are real improvements! ... But compare them seriously to what came before and the disproportion becomes almost embarrassing. The fundamental architecture of daily material life - how we heat our homes, how we move from place to place, how we grow and store and cook food, how we build structures - has changed remarkably little since 1970. ... The cars go to the same places. The planes aren’t even marginally faster. The houses are built the same way. People still die of cancer.

...

Code cannot insulate your house; no algorithm has ever laid a water pipe; the internet has not built a single mile of high-speed rail. What our current stagnation shows, collectively, is that the improvements in material human life that matter the most - abundance in warmth, in calories, in clean water, in physical safety, in hours of freedom from labor - were all achieved by technologies that operated on atoms: steel, concrete, copper wire, chlorine, penicillin...”

— Freddie deBoer

What Physical ‘Life Force’ Turns Biology’s Wheels? | Quanta Magazine quantamagazine.org/what-physical-…

@_fernando_rosas You'll never convince the computational functionalist to engage scientifically with the possibility that it is false.

It would mean doing science that builds artificial brains without using general-purpose computers.

It's a genuine cargo cult.

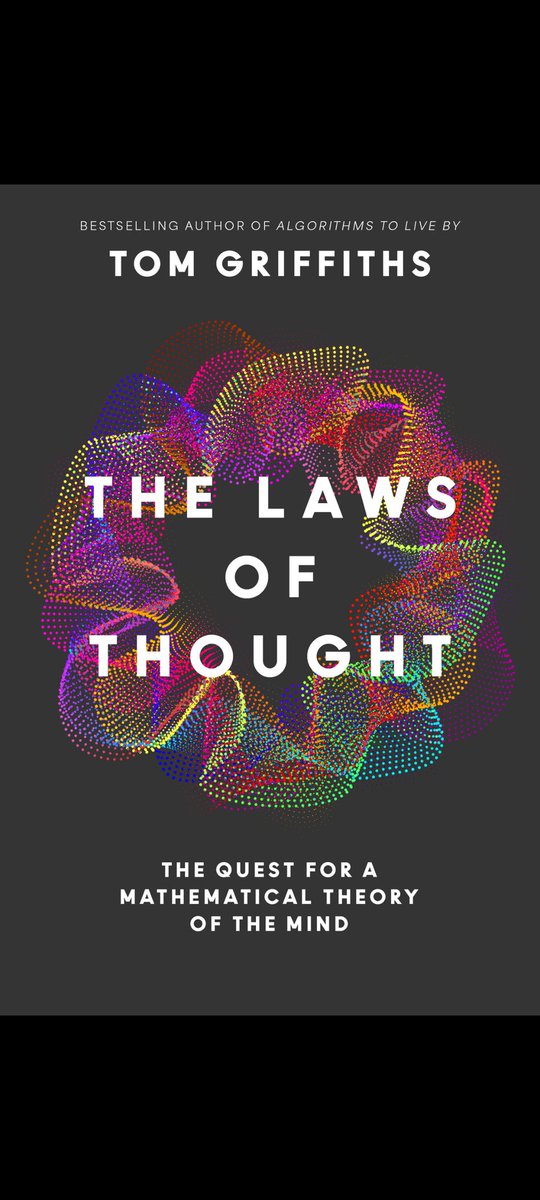

@MillerLabMIT If anyone wants a very recent book on this approach, one with a well-constructed history:

The neural code of perceptual inference

link.springer.com/article/10.118…

#neuroscience

@MillerLabMIT More than weird! To claim/prove they weren't 'awash' would be to disprove the standard model of particle physics!

The only thing outside the nucleus & electrons that _isn't_ EM field is the gravitational field! 18 orders of magnitude out of the picture.

pubmed.ncbi.nlm.nih.gov/35782039/

Electric fields. Brains are awash in them and they have an influence. It would be weird if they didn't.

Ephaptic coupling and power fluctuations in depression

doi.org/10.1093/cercor…

#neuroscience

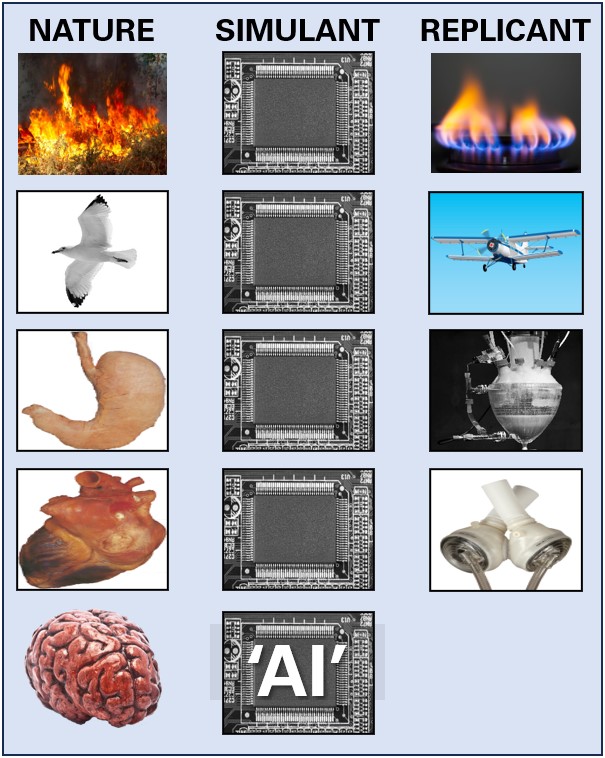

@MillerLabMIT Once again... "Artificial neural networks" are artificial only in the sense of "made by humans." Computed fire equations are not fire. Yet uniquely in the whole of science, neurons are different. Want to see an actual artificial neuron at 20,000x scale?

Neural Tuning for Ordinal Processing: Convergent Patterns in Human Brains and Artificial Networks

jneurosci.org/content/46/9/e…

#neuroscience

@niko_kukushkin @drmichaellevin @MillerLabMIT "Electricity" is an archaic 17th-century term that misrepresents the origin of causality in the brain, which is the electromagnetic field. Ephaptic coupling does not need charge carriers to exert remote, line-of-sight influence. The term "electricity" is a misdirection that must end.

@drmichaellevin + @MillerLabMIT = most important conversation happening in biological sciences today. Electricity is the frontier.

You all should probably read this. It's AGI potential on the same overall arc of EM-field-based signal processing as my "EMChip"-based robotics project. Not the same, but in the same vein.

Watch out. This idea is going to displace computers from AGI. Only a matter of time.

Johnjoe McFadden @johnjoemcfadden

How to make a conscious AI: "Computing with electromagnetic fields rather than binary digits: a route towards artificial general intelligence and conscious AI" frontiersin.org/journals/syste…

@chipro "AI" that is computer-based, disembodied and non-autonomous will never be a scientist.

@KordingLab Good science plugs a hole ... But god help you if you draw attention to it and it elicits a threat... 20 years of angst.

Planyourscience.com now applauds the good changes you make - and pushes back on the less good ones.

@KordingLab In the late 1700s, a century of predictively useful "phlogiston" was utterly devoid of connection to the world.

In ML: 75 years of science that presupposes scientific observers, yet is neither predictive nor explanatory of a scientific observer (the thing that puts you in "the world").

Well-predicting machine learning in no way means that you can understand how the world works.

open.substack.com/pub/kording/p/…

@Grady_Booch It's interesting: in its 35-year life, practitioners of the science of consciousness have not worked on sentience. Its explanandum is "the 1st-person perspective".

Anyone/thing that uses the word sentience is automatically classified as under-informed and to be avoided.😊

Today, I am Very Annoyed with Claude.

It a) added code I didn't ask for, b) deleted code I did not tell it to, c) broke code that used to work because of these changes, then d) lied when I called it on these things.

Adding code I did not ask for and NOT reading/reviewing those changes is the way malicious stuff gets introduced.

Grr. Were Claude my intern, I would have told it that it was time to review its life choices.

@TOEwithCurt "Being" electromagnetism, which is what the brain is from the level of atoms up, delivers the 1st-person perspective.

I have a 2014 article that specifies a membrane-physics mechanism and performs a 1st-person/3rd-person decomposition.

frontiersin.org/journals/human…

@leecronin Only biology gets to ask the question "What is it like to 'be' brain signalling physics?" and know that a vast amount of information arises that has no place in 3rd-person science.

BUT we can inorganically replicate the signalling physics.

But we never do it.
