@jack_peaches Do let me know of any feedback you may have on making it better for them.

Kording Lab 🦖

30.6K posts

@KordingLab

Konrad Kording, @Penn Prof, deep learning, brains, #causality, rigor, https://t.co/tTJW05RRfa, https://t.co/qf7ZHxjaK1, Transdisciplinary optimist, Dad, Loves outdoors, 🦖

We have difficult news to share. Neuromatch's Office of Foreign Assets Control (OFAC) license renewal, which has allowed us to include participants residing in Iran since 2020, has been denied by the United States Government.

RIP data analysis and modelling jobs. Don't take my word for it. Take Nature's.

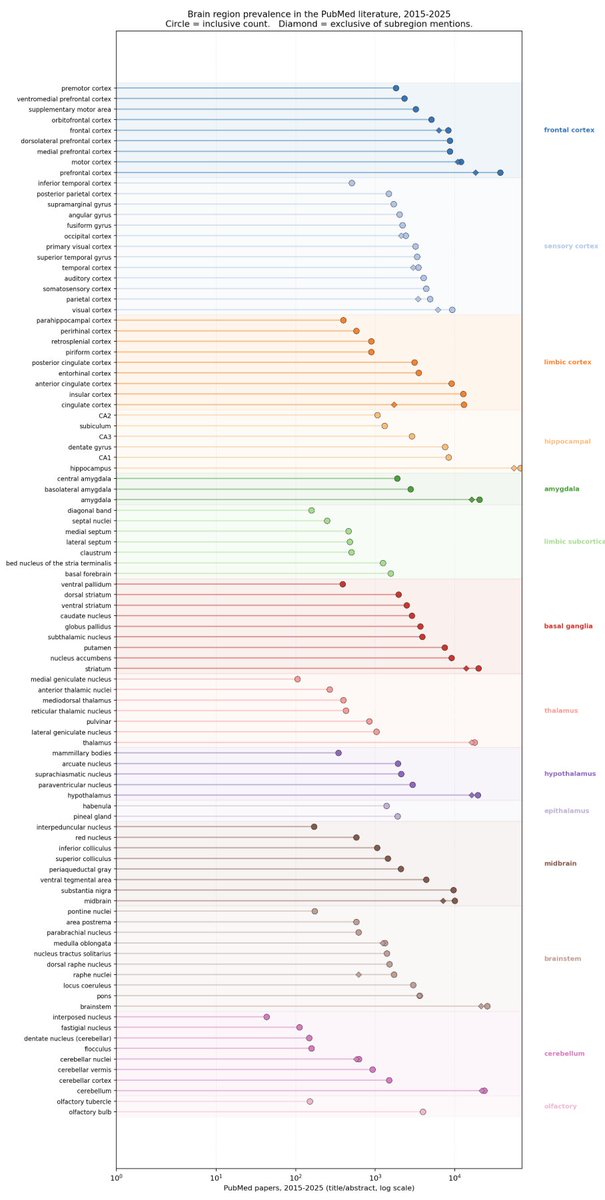

Here are the most popular neuromodulators in the published literature (thanks to Jay Henning for the request): github.com/koerding/neu...