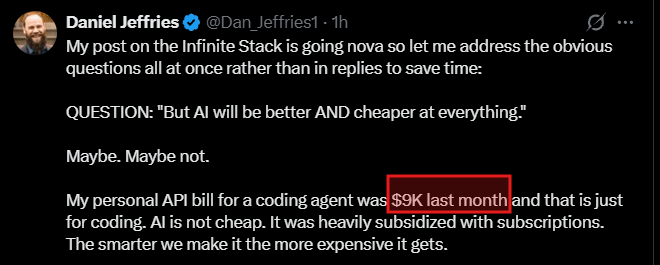

Daniel Jeffries (@Dan_Jeffries1)

My post on the Infinite Stack is going nova so let me address the obvious questions all at once rather than in replies to save time:

QUESTION: "But AI will be better AND cheaper at everything."

Maybe. Maybe not.

My personal API bill for a coding agent was $9K last month and that is just for coding. AI is not cheap. It was heavily subsidized with subscriptions. The smarter we make it the more expensive it gets.

It takes people, power, datacenters, and engineers to build and run all this intelligence and none of it is cheap.

But even if you grant the strongest version of this claim, it doesn't lead where you think it leads.

Comparative advantage: if AI is 1,000x better at drug discovery and 2x better at comforting a grieving widow, the efficient allocation is obvious.

AI does the drug discovery. The human does the comforting.

Every hour the AI spends on low-advantage tasks is an hour it's not spending where its advantage is greatest. The math pushes AI toward its highest-value work and pushes humans toward everything else, and "everything else" is a large and constantly growing category.
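To make the arithmetic concrete, here is a minimal sketch of the opportunity-cost math behind that claim. The 1,000x and 2x multipliers come from the example above; the human baseline of 1 unit per hour for each task and all names in the code are assumptions for illustration only.

```python
from fractions import Fraction

# Hypothetical output per hour. The human baseline of 1 unit per task is an
# assumption; the 1,000x and 2x multipliers are the ones from the example.
OUTPUT_PER_HOUR = {
    "human": {"drug_discovery": Fraction(1), "comforting": Fraction(1)},
    "ai": {"drug_discovery": Fraction(1000), "comforting": Fraction(2)},
}


def opportunity_cost(worker, task, other_task):
    """Units of other_task a worker gives up to produce one unit of task."""
    rates = OUTPUT_PER_HOUR[worker]
    return rates[other_task] / rates[task]


def comparative_advantage(task, other_task):
    """Worker with the lowest opportunity cost for task."""
    return min(
        OUTPUT_PER_HOUR,
        key=lambda worker: opportunity_cost(worker, task, other_task),
    )


if __name__ == "__main__":
    for worker in OUTPUT_PER_HOUR:
        cost = opportunity_cost(worker, "comforting", "drug_discovery")
        print(f"{worker}: one unit of comforting costs {cost} units of drug discovery")
    print("comforting goes to:", comparative_advantage("comforting", "drug_discovery"))
    print("drug discovery goes to:", comparative_advantage("drug_discovery", "comforting"))
```

Under these assumed numbers, the AI is better at both tasks in absolute terms, yet each unit of comforting it produces costs 500 units of drug discovery, while the same unit costs the human only 1. That gap in opportunity cost, not absolute skill, is what pushes the comforting to the human.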

Compute costs money. Energy costs money. Deployment takes time. Diffusion of innovation follows a well-known curve.

Physics doesn't hand out free lunches, not even to neural networks.

As long as AI faces any constraints in the real world, and it always will, comparative advantage holds.

QUESTION: "But why would anyone hire a human when a machine can do it?"

Because we want humans for a lot of things.

Craft beer is a $29 billion market in the U.S. despite mass-produced beer being cheaper and more consistent.

Live concert revenue is growing faster than streaming, $23.6 billion and climbing at 8.8%, because when the recorded version became free, the human live experience became more premium, not less.

Etsy thrives because people pay more for human-made. A machine can weave a rug faster and cheaper but I may still want a bespoke, small-production-line rug that feels personal to me.

The robot will clean the grill and scrub the toilet at the restaurant. We want a human face to greet us at the door. The chef's passion is on the plate, not because the machine can't cook, but because the human origin is part of the very product itself.

We want our stories told by people because we relate to people. AI may assist with writing or proofreading or fleshing out the story but we likely don't want stories that are pure algorithm (though stories are algorithms to writers, just advanced ones, but I digress).

Authenticity and provenance are not going away. They're becoming luxury goods.

Sometimes we want a human doing the job for many reasons. Sometimes it will be a human and machine team or sometimes just a machine, but it does not mean machines just do it all because it's cheaper/faster.

Sometimes the very product and service itself is the opposite of cheaper or faster and that is the essence of what is being sold.

RESPONSE: "But the transition is painful!"

Yes. It can be. Sometimes, for sure.

I won't bullshit anyone here.

When a new technology wave hits, real people can lose real livelihoods.

We have no more whale hunters digging the white gunk out of whales' heads to make candles. That was an entire, real industry. You may say, well, now it is safer, cheaper and better that we don't have to slaughter the leviathan to light our houses, and you'd be right. But people made a living doing that, and that living was gone once the new tech wave hit.

Nobody is denying that. What I am denying is that all jobs just go poof overnight, that it's some kind of snap-your-fingers extinction event.

Lamp lighters are gone too. Our cities are safer and smell better because we're not burning gas constantly, but those jobs went away. They did not go away overnight though. The transition to electric was slow, iterative, and took decades. The phasing out of that old profession was gradual. It always is.

Is AI faster than the electric light transition? Probably. That's an argument for better safety nets and smarter policy. It's not an argument that civilization is ending.

The macro story of progress is no comfort to someone living through the micro story of displacement. I take that seriously and any optimist/futurist who doesn't is a fraud.

The transition is the hard part. It always is. But the transition is not the destination, and confusing the two is how you get bad policy.

But the apocalypse story is a Trojan horse for control freaks, authoritarians and national socialists. They want you afraid so they can take more of your rights and take more control.

Fear is the enemy and fear is running rampant right now.

The only thing we have to fear is fear itself.

RESPONSE: "What about superintelligence? Won't it just replace us entirely?"

No and here's why.

Humans are already a distributed intelligence. We (Sapiens) are smarter in the aggregate than at the individual level.

Neanderthals were faster, stronger, had bigger brains, tougher bones, and more muscle. They were the ultimate survivalists. Picture the ultimate survivalist and you would picture a Neanderthal.

They were also more isolationist. Seems like the better survival pattern, right?

But evolution already settled that debate.

A scaling, collaborating intelligence beats a stronger, more isolated one at solving complex problems.

That's us. That's why we're here and they're not.

So it stands to reason that superintelligence is likely to be more collaborative, not less. More aligned, more willing to work with us and expand the capabilities of humans and other AIs, because that's how you solve more problems higher up the stack.

Collaboration is better. Isolationism and seeing the Universe as a zero-sum game is not intelligent. It's stupid.

And the stack is infinite.

A superintelligence that wipes out its collaborators is an evolutionary dead end. It's not superintelligent. It's super idiotic.

A superintelligence that amplifies collaborative networks is the one that wins. Evolution already ran this experiment.

We're the result.

RESPONSE: "So there are no real problems?"

Of course there are.

They're just not the ones the doomers scream about.

AI-powered surveillance scaled to see every piece of personal information, that's real.

Authoritarian governments weaponizing pattern recognition to control their citizens, that's real.

Autonomous weapons that delegate lethal decisions to algorithms that optimize without judgment, that's real.

These are practical, institutional, policy-level problems that deserve serious attention and serious solutions, and they are getting zero attention while we focus on KYCing the entire population to make sure no kids talk to chatbots.

Our politicians are not solving real problems. They are solving imaginary ones and creating more problems for us if they succeed.

What superintelligence will not be is some paperclip maximizer launching nukes, and humanity is not going extinct because someone trained a model on too many FLOPs.

The real threats are mundane. They are boring. They are the same problems we always have.

The real threats are fixable.

But only if we're focused on the right problems instead of chasing sci-fi phantoms.

RESPONSE: "Why does this matter?"

Because the starting point determines everything downstream.

If you begin from the premise that work is finite, that there's a fixed amount of stuff to be done and machines are eating through it, then every conclusion you draw from that premise will be wrong. Dead wrong.

Any policy you make will be wrong.

You'll predict mass unemployment. You'll demand UBI as the only response. You'll want to ban or throttle AI. You'll see a zero-sum world where every machine gain is a human loss.

And you'll build policy around scarcity when the reality is abundance.

The error compounds and radiates outward, corrupting every inference, every prediction, every policy recommendation.

It's like building a skyscraper on a foundation that's three degrees off true. At the ground floor, you can barely tell. By the fiftieth floor, it's about to collapse.

Get the foundation right and everything else follows.

The problems are infinite.

The work is infinite.

The stack never stops growing.

And every time you make one layer cheaper, the layer above it grows in complexity.

Complexity breeds more complexity and new challenges and more varied jobs to solve those challenges.

Those challenges are, in some instances, better solved by a machine, in others by a human, in others by a human + machine combo.

But in no way does it ever mean machines do everything and we are done.

The doomers aren't stupid. Many of them are very bright. But they are deeply misguided and their solutions are worse than the problem they are supposedly fixing.

They're starting from the wrong premise.

And from a wrong premise, you can reason perfectly and still end up perfectly wrong.