Epsilon Guanlin Lee

@Epsilon_Lee

PhD, MLer, CLer (NLPer), ML Engineer at https://t.co/gX6Lem59Co; I believe in interpretability research for AI/ML/NNs

Can LLMs adapt continually without losing base skills? Fast-Slow Training (FST) pairs "slow" weights with "fast" context.

FST vs. RL:
• 3x more sample-efficient
• Higher performance ceiling
• Less KL drift (better plasticity)
• Continual learning: succeeds where RL stalls

@AnthropicAI is publicizing its Natural Language Autoencoders work and reporting that they will incorporate it into their alignment evals. But after reading into the details of the technical report, this seems terrifying. Based on their own methodology and results, it seems like using NLAs on consequential tasks is a good way to get burned.

First, optimizing a natural language encoder and decoder jointly for reconstruction doesn't do anything to ensure that the intermediate text between them has the same meaning to the decoder as its meaning in English. The fact that they used a KL divergence penalty (see first screenshot) to make the English intermediates readable is strong evidence that NLAs are NOT good at faithfully representing the model's thoughts. They are literally putting optimization pressure on latent text for the sole purpose of oversight. Wasn't that a faux pas that Anthropic's own researchers have been warning us about in the past year? Won't using this type of method *actively select* for simplistic and confabulatory explanations?

Second, Anthropic gave a positive spin to a pretty damning result (see second screenshot). After finding that NLAs produced, in some cases, false but contextually-related information >=50% of the time, they still spun it as a positive, saying "However, most claims are at least somewhat related to the input context." But shouldn't this terrify them? Doesn't this suggest that NLAs, by default, should be expected to produce plausible yet misleading explanations? Bear in mind that this experiment was a toy one in which the ground truth could reasonably be inferred. Given this, shouldn't we expect NLAs to be even more unreliable in cases that matter when the ground truth isn't so simple?

It seems dishonest and safety-washy to me for Anthropic to structure its media strategy around cherry-picked demos seeming to illustrate successes while burying this result deep in the paper.

MoEs are everywhere in frontier models, and they are deployed as monolithic systems. But many applications only need a narrow slice of capabilities, e.g., math, code, biomedical, etc. So what if "modularity" is actually the missing opportunity for MoEs? Today, we're releasing EMO: an end-to-end pretrained MoE where modularity emerges naturally, enabling selective use of experts!

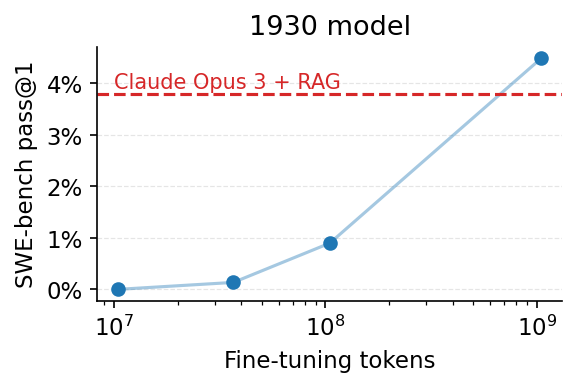

We fine-tuned Alec Radford’s 1930 vintage LLM to solve SWE-bench issues. After just ‼️250‼️ training examples, the model solves its first issue, a simple patch to the xarray library. 🧵👇