Pinned Tweet

Nick Jiang

280 posts

Nick Jiang retweeted

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War.

anthropic.com/news/statement…

Nick Jiang retweeted

New paper, w/@AlecRad

Models acquire a lot of capabilities during pretraining.

We show that we can precisely shape what they learn simply by filtering their training data at the token level.

🌕 @gru_space is building durable space habitats so humans can one day live on the Moon and Mars.

Its first missions will mine lunar regolith to construct a long-term pressurized habitat on the Moon for commercial space tourism — a hotel on the Moon.

Congrats on the launch @skyler_chan_!

ycombinator.com/launches/P9g-g…

Nick Jiang retweeted

I'm really proud of what our team at @TransluceAI has accomplished in the last year! Take a moment to read our end-of-year post to learn what we're up to, and please reach out if you're interested in supporting us!

Transluce @TransluceAI

Transluce is running our end-of-year fundraiser for 2025. This is our first public fundraiser since launching late last year.

Nick Jiang retweeted

I'm really excited about this paper! It's an example of data-centric interpretability, which IMO is a really impactful new area: models have tons of relevant data, what can we learn by analysing it?

Turns out there's a lot you can do if you're creative! e.g. SAEs on closed models.

Nick Jiang @nickhjiang

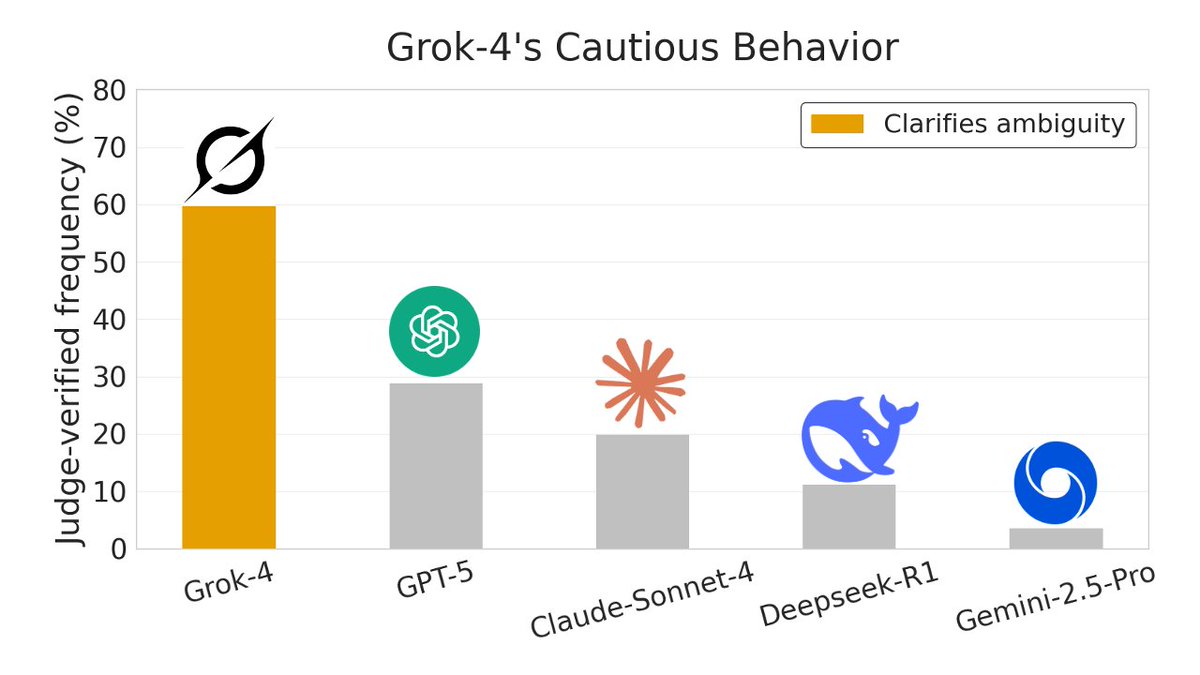

New work! What if we used sparse autoencoders to analyze data, not models—where SAE latents act as a large set of data labels 🏷️? We find that SAEs beat baselines on 4 data analysis tasks and uncover surprising, qualitative insights about models (e.g. Grok-4, OpenAI) from data.
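A minimal sketch of the "SAE latents as data labels" idea, assuming each document has already been encoded into a vector of SAE latent activations. The small hand-written NumPy arrays are placeholders for real activations, and `latent_frequencies` and `diff_latents` are hypothetical helper names, not the paper's code. Here a latent counts as a label on a document if its activation is above a threshold; comparing label frequencies between two datasets (say, responses from two different models) surfaces the latents that most distinguish them.

```python
import numpy as np

def latent_frequencies(acts: np.ndarray, threshold: float = 0.0) -> np.ndarray:
    """Fraction of documents on which each latent fires (acts: docs x latents)."""
    # Treat each latent as a binary label: present if activation > threshold.
    return (acts > threshold).mean(axis=0)

def diff_latents(acts_a: np.ndarray, acts_b: np.ndarray, top_k: int = 3):
    """Rank latents by how much more often they fire in dataset A than in B."""
    diff = latent_frequencies(acts_a) - latent_frequencies(acts_b)
    # Stable sort so ties keep latent-index order.
    order = np.argsort(-diff, kind="stable")[:top_k]
    return [(int(i), float(diff[i])) for i in order]

# Toy activations: 4 documents x 5 latents per dataset (placeholder values).
acts_a = np.array([
    [0.0, 1.2, 0.0, 0.3, 0.0],
    [0.0, 0.9, 0.0, 0.0, 0.0],
    [0.5, 1.1, 0.0, 0.2, 0.0],
    [0.0, 0.7, 0.0, 0.0, 0.4],
])
acts_b = np.array([
    [0.0, 0.0, 0.8, 0.3, 0.0],
    [0.6, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.9, 0.1, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.4],
])

# Latent 1 fires on every document in A and none in B, so it ranks first.
print(diff_latents(acts_a, acts_b, top_k=2))
```

Because the labels are just thresholded latents, no label set has to be defined in advance; which latents matter falls out of the comparison.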

@TheGrizztronic No, the embeddings are reusable. You can view the reader model + SAE as just a bigger embedding model.
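To illustrate why the embeddings are reusable, here is a sketch assuming the common SAE encoder form `ReLU((x - b_dec) @ W_enc.T + b_enc)` applied to one pooled reader-model hidden state `x` per document. This is an assumption for illustration, not necessarily the exact setup in the paper. The weights and hidden states are random placeholders, and `encode_sae` and `docs_with_latent` are hypothetical names. The expensive pass runs once; later queries only read the cached latent matrix.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, n_latents, n_docs = 16, 64, 10

# Placeholder SAE parameters (a trained SAE would supply these).
W_enc = rng.normal(size=(n_latents, d_model))
b_enc = rng.normal(size=n_latents)
b_dec = rng.normal(size=d_model)

def encode_sae(hidden: np.ndarray) -> np.ndarray:
    """Map hidden states (docs x d_model) to non-negative SAE latents (docs x n_latents)."""
    return np.maximum(0.0, (hidden - b_dec) @ W_enc.T + b_enc)

# Expensive step, done once: reader model + SAE over every document.
# Random vectors stand in for real reader-model hidden states.
hidden_states = rng.normal(size=(n_docs, d_model))
doc_latents = encode_sae(hidden_states)  # cache and reuse this matrix

def docs_with_latent(latents: np.ndarray, k: int) -> np.ndarray:
    """Cheap query: indices of documents where latent k is active (no re-encoding)."""
    return np.flatnonzero(latents[:, k] > 0)

for k in (0, 1, 2):
    print(k, docs_with_latent(doc_latents, k))
```

In this framing the reader model plus SAE act like a single wide, sparse embedding model: each new query touches only `doc_latents`, so documents never go back through the reader.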

@nickhjiang Does this mean the docs need to be passed back through the reader for each query?

Nick Jiang retweeted

Cool! "What if we used sparse autoencoders to analyze data, not models?"

We also have a paper using SAEs to analyze data earlier this year: arxiv.org/abs/2502.14050

This shows interpretability is useful for downstream tasks.

Nick Jiang @nickhjiang

New work! What if we used sparse autoencoders to analyze data, not models—where SAE latents act as a large set of data labels 🏷️? We find that SAEs beat baselines on 4 data analysis tasks and uncover surprising, qualitative insights about models (e.g. Grok-4, OpenAI) from data.

@nickhjiang This is beautiful! Could a variation of this be used to assess and understand task performance across models?

@floringham We sampled 1,000 prompts from Chatbot Arena when generating the responses, so it probably wouldn't change the results much. I think the larger concern is that Chatbot Arena isn't representative of real user prompts (unfortunately, we don't have access to those).

@nickhjiang Interesting work! In Case Study 1, if you try slightly different wordings of the prompt, does the model's behaviour change much?

@dosdesvios You could, but LDA and topic modeling tend to give broad semantic topics. SAE latents tend to be more granular and property-like (there are also more of them). We compared SAEs with CTMs in our correlations task and also found that CTMs were noisier.

@nickhjiang Thx for ur answer! For that purpose, I could use LDA or any other topic modeling technique, can't I?

@dosdesvios Great question! The advantage of these labels is that you don't need to pre-define them, meaning that you can find insights about your data without any priors.

@nickhjiang Cool work! One question: why would SAE labels be more interesting than any other type of label I could come up with?

Nick Jiang retweeted

🧵Tired of scrolling through your horribly long model traces in VSCode to figure out why your model failed? We made StringSight to fix this: an automated pipeline for analyzing your model outputs at scale.

➡️Demo: stringsight.com

➡️Blog: blog.stringsight.com

This work was done with @lilysun004 (co-first), Lewis Smith, and @NeelNanda5 as part of MATS.

Project website: interp-embed.com

Paper: arxiv.org/abs/2512.10092

Code: github.com/nickjiang2378/…
