
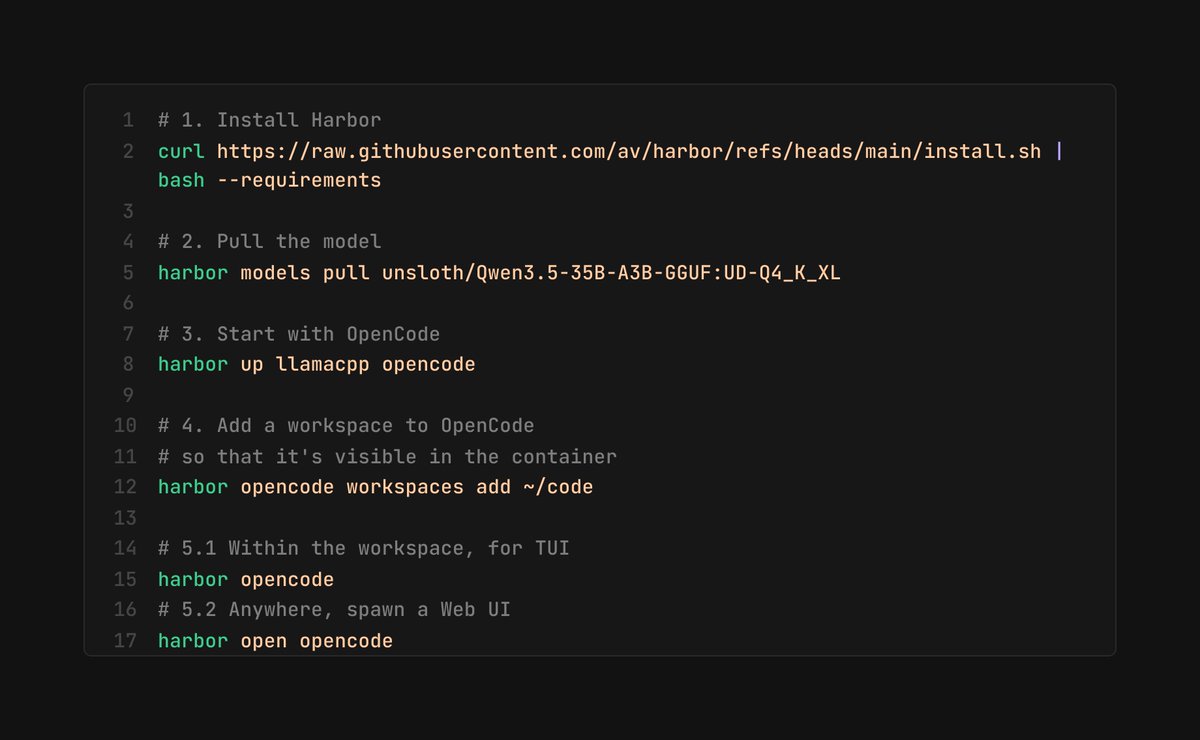

You don't even need Kimi 2.5 for a decent local LLM setup.

- llama.cpp

- Unsloth's Qwen 3.5 35B A3B with UD-Q4_K_XL quants

- OpenCode

- av/harbor

It'll take a while to download/install, but otherwise it's something that mid-range hardware (>32GB RAM, ~8GB VRAM) can run today.
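Once llama.cpp's `llama-server` is up with the model loaded, anything that speaks the OpenAI chat API can talk to it. A minimal sketch of that, assuming the server is listening on its default `http://localhost:8080` (the `local-model` label and the helper names are made up for illustration, not part of any of the tools above):

```python
import json
import urllib.request

# Assumption: llama-server is running locally on its default port (8080)
# and exposes its OpenAI-compatible API under /v1.
BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt, model="local-model", base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request.

    `model` is a hypothetical label used here only for illustration.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt):
    """Send the request to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running, `print(ask("Say hello in one sentence."))` should print the model's reply. Pointing a tool at the same base URL is roughly what a coding agent does when it is configured against a local endpoint.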

Fynn @fynnso

was messing with the OpenAI base URL in Cursor and caught this

accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast

so composer 2 is just Kimi K2.5 with RL

at least rename the model ID
