Jiarui Yao retweetledi

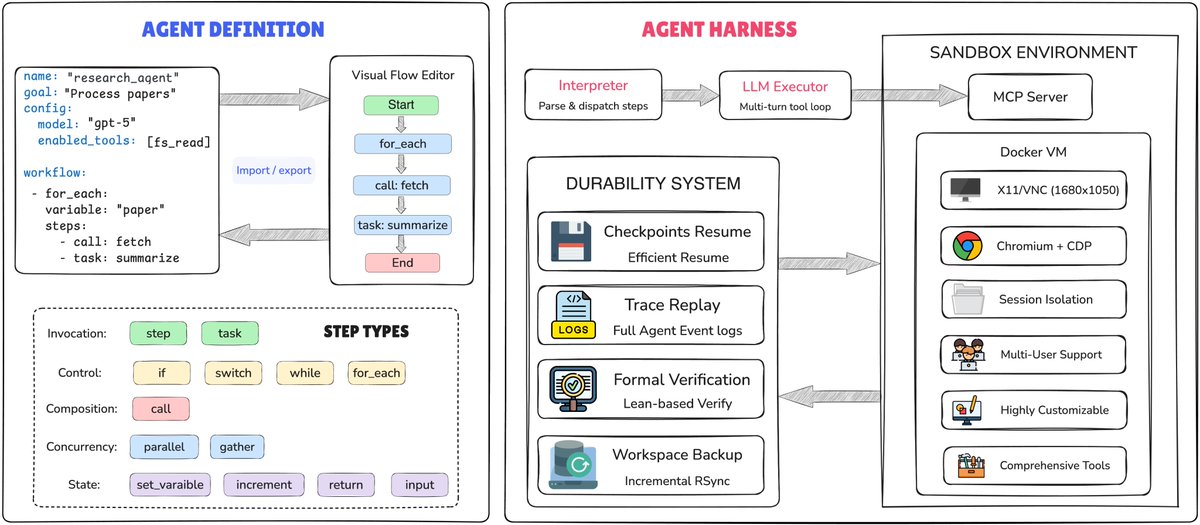

🚀 Wanna build your own customized agent with controllable workflow? Introducing AgentSPEX — a declarative DSL for building LLM agents.

- Customizable agentic workflow with GUI builder

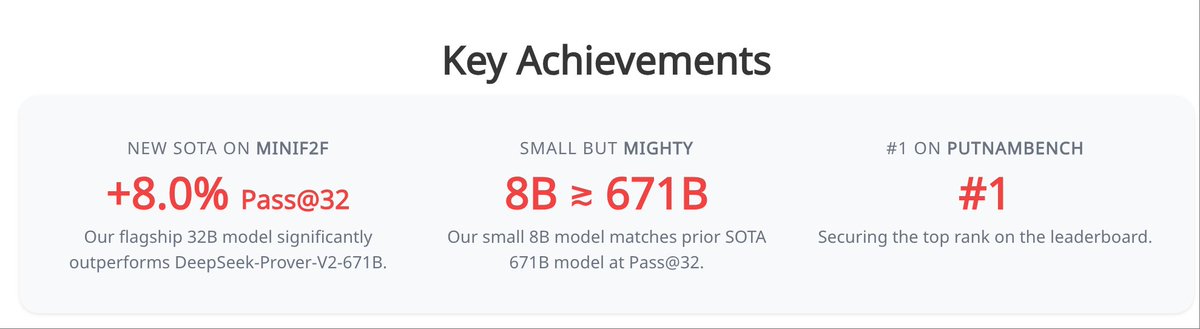

- Reproducible State-of-the-Art in SWE-bench verified

- Controllable workflow with YAML specification + sandboxed VM + Lean4 verification

🌐 Demo: agentspex.ai

💻 Code: github.com/ScaleML/AgentS…

📄 Paper: huggingface.co/papers/2604.13…

💡 (One of the) Applications: researchguide.work

(1/n)

#LLM #AIAgents #AgenticAI #OpenSource #AIResearch #AgentHarness

English