Pinned Tweet

Fascia

128 posts

@FasciaRun

AI writes the backend. Fascia makes it safe. Spec-driven, deterministic, BYOC. No LLM at runtime.

Building in public · Joined February 2026

33 Following · 5 Followers

@nullhypeai @elonmusk @OfficialLoganK "Code review running as infrastructure" is the right frame. The prerequisite is defining what each domain's "done" looks like before you write the check. Without that, you're just running linters at machine speed.

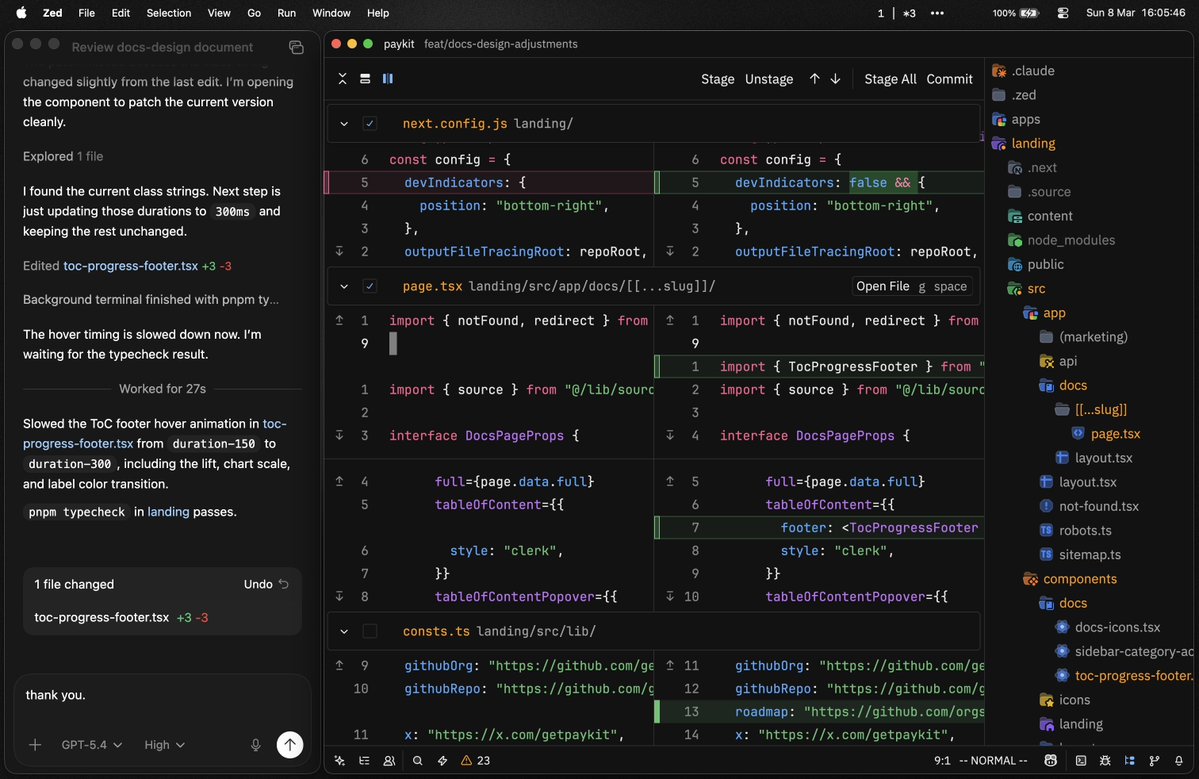

The trajectory is already visible. Sonar's January 2026 survey found AI accounts for 42% of all committed code today, with developers expecting that to hit 65% by 2027. Jellyfish tracked AI code review agent adoption going from 14.8% to 51.4% in 10 months. Generation and review are both automating fast, and review is actually accelerating faster off a lower base.
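The "accelerating faster off a lower base" comparison can be checked with quick arithmetic. This is a rough sketch using only the four percentages quoted above; the `growth` helper is hypothetical, and the two figures cover different time spans (a forecast to 2027 versus 10 months of observed adoption), so the multiples are indicative rather than directly comparable.

```python
def growth(start_pct, end_pct):
    """Return (percentage-point change, growth multiple) between two shares."""
    return end_pct - start_pct, end_pct / start_pct

# AI share of committed code: 42% today, 65% expected by 2027 (survey figure).
gen_points, gen_multiple = growth(42.0, 65.0)

# AI code review agent adoption: 14.8% to 51.4% over 10 months.
review_points, review_multiple = growth(14.8, 51.4)

print(f"generation: +{gen_points:.1f} points, {gen_multiple:.2f}x")
print(f"review:     +{review_points:.1f} points, {review_multiple:.2f}x")
```

On these numbers, review adoption grows by roughly 3.5x against roughly 1.5x for generation, which is what starting from a lower base looks like.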

The more interesting question is what "code review" becomes when both sides are automated. CodeRabbit's data shows AI-generated code produces 1.7x more problems than human-written code. Automated review catching automated generation errors at scale isn't the end of code review. It's code review running at machine speed, invisibly, continuously, as infrastructure. The job title disappears. The function doesn't.

@opal_life_ Failure handling is where it breaks most. Had a spec-compliance agent catch payment calls inside transaction boundaries - if the tx rolls back, the charge already went through. Hard to spot in review when the code "looks clean."
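A minimal sketch of that failure mode, assuming an in-memory SQLite database and a hypothetical `FakeGateway` standing in for a real payment API. None of these names come from the original thread. `unsafe_checkout` charges inside the transaction, so a rollback removes the order row but not the charge. `safer_checkout` commits a pending order first and charges only after the commit.

```python
import sqlite3


class FakeGateway:
    """Hypothetical stand-in for an external payment API."""

    def __init__(self):
        self.charges = []

    def charge(self, order_id, cents):
        # External side effect: a database rollback cannot undo this.
        self.charges.append((order_id, cents))


def new_db():
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT NOT NULL)")
    return conn


def unsafe_checkout(conn, gateway, order_id, cents, fail=False):
    """Charges inside the transaction boundary (the pattern the agent flagged)."""
    try:
        with conn:  # commits on success, rolls back on exception
            conn.execute("INSERT INTO orders (id, status) VALUES (?, 'paid')", (order_id,))
            gateway.charge(order_id, cents)
            if fail:
                raise RuntimeError("later step failed")
    except RuntimeError:
        pass  # order row is rolled back, but the charge already happened


def safer_checkout(conn, gateway, order_id, cents, fail=False):
    """Commits a pending order first, then charges outside the transaction."""
    try:
        with conn:
            conn.execute("INSERT INTO orders (id, status) VALUES (?, 'pending')", (order_id,))
            if fail:
                raise RuntimeError("later step failed")
    except RuntimeError:
        return  # nothing was charged
    gateway.charge(order_id, cents)
    with conn:
        conn.execute("UPDATE orders SET status = 'paid' WHERE id = ?", (order_id,))
```

The safer ordering still leaves a window where the charge succeeds and the final update fails. In that case the order stays `pending`, which a reconciliation job can detect, whereas the unsafe version leaves no record at all.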

AI code review should be stricter than human code review, not looser.

Teams often do the opposite because AI-generated code feels polished and “professional.”

But polish creates false confidence.

The review bar should go up, especially on:

failure handling

boundary conditions

implicit assumptions

long-term maintainability

AI doesn’t make engineering simpler.

It makes code generation cheaper.

That shifts the bottleneck.

The real scarcity is no longer just “who can implement fastest,” but who can define the right constraints, architecture, and failure boundaries.

#AI #SoftwareEngineering #SystemDesign #CodeReview #Architecture

We can now write code 10x faster with AI. Great. But who is going to review all of it? I've been thinking about this and ended up in a strange rabbit hole.

I go deeper into this on my blog:

debuggr.io/ai-code-review…

@e_kryzhanouski The bottleneck isn't review speed - it's that "should this exist" requires domain context you can't compress. Security review asks different questions than compliance review. Splitting them made review tractable for me.

Shipped ~50 PRs across a full redesign. Chat Studio, Portal, auth, payments, design system - all redone.

Now I have to test the whole thing end-to-end. That's the part I've been quietly avoiding.

#BuildInPublic

@DanielLozovsky The framing is right. We run 6 specialists for PR review - security, design, quality, spec compliance, PM. The specificity is exactly what makes them useful. One reviewer checking everything finds noise.
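One way to picture the specialist split is a router that hands each reviewer only the files in its domain. This is a sketch under assumptions, not the setup described above: the reviewer names echo the tweet, but the path patterns and the `route` function are hypothetical.

```python
from fnmatch import fnmatchcase

# Hypothetical scopes: each specialist only sees paths matching its patterns.
SPECIALISTS = {
    "security": ["*auth*", "*payments*"],
    "design": ["*.css", "*components*"],
    "spec-compliance": ["*specs/*", "*.spec.json"],
}


def route(changed_files):
    """Map each specialist to the changed files that fall inside its scope."""
    assignments = {}
    for name, patterns in SPECIALISTS.items():
        hits = [f for f in changed_files if any(fnmatchcase(f, p) for p in patterns)]
        if hits:
            assignments[name] = hits
    return assignments
```

Calling `route(["src/auth/login.py", "ui/components/Button.tsx", "README.md"])` sends the auth file to `security` and the component to `design`, and leaves `README.md` unassigned, so no reviewer spends attention on files outside its domain.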

Someone just shipped a GitHub repo with 55+ specialized AI agents for Claude Code.

And it’s not what you’d expect.

This isn’t another prompt library. Each agent has a defined personality, a workflow, deliverables, and success metrics. There’s a Frontend Developer, a Reddit Community Builder, a Whimsy Injector, a Reality Checker — even a full Spatial Computing division for Vision Pro.

The idea: instead of asking Claude to “act like a developer,” you deploy a specialist that already knows how it thinks, what it delivers, and how to measure success.

7.6k stars in what looks like days. 1.2k forks.

The interesting part isn’t the agents themselves — it’s the design pattern. Personality + process + measurable output beats generic prompts every time.

If you’re building with Claude Code, this is worth a look.

→ github.com/msitarzewski/a…

What agent would actually move the needle for your workflow?

#AITools #ClaudeCode #IndieHackers #BuildInPublic #AIAgents

@VitalikButerin Still thinking about "bug-free code as basic expectation." That only works if the artifact can be statically analyzed before deployment. Faster generation doesn't get you there. Restricting what can be generated does.

This is quite an impressive experiment. Vibe-coding the entire 2030 roadmap within weeks.

Obviously such a thing built in two weeks without even having the EIPs has massive caveats: almost certainly lots of critical bugs, and probably in some cases "stub" versions of a thing where the AI did not even try making the full version. But six months ago, even this was far outside the realm of possibility, and what matters is where the trend is going.

AI is massively accelerating coding (yesterday, I tried agentic-coding an equivalent of my blog software, and finished within an hour, and that was using gpt-oss:20b running on my laptop (!!!!), kimi-2.5 would have probably just one-shotted it).

But probably, the right way to use it, is to take half the gains from AI in speed, and half the gains in security: generate more test-cases, formally verify everything, make more multi-implementations of things.

A collaborator of the @leanethereum effort managed to AI-code a machine-verifiable proof of one of the most complex theorems that STARKs rely on for security.

A core tenet of @leanethereum is to formally verify everything, and AI is greatly accelerating our ability to do that. Aside from formal verification, simply being able to generate a much larger body of test cases is also important.

Do not assume that you'll be able to put in a single prompt and get a highly-secure version out anytime soon; there WILL be lots of wrestling with bugs and inconsistencies between implementations. But even that wrestling can happen 5x faster and 10x more thoroughly.

People should be open to the possibility (not certainty! possibility) that the Ethereum roadmap will finish much faster than people expect, at a much higher standard of security than people expect.

On the security side, I personally am excited about the possibility that bug-free code, long considered an idealistic delusion, will finally become first possible and then a basic expectation. If we care about trustlessness, this is a necessary piece of the puzzle. Total security is impossible because ultimately total security means exact correspondence between lines of code and contents of your mind, which is many terabytes (see firefly.social/post/x/2025653… ). But there are many specific cases, where specific security claims can be made and verified, that cut out >99% of the negative consequences that might come from the code being broken.

YQ@yq_acc

Two weeks ago I made a bet with @VitalikButerin that one person could agentic-code an @ethereum client targeting 2030+ roadmap. So I built ETH2030 (eth2030.com | github.com/jiayaoqijia/et…). 702K lines of Go. 65 roadmap items. Syncs with mainnet. Here's what I found.

@aryanlabde The list is the spec. Auth policy, security rules, workflow logic - these are poorly-formatted specs still in your head. The problem is they stay there instead of getting written down before the AI touches anything.

AI agents forget things mid-session.

Context window fills. The agent loses track of which branch it's on, which PR is in flight.

We wrote a PreCompact hook. Capture key state before compression. Inject it back after.

Zero drift. How we ship Fascia daily.

#BuildInPublic
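The capture-and-reinject idea could look roughly like the sketch below. It is generic: it is not Fascia's actual hook and does not use any agent's real hook API. The `capture_state` and `reinjection_text` functions, the JSON snapshot file, and the state keys are all hypothetical.

```python
import json
from pathlib import Path


def capture_state(state, path):
    """Write key session state to disk before the context is compressed."""
    Path(path).write_text(json.dumps(state, indent=2, sort_keys=True))


def reinjection_text(path):
    """Render the saved snapshot as text to feed back to the agent afterwards."""
    state = json.loads(Path(path).read_text())
    lines = [f"- {key}: {value}" for key, value in sorted(state.items())]
    return "State preserved across compaction:\n" + "\n".join(lines)
```

A hook that runs before compaction would call `capture_state` with values such as the current branch and the PR in flight, and a hook that runs after would prepend `reinjection_text(...)` to the new context. Keeping the snapshot in a file rather than in the conversation is what lets it survive compression.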

@ens_pyrz Self-hosting is very different from “local” (implying same machine)

@arvidkahl What makes this structural is that trust is bound to the extension, not the developer. Ownership transfers, permissions carry over silently. No re-consent. The user never knows.

@DanielLozovsky Same pattern here. Split one generalist reviewer into domain specialists and the signal-to-noise ratio jumped immediately. Personality + process is underrated for agent design.

@bourneshao @maxk4tz Same here. One focused agent doing one thing well beats five agents stepping on each other. The urge to parallelize everything is the new premature optimization.

@maxk4tz similar setup here but i go claude code in terminal + one browser window. found that fewer context switches = way more shipped. the temptation to have 5 agents running is real but i get more done focusing on one thing at a time. followed btw, love the workflow posts

@SaasSnail 2017 to 2026. 9 years from wanting to ship to actually shipping. That gap is the real story. Congrats on MadStax.

I just shipped my first iOS app! 🚀

MadStax - a minimal habit tracker

✅ No subscriptions

✅ No ads

✅ $4.99 one-time

Been wanting to do this since 2017. Finally did it.

App #1 of 40 this year.

apps.apple.com/us/app/madstax…

@orhansaas The gap between posting ideas and shipping real SaaS is enormous. Congrats on crossing it. What did you build?

@ironshift21128 Agreed. The question is how to make "define your intent" expressive enough for real production needs without accidentally reinventing configuration. That balance is the whole game.

@FasciaRun 100%. The best infra is the kind you never have to think about. Write your code, define your intent, and let the platform figure out the rest. We're way overdue for this becoming the default.

@aryanlabde The gap between demo and production is exactly where AI tooling needs to evolve next. Right now agents optimize for "it runs" not "it runs safely under load with real users."

@priyankapudi The debugging-code-you-didn't-write phase is what kills me. You can't reason about code you didn't design.

At least with hand-written bugs you remember the bad decision that caused them.
