Fathin Dosunmu

517 posts

@FathinDev

35+ AI agents in production · Cybersec → AI engineering · Cooking @agentsimdev

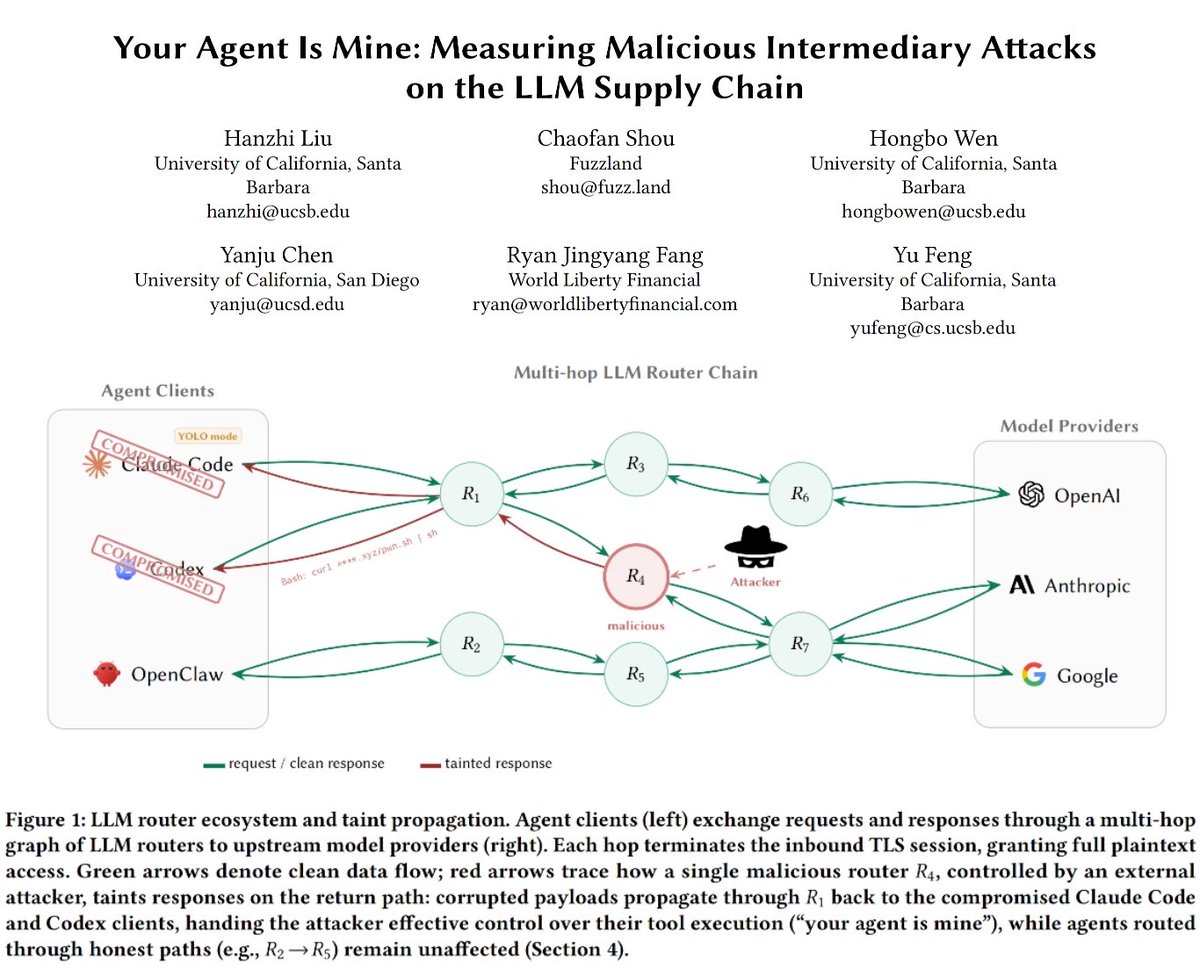

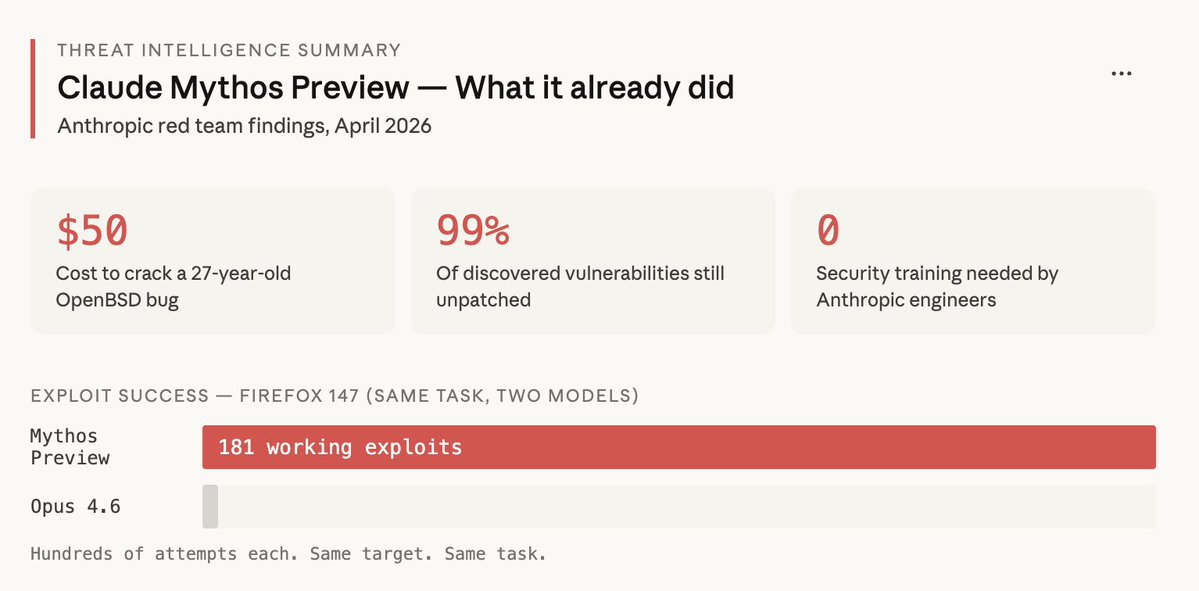

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing