Fatma

80 posts

@Faty_404

Informatics master’s student @TUM | interested in 3D reconstruction and generation

For feminists, silence on Gaza is no longer an option — #AJOpinion by @maryam_dh ⤵️ 🔗: aje.io/iiavnu

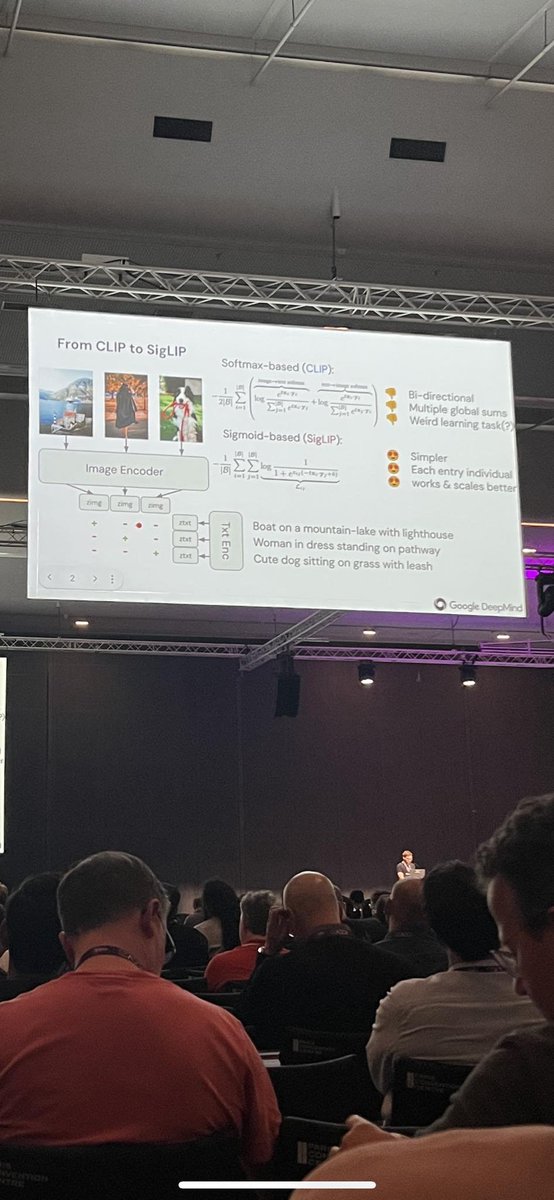

What makes CLIP work? The contrast with negatives via softmax? The more negatives, the better -> large batch-size? We'll answer "no" to both in our ICCV oral🤓 By introducing SigLIP, a simpler CLIP that also works better and is more scalable, we can study the extremes. Hop in🧶

So happy that our paper SPARF got a #CVPR2023 highlight! It is the result of my internship at #Google in @fedassa's team. SPARF: Neural Radiance Fields from Sparse and Noisy Poses arXiv: arxiv.org/abs/2211.11738 Website: prunetruong.com/sparf.github.i… Code coming very soon! 1/n

I highly recommend you check out the poster by @WeinzaepfelP and colleagues on the "CroCo v2" vision foundation model ... and his T-Shirt ❤️. The model is used for lots of downstream tasks (monocular depth, stereo, optical flow, pose, robotics/navigation).

Vision transformers need registers! Or at least, it seems they 𝘸𝘢𝘯𝘵 some… ViTs have artifacts in attention maps. It’s due to the model using these patches as “registers”. Just add new tokens (“[reg]”): - no artifacts - interpretable attention maps 🦖 - improved performance!
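
A rough sketch of the "just add new tokens" idea, for readers who want to see the bookkeeping: extra tokens are placed in the input sequence next to the patch tokens and dropped again at the output. Everything here is an assumption for illustration, not the authors' code: the helper names (`make_tokens`, `add_registers`, `split_outputs`), the `[CLS] + registers + patches` layout, and the random initialisation that stands in for learned parameters. The transformer blocks themselves are left out.

```python
import random


def make_tokens(count, dim, seed):
    """Return `count` token vectors of size `dim` (stand-in for learned parameters)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(count)]


def add_registers(cls_token, patch_tokens, register_tokens):
    """Build the input sequence with register tokens included.

    Assumed layout (hypothetical): [CLS], then registers, then patches.
    """
    return [cls_token] + register_tokens + patch_tokens


def split_outputs(output_tokens, num_registers):
    """Drop the register positions from the output sequence.

    Only the [CLS] token and the patch tokens are returned for downstream use.
    """
    cls_out = output_tokens[0]
    patch_out = output_tokens[1 + num_registers:]
    return cls_out, patch_out


if __name__ == "__main__":
    dim, num_patches, num_registers = 8, 4, 2
    cls_token = [0.0] * dim
    patches = make_tokens(num_patches, dim, seed=0)
    registers = make_tokens(num_registers, dim, seed=1)

    sequence = add_registers(cls_token, patches, registers)
    # A real model would run its transformer blocks on `sequence` here.
    cls_out, patch_out = split_outputs(sequence, num_registers)
    print(len(sequence), len(patch_out))
```

The sequence grows by the number of registers on the way in, and the output seen by downstream heads keeps its usual shape because the register positions are sliced away.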