Sabitlenmiş Tweet

l’étranger

4.5K posts

l’étranger retweetledi

l’étranger retweetledi

This is called CONTEXT ENGINEERING

> Context ≠ storage → treat it like RAM, keep only what’s needed now

> More context = worse output → noise kills reasoning

> Use CLAUDE.md as persistent brain (rules, architecture, constraints)

> Split work → Research → Plan → Execute (avoid mixing everything)

> Use subagents to offload heavy tasks (keeps main context clean)

> Progressive disclosure → load tools/files only when needed

> Externalize memory → store in files, not long chats

> Restart often → fresh context better than long degraded conversations

Quality In → Quality Out

is most important rule using Claude or any other AI models.

Thariq@trq212

English

l’étranger retweetledi

Not all data pipelines need to run in real time.

Some are better as scheduled batch jobs, others need streaming from the start.

In this tutorial, @balawc27 teaches you the differences between batch and streaming pipelines in Python, and when to use each.

freecodecamp.org/news/efficient…

English

l’étranger retweetledi

l’étranger retweetledi

you have two choices every week:

1: open claude, do your tasks, occasionally find an automation, close it, repeat

2: have claude audit your entire week and automate the repetitive stuff for you

most people don't even know option 2 exists

full setup (takes 60 seconds):

Ole Lehmann@itsolelehmann

English

I moved to Thailand.

Same me. Same work. Same age.

My body, my energy, my sleep, my social life, all different here. And it’s got nothing to do with discipline.

Here’s what I’ve learned living here:

1. Food

Street food is ₹100. Grilled. Fresh vegetables. 25g protein.

Pad kra pao, som tam, grilled chicken, green curry. All day, every day.

Healthy is the normal.

2. 2. Movement

Nobody “goes for a walk.” They just walk.

8,000 steps a day without trying. Night markets, beach walks, motorbike to every corner.

Movement is built into the city, not scheduled around your calendar.

3. Working spaces

Cafes with natural light. Outdoor coworking. Beach towns full of people working from their laptops.

4. Meeting people

New friendships at 35 are normal here. At night markets, at cafes, at coworking spaces.

Strangers talk to each other. “Come over” still means today.

5. Cost of living

₹60,000 a month gets you a full apartment, a scooter, 3 meals out a day, and weekends at the beach.

6. Community

Digital nomad communities here are real. Coworking spaces full of people building things, traveling alone, starting over at 30.

I love India. I always will.

But living here has shown me what’s possible when a country builds for its people.

The food, the movement, the rest, the community, it all adds up to a body and a life that feels lighter.

We have the talent. The culture. The potential. We just haven’t decided we deserve this kind of life yet.

PS - all of this is true if you want it to be.

English

l’étranger retweetledi

3-HOUR ADVANCED CLAUDE CODE LECTURE FROM NICK SARAEV. SAVE THIS

if you already use Claude Code daily - there's still new stuff in here

> CLAUDE.md optimization, agent harnesses, task parallelization

> Karpathy's autoresearch approach

> browser automation - Computer Use vs Browser Use

> workspace org, security, auto-mode, OAuth

> where Claude Code is going

watch it this weekend👇

Mr. Buzzoni@polydao

English

l’étranger retweetledi

l’étranger retweetledi

This 1-hour Stanford lecture on “Sports Betting Math”, delivered by the founder of a $160M platform, breaks down how bookmakers make millions using pure math.

Watch it fully or, if you’re short on time, read my article where I’ve summarized the key insights.

Goaty@goatyishere

English

Tomorrow the @UKSovereignAI fund launches with £500m to back the best of British tech.

The AI economy is growing 23x faster than the UK economy in general.

This initiative guarantees that the public gets to benefit from the explosive growth of the AI sector.

LETS GO

Sovereign AI@UKSovereignAI

Introducing Sovereign AI, the Government’s new £500m venture fund. Sovereign AI will support founders from day one to start here, scale here and win everywhere. sovereignai.gov.uk

English

l’étranger retweetledi

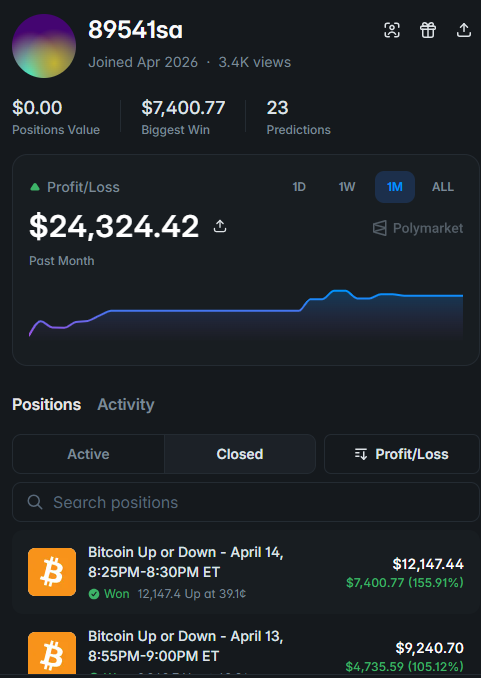

Quant from CERN built a model that catches market shocks when everyone panics, it profits the most

But when to enter is a separate problem. Math already solved it:

R = N/e ≈ 0.37 × N*

> skip the first 37% of entry points

> then take the first one better than anything you've seen

> random entry: 1–10%, optimal: ~37%

Good model + right timing = the difference between $332 and $2219

trade: polymarket.com/?r=copy

monokern@monokern

English

l’étranger retweetledi

New course: Spec-Driven Development with Coding Agents, built in partnership with @jetbrains, and taught by @paulweveritt.

Vibe coding is fast, but often produces code that doesn't match what you asked for. This short course teaches you spec-driven development: write a detailed spec defining what to build, and work with your coding agent to implement it. Many of the best developers already build this way.

A spec lets you control large code changes with a few words, preserve context across agent sessions, and stay in control as your project grows in complexity.

Skills you'll gain:

- Write a detailed specification to define your mission, tech stack, and roadmap, giving your agent the context it needs from the start

- Plan, implement, and validate features in iterative loops using a spec as your agent's guide

- Apply the same repeatable workflow to both new and legacy codebases

- Package your workflow into a portable agent skill that works across agents and IDEs

Join and write specs that keep your coding agent on track!

deeplearning.ai/short-courses/…

English

l’étranger retweetledi

l’étranger retweetledi

Agent memory is three-dimensional.

Most agent memory systems use a single store. Usually a vector database. It handles semantic similarity well, but it captures only one dimension of knowledge.

Here's the gap. Store these three facts:

→ Alice is the tech lead on Project Atlas

→ Project Atlas uses PostgreSQL for its primary datastore

→ The PostgreSQL cluster went down on Tuesday

Now ask: was Alice's project affected by Tuesday's outage?

Vector search finds fact 1 (mentions Alice) and fact 3 (mentions Tuesday). But the bridge between them, fact 2, mentions neither. It connects Project Atlas to PostgreSQL, and that's exactly what gets missed.

This is the normal shape of business knowledge. People belong to teams, teams own projects, projects depend on systems, systems have incidents. Any question crossing two hops breaks flat retrieval.

The three dimensions that actually cover agent memory:

→ A relational store for provenance (where data came from, when, who has access)

→ A vector store for semantics (what content means, what it's similar to)

→ A graph store for relationships (how entities connect across hops)

Each captures something the other two can't. Vectors find meaning. Graphs trace connections. Relational tables track lineage and permissions.

The real unlock is combining them: enter through vectors (find semantically relevant content), then traverse the graph (follow edges to connected entities), with provenance grounding every result back to its source.

Cognee is an open-source project that unifies all three behind four async calls. The default stack is fully embedded (SQLite + LanceDB + Kuzu), so a pip install gets you running locally. For production, swap in Postgres, Qdrant, or Neo4j without changing your agent code.

Check it out on GitHub: github.com/topoteretes/co…

The article below is a first-principle deep dive on building agents that never forget. This will give you a clear picture of how memory for agents is evolving.

Akshay 🚀@akshay_pachaar

English

l’étranger retweetledi

l’étranger retweetledi

l’étranger retweetledi

l’étranger retweetledi

> do you understand what Claude Code just did

> a senior Google engineer with 11 years of experience

> built a system that does 80% of his job automatically

> 27 agents running out of the box

> 8hrs → 2hrs per day

> $28,000/month while he chills

> the exact playbook is here 👇

Noisy@noisyb0y1

English

l’étranger retweetledi

l’étranger retweetledi

We’re open sourcing the first document OCR benchmark for the agentic era, ParseBench.

Document parsing is the foundation of every AI agent that works with real-world files. ParseBench is a benchmark that measures parsing quality specifically for agent knowledge work:

✅ It optimizes for semantic correctness (instead of exact similarity)

✅ It has the most comprehensive distribution of real-world enterprise documents

It contains ~2,000 human-verified enterprise document pages with 167,000+ test rules across five dimensions that matter most: tables, charts, content faithfulness, semantic formatting, and visual grounding.

We benchmarked 14 known document parsers on ParseBench, from frontier/OSS VLMs to specialized parsers to LlamaParse. Here are some of our findings:

💡 Increasing compute budget yields diminishing returns - Gemini/gpt-5-mini/haiku gain 3-5 points from minimal to high thinking, at 4x the cost.

💡 Charts are the most polarizing dimension for evaluation. Most specialized parsers score below 6%, while some VLM-based parsers do a bit better.

💡 VLMs are great at visual understanding but terrible at layout extraction. GPT-5-mini/haiku score below 10% on our visual grounding task, all specialized parsers do much better.

💡 No method crushes all 5 dimensions at once, but LlamaParse achieves the highest overall score at 84.9%, and is the leader in 4 out of the 5 dimensions.

This is by far the deepest technical work that we’ve published as a company. I would encourage you to start with our blog and explore our links to Hugging Face to GitHub. All the details are in our full 35-page (!!) ArXiv whitepaper.

🌐: Blog: llamaindex.ai/blog/parsebenc…

📄 Paper: arxiv.org/abs/2604.08538…

💻 Code: github.com/run-llama/Pars…

📊 Dataset: huggingface.co/datasets/llama…

🎥 YouTube: youtube.com/watch?v=g5p7G-…

YouTube

English