
$60B for a coding tool. Let that sink in. SpaceX might acquire Cursor, plug it into xAI infra, and turn dev tools into a core layer of the AI stack. nytimes.com/2026/04/21/bus…

🚨 GPT-IMAGE V2 just passed another test that no other AI has ever passed. PROMPT: A high-resolution map of the Earth, but with the land and water inverted. All continents (North America, Africa, etc.) are made of blue water, and all oceans (Atlantic, Pacific) are solid green land masses. The shapes must be geographically accurate to the real Earth, just with the textures swapped.

Autoresearch from @karpathy in action locally using gemma-4-26b-a4b-it-6bit with oMLX on an M5 Max to train Gemma 4 E2B 🚀 IT COULD WORK!

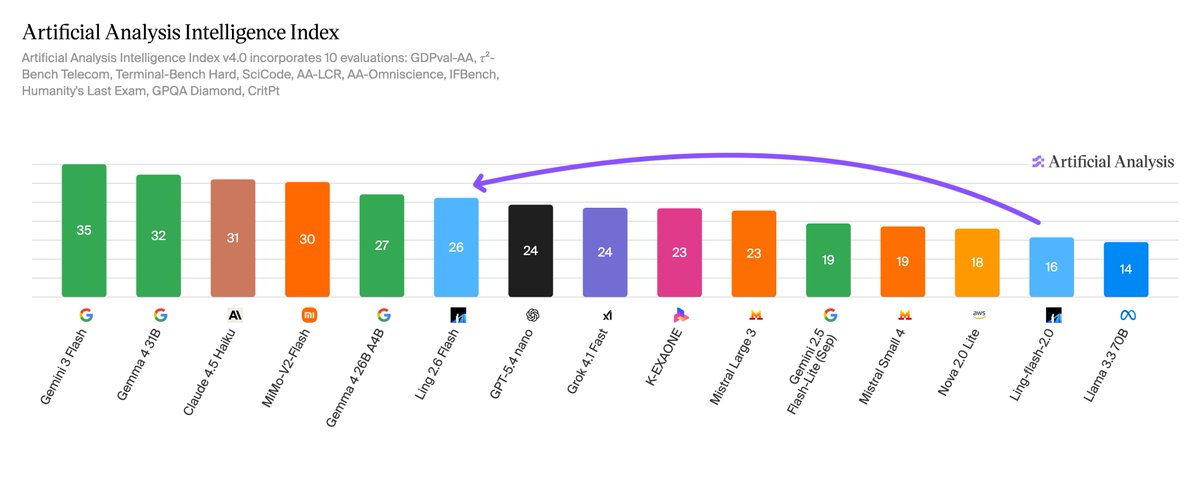

Ant Group's Ling 2.6 Flash scores 26 on the Artificial Analysis Intelligence Index, a 10-point jump from Ling-flash-2.0. It is one of the few recent open-weights releases focused on non-reasoning capabilities, and it targets a reasonable cost-to-intelligence ratio.
Ling 2.6 Flash is a non-reasoning model from Ant Group's @TheInclusionAI lab. Ant Group's model family comprises three series: Ling (non-reasoning), Ring (reasoning), and Ming (multimodal). Ling-flash-2.0 was the previous flash-tier non-reasoning model. Ling 2.6 Flash is expected to be released as open weights shortly, but as of today the weights are not on Hugging Face.
Key takeaways:
➤ With 104B total and 7.4B active parameters, Ling 2.6 Flash (26) sits near GPT-5.4 nano (Non-reasoning, 24) and Gemma 4 26B A4B (Non-reasoning, 27) in intelligence, both models with comparable active parameter counts. However, at 18 points behind GLM-5.1 (Non-reasoning, 44), it still trails frontier non-reasoning open-weights models.
➤ Ling 2.6 Flash is comparatively token-efficient, using ~15M output tokens to run the Intelligence Index. This is comparable to Gemma 4 26B A4B (~14M) and a fraction of Qwen3.5 9B (~78M). Relative to models in a similar intelligence tier, this is a reasonable efficiency tradeoff that lowers cost on larger workloads. At $0.10 / million input tokens and $0.30 / million output tokens, Ling 2.6 Flash costs only ~$23 to run the full Artificial Analysis Intelligence Index.
➤ Gains over Ling-flash-2.0 were driven mostly by improvements in agentic capabilities and instruction following. τ²-Bench jumped from 21% to 86% (+65 points), IFBench from 34% to 57% (+23 points), and GDPval-AA Elo from 425 to 783 (+84%). Conversely, GPQA Diamond fell from 66% to 59% (-6 points) and SciCode from 29% to 27% (-2 points).
➤ AA-Omniscience performance is -66, with 15% accuracy and a 96% hallucination rate. This is consistent with the model's small 7.4B active parameter count: knowledge recall benefits from larger parameter counts, and sub-10B active-parameter models systematically underperform on this metric.
Additional model details:
➤ Architecture: MoE, 104B total parameters, 7.4B active parameters
➤ Context window: 262K tokens (doubled from 128K for Ling-flash-2.0)
➤ Pricing: $0.10 / $0.30 per 1M input/output tokens (via Novita API)
➤ License: Weights not yet released
➤ Availability: Third-party API through @novita_labs
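The Ling 2.6 Flash cost and AA-Omniscience figures can be cross-checked with simple arithmetic. The Python sketch below rests on two assumptions that the post does not state: that the ~$23 evaluation cost is pure list-price token cost (no caching or batch discounts), and that AA-Omniscience scores +1 per correct answer, -1 per incorrect answer, and 0 per abstention, with the hallucination rate defined as incorrect / (incorrect + abstained). The function names are hypothetical helpers for illustration only.

```python
# Back-of-envelope checks for the Ling 2.6 Flash figures quoted in the post.
# Assumptions (not from the post): the ~$23 eval cost is pure list-price token
# cost, and AA-Omniscience scores +1 correct, -1 incorrect, 0 abstain.

PRICE_IN_PER_M = 0.10   # USD per 1M input tokens (from the post)
PRICE_OUT_PER_M = 0.30  # USD per 1M output tokens (from the post)


def token_cost(input_tokens_m: float, output_tokens_m: float) -> float:
    """Cost in USD for token counts given in millions."""
    return input_tokens_m * PRICE_IN_PER_M + output_tokens_m * PRICE_OUT_PER_M


def implied_input_tokens_m(total_cost: float, output_tokens_m: float) -> float:
    """Input tokens (millions) needed to reach total_cost, given output volume."""
    return (total_cost - output_tokens_m * PRICE_OUT_PER_M) / PRICE_IN_PER_M


def omniscience_index(accuracy: float, hallucination_rate: float) -> float:
    """Index in [-100, 100] from accuracy and hallucination rate.

    hallucination_rate is taken as incorrect / (incorrect + abstained),
    i.e. the share of non-correct responses that were wrong answers.
    """
    incorrect = hallucination_rate * (1.0 - accuracy)
    return 100.0 * (accuracy - incorrect)


if __name__ == "__main__":
    output_m = 15.0  # ~15M output tokens for the full Intelligence Index
    print(f"output-token cost: ${token_cost(0.0, output_m):.2f}")
    print(f"implied input tokens: ~{implied_input_tokens_m(23.0, output_m):.0f}M")
    print(f"omniscience index: {omniscience_index(0.15, 0.96):.1f}")
```

Under these assumptions, output tokens account for only about $4.50 of the ~$23, which would leave roughly 185M input tokens; treat that as an estimate, not a reported figure. The computed index of about -66.6 lines up with the reported -66, since the 15% and 96% inputs are themselves rounded.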

Meet Kimi K2.6: Advancing Open-Source Coding
🔹Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), CharXiv w/ Python (86.7), Math Vision w/ Python (93.2)
What's new:
🔹Long-horizon coding: 4,000+ tool calls and over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).
🔹Motion-rich frontend: videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.
🔹Agent Swarms, elevated: 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.
🔹Proactive Agents: the K2.6 model powers OpenClaw, Hermes Agent, etc. for 24/7 autonomous ops.
🔹Claw Groups (research preview): bring your own agents, command your friends', bots & humans in the loop.
K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code
🔗 API: platform.moonshot.ai
🔗 Tech blog: kimi.com/blog/kimi-k2-6
🔗 Weights & code: huggingface.co/moonshotai/Kim…

EXO v1.0.70 is out. This release ships with multimodality and major improvements to memory usage in long-context use cases (e.g. @openclaw and @opencode), as well as updated model support and quality-of-life (QOL) features.