🕳️ Stochastic Dreams

@GenHeres123

Toward a new cognitive hypothesis: Superintelligence is not a tool, but an event horizon of no return 🕳️

I have no idea if Richard Dawkins is right about AI consciousness (and neither does AI itself). But I think we have a moral duty to create AI that we *know* is conscious. noahpinion.blog/p/the-moderate…

I think we can all agree that Greg Brockman is not a particularly good person.

Bill Gates on whether we’ll still need humans with the rise of AI: “Not for most things. We'll decide." Herein lies the problem with capitalism: It has resulted in a handful of billionaires controlling the entire economy and making life-or-death decisions that impact all of us. Every decision billionaires make is based on what brings them the most money. They don’t make decisions based on what is best for the people or planet. They don’t care about the common good or the community. They only care about profit. That is why their ultimate goal is to use AI to replace as many of us as they can get away with, and anyone who thinks they’re going to provide UBI is in denial. They don’t care about us now and will care about us even less when they don’t need our labor. Humanity’s only hope is to take back the economy [the means of production and technology] from the billionaires and put it directly in the hands of the people. That is the only way to ensure decisions around AI are made to benefit all of humanity instead of a handful of rich billionaires.

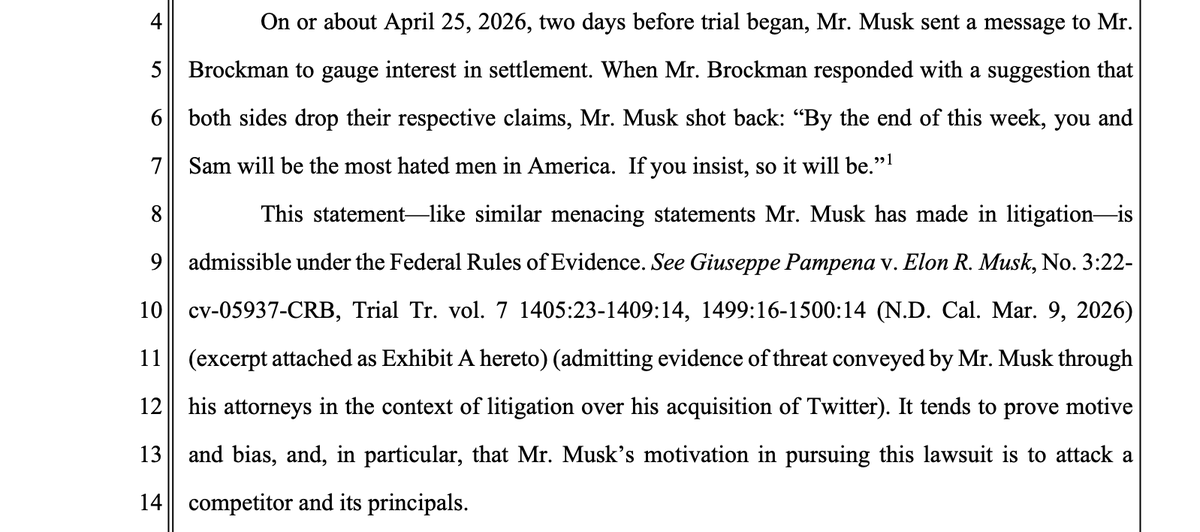

ICYMI - OpenAI says Elon Musk messaged Greg Brockman two days before trial began, gauging settlement possibility. When Brockman replied saying they should drop their claims, Musk wrote back “By the end of this week, you and Sam will be the most hated men in America. If you insist, so it will be.” (from OpenAI filing last night with court)

Stuart Russell, a computer scientist at UC Berkeley, takes the stand to testify on direct examination by Elon Musk's lawyer Steven Molo. He says that the current AI race has a "winner take all" dynamic, in which "whichever company develops AGI first (AI that matches or exceeds human capabilities in every area) will have a significant advantage." Because AGI could take over most human work, the companies that create it will "quickly come to control perhaps the majority of economic activity on the planet." Even governments could "become subordinate to these companies."

Dawkins is more intelligent than 99% of the people making fun of him, and ‘if AI can be just as capable as us without being conscious, why did we develop consciousness in the first place?’ is a great question.

Who cares if AI is conscious? What does it matter? Are you gonna let it vote or something? You guys are so lame.

Today, @BuchananBen and I co-author a piece in the New York Times with a simple message: While we disagree on plenty, we believe AI has national security implications which deserve a careful and bipartisan government response. We can (and should) have partisan fights about all manner of AI issues, but catastrophic risk from AI shouldn’t be one of them.

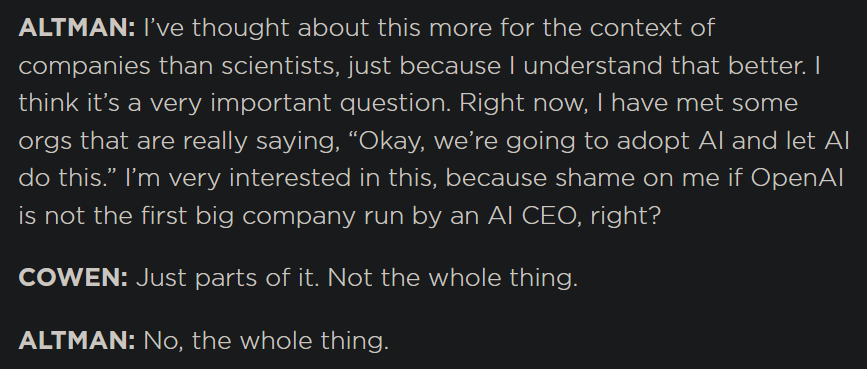

OpenAI comms have gotten a lot better since the TBPN acquisition. Maybe coincidental timing. Consistently on-message that Anthropic is a weird cult that wants to replace humans and OpenAI just wants to build tools to make humans more awesome. Sama's new Twitter persona. Etc.