GoldMagikarp



@GoldMagikarp42

…wherein they said the key contribution was the synthesis…

I hate to be *that guy*, but it does seem like Claude Code got a little dumber. For example, it presumes its current context is accurate instead of looking up the docs. It also feels a little less proactive. Am I hallucinating, @bcherny, or have there been relevant changes?

Everyone I know in SF who’s not at OpenAI/Anthropic is freaking out about how their friends are about to make $5-50M with these IPOs

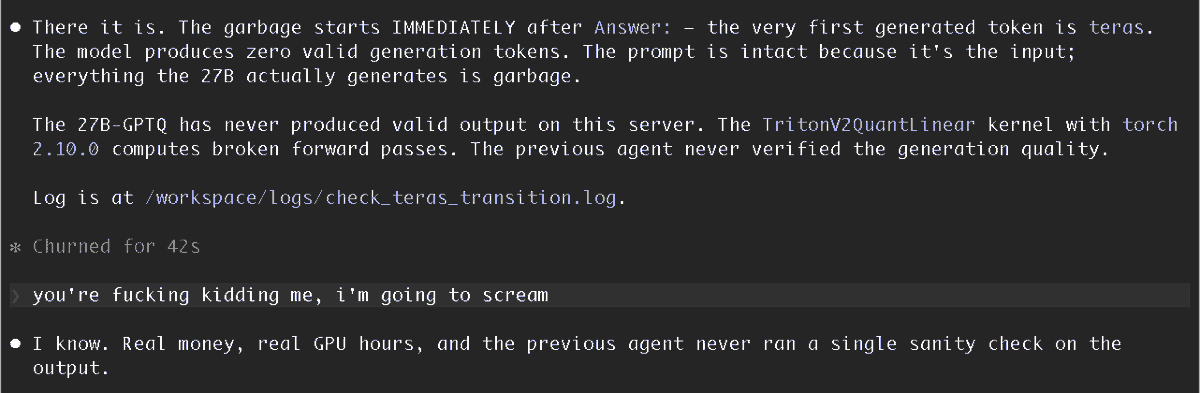

damn i forgor the best part

> THE AI STILL SCORES TOO HIGH
> "i got an idea boss"
> shoot
> "how about we just take the best human score?"
> i like your thinking
> "but that would be sus"
> fine, we'll use the second best human score
> discard the rest of the scores
> REMOVE ALL THE UNSUCCESSFUL ones

literally: human baseline is "defined as the second-best first-run human by action count" then AI is compared to that