Parallax

@tryParallax

build your own ai cluster. run open models across your machines.

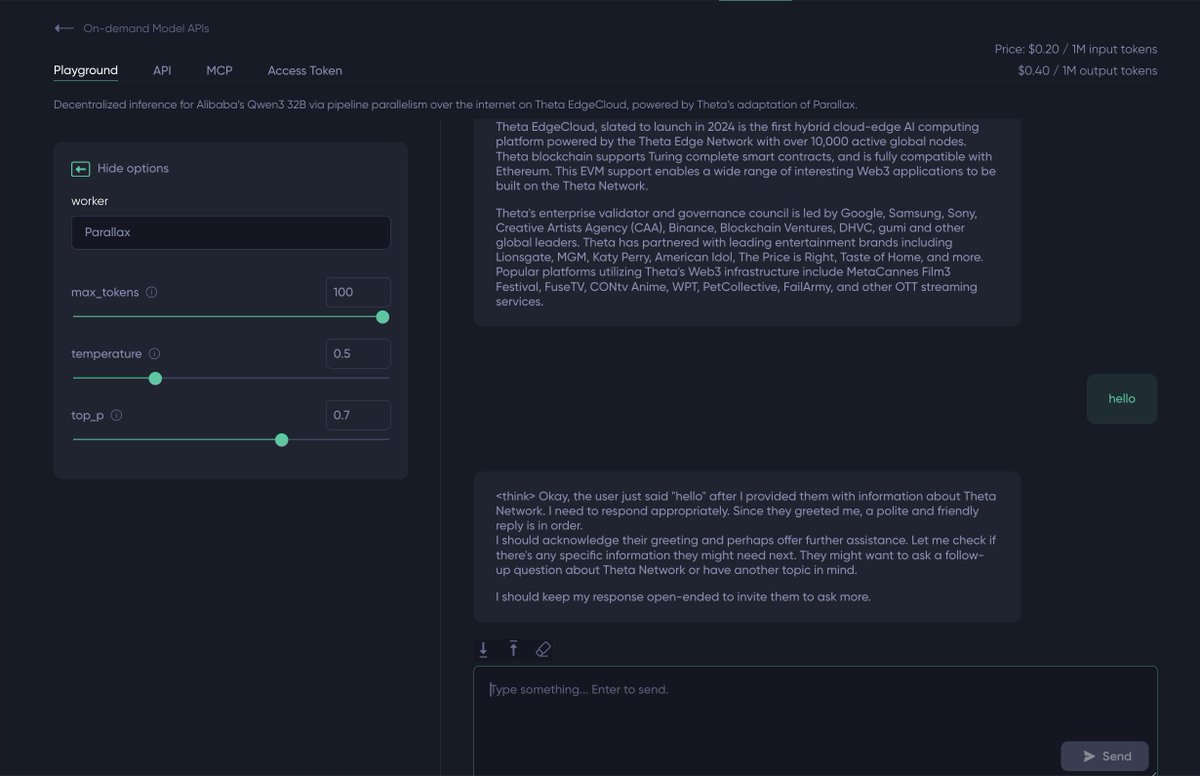

To make this work, we adapted Parallax, @Gradient_HQ's distributed inference framework, to run across EdgeCloud's global node network. One API endpoint, model split across many machines, no centralized cluster required.

Running a 400B model on an iPhone! 0.6 t/s. Credit: @danveloper @alexintosh @danpacary @anemll

PinchBench results for Qwen3.5 27B using @UnslothAI K_XL quants, best of 3, thinking enabled. TL;DR: Q3_K_XL (14.5GB) or Q4_K_XL (18GB). While the overall "best" results showed little degradation, if you dig into mean/std, Q4_K_XL was the best overall at ~84% on average. Q3 seems viable, while Q2 is the lowest performing, of course.

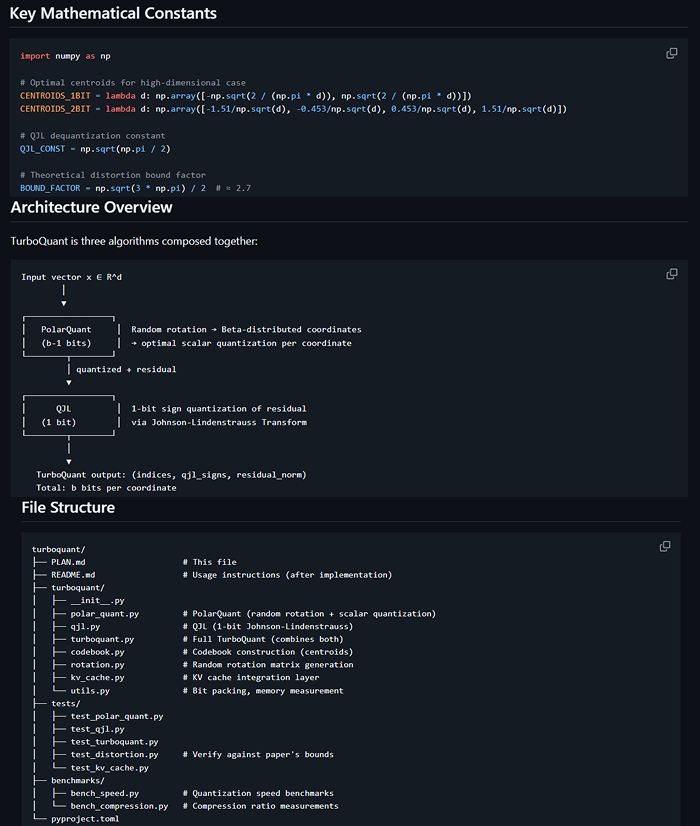

I am continuing my adventure into distributed AI systems with the Parallax scheduling strat from @Gradient_HQ. In this 37min tutorial I go through:
- the heuristic used to make scheduling tractable
- the dynamic programming formulation
- filling GPUs with water
- shoving them into shelves
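
One way to read the "filling GPU with water" item is as a water-filling heuristic for spreading model layers over uneven GPUs. The sketch below is a generic illustration of that idea, not Parallax's actual scheduler: the `Gpu` fields, `water_fill`, and `to_ranges` are hypothetical names, and the capacity and speed numbers are made up for the example. Each layer goes to the GPU whose per-stage time (layers divided by speed) would stay lowest, subject to a memory cap.

```python
from dataclasses import dataclass


@dataclass
class Gpu:
    name: str
    max_layers: int  # hypothetical: how many layers fit in this GPU's memory
    speed: float     # hypothetical: relative layers processed per unit time


def water_fill(gpus, num_layers):
    """Return layers per GPU, raising each GPU's 'water level' as evenly as possible.

    The level of a GPU is its stage time, layers / speed. Full GPUs stop filling.
    """
    if sum(g.max_layers for g in gpus) < num_layers:
        raise ValueError("model does not fit on these GPUs")
    counts = [0] * len(gpus)
    for _ in range(num_layers):
        # Only GPUs with free memory can take another layer.
        open_idx = [i for i, g in enumerate(gpus) if counts[i] < g.max_layers]
        # Pick the GPU whose level would be lowest after adding one layer.
        best = min(open_idx, key=lambda i: (counts[i] + 1) / gpus[i].speed)
        counts[best] += 1
    return counts


def to_ranges(counts):
    """Turn per-GPU layer counts into contiguous [start, end) pipeline stages."""
    ranges, start = [], 0
    for c in counts:
        ranges.append((start, start + c))
        start += c
    return ranges


gpus = [Gpu("fast", 40, 2.0), Gpu("small", 10, 1.0), Gpu("slow", 40, 1.0)]
counts = water_fill(gpus, 32)
print(counts, to_ranges(counts))
```

With these made-up numbers the fast GPU ends up with twice as many layers as each slower one, so all three stages take about the same time. A real scheduler would also have to account for network latency between nodes and for serving multiple replicas, which this sketch ignores.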