andrrrr

553 posts

@GroudonOrig

records of my thoughts. no opinion | previous @DodoResearch @GlobalPayInc @Ubisoft

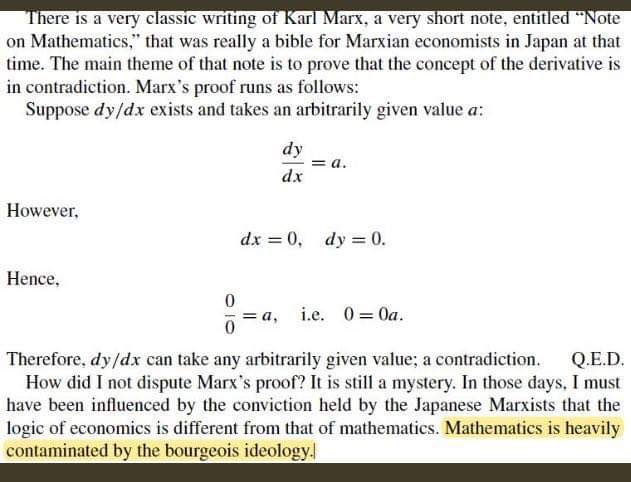

SSMs promised efficient language modeling for long context, but so far seem to underperform compared to Transformers in many settings. Our new work suggests that this is not a problem with SSMs, but with how we are currently using them. Arxiv: arxiv.org/pdf/2510.14826 🧵

Where are the AI vibe-coded crypto apps? Yes, they will get hacked. But crypto degens love risk. In DeFi summer we threw millions into untested contracts and paid thousands of USD per tx. And we trade useless memecoins for fun; spending a few thousand USD per shitcoin doesn't hurt too much. Vibe-coded contracts would be speed-running PMF in the open: deploy fast, see what gets hyped, and then, once there is PMF (and after it gets hacked), actually rebuild it with audits. This is a goldmine of ideas. Somehow we forgot how to be brave.

We are revising our developer API policies: We will no longer allow apps that reward users for posting on X (aka “infofi”). This has led to a tremendous amount of AI slop & reply spam on the platform. We have revoked API access from these apps, so your X experience should start improving soon (once the bots realize they’re not getting paid anymore). If your developer account was terminated, please reach out and we will assist in transitioning your business to Threads and Bluesky.

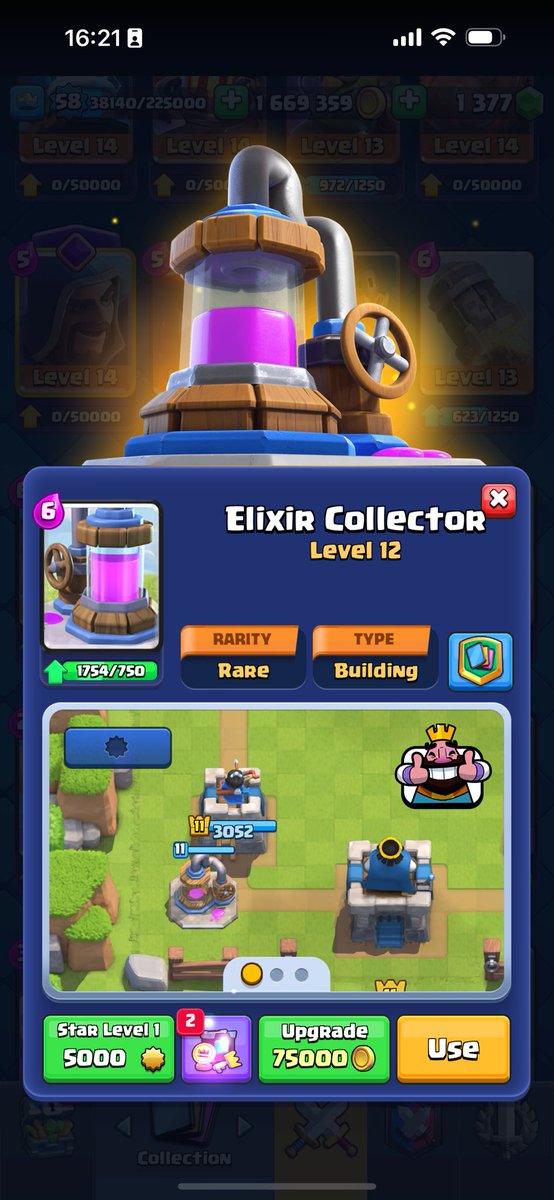

Xbow JUST GOT A CARD RENDER. does this mean we're getting an Xbow rework soon?