Guangxing Han

@GuangxingHan

Research Scientist at Google DeepMind

My role at Meta's SAM team (MSL, previously at FAIR Perception) has been impacted within 3 months of joining after my PhD. If you work with multimodal LLMs for grounding or complex reasoning, or have a long-term vision of unified understanding and generation, let's talk. I am on the job market starting immediately. #metalayoffs #FAIR #MSL #SAM

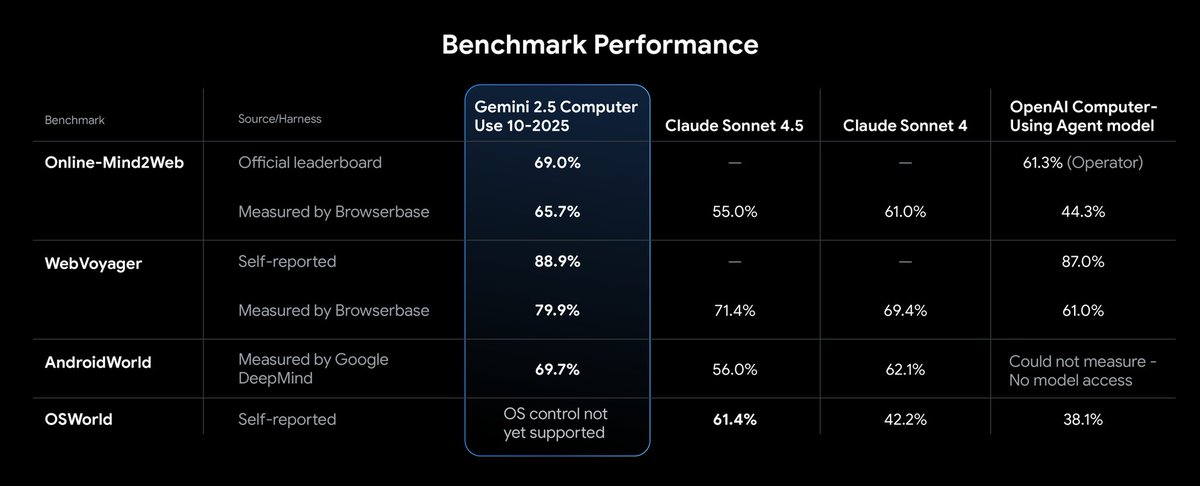

Very excited to share that @windsurf_ai co-founders @_mohansolo & Douglas Chen, and some of their talented team have joined @GoogleDeepMind to help advance our work in agentic coding in Gemini. Welcome to our new teammates from Windsurf! theverge.com/openai/705999/…

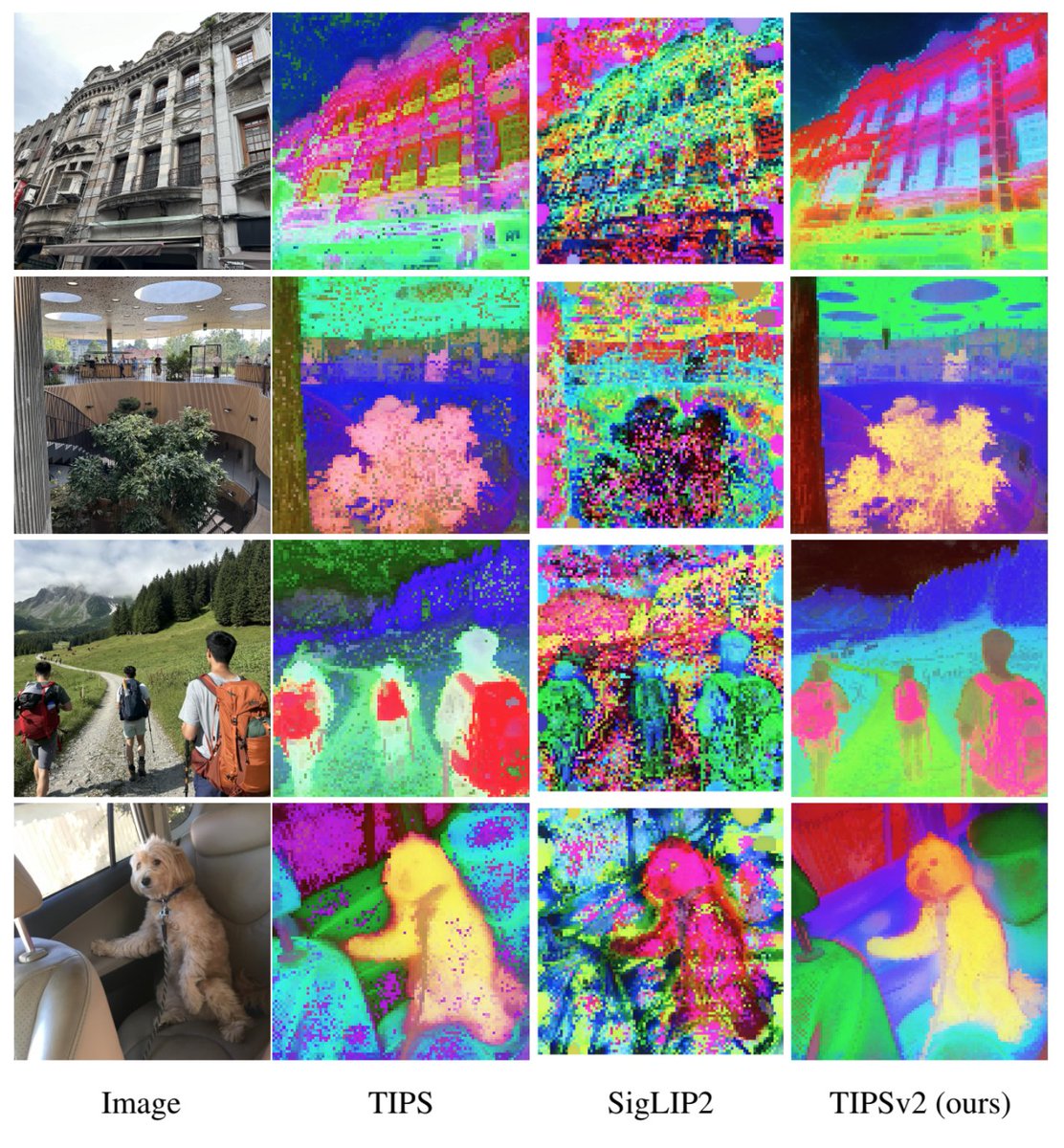

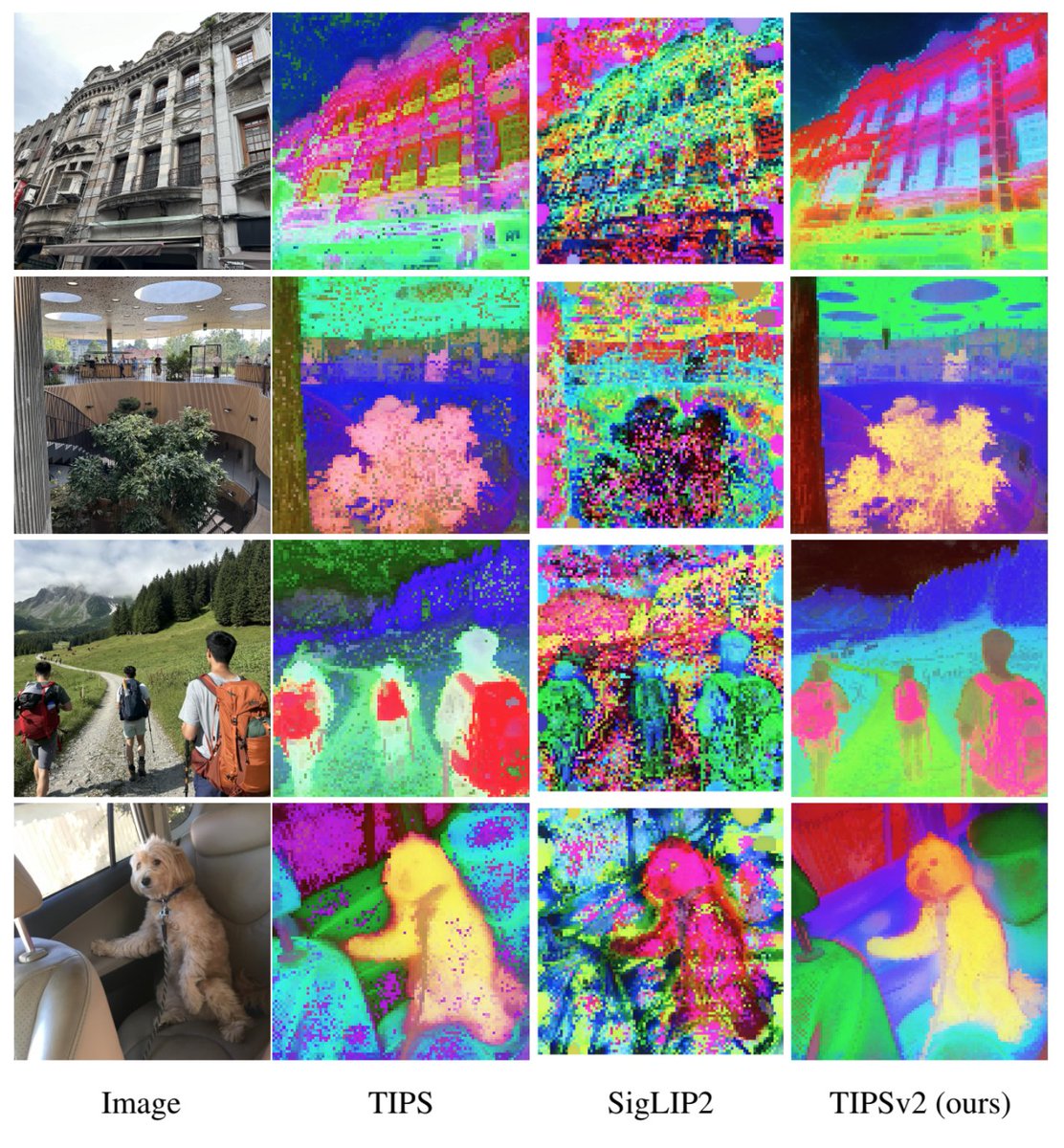

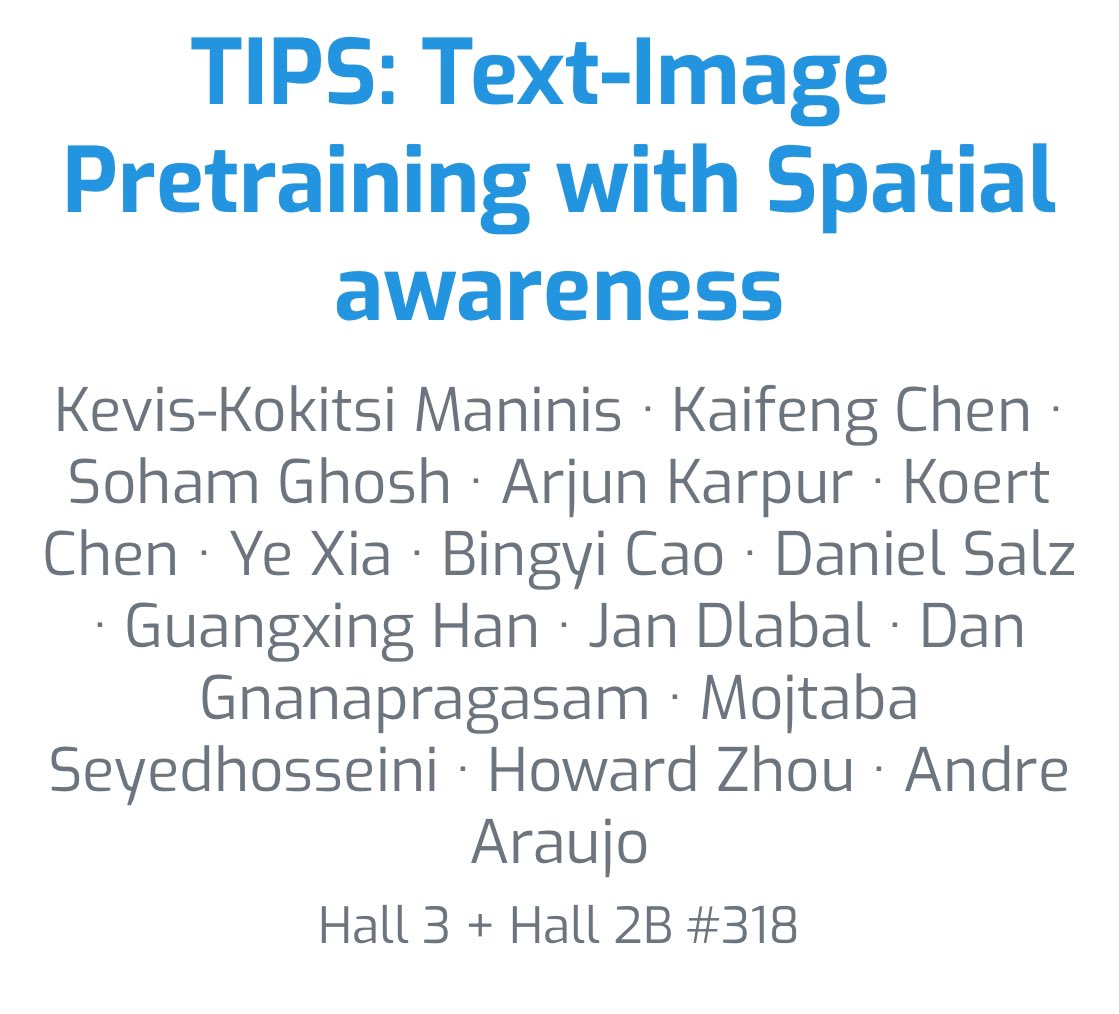

Want some TIPS? Well, then check out “Text-Image Pretraining with Spatial awareness” :) TIPS is a general-purpose image-text encoder, for off-the-shelf dense and image-level prediction. Finally image-text pretraining with spatially-aware representations! arxiv.org/abs/2410.16512

Introducing SigLIP2: now trained with additional captioning and self-supervised losses! Stronger everywhere:

- multilingual
- cls. / ret.
- localization
- ocr
- captioning / vqa

Try it out, backward compatible!

Models: github.com/google-researc…

Paper: arxiv.org/abs/2502.14786

Announcing the #ECCV2024 workshop on Instance-Level Recognition (ILR)! This is the 6th edition in our workshop series, with amazing keynote speakers: @CordeliaSchmid, @jampani_varun and @g_kordo. Call for papers now open! All information on our website: ilr-workshop.github.io/ECCVW2024/