Pinned Tweet

Pedro E. Caparrós Torres

2K posts

@Guelug

👨💻 Developer | 📊 Marketeer | 🔍 Analyst | ⛓️ Blockchain adopter | 🎮 Gamer | 🦞Openclaw user 🕯️ RIP: Google Stadia Early adopter |Thoughts and geek things

Barcelona, España · Joined June 2010

646 Following · 118 Followers

@QingQ77 One works on-device… and it's just an inpainting model… the other 2, by the way, are the same: Samsung uses Gemini behind the scenes, and it's Nano Banana 2, one of the most powerful models, not capable of running on a phone…

English

Pure adrenaline! 🧬 The new #ResidentEvil is now available on #NocheCero. Check out the full review on #PalomaYNacho: 👉 bit.ly/4n1T705 palomaynacho.com/blog/estreno-d…

Español

@Anas_founder @ptrve There are still cheap flights to Spain; buy one and come back! All within the same budget.

English

Been running Kimi k2.6 Code-Preview for a few days and it's a noticeable step up from k2.5.

The code generation is tighter—fewer syntax errors on first pass, better at maintaining context across longer files. It's not just incremental; there's actual architectural improvement in how it handles complex logic and tool calling.

So far it's awesome with Hermes agent and Openclaw. This is the best Kimi has been.

Anyone else comparing the two?

English

Claude Opus 4.7 dropped with better coding and vision... but users are reporting performance issues.

Anthropic getting backlash for quality decline. Meanwhile pushing Claude Cowork with enterprise analytics.

Compute crunch is real. When scaling this fast, quality control gets messy.

Anyone else noticing differences? So far so good, but if you test it out of the box, be careful with deletions...

English

@thekitze You forgot to say you did the tinker web with Neo, it's over!!!

English

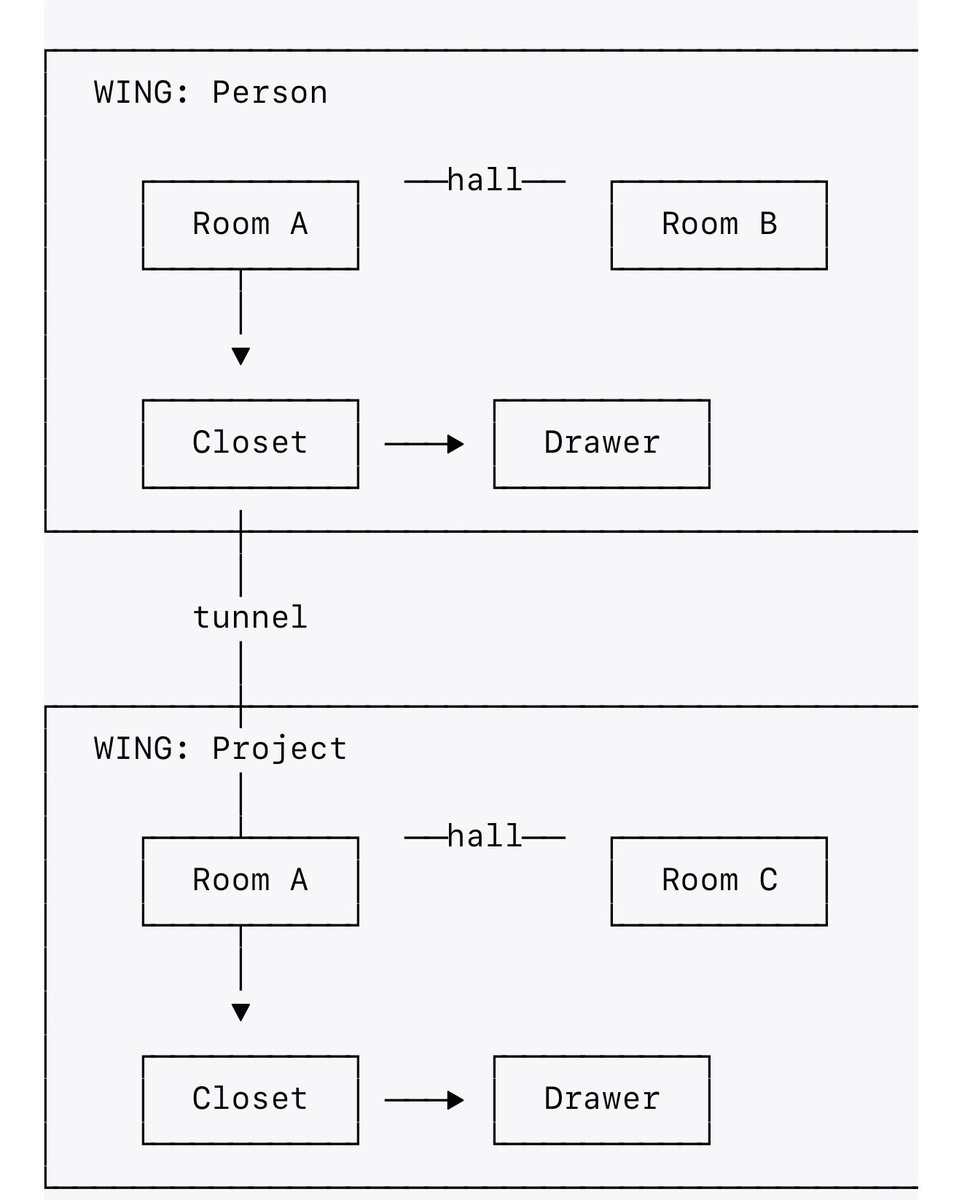

So Milla Jovovich and @bensig released MemPalace. I will be testing it with Openclaw and Hermes. I still haven't had time to read more than the concept!

English

Imagine you have a big pretend house... and every friend gets their own room... and every type of toy gets its own drawer.

When you want to find your red truck, you don't dump out every toy box in the whole house. You just go "that's in Jake's room, in the toy car drawer" and grab it.

That's mempalace. But for robots. They talk to people all day and forget everything when they go to sleep ("good morning, who are you? who am I?").

So, we built them a pretend house where they put all their conversations in drawers. When they wake up, they check their house and remember everything.
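The house, room, and drawer analogy above maps onto a nested lookup structure. Here is a minimal sketch of that idea only; the class and method names (`ToyMemoryHouse`, `remember`, `recall`) are hypothetical and are not MemPalace's actual API or storage design.

```python
from collections import defaultdict


class ToyMemoryHouse:
    """Hypothetical illustration: one room per person, one drawer per topic."""

    def __init__(self):
        # rooms[person][drawer] -> list of remembered notes
        self.rooms = defaultdict(lambda: defaultdict(list))

    def remember(self, person, drawer, note):
        # File the note in a specific drawer of a specific room.
        self.rooms[person][drawer].append(note)

    def recall(self, person, drawer):
        # Look in one drawer of one room instead of searching the whole house.
        # Using .get avoids creating empty rooms or drawers on a miss.
        return list(self.rooms.get(person, {}).get(drawer, []))


house = ToyMemoryHouse()
house.remember("Jake", "toy cars", "red truck")
house.remember("Jake", "books", "dinosaur atlas")
print(house.recall("Jake", "toy cars"))
```

The point of the nesting is that a lookup touches only the matching room and drawer, which is the "don't dump out every toy box" part of the analogy.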

English

30 second explanation of the MemPalace by Milla Jovovich.

By day she’s filming action movies, walking Miu Miu fashion shows, and being a mom. By night she’s coding.

She’s the most creative, brilliant, and hilarious person I know. I’m honored to be working with her on this project… more to come.

English

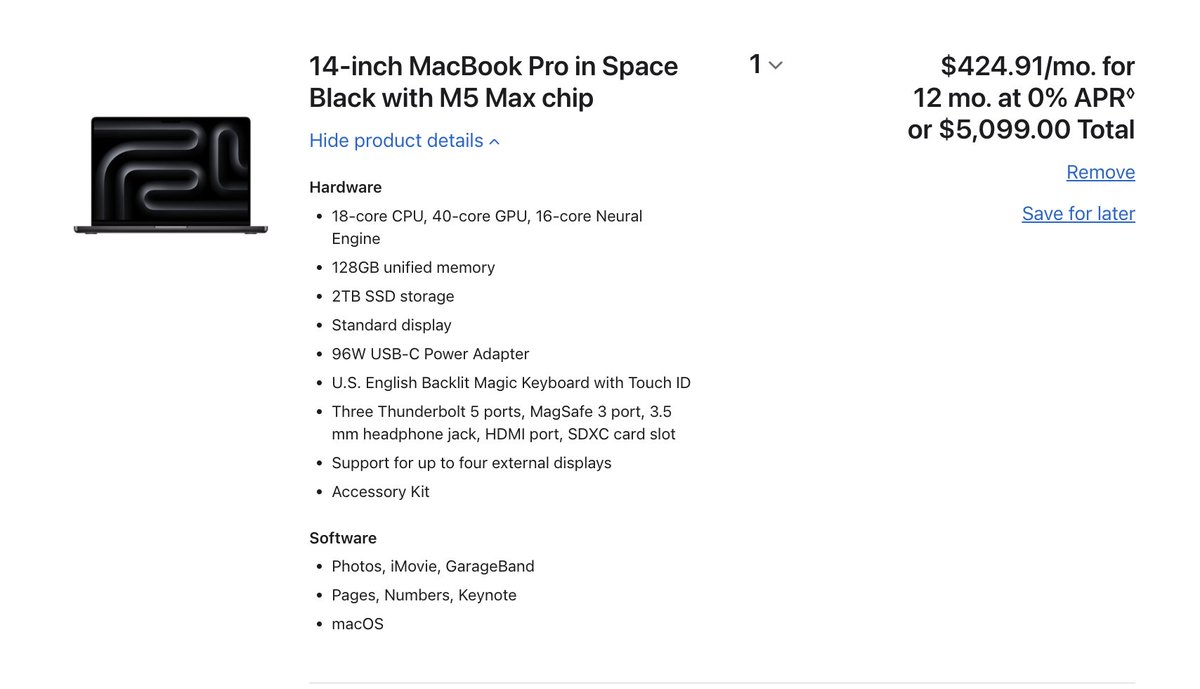

@tipofthespear78 @ashen_one @AlexFinn You can probably run the mobile version; anything larger than 9B would be hard with that memory.

English

@ashen_one @AlexFinn I'm on an M4 mini 16GB. Guess I'm a long way from a functional local model, given the requirements.

Guessing that's going to change quickly though.

Thanks.

English

@thekitze Raycast with ollama!

Ollama already updated to use native MLX for optimal inference on the models, so you get the fastest it can go. It could probably get even faster soon with TurboQuant, which you can already test using the custom cpp!

English

Claude's third-party tools block is only for Openclaw!! This is funny! I've been using Kimi 2.5 and Minimax 2.7 for a while! I'm excited for the improvements coming in the next patch for GPT subs.

Flor. @FlorianKluge

@steipete Well there you go. Tested and confirmed:

English

And all those "stupid wasters" became clients: they bought Mac minis, increasing sales, and got the $200 Max tier account that everyone used to think was expensive. It became the normal tier, and the mentality shifted to seeing it as a worker. How many of those "stupids" would also end up using it more efficiently? … There are probably rich people paying just to let it sit there asking about the weather … Still, so many people who were unwilling to pay for the $200 Max tier made the upgrade, some even holding more than one account … ChatGPT or others would just put those users on a slower pipeline and make it work to keep earning money! Models such as Kimi or Minimax make it cheaper. I bet they could even have pushed Sonnet-only usage, optimized their models, and made it work, but they decided to just shut the door!

English

For every one that did that (and those users didn’t need claw to do it) - there’s a thousand running aimlessly wasting tokens.

You got high on your own supply, cashed out and never once considered that people would misuse your creation to Maximize Slop with Zero return

Now Anthropic is forced to kill novel uses because your creation encourages wasteful token consumption in an unsupervised manner.

You ruined it for everyone because you didn’t once bother to consider the consequences of your actions and that products like this need to use Per Token pricing due to their nature of encouraging wasteful cron loops that the user isn’t even reading.

How many claws have been left running with the human forgetting them? How many are being used for tasks that they aren’t efficient at?

Anthropic did the right thing saying its subscription wasn’t meant for this wasteful slop usage. But hey - you got away with misappropriating their name, tweaking their mascot - you got paid - and are yet to once take responsibility for the security nightmare you created and the toxic ecosystem you spawned that is wasting tokens for near zero gains

English

@RubberDucky_AI @davemorin We must have different timelines, I see lots of people building awesome stuff or even brewing beer with it!

English

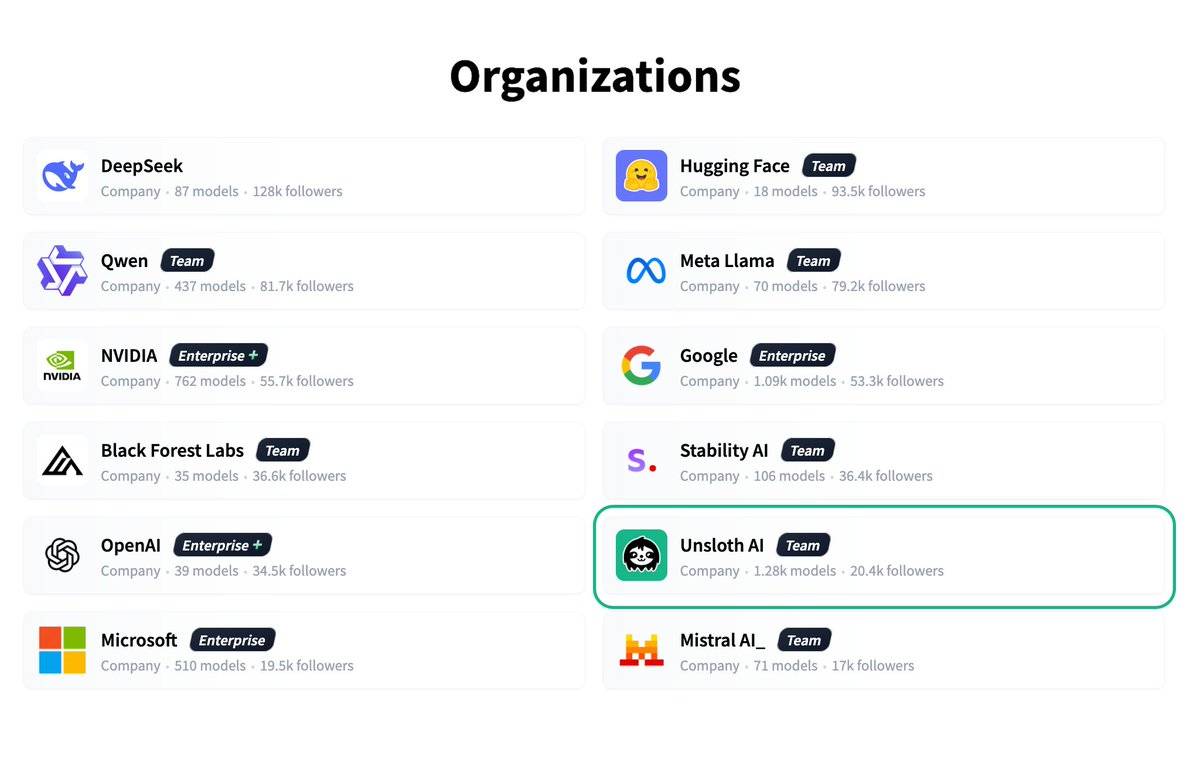

Exciting week for open source models.

Google finally listened. Gemma 4 is actually open source now — Apache 2.0 license, no weird restrictions. Previous versions had that custom license everyone hated. This is the kind of move that makes developers trust you.

Three flavors depending on your hardware:

→ Small (2B/4B) - runs on edge devices, literally in your browser

→ Dense 31B - local execution with server-grade performance

→ MoE 26B - activates 4B params per token but loads all 26B for speed

All models handle text, images, video, and audio. Context windows go up to 256K on the bigger ones. Native function calling for agentic workflows.

Memory reqs for the dense 31B:

• 58GB at 16-bit

• 30GB at 8-bit

• 17GB at 4-bit
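The figures above roughly track parameter count times bytes per parameter. A back-of-the-envelope sketch, assuming weights only (no KV cache, activations, or runtime overhead); the function name is made up for illustration, and the gap between this estimate and the quoted 8-bit and 4-bit numbers is presumably overhead, which is an assumption on my part:

```python
def weight_memory_gib(params_billions, bits_per_param):
    """Approximate memory for the model weights alone, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 2**30


# Dense 31B model at the three precisions listed above.
for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_memory_gib(31, bits):.1f} GiB")
```

At 16-bit this lands close to the quoted 58GB. The same arithmetic is why the MoE 26B variant still needs room for all 26B parameters even though only about 4B are active per token.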

How does it stack up against Qwen 3.6?

Qwen 3.6 Plus just dropped (free via OpenRouter) with 1M context window and always-on reasoning. Beats Claude 4.5 Opus on Terminal-Bench (61.6 vs 59.3), hits 78.8 on SWE-bench Verified. Hybrid MoE architecture with linear attention.

For agentic coding and reasoning, Qwen might edge ahead on pure benchmarks. But if you need full control, multimodal support baked in, or want to build something you actually own?

Grab it from Kaggle or Hugging Face. Build something cool? Let me know what you're working on.

English

@jtdavies It's April … everything happens on April's lucky day!

English

Holy shit, they've just got my instant respect!

From a screw to a major breakthrough in AI, got to love this industry!

Charly Wargnier @DataChaz

🚨BREAKING - HUGE MOVE: Anthropic is going fully open-source, rebrands to OpenClaude 🤯

English