Hardhat Chad

7.5K posts

@HardhatChad

Foreman @OREsupply, Maintainer @STEELnew

The token cost to build a production feature is now lower than the meeting cost to discuss building that feature.

Let me rephrase. It is literally cheaper to build the thing and see if it works than to have a 30-minute planning meeting about whether you should build it. It’s wild when you think about it.

This completely inverts how you should run a software organization. The planning layer becomes the bottleneck because the building layer is essentially free. The cost of code has dropped to essentially 0.

The rational response is to eliminate planning for anything that can be tested empirically. Don’t debate whether a feature will work. Just build it in 2 hours, measure it with a group of customers, and then decide to kill or keep it.

I saw a startup operating this way and their build velocity is up 20x. Decision quality is up because every decision is informed by a real prototype, not a slide deck and an expensive meeting.

We went from “move fast and break things” to “move fast and build everything.” The planning industrial complex is dead. Thank god.

Vibe coders after realizing they'll still have to dance on TikTok to market their SaaS.

insane sequence of statements buried in an Alibaba tech report

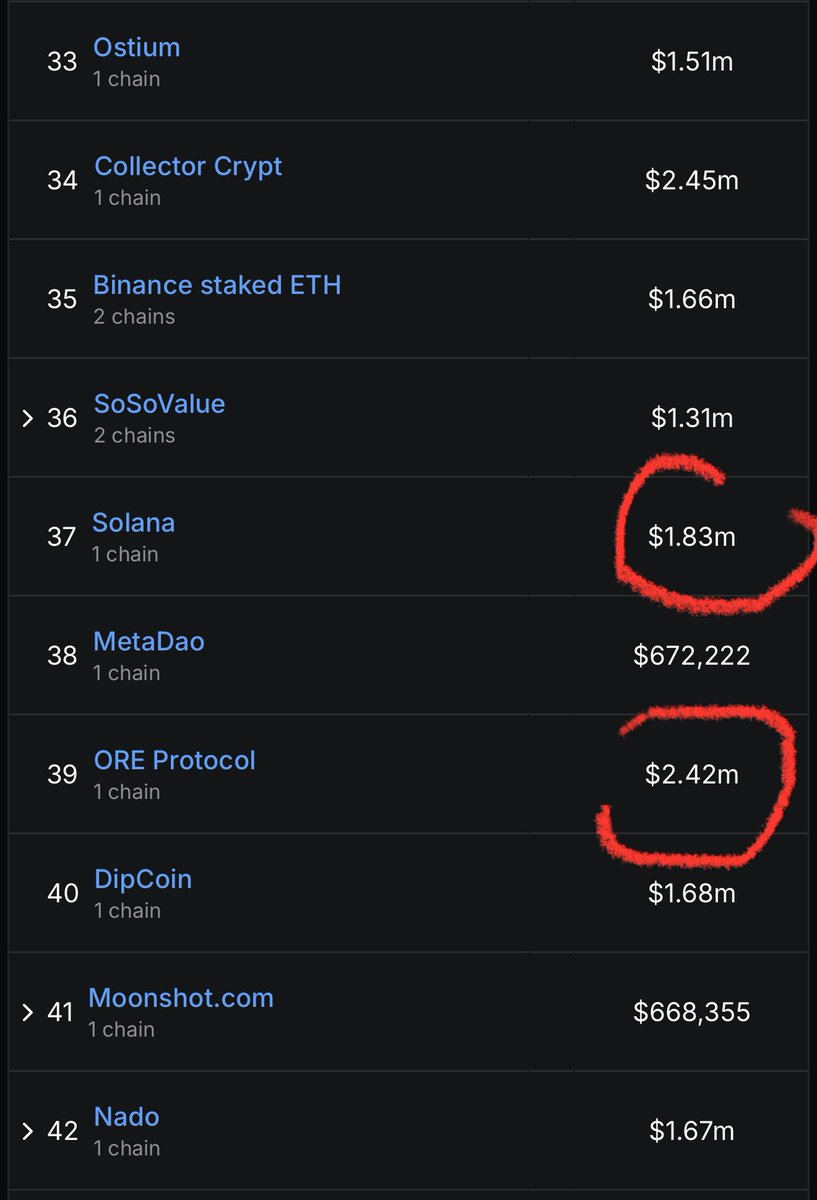

Top 10 Solana DApps by Revenue in the Last 30 Days 📊

1⃣ @Pumpfun
2⃣ @JupiterExchange
3⃣ @AxiomExchange
4⃣ @phantom
5⃣ @tryfomo
6⃣ @ant_fun_trade
7⃣ @OREsupply
8⃣ @MeteoraAG
9⃣ @BagsApp
🔟 @TrojanOnSolana

⚡️ INSIGHT: Solana Foundation President Lily Liu says, “If Solana doesn’t do it, nobody will.” Solana is the last serious contender for peer-to-peer electronic cash.

"Cheat Code," Episode 1: Solana Mobile is a cheat code 📲 @toly

The community will be hosting a "Minerside Chat" on Discord today at 7 PM EST, featuring @HardhatChad and the developers of @minemoreapp. Mark your calendars and we’ll see you there!

.@solanamobile is a special place right now. There is just enough interest and not enough noise for early stage founders to get their first 10k users.