Harsh Mehta

@HarshMeh1a

@MirendilAI, Past: AI R&D @AnthropicAI, @GoogleDeepmind, Gemini

We have been heads down but wanted to share a bit about what we are doing 🧵

Career update: I left xAI to start something new, closing my 7+ year chapter working at Twitter, X, and xAI with so much gratitude. xAI is truly an extraordinary place. The team is incredibly hardcore and talented, shipping at a pace that shouldn’t be possible. From the Home Timeline at X to Grok 2, 3, and 4 at xAI, I worked across product infra and model behavior post-training, from memory to coding infra, agents, and more. Pure startup mode, every day. Working closely with Elon across X and xAI, I saw what happens when you refuse to accept impossible as an answer. I learned to embody obsessive attention to detail, maniacal urgency, and to think from first principles. I’m deeply grateful to @elonmusk for the experience, to @wanghaofei for the trust and support throughout Twitter/X, and to @TheGregYang and @ibab for believing in me. And to the many incredible people I had the privilege to work with along the way, thank you! Now, I’m excited to take the leap and build something new, focused on accelerating science. More soon.

Introducing Claude Sonnet 4.5—the best coding model in the world. It's the strongest model for building complex agents. It's the best model at using computers. And it shows substantial gains on tests of reasoning and math.

Today we're releasing Claude Opus 4.1, an upgrade to Claude Opus 4 on agentic tasks, real-world coding, and reasoning.

Today, we are making an experimental version (0801) of Gemini 1.5 Pro available for early testing and feedback in Google AI Studio and the Gemini API. Try it out and let us know what you think! aistudio.google.com

@MLCommons #AlgoPerf results are in! 🏁 $50K prize competition yielded 28% faster neural net training with non-diagonal preconditioning beating Nesterov Adam. New SOTA for hyperparameter-free algorithms too! Full details in our blog. mlcommons.org/2024/08/mlc-al… #AIOptimization #AI

Today we have published our updated Gemini 1.5 Model Technical Report. As @JeffDean highlights, we have made significant progress in Gemini 1.5 Pro across all key benchmarks; TL;DR: 1.5 Pro > 1.0 Ultra, 1.5 Flash (our fastest model) ~= 1.0 Ultra. As a math undergrad, our drastic results in mathematics are particularly exciting to me! In section 7 of the tech report, we present new results on a math-specialised variant of Gemini 1.5 Pro which performs strongly on competition-level math problems, including a breakthrough performance of 91.1% on Hendrycks’ MATH benchmark without tool-use (examples below 🧵). Gemini 1.5 is widely available, try it out for free here aistudio.google.com & read the full tech report here: goo.gle/GeminiV1-5

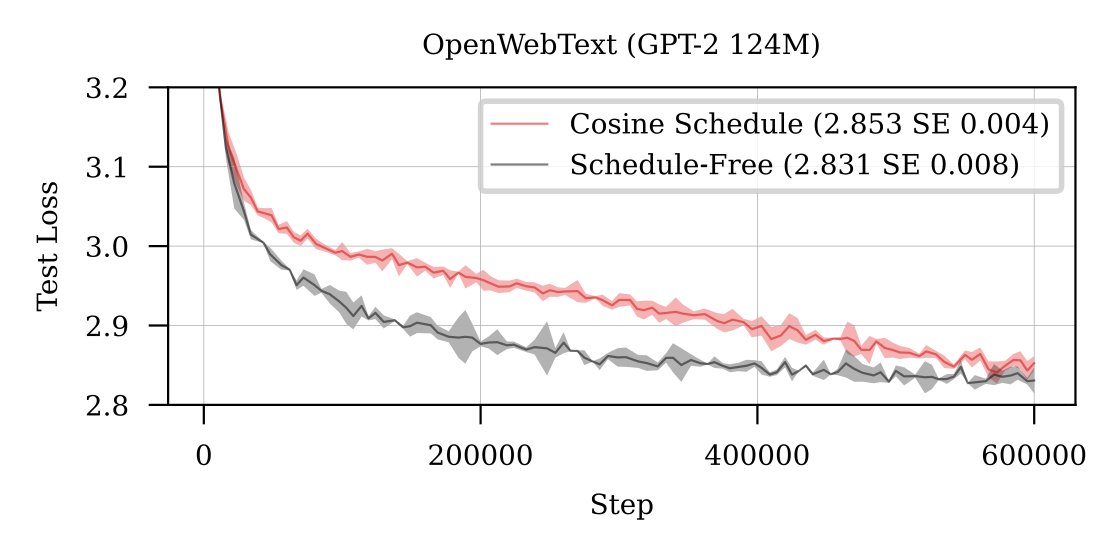

Schedule-Free Learning github.com/facebookresear… We have now open sourced the algorithm behind my series of mysterious plots. Each plot was either Schedule-free SGD or Adam, no other tricks!

I’m very excited to share our work on Gemini today! Gemini is a family of multimodal models that demonstrate really strong capabilities across the image, audio, video, and text domains. Our most-capable model, Gemini Ultra, advances the state of the art in 30 of 32 benchmarks, including 10 of 12 popular text and reasoning benchmarks, 9 of 9 image understanding benchmarks, 6 of 6 video understanding benchmarks, and 5 of 5 speech recognition and speech translation benchmarks. Gemini Ultra is the first model to achieve human-expert performance on MMLU across 57 subjects with a score above 90%. It also achieves a new state-of-the-art score of 62.4% on the new MMMU multimodal reasoning benchmark, outperforming the previous best model by more than 5 percentage points. Gemini was built by an awesome team of people from @GoogleDeepMind, @GoogleResearch, and elsewhere at @Google, and is one of the largest science and engineering efforts we’ve ever undertaken. As one of the two overall technical leads of the Gemini effort, along with my colleague @OriolVinyalsML, I am incredibly proud of the whole team, and we’re so excited to be sharing our work with you today! There’s quite a lot of different material about Gemini available, starting with: Main blog post: blog.google/technology/ai/… 60-page technical report authored by the Gemini Team: deepmind.google/gemini/gemini_… In this thread, I’ll walk you through some of the highlights.

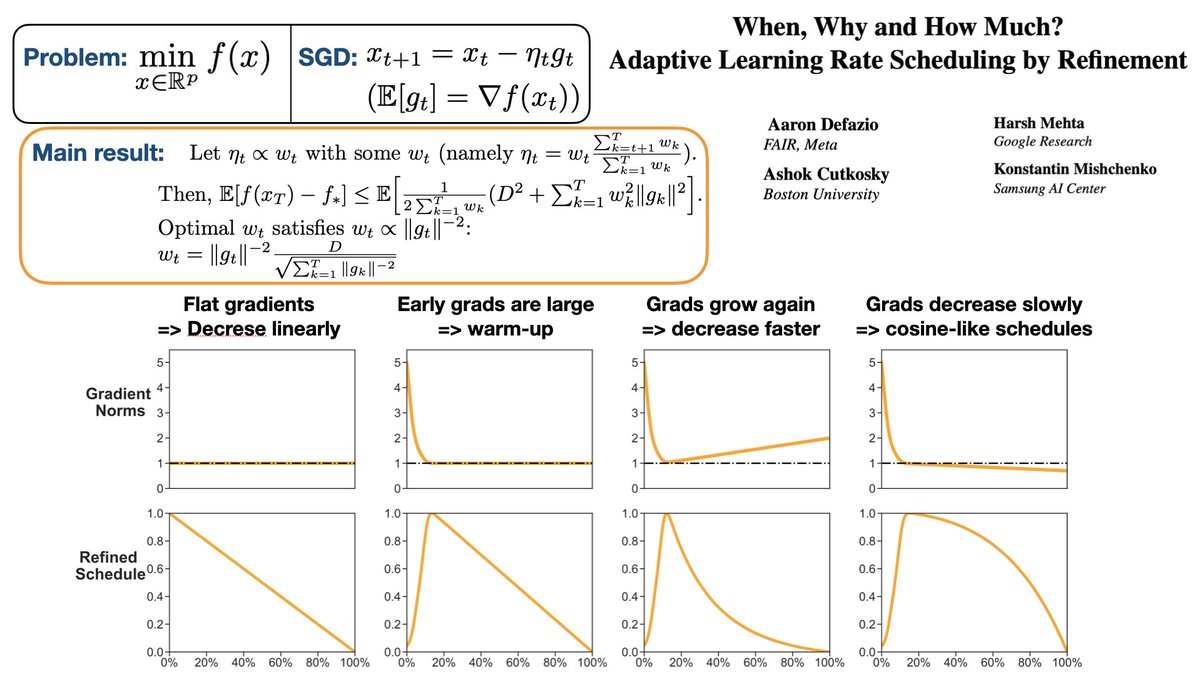

🚨 New Paper 🚨 A new approach to learning rate scheduling! Our refinement theory gives schedules that include warmup and annealing-to-zero automatically. arxiv.org/abs/2310.07831 It improves on strong baseline schedules across a majority of deep learning problems!